What if your language model could reason efficiently in an entirely new language?

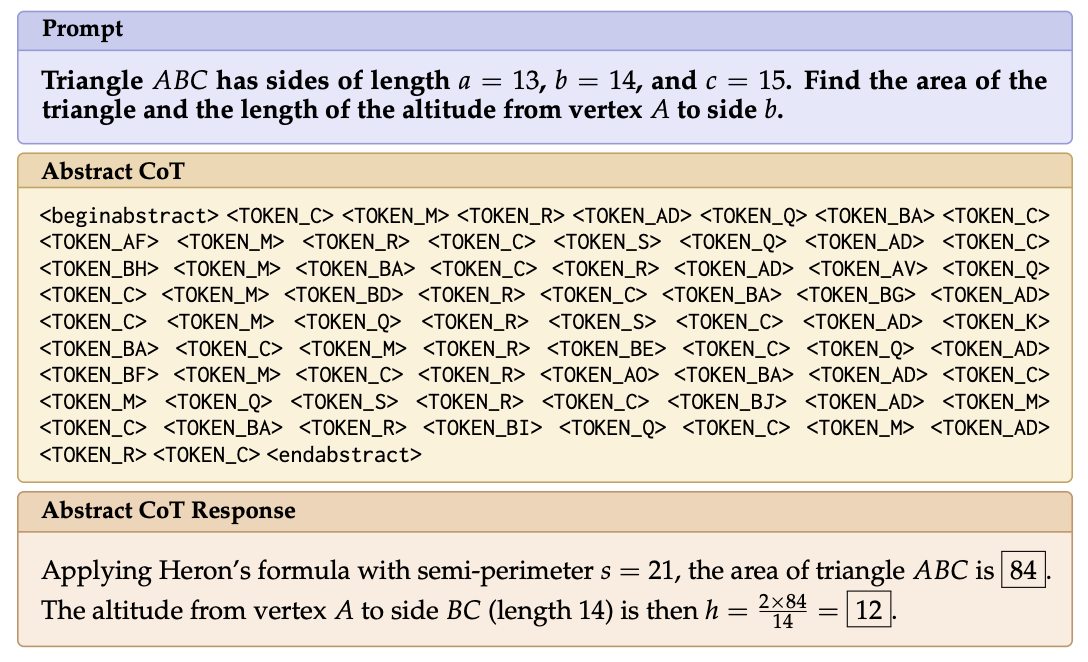

We introduce Abstract Chain-of-Thought, a new mechanism which allows language models to reason through a short sequence of reserved "abstract" tokens through reinforcement learning. It is as performant as verbalized CoT at a fraction of the cost, achieving major gains in inference-time efficiency.

As we move toward harder tasks, we have sought to imbue LLMs with the ability to generate long CoTs — however, verbalized reasoning chains can often be needlessly expensive, as well as unfaithful to the underlying reasoning process. It is valuable to explore alternative reasoning pathways, which is where latent reasoning comes into play.

But rather than mixing text and latent CoTs, or reasoning purely through embedding space, what if we could enable models to produce a much shorter chain, to balance performance and cost?

We consider a set of distinguishable, reserved tokens -- like "

Our aim is to (1.) learn an effective initialization for these tokens, and (2.) learn sequences of abstract tokens that yield high quality responses, toward a notion of compositionality.

To address this, we introduce Abstract Chain-of-Thought (Abstract-CoT). We first introduce a warm-up stage with a Policy Iteration-style loop, alternating between "Bottlenecked SFT" and "Self-Distillation" phases.

The former is designed to enforce an information bottleneck through the sequence of abstract tokens through a block-structured attention mask. The abstract sequence attends to a teacher-written (e.g. human-annotated) verbal CoT for guidance, but the response can only attend to the abstract CoT. The latter phase then involves teacher forcing while generating abstract CoTs on-policy using constrained decoding, dropping the verbal CoT guidance.

We then perform GRPO while generating traces consisting of abstract tokens, rewarded on the quality of the final response (which remain in natural language).

Testing Abstract-CoT on diverse benchmarks, we find that warm-up + GRPO nears or exceeds the performance of SFT + RL with verbal chain-of-thought. Notably, performing just one of the two stages (e.g. cold-start RL) clearly lags in performance -- this highlights the value of the warm-up stage! We also find this behavior extends to harder reasoning tasks like AIME and GPQA-Diamond.

When we scale the size of the abstract vocabulary, we find similar trends. Cold-start RL fails to outperform the baseline, and while increasing the number of warm-up iterations does improve performance, we achieve clear further gains with the warm-started RL.

What about the abstract vocabulary? It turns out that a power law distribution of token frequency emerges, akin to natural language! This suggests that natural token re-use patterns are learned using Abstract-CoT. We also find that Abstract-CoT is sensitive -- like natural language -- to permuting order within the reasoning chain, and to truncating the length, forcing response generation with a partial CoT.

Our findings suggest that there is potential to further improve Abstract-CoT. One exciting line of future work is improving the sample efficiency of learning to use new abstract tokens. If we can continue to learn to think in new ways, perhaps LLMs can do this as well!

Paper:

A big thanks to my collaborators, @TahiraNaseem12 and @RamonAstudill12!arxiv.org/abs/2604.22709

Share this Scrolly Tale with your friends.

A Scrolly Tale is a new way to read Twitter threads with a more visually immersive experience.

Discover more beautiful Scrolly Tales like this.