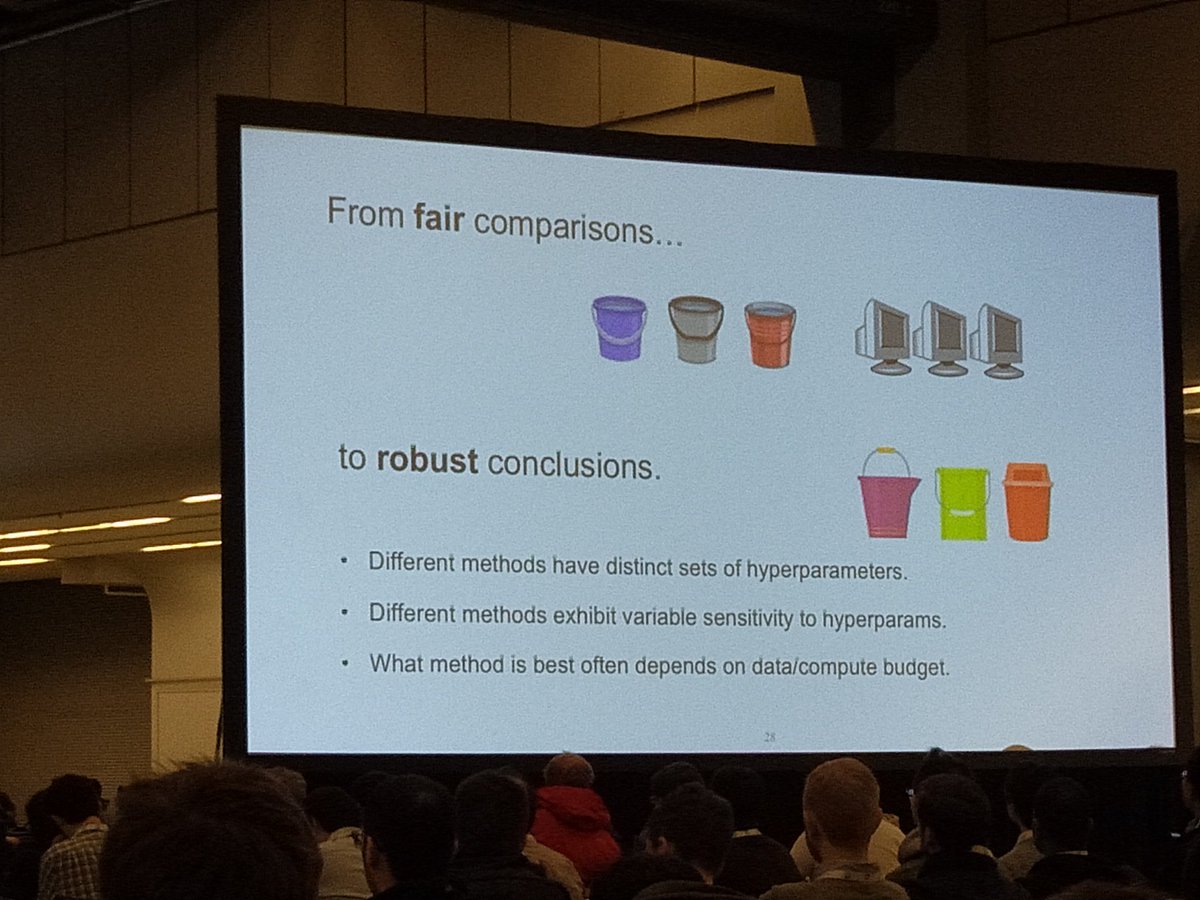

A simple method for fair comparison? #NeurIPS2018

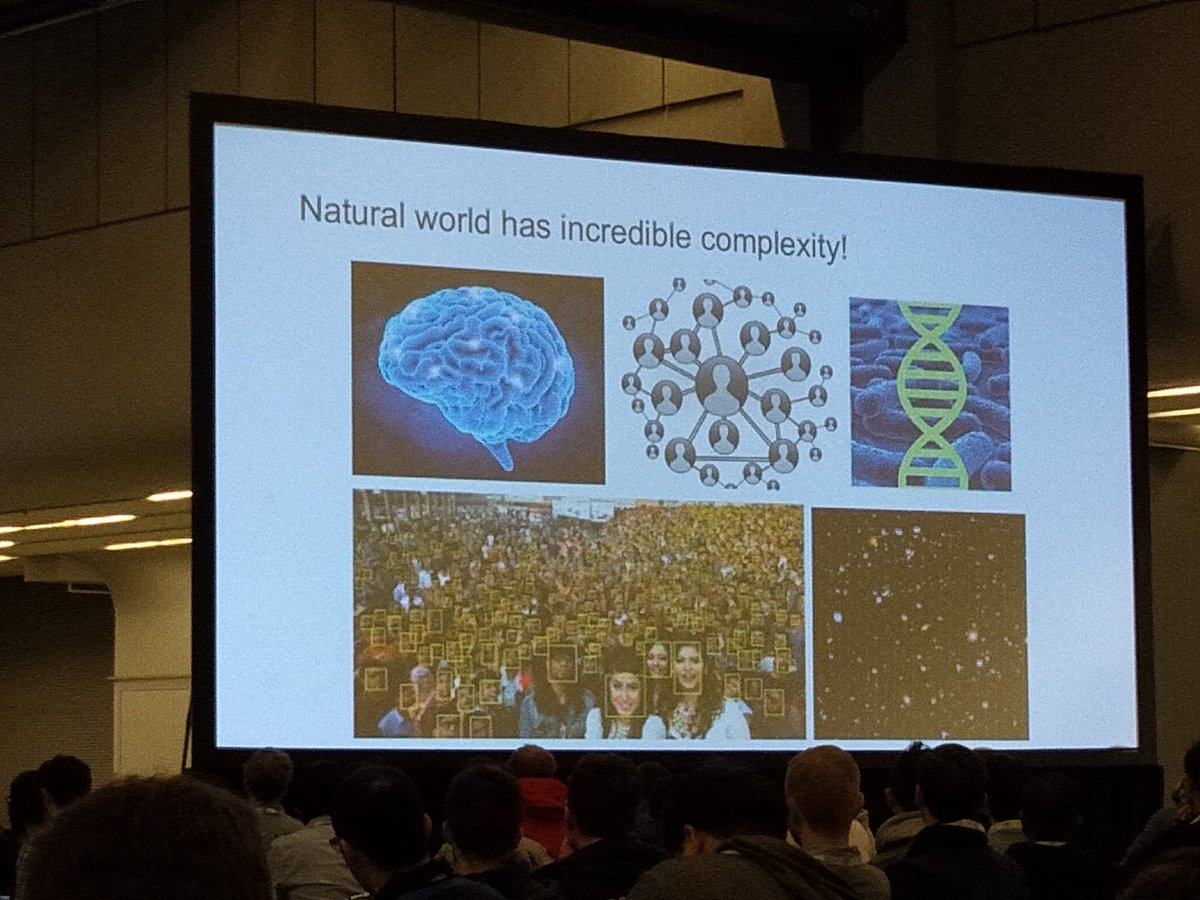

Complexity of the world is discarded... We need to tackle RL in the natural world through more complex simulations.
