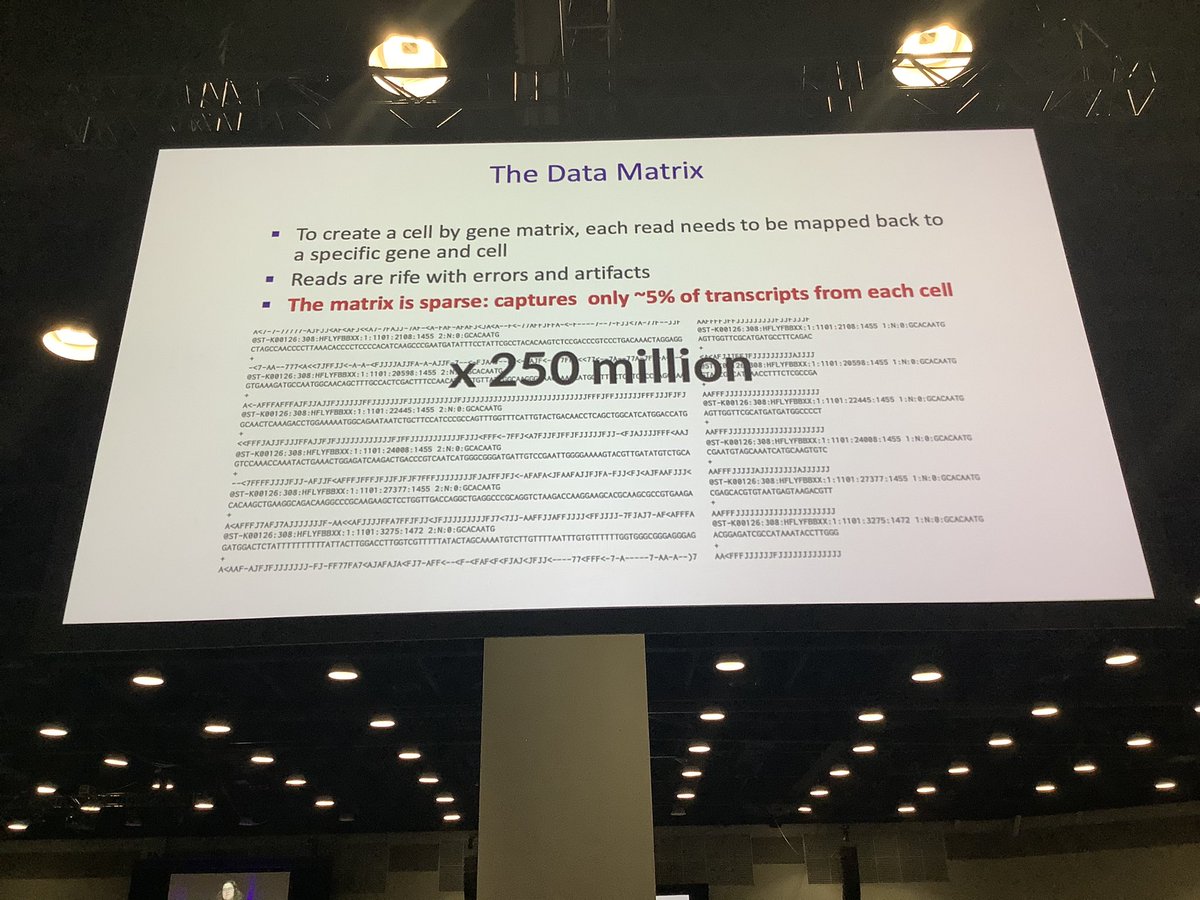

Machine learning for single cell biology: insights and challenges by Dana Pe’er. #NeurIPS2019

