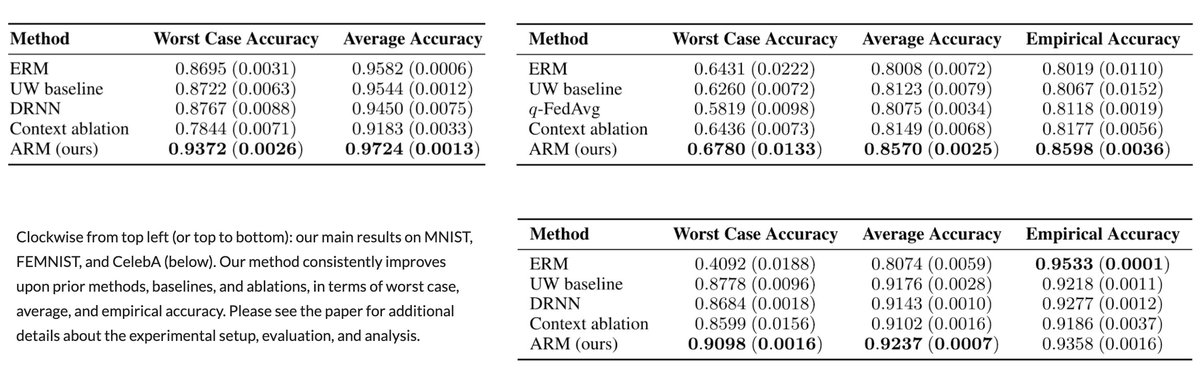

To help, we introduce adaptive risk minimization (ARM):

arxiv.org/abs/2007.02931

With M Zhang, H Marklund @abhishekunique7 @svlevine

(1/6)

Group DRO aims for robustness to shifts in the groups underlying the dataset (e.g., see arxiv.org/abs/1611.02041).

(2/6)
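
For readers new to the idea, here is a minimal sketch of a worst-group objective: score a model by its worst per-group average loss rather than its overall average. The function name `worst_group_loss` and the toy numbers are made up for illustration; this is not code from the linked papers.

```python
import numpy as np

def worst_group_loss(losses, groups):
    """Return the largest per-group mean loss, plus all per-group means."""
    losses = np.asarray(losses, dtype=float)
    groups = np.asarray(groups)
    # Average the per-example losses separately within each group.
    group_means = {
        int(g): float(losses[groups == g].mean()) for g in np.unique(groups)
    }
    # A group-robust objective focuses on the worst-off group.
    return max(group_means.values()), group_means

# Toy data (hypothetical): group 1 is much harder than group 0.
losses = [0.2, 0.4, 1.0, 3.0]
groups = [0, 0, 1, 1]

worst, per_group = worst_group_loss(losses, groups)
average = float(np.mean(losses))
print(f"average loss: {average:.2f}, worst-group loss: {worst:.2f}")
```

The average hides how badly the harder group does; the worst-group number does not.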

arxiv.org/abs/1911.08731

However, DRO methods often trade off between robustness & test-time performance.

(3/6)

To do so, we introduce a new assumption for tackling group shift: that we get the unlabeled test data all at once, in a batch.

This resembles domain adaptation, except that the unlabeled test data is only available at test time.

(4/6)
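
To make the batch assumption concrete, here is a minimal sketch of one simple way an unlabeled test batch could be used: normalize features with statistics computed from the test batch itself. This only illustrates the setting and is not necessarily the method in the paper; `normalize_with_batch_stats` and the synthetic data are hypothetical.

```python
import numpy as np

def normalize_with_batch_stats(x, eps=1e-5):
    """Standardize each feature using the mean/variance of this batch only."""
    x = np.asarray(x, dtype=float)
    mean = x.mean(axis=0, keepdims=True)
    var = x.var(axis=0, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)

# Synthetic "shifted" test batch (assumption): features are offset and
# rescaled relative to what a model trained on standardized data expects.
rng = np.random.default_rng(0)
test_batch = rng.normal(loc=5.0, scale=3.0, size=(64, 4))

# No labels are needed: the batch's own statistics undo the shift.
adapted = normalize_with_batch_stats(test_batch)
print(adapted.mean(axis=0).round(3), adapted.std(axis=0).round(3))
```

Note that this only works because the whole batch arrives together; a single test point carries no usable statistics.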