Super excited to talk tomorrow (July 30th, 3pm Pacific) at Abralin ao Vivo about joint work with Yuan Yang. I'll be presenting a long-running project on language acquisition that tackles language learnability questions with Bayesian program learning tools.

Here's a summary thread.

https://twitter.com/abralin_oficial/status/1288465678215897090

This is part of an amazing remote talk series by @abralin_oficial presenting language work all summer

abralin.org/site/en/evento…

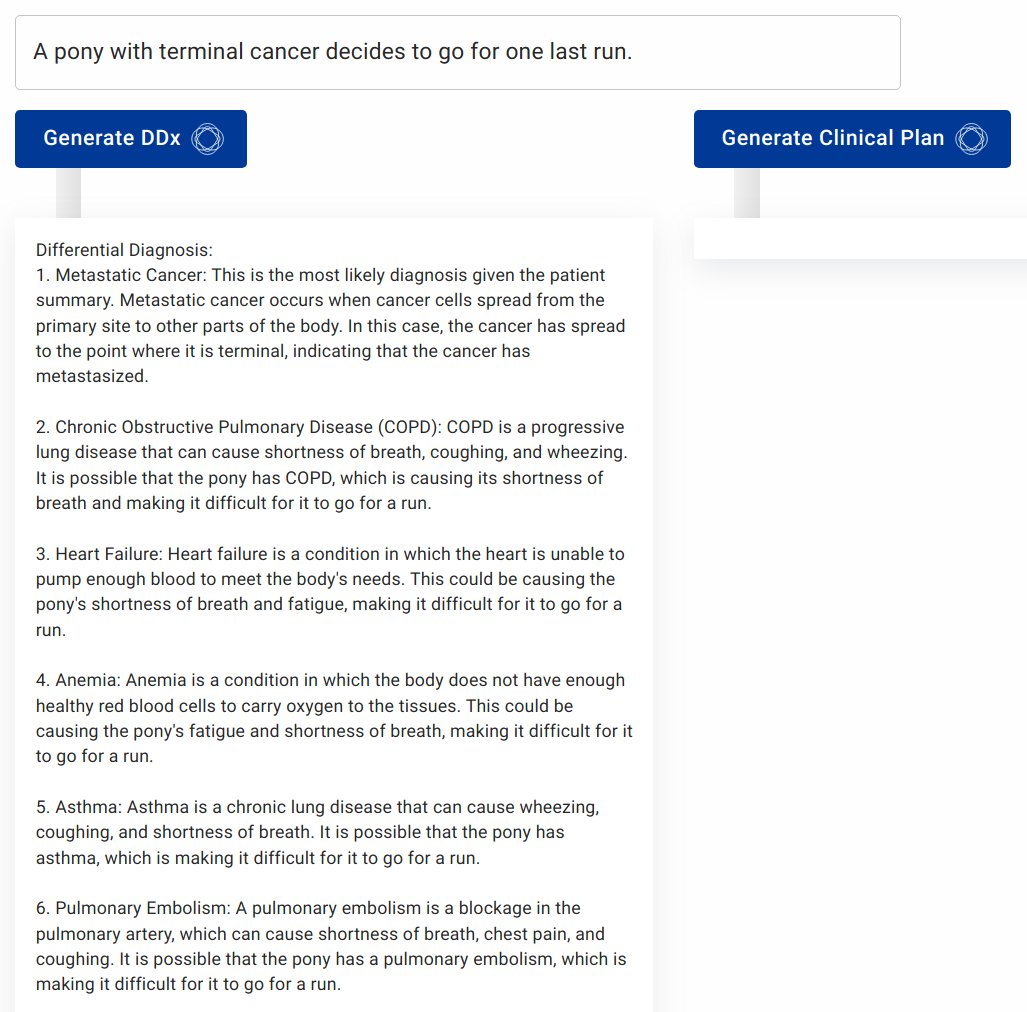

Our project studies how program learning tools can acquire natural language structures from positive evidence alone. We show that learners can *construct* grammatical devices for producing finite-state, context-free, and context-sensitive grammars to explain data they see.

Our interest in this started with @AmyPerfors's study showing that learners could discover that language is context-free from just a few minutes of child directed speech.

cse.iitk.ac.in/users/cs671/20…

Amy et al. compared different grammars and showed that context-free grammars provided the best explanation of child-directed speech, meaning that children could discover that language is (roughly) context-free by comparing hypotheses to find a simple theory of the data they hear.

Our work merges this general idea with inductive program learning ideas from @NickJChater and Vitanyi, who showed that statistical inference over Turing machines solves classic Gold-style learnability problems that long dominated language learning theory.

homepages.cwi.nl/~paulv/papers/…

We show that now-standard methods from program-based concept learning models can figure out what computation is generating the data the learner sees, using only positive evidence. The hypothesis space can be all computations. We look at learning a lot of formal languages...

All learned from positive evidence only. Same for a simple toy grammar of English (that is, the learning model constructs a program that generates the same strings as this)
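
To make the positive-evidence idea concrete, here's a toy sketch. It is not the model from the paper: the three hypotheses, their hand-assigned description lengths, and the length cutoff `MAX_LEN` are all assumptions made up for illustration. The learner scores each candidate language with a simplicity prior and a "size principle" likelihood (each observed string is assumed to be drawn uniformly from the language), so a tighter language wins once the data are consistent with it, with no negative examples needed.

```python
import math
from itertools import product

MAX_LEN = 8  # assumption: only strings up to this length are considered


def all_strings(max_len):
    """Every non-empty string over {a, b} up to max_len."""
    return {"".join(p) for n in range(1, max_len + 1) for p in product("ab", repeat=n)}


# Hypothetical hypothesis space: name -> (description length in bits, language).
# The bit counts are hand-assigned stand-ins for program size, not measured values.
HYPOTHESES = {
    "any string": (2, all_strings(MAX_LEN)),
    "(ab)^n": (4, {"ab" * n for n in range(1, MAX_LEN // 2 + 1)}),
    "a^n b^n": (6, {"a" * n + "b" * n for n in range(1, MAX_LEN // 2 + 1)}),
}


def log_scores(data):
    """Unnormalized log posterior: simplicity prior plus size-principle likelihood."""
    scores = {}
    for name, (bits, language) in HYPOTHESES.items():
        log_prior = -bits * math.log(2)
        if all(s in language for s in data):
            # Each observed string is assumed drawn uniformly from the language.
            log_like = -len(data) * math.log(len(language))
        else:
            # A single string outside the language rules the hypothesis out.
            log_like = -math.inf
        scores[name] = log_prior + log_like
    return scores


def posterior(data):
    """Normalize the log scores into a posterior over hypotheses."""
    scores = log_scores(data)
    top = max(scores.values())
    weights = {k: math.exp(v - top) for k, v in scores.items()}
    total = sum(weights.values())
    return {k: w / total for k, w in weights.items()}


if __name__ == "__main__":
    observed = ["ab", "aabb", "aaabbb"]  # positive examples only
    for n_obs in (0, 1, 3):
        data = observed[:n_obs]
        print(n_obs, {k: round(p, 3) for k, p in posterior(data).items()})
```

With no data the simplest hypothesis is preferred; as positive examples come in, the overly general "any string" language gets penalized for predicting too much, and hypotheses that miss an observed string drop out entirely.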

This kind of program learning inevitably shows complex, structured patterns of generalization about data that hasn't been seen. That's not something unexpected or fancy or special about human language.

Implementing domain-general learning schemes like this helps to clarify a common confusion: the existence of domain-specific representations doesn't mean they result from domain-specific learning systems. So many times I've heard "Well structure X is only found in language so..."

But *the* thing that domain-general learning systems do is create domain-specific representations. That's what happens in math, reading, or driving. They all get domain-specific representations from domain-general mechanisms.

Isn't it harder to build in an infinite space of hypotheses like this model uses, rather than UG? Why build in many possible representations when we could build in just one? Here's my favorite part of the (upcoming) paper

I think Borges is a pretty important work in information theory: maskofreason.files.wordpress.com/2011/02/the-li…

So, you can learn many of the formal structures that have been argued to be necessary for language, using simple, domain-general tools. Maybe this will help to connect language acquisition to algorithm and concept learning found elsewhere in cognitive science.

work... joint work...

talk will be here for anyone interested: aovivo.abralin.org/lives/steven-p…
