A few things I think were done right in that Snowflake paper in 2016 (reporting on 4-year-old work!):

1. They got the ergonomics of the cloud early (for the space). Elasticity is a core feature. Storage is no longer a 'you' problem. Cost is pay-as-you-go compute.

2. They had ACID out-of-the-box. This was a huge step forward over the state-of-the-art MPP analytical engines. It helps to have some of the best database engineers in the world on your founding team.

3. They understood that SaaS was the right model and weren't constrained trying to build a solution that worked well for on-prem, unmanaged cloud and managed cloud customers (subtweeting myself of five-plus years ago).

4. They embraced object storage, and invested in what was missing to make it work for a DB engine, rather than trying to make HDFS happen in the cloud:

(4a) I remember sitting in on a meeting with a company that were working on an S3 cache a few years ago. If we had been laser-focused on the cloud and on object storage I think I might have 'got it'.

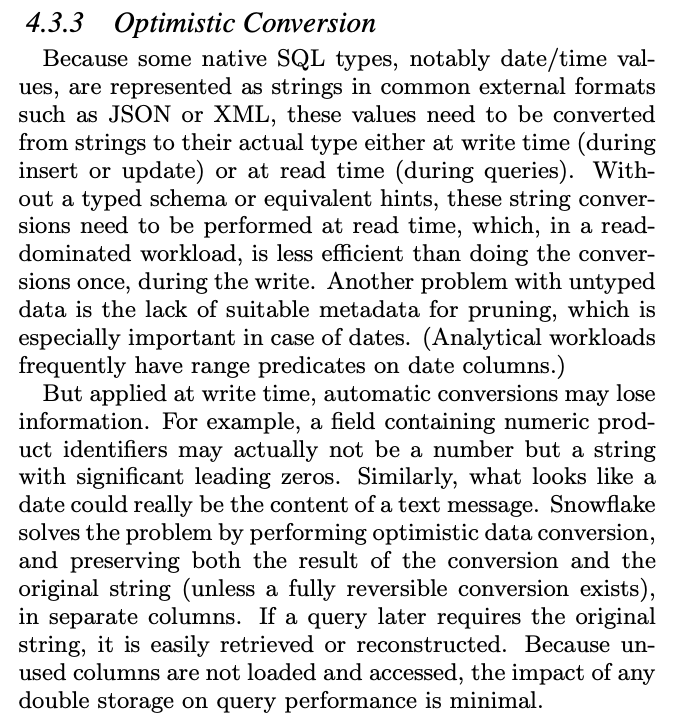

5. They had some really nice ideas around nested data, and taking advantage of cheap storage to make it more efficient in S3:

(5a) I remember it being pretty obvious that cheap storage should be taken advantage of - just like Vertica materialises more than one projection - but something about HDFS storage being on-prem and shared meant that customers were often surprisingly reluctant to let the DB use the space.

last tweet: I think we did a lot of things right in Impala - and it's still processing millions of queries worldwide as far as I know - but I think it's fair to say that being more <shudder> cloud native would have been a strategic advantage.
