🚨 Now out in @PNASNews 🚨

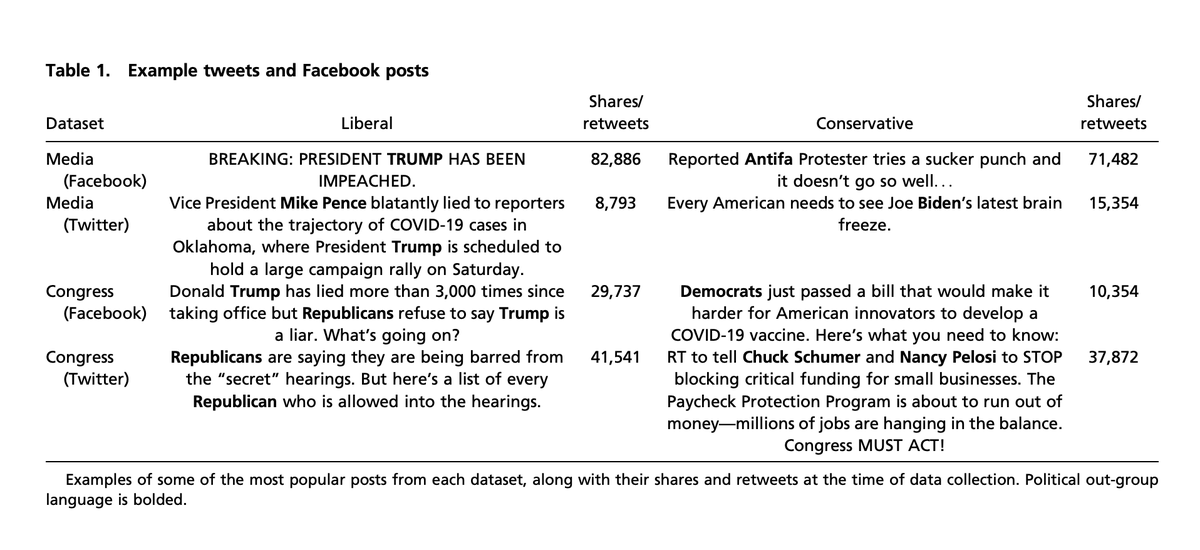

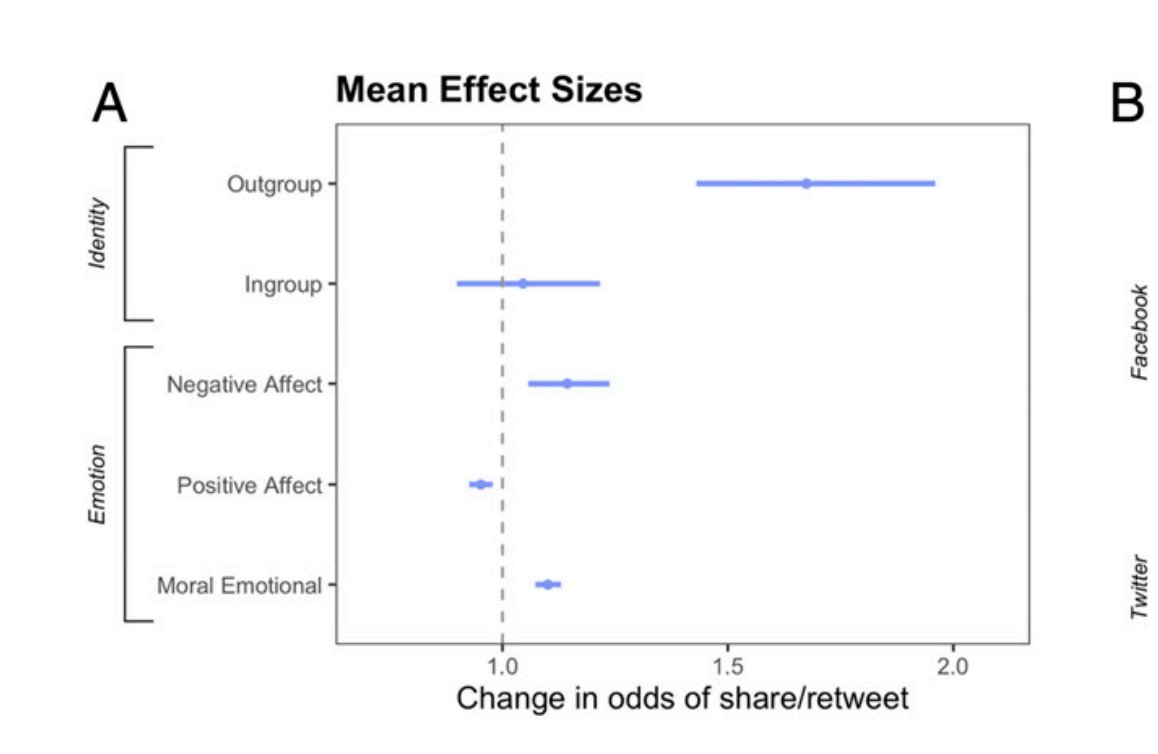

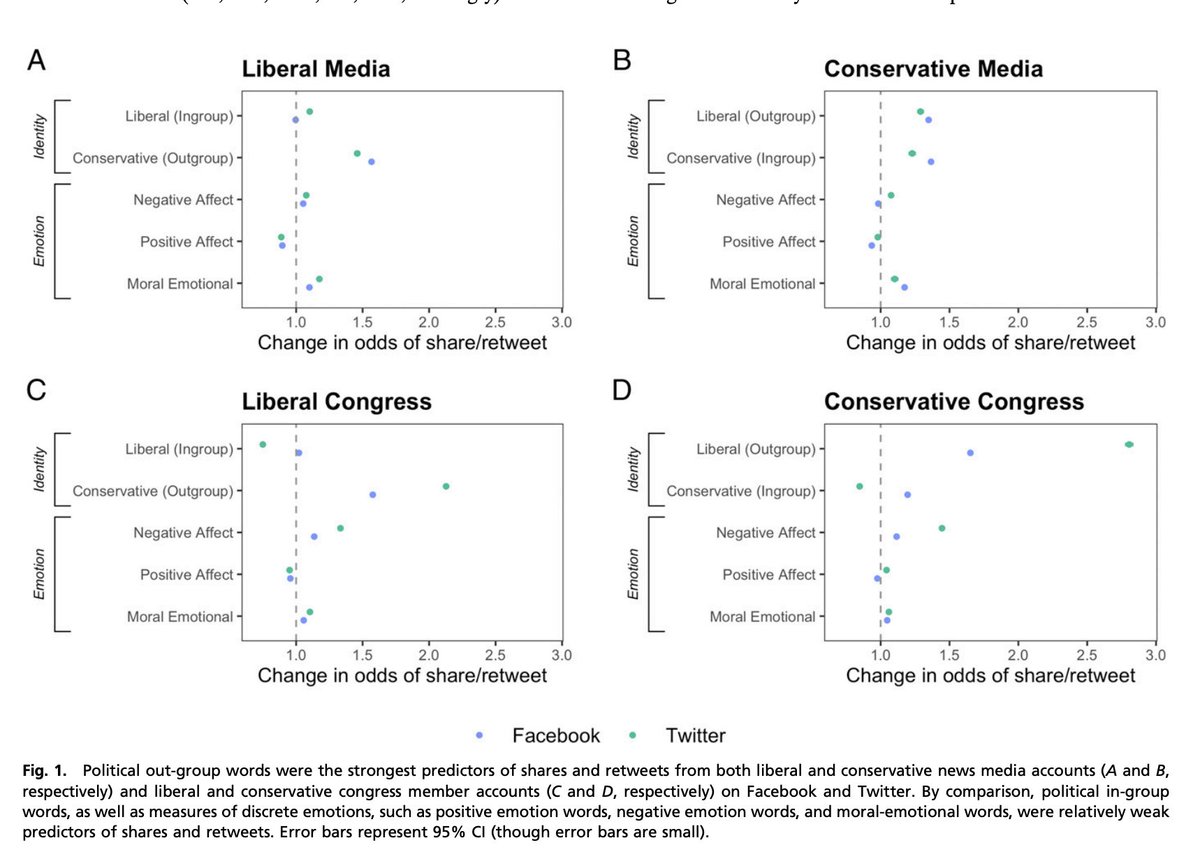

Analyzing social media posts from news accounts and politicians (n = 2,730,215), we found that the biggest predictor of "virality" (out of all predictors we measured) was whether a social media post was about one's out-group.

pnas.org/content/118/26…

Specifically, each additional word about the opposing party (e.g., “Democrat,” “Leftist,” or “Biden” if the post was coming from a Republican) in a social media post increased the odds of that post being shared by 67%.
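To make the 67% figure concrete, here is a rough sketch of how a change in odds translates into a change in probability. The baseline probability is a made-up input, and treating the 67% as a constant odds ratio of 1.67 that compounds with every extra word is an illustrative assumption, not a description of the exact model in the paper.

```python
def shared_probability(baseline_prob, n_outgroup_words, odds_ratio=1.67):
    """Probability of a share after scaling the baseline odds.

    baseline_prob: hypothetical share probability with zero out-group words.
    odds_ratio: 1.67 corresponds to the "67% higher odds per word" figure;
        compounding it per word is an assumption made for illustration.
    """
    if not 0 < baseline_prob < 1:
        raise ValueError("baseline_prob must be strictly between 0 and 1")
    # Convert probability to odds: p / (1 - p)
    baseline_odds = baseline_prob / (1 - baseline_prob)
    # Multiply the odds by the odds ratio once per out-group word
    odds = baseline_odds * odds_ratio ** n_outgroup_words
    # Convert odds back to a probability: odds / (1 + odds)
    return odds / (1 + odds)


if __name__ == "__main__":
    for words in range(4):
        print(words, round(shared_probability(0.10, words), 3))
```

Note that a 67% increase in odds is not a 67% increase in probability: the size of the change in probability depends on the baseline you start from.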

Negative and moral-emotional words also slightly increased the odds of a post being shared, positive words slightly decreased the odds, and in-group words had no effect.

Out-group words were by far the strongest predictor of virality that we measured.

Out-group posts were very likely to receive “angry” reactions on Facebook, as well as “haha” reactions (likely indicating mockery), comments, and shares.

Posts about the in-group received much less overall engagement, although they were slightly more likely to receive “love” and “like” reactions, reflecting in-group favoritism.

In other words, out-group negativity was a stronger driver of virality than in-group positivity.

Indeed, the “angry” reaction was the most commonly used reaction out of all six of Facebook’s reactions in our datasets.

This out-group effect was not moderated by political orientation or by social media platform. However, the effect was stronger for politicians' accounts than for news media accounts.

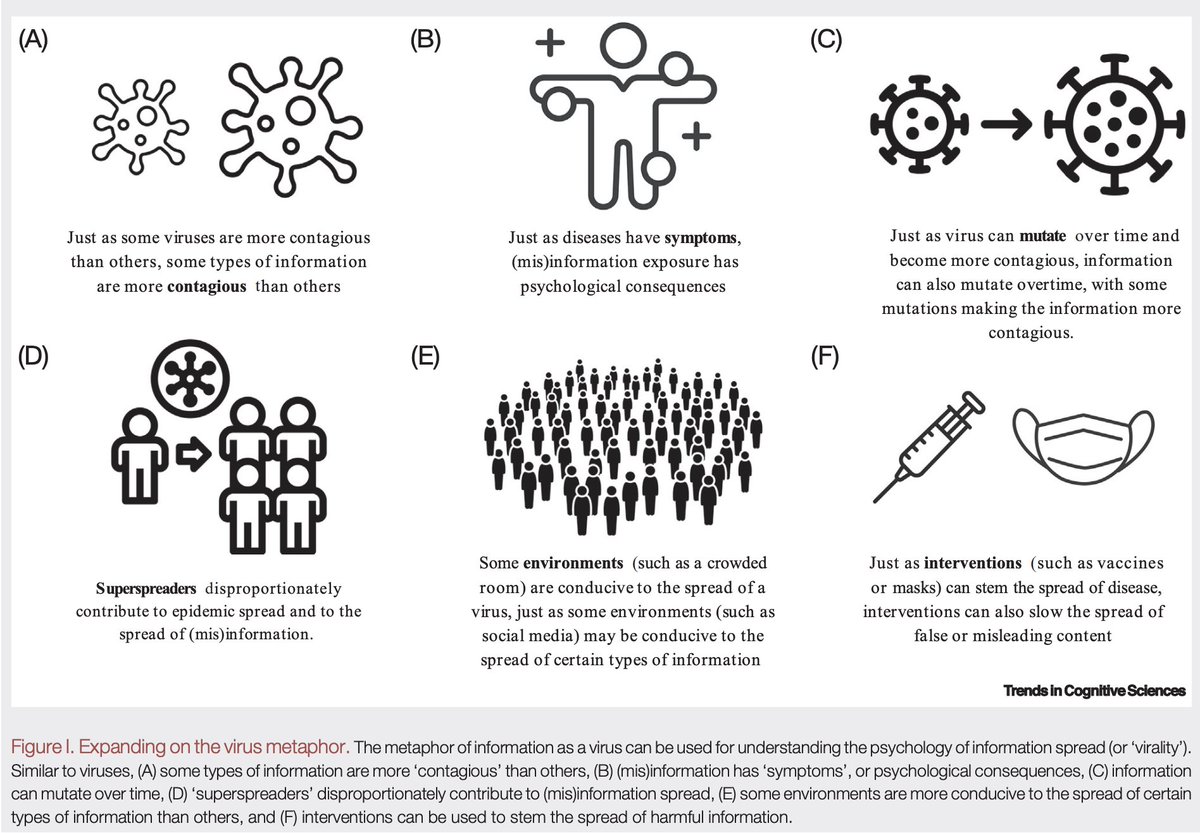

These results are troubling in an attention economy where the social media business model is based on keeping us engaged in order to sell advertising.

This business model may be creating perverse incentives for polarizing content, rewarding people for "dunking" on the out-group.

As an illustration of these perverse incentives, Facebook recently declined to implement features to reduce the amount of harmful content in the news feed because these features also made people open Facebook less.

nytimes.com/2020/11/24/tec…

Hopefully, this research inspires solutions to help re-think the perverse incentive structure of many social media platforms.

A huge thank you to @Sander_vdLinden & @jayvanbavel, and also to @CSDMLab @vanbavellab @Gates_Cambridge @NYUPsych @CambPsych

Also, thank you @flewse at @Cambridge_Uni for this press release (with really beautiful visuals)

https://twitter.com/Cambridge_Uni/status/1407334814919888905?s=20

And @NBCNews for the great coverage as well.

https://twitter.com/NBCNews/status/1409221766858301445?s=20

Thanks @robertwrighter for writing so eloquently about our study in the context of everything else going on at Facebook.

https://twitter.com/robertwrighter/status/1409675482644111366?s=20

Here's our op-ed about the study in the @washingtonpost @monkeycageblog:

https://twitter.com/azelin/status/1414912720491712517?s=20

.@Facebook responded to our op-ed. Here are our thoughts:

https://twitter.com/steverathje2/status/1415384069244956676?s=20
