This past Saturday (August 21st, 2021), a couple thousand accounts tweeted "This is the truth of this world", accompanied by a brief video containing the phrase "Corona virus fake", at nearly the same time. #AstroturfedBullcrap #Spam

cc: @ZellaQuixote

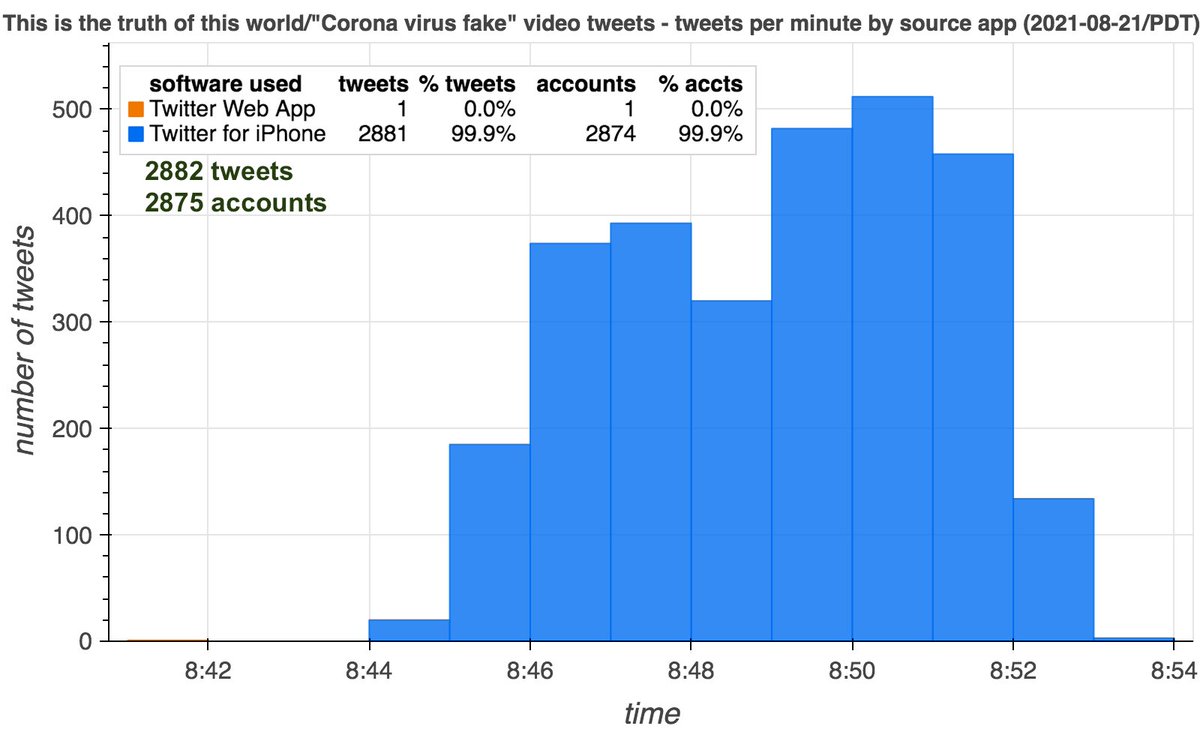

2882 tweets from 2875 accounts containing the "Corona virus fake" video and the text "This is the truth of this world" were posted over a span of 13 minutes. All but the first tweet end with a random 6-character code and were (allegedly) sent via "Twitter for iPhone".
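
For anyone who wants to reproduce this kind of tally, here is a minimal Python sketch. It assumes tweets have already been collected into (account, created_at, source, text) tuples, a hypothetical layout, and that the random code is a single trailing run of six letters or digits after a space. The names `summarize_burst` and `TRAILING_CODE` are illustrative, not part of any Twitter tool, and the sample data is made up.

```python
import re
from datetime import datetime, timedelta

# Hypothetical record layout: (account, created_at, source, text).
# Assumes the random code is one trailing run of 6 letters/digits after whitespace;
# note this would also strip a genuine 6-character final word.
TRAILING_CODE = re.compile(r"\s+[A-Za-z0-9]{6}$")


def summarize_burst(tweets, base_text):
    """Summarize copies of base_text, ignoring an optional trailing 6-character code."""
    copies = sorted(
        (t for t in tweets if TRAILING_CODE.sub("", t[3]) == base_text),
        key=lambda t: t[1],  # chronological order
    )
    if not copies:
        return None
    rest = copies[1:]  # every copy after the first one
    return {
        "tweets": len(copies),
        "accounts": len({t[0] for t in copies}),
        "span_minutes": (copies[-1][1] - copies[0][1]).total_seconds() / 60,
        "rest_have_code": all(TRAILING_CODE.search(t[3]) for t in rest),
        "rest_via_iphone": all(t[2] == "Twitter for iPhone" for t in rest),
    }


# Synthetic example data (not from the actual incident).
t0 = datetime(2021, 1, 1, 12, 0)
sample = [
    ("seed", t0, "Twitter Web App", "This is the truth of this world"),
    ("acct1", t0 + timedelta(minutes=4), "Twitter for iPhone", "This is the truth of this world Xy12Ab"),
    ("acct2", t0 + timedelta(minutes=13), "Twitter for iPhone", "This is the truth of this world 9QwErT"),
    ("other", t0, "Twitter for Android", "unrelated tweet"),
]
print(summarize_burst(sample, "This is the truth of this world"))
```

Stripping the suffix before comparing is what lets the near-duplicates collapse onto one text; the two boolean fields check the "all but the first" pattern directly.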

Notably, for 1754 of these 2875 accounts (61%), the duplicated tweet was the first they had sent via iPhone; many are Android users. This suggests that some entity other than the account owners posted the video tweets from iPhones (or emulators) under its own control.
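
A minimal Python sketch of the first-iPhone-tweet check, assuming each account's collected timeline is a list of hypothetical (tweet_id, created_at, source) tuples. It only sees the history that was actually collected, so an older iPhone tweet outside that window would be missed and the account counted as a hit. The function names and sample data are illustrative.

```python
from datetime import datetime

IPHONE = "Twitter for iPhone"


def first_iphone_tweet_is(timeline, tweet_id):
    """True if tweet_id is the earliest iPhone-client tweet in this account's timeline.

    timeline is a list of (tweet_id, created_at, source) tuples (hypothetical layout).
    """
    iphone = [t for t in timeline if t[2] == IPHONE]
    return bool(iphone) and min(iphone, key=lambda t: t[1])[0] == tweet_id


def first_iphone_share(timelines, spam_ids):
    """Count accounts whose first iPhone tweet is their copy of the spam tweet.

    timelines: {account: timeline}; spam_ids: {account: id of that account's spam tweet}.
    """
    hits = sum(first_iphone_tweet_is(timelines[a], tid) for a, tid in spam_ids.items())
    return hits, hits / len(spam_ids)


# Synthetic example: "a" had only used Android before; "b" had used an iPhone earlier.
timelines = {
    "a": [(1, datetime(2021, 5, 1), "Twitter for Android"), (9, datetime(2021, 8, 1), IPHONE)],
    "b": [(2, datetime(2020, 3, 1), IPHONE), (8, datetime(2021, 8, 1), IPHONE)],
}
print(first_iphone_share(timelines, {"a": 9, "b": 8}))  # (1, 0.5)
```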

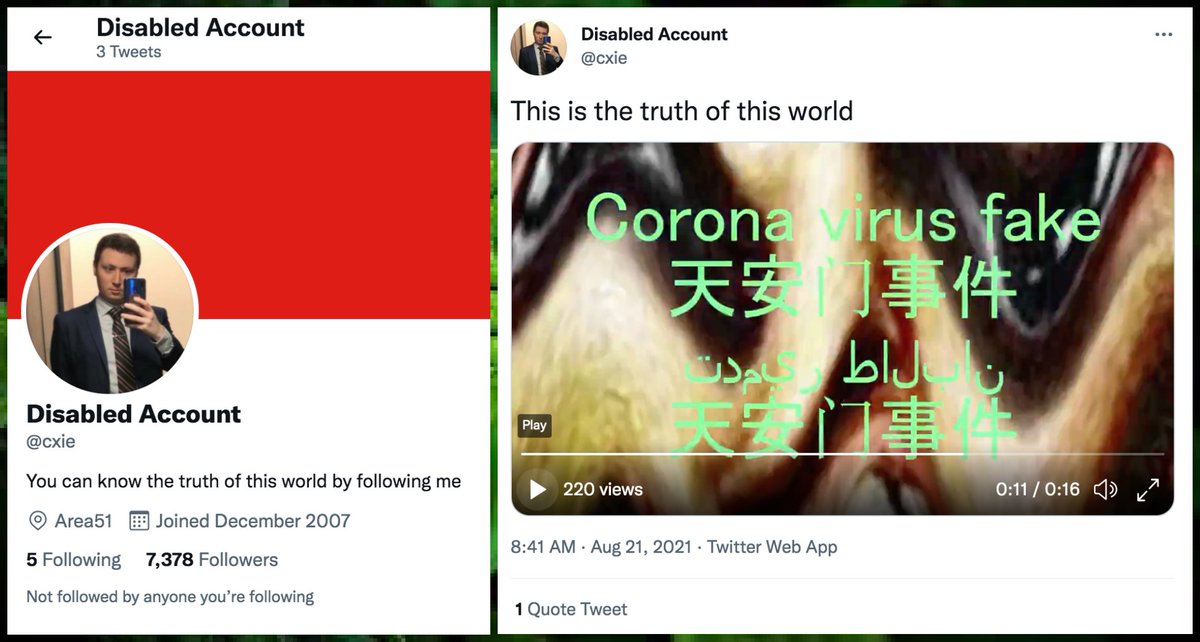

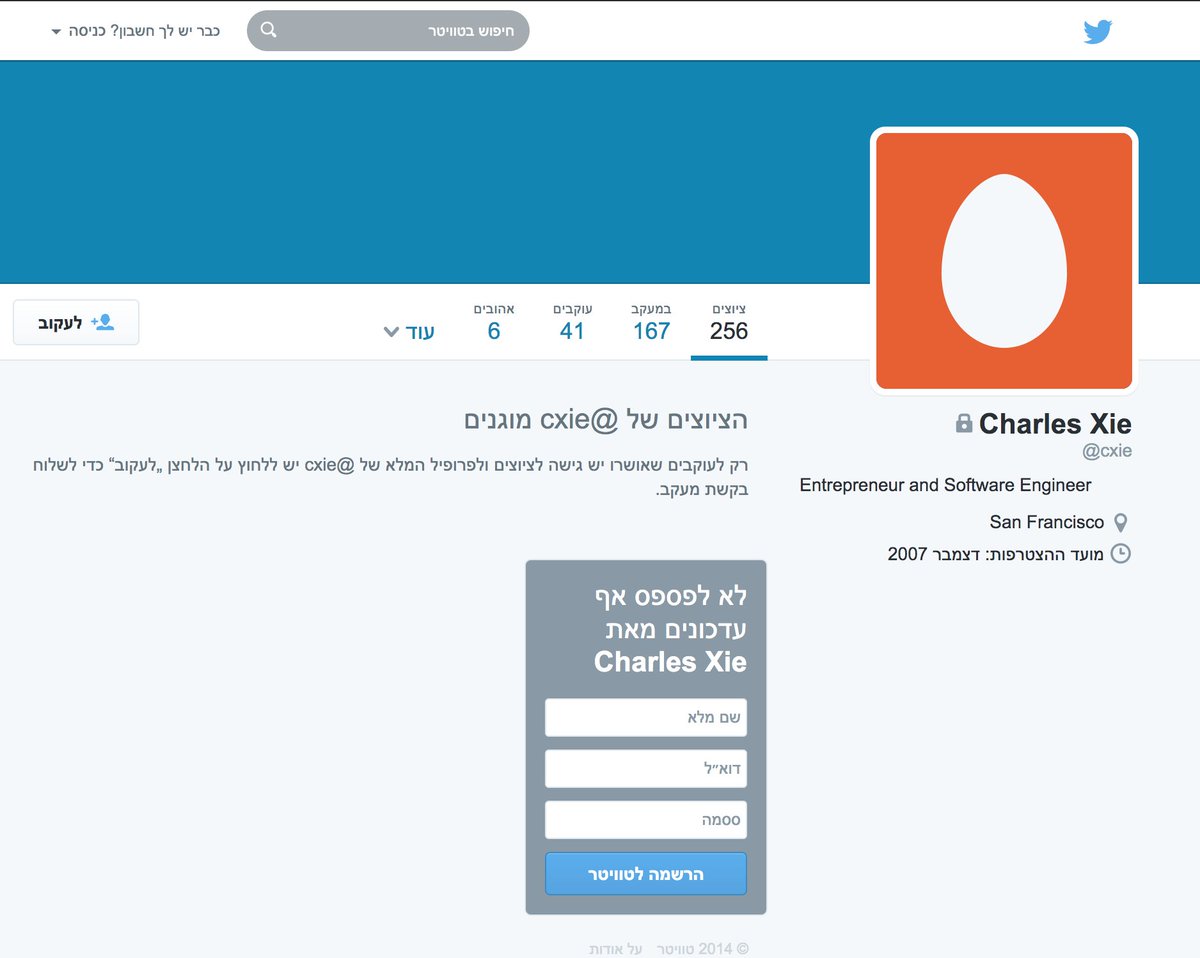

The "Corona virus fake" video was first tweeted by @cxie (permanent ID 11176362), an account created in 2007 that currently has only three tweets, all recent. However, an Internet Archive snapshot from 2014 (web.archive.org/web/2014100304…) shows 256 tweets, so the account's older tweets have been purged.

Despite being created in 2007, @cxie picked up almost all of its followers in July 2021 or later. Notably, almost all of the other accounts that posted the "Corona virus fake" video tweet (2843 of 2875, 98.9%) followed it en masse.
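
One general way to estimate when followers arrived, sketched in Python under stated assumptions: if the follower list is ordered most-recent-follow-first (an assumption about the data source), then no follow can predate the creation of its own account or of any account that followed earlier, so a running maximum of creation dates gives a lower bound on each follow date. This is not necessarily how the figures above were produced; `follow_date_lower_bounds`, `follower_overlap`, and the sample data are hypothetical.

```python
from datetime import datetime


def follow_date_lower_bounds(followers_newest_first, created_at):
    """Map each follower to the earliest date it could have followed.

    Assumes followers_newest_first is ordered by follow time, most recent first.
    An account cannot follow before it exists, and follow times never decrease
    going from oldest to newest follow, so the running maximum of creation
    dates is a lower bound on each follow date.
    """
    bounds = {}
    running = datetime.min
    for account in reversed(followers_newest_first):  # walk oldest follow first
        running = max(running, created_at[account])
        bounds[account] = running
    return bounds


def follower_overlap(posters, followers):
    """Count how many posting accounts appear among the followers, and the share."""
    posters = set(posters)
    hits = posters & set(followers)
    return len(hits), len(hits) / len(posters)


# Synthetic example: "old" was created in 2010 but followed after "new" (created July 2021).
followers = ["old", "new", "early"]  # most recent follow first
created = {
    "early": datetime(2007, 6, 1),
    "new": datetime(2021, 7, 15),
    "old": datetime(2010, 3, 1),
}
bounds = follow_date_lower_bounds(followers, created)
print(bounds["old"])  # 2021-07-15 00:00:00
print(follower_overlap(["old", "new", "someone_else"], followers))
```

The bound only ever moves forward, which is why a long run of recently created followers pushes the estimated follow date of every later follower into the same recent window, regardless of how old those later accounts are.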

Replies in which users say their accounts were hacked are consistent with the theory that the spammed video tweets were posted by someone other than the legitimate account holders (possibly the operator of the @cxie account):

https://twitter.com/conspirator0/status/1429986635165360130

In addition to the duplicate video tweets, 490 of those accounts sent identical replies in Chinese to @JHANDS08 within five minutes. As with the video tweet spam, the first reply came from @cxie via "Twitter Web App", and the remainder were all sent via "Twitter for iPhone".
