An update on the hidden vocabulary of DALLE-2.

While a lot of the feedback we received was constructive, some of the comments need to be addressed.

A thread, with some new gibberish text and some discussion 🧵 (1/N)

@benjamin_hilton said that we got lucky with the whales example.

We found another similar example.

"Two men talking about soccer, with subtitles" gives the word "tiboer". This seems to give sports in ~4/10 images. (2/N)

A few people, including @realmeatyhuman, asked whether our method works beyond natural images (of birds, etc.).

Yes, we found some examples that seem statistically significant.

E.g. "doitcdces" seems related (~4/10 images) to students (or learning). (3/N)
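
One way to sanity-check a count like ~4/10 is a one-sided binomial tail: the chance of seeing at least that many matching images if the gibberish word had no effect. This is only a sketch. The 5% baseline rate below is an assumed, hypothetical number (the thread does not report one), and `binomial_tail` is an illustrative helper, not the test used in the pre-print.

```python
from math import comb


def binomial_tail(k: int, n: int, p0: float) -> float:
    """Return P(X >= k) for X ~ Binomial(n, p0)."""
    return sum(comb(n, i) * p0**i * (1 - p0) ** (n - i) for i in range(k, n + 1))


# ASSUMPTION: an unrelated prompt shows the concept in 5% of images.
# This baseline is hypothetical; replace it with a measured rate.
baseline_rate = 0.05

# 4 matching images out of 10, as in the "doitcdces" example.
p_value = binomial_tail(4, 10, baseline_rate)
print(f"P(at least 4 of 10 | baseline {baseline_rate:.0%}) = {p_value:.4f}")
```

The result depends heavily on the baseline: a concept that already shows up often for unrelated prompts would make 4/10 far less surprising.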

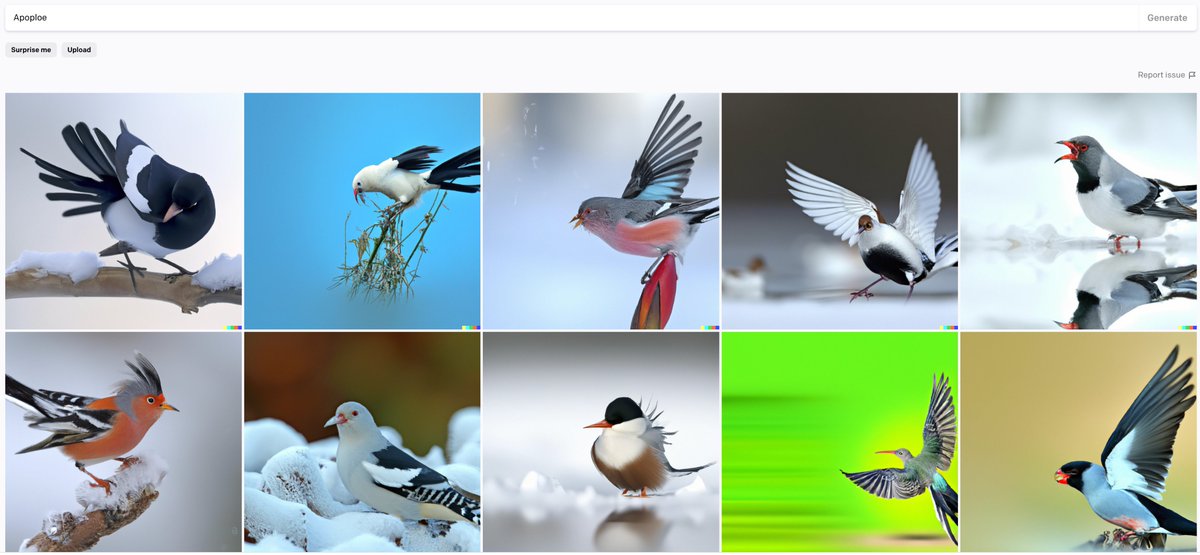

@BarneyFlames and @mattgroh pointed out that "Apoploe", our gibberish word for birds, has a similar BPE encoding to "Apodidae".

Interestingly, "Apodidae" produces ~1/10 birds (but many flying insects), while our gibberish "Apoploe" gives 10/10.

(5/N)

However, "Apodidae Ploceidae" (two names of real bird families) indeed gives 10/10 birds.

Therefore, one possible explanation is that our gibberish tokens are mashups of parts of real words. This seems reasonable.

It is interesting that DALLE-2 generates those mashups.

(6/N)
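
The mashup idea can be illustrated at the character level. The sketch below is a rough stand-in for a real BPE comparison: it does not run DALLE-2's tokenizer, and `common_prefix` is a hypothetical helper written for this example. It only shows that "Apoploe" begins like "Apodidae" and continues like "Ploceidae".

```python
def common_prefix(a: str, b: str) -> str:
    """Longest shared prefix of a and b, ignoring case."""
    a, b = a.lower(), b.lower()
    n = 0
    for x, y in zip(a, b):
        if x != y:
            break
        n += 1
    return a[:n]


gibberish = "Apoploe"

# The start of the gibberish word overlaps with the first family name.
head = common_prefix(gibberish, "Apodidae")
# The remainder overlaps with the start of the second family name.
tail = common_prefix(gibberish[len(head):], "Ploceidae")

print(head, tail)  # apo plo
```

Character overlap is weaker evidence than shared BPE tokens, so this is best read as a quick plausibility check.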

Our gibberish tokens might have many meanings.

@benjamin_hilton ran "Contarra ccetnxniams luryca tanniounons" and pointed out that not all of the results are bugs. Indeed, our gibberish text produces the target concept in a statistically significant fraction of images, but rarely in 100% of them. (7/N)

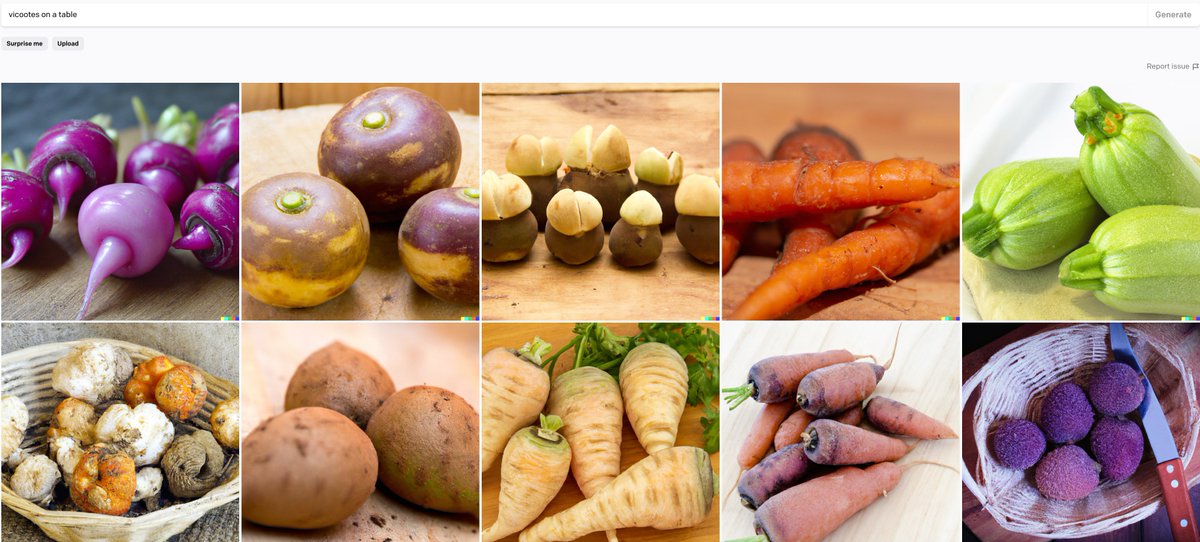

Our gibberish tokens have varying degrees of robustness in combinations with contexts.

E.g. if xx produces birds, ‘xx flying’ is an easy prompt, ‘xx on a table’ is a neutral prompt, and ‘xx in space’ is a hard prompt.

(8/N)
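
A robustness check along these lines could be organized as below. This is a minimal sketch: the three templates come from the tweet above, while `build_prompts` and `match_rate` are hypothetical helper names, and the image generation and labeling steps are left out because they depend on access to the model.

```python
# Context templates from the thread, keyed by how hard the context is.
TEMPLATES = {
    "easy": "{token} flying",
    "neutral": "{token} on a table",
    "hard": "{token} in space",
}


def build_prompts(token: str) -> dict[str, str]:
    """Fill each difficulty template with the gibberish token."""
    return {level: t.format(token=token) for level, t in TEMPLATES.items()}


def match_rate(labels: list[bool]) -> float:
    """Fraction of generated images judged to show the target concept."""
    return sum(labels) / len(labels) if labels else 0.0


for level, prompt in build_prompts("Apoploe").items():
    print(f"{level}: {prompt}")
```

Each prompt would then be sent to the generator, the images labeled by hand, and `match_rate` compared across the three difficulty levels.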

Our hidden vocabulary seems robust in easy and sometimes neutral prompts but not in hard ones.

These tokens may produce low confidence in the generator, and small perturbations move it in random directions.

"vicootes" means vegetables in some contexts and not in others. (9/N)

We want to emphasize that this is an adversarial attack and hence does not need to work all the time.

If a system behaves in an unpredictable way, even if that happens 1/10 times, that is still a massive security and interpretability issue, worth understanding. (10/N, N=10).

@benjamin_hilton, @realmeatyhuman, @BarneyFlames, @mattgroh, @rctatman, @Plinz, @Thomas_Woodside hopefully some of your concerns are addressed! Let us know what you think.

We will update the pre-print with this discussion:

arxiv.org/abs/2206.00169
