This neural network architecture, showcased at the @Tesla AI Day, is a perfect example of Deep Learning at its finest. Mix and match all the greatest innovations to do something drastic and super ambitious. Congrats!

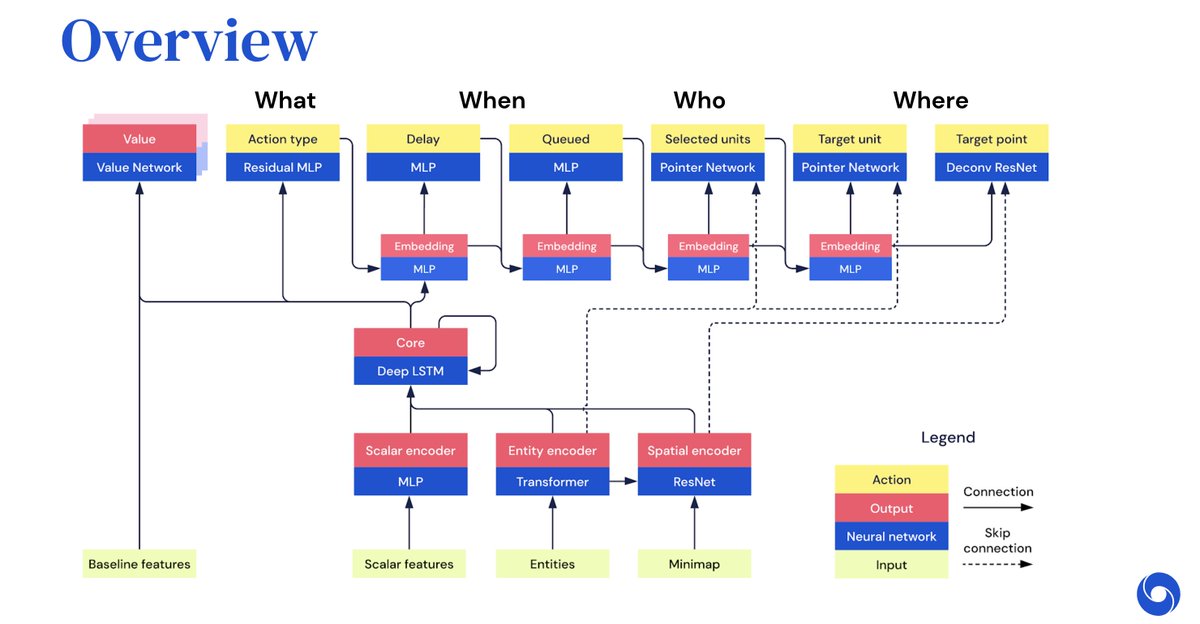

Treating the job of figuring out valid "lanes" from images as language is brilliant. Combining CNNs, transformers, attention, pointer networks, etc., you essentially write a set of instructions that builds up the graph: connecting the dots, starting new lanes, setting curvature, etc.
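To make the "lanes as language" idea concrete, here is a toy sketch of replaying a sequence of instructions into a lane graph. Everything in it is my own assumption for illustration: the token names (`START`, `EXTEND`, `LINK`), the `LaneGraph` structure, and the hard-coded sequence are hypothetical, not the actual vocabulary or model. In the real system a network would predict the tokens one by one from image features; here they are simply written out by hand.

```python
from dataclasses import dataclass, field


@dataclass
class LaneGraph:
    nodes: list = field(default_factory=list)  # (x, y) points
    edges: list = field(default_factory=list)  # (src_index, dst_index, curvature)


def decode(tokens):
    """Replay a sequence of (hypothetical) lane tokens into a graph."""
    graph = LaneGraph()
    current = None  # index of the node the next segment extends from
    for op, *args in tokens:
        if op == "START":
            # Begin a new lane at a fresh point.
            x, y = args
            graph.nodes.append((x, y))
            current = len(graph.nodes) - 1
            continue
        if current is None:
            raise ValueError(f"{op} before any START token")
        if op == "EXTEND":
            # Add a new point and connect it to the current one.
            x, y, curvature = args
            graph.nodes.append((x, y))
            new = len(graph.nodes) - 1
            graph.edges.append((current, new, curvature))
            current = new
        elif op == "LINK":
            # Pointer-style step: connect to an already existing node by index.
            target, curvature = args
            graph.edges.append((current, target, curvature))
            current = target
        else:
            raise ValueError(f"unknown token: {op}")
    return graph


# A hand-written "sentence": one lane going forward, a second lane merging into it.
tokens = [
    ("START", 0, 0),
    ("EXTEND", 0, 10, 0.0),
    ("EXTEND", 2, 20, 0.1),
    ("START", 5, 0),
    ("LINK", 1, -0.2),
]
graph = decode(tokens)
print(graph.nodes)
print(graph.edges)
```

The `LINK` token is the loose analogue of a pointer network here: instead of emitting new coordinates, it points back at something the sequence has already produced, which is how a merge or fork can be expressed in a flat token stream.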

This isn't new ML, but who cares? Applied at the level of ambition of full-scale, real-world impact, with the right team, execution (and compute/data!), you can do things that felt impossible before. Both the architecture and the cool use of language heavily reminded me of AlphaStar.

With all the debates on who-claimed-what-when, ego wars, research prophecies, and other nonsense filling my Twitter feed these days, it is sobering to see what good execution can achieve. Congratulations to all those involved in this and similar projects!
