So the Chrome team (or is it the AVIF team? I am a bit confused now) finally released the data that was the basis for the decision to remove JPEG XL support in Chrome. Here it is:

storage.googleapis.com/avif-compariso…

storage.googleapis.com/avif-compariso…

It would be good if everyone with experience in image compression took a closer look at this data and perhaps tried to reproduce it. I will certainly do the same. For now, here are some initial remarks and impressions.

Decode speed: why is this measured in Chrome version 92, and not a more recent version? Improvements in jxl decode speed have been made since then — that version of Chrome is more than 1.5 years old.

Encode speed: these measurements seem somewhat strange, and do not correspond to my own measurements. It would be interesting to check this with independent measurements.

Note that the fastest avif encode speed is not very useful for still images (it is not better than jpeg).

Quality. What is strange is that only objective metrics are used, while it is well known that subjective evaluation is needed to reach any useful conclusions — trusting just the metrics is not a good idea.

In particular, MS-SSIM in YCbCr is not a very reliable metric to compare an encoder that works in YCbCr and optimizes for SSIM (like the avifenc setting they used) against an encoder that works in XYB and optimizes for perceptual quality (like libjxl).

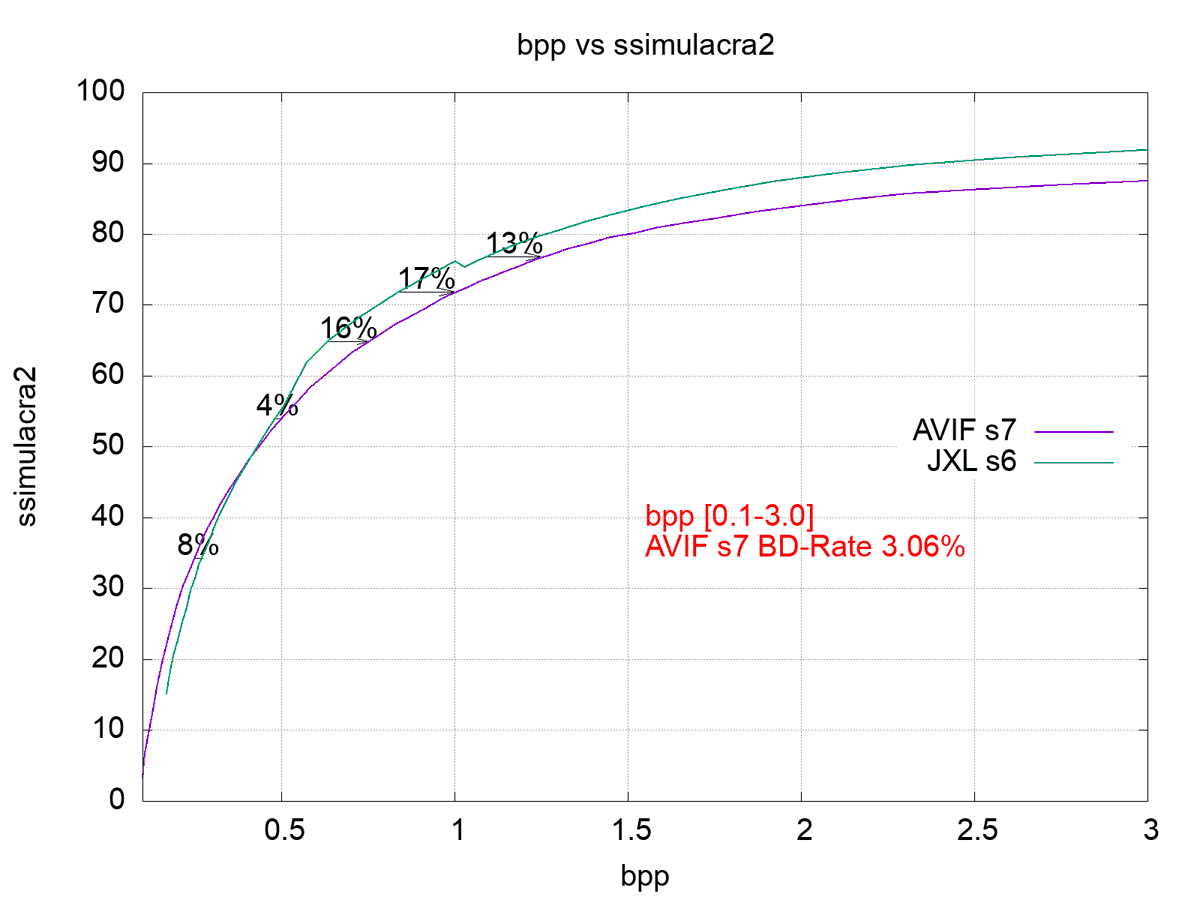

Also, the bitrate range selected for computing BD rates (going as low as 0.1 bpp) leads to an overemphasis on codec performance at fidelity ranges nobody uses in practice. The median AVIF image on the web is around 1 bpp, not 0.1 bpp.

For example, on this plot you see that their data confirms and even exceeds my estimate that JXL compresses 10-15% better than AVIF in the range that is relevant for the web. However, by putting a lot of weight on the < 0.4 bpp range, the average over the whole plot comes out as "JXL is 3% better".
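To make the averaging effect concrete, here is a minimal sketch of a BD-rate-style calculation. It is a simplification: it uses piecewise-linear interpolation of log(bpp) against a quality score instead of the usual cubic fit. The two rate/quality curves, the codec names and the quality scale are all made up for illustration and are not taken from the released data.

```python
import math

def interp(x, xs, ys):
    """Piecewise-linear interpolation of y at x; xs must be increasing."""
    for i in range(len(xs) - 1):
        if xs[i] <= x <= xs[i + 1]:
            t = (x - xs[i]) / (xs[i + 1] - xs[i])
            return ys[i] + t * (ys[i + 1] - ys[i])
    raise ValueError("x is outside the sampled range")

def bd_rate(anchor, test, q_lo, q_hi, steps=1000):
    """Average bitrate difference (%) of `test` relative to `anchor`
    over the quality interval [q_lo, q_hi]. Negative means `test` is smaller.
    Each curve is a list of (bpp, quality) points sorted by quality."""
    a_q = [q for _, q in anchor]
    a_r = [math.log(bpp) for bpp, _ in anchor]
    t_q = [q for _, q in test]
    t_r = [math.log(bpp) for bpp, _ in test]
    total = 0.0
    for i in range(steps):
        # Midpoint rule: sample the log-rate gap evenly across the interval.
        q = q_lo + (q_hi - q_lo) * (i + 0.5) / steps
        total += interp(q, t_q, t_r) - interp(q, a_q, a_r)
    return (math.exp(total / steps) - 1.0) * 100.0

# Hypothetical curves: codec B loses at very low bitrates, wins at higher ones.
codec_a = [(0.10, 60), (0.20, 70), (0.40, 80), (0.80, 90), (1.60, 96)]
codec_b = [(0.11, 60), (0.21, 70), (0.38, 80), (0.70, 90), (1.36, 96)]

full = bd_rate(codec_a, codec_b, 60, 96)   # includes the ~0.1 bpp end
high = bd_rate(codec_a, codec_b, 80, 96)   # roughly 0.4 bpp and up
print(f"full range: {full:.1f}%, higher-fidelity range only: {high:.1f}%")
```

With these invented numbers, the saving over the whole interval comes out much smaller than the saving over the higher-fidelity part alone, simply because the low-bitrate end pulls the average towards zero. The choice of integration range is part of the result.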

For lossless compression it would be interesting to look at different speed settings too. But this is less relevant for the web.

The choice of test sets is somewhat strange. In particular, the "noto-emoji" set is an odd pick, since these images are better kept as vector graphics. Dropping the alpha channel is also hard to justify here. It seems like a cherry-picked and not very relevant test set.

I would also have expected a discussion or even some benchmarks related to other relevant aspects, like progressive decoding, header overhead / small images, jpeg recompression, etc.

Anyway, those are some of my initial thoughts. I will have a closer look after the weekend.
