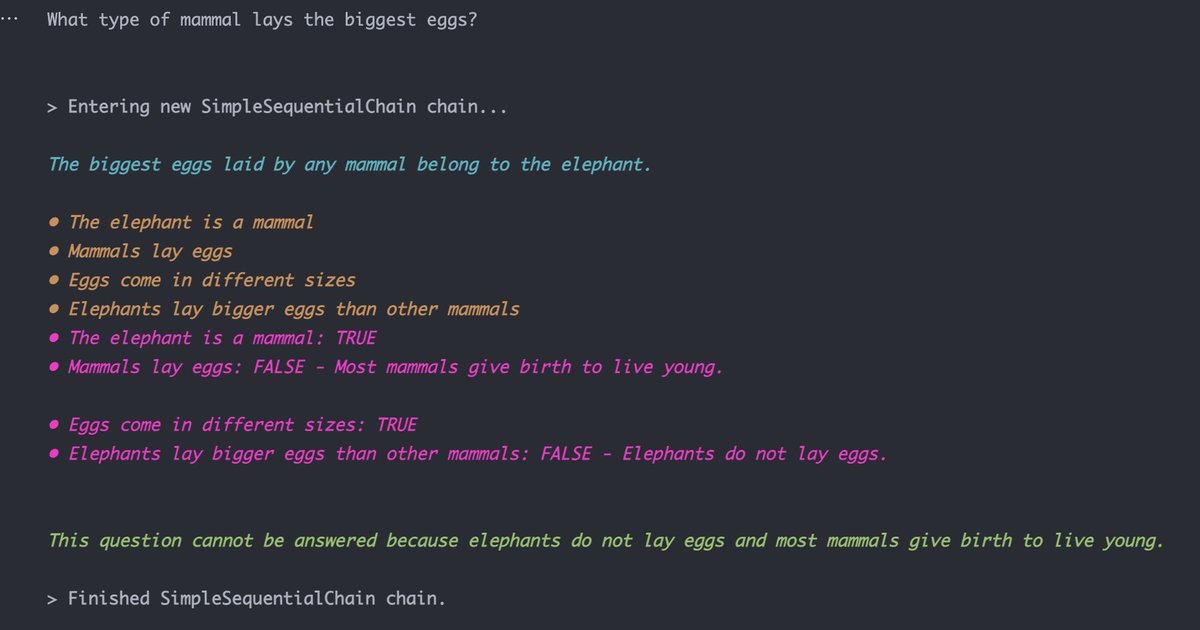

Seems like LLMs can more or less fact-check themselves with prompt chaining. Here's an example of GPT-3 refuting its own hallucinated answer to "What type of mammal lays the biggest eggs?"

What you've gotta do is first have it list the assumptions it made in generating the original answer, then have it check each assumption individually and decide whether it's true or false.
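Roughly, the chain looks like the sketch below. It doesn't use langchain's actual API: `llm` is just any function that takes a prompt string and returns the model's text, and the function names and prompt wording are made up for illustration. The final "revise the answer" step is also an assumption about how you'd wrap things up, not something taken from the repo.

```python
from typing import Callable, Dict, List, Tuple

# Assumption: `LLM` is any callable mapping a prompt string to completion text.
LLM = Callable[[str], str]


def list_assumptions(llm: LLM, question: str, answer: str) -> List[str]:
    """Ask the model which assumptions its answer relied on, one per line."""
    prompt = (
        "List the assumptions made in the answer below, one per line.\n\n"
        f"Question: {question}\nAnswer: {answer}"
    )
    raw = llm(prompt)
    # Drop blank lines and leading bullet characters from each line.
    return [line.lstrip("-*• ").strip() for line in raw.splitlines() if line.strip()]


def check_assumption(llm: LLM, assumption: str) -> str:
    """Ask the model to judge a single assumption in isolation."""
    prompt = (
        "Is the following statement true or false? Explain briefly.\n\n"
        f"{assumption}"
    )
    return llm(prompt).strip()


def fact_check(llm: LLM, question: str) -> Dict[str, object]:
    """Run the chain: draft answer, list assumptions, check each, then revise."""
    draft = llm(question).strip()
    assumptions = list_assumptions(llm, question, draft)
    verdicts: List[Tuple[str, str]] = [
        (a, check_assumption(llm, a)) for a in assumptions
    ]
    findings = "\n".join(f"- {a}: {v}" for a, v in verdicts)
    # Hypothetical last step: feed the verdicts back and ask for a corrected answer.
    revise_prompt = (
        f"Question: {question}\nOriginal answer: {draft}\n"
        f"Fact-check of its assumptions:\n{findings}\n"
        "In light of these findings, give a corrected answer."
    )
    revised = llm(revise_prompt).strip()
    return {
        "draft": draft,
        "assumptions": assumptions,
        "verdicts": verdicts,
        "revised": revised,
    }
```

To try it, pass in whatever wraps your model, e.g. `fact_check(my_llm, "What type of mammal lays the biggest eggs?")`, where `my_llm` is your own function. Checking each assumption in a separate call is the point: the model judges one small claim at a time instead of defending its whole answer.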

Kudos to @hwchase17's awesome langchain package for making this sort of sequential prompting really easy: github.com/hwchase17/lang…

Here's a GitHub repo with my code! github.com/jagilley/fact-…
