📢📣 We've got a new paper out from @CSMaP_NYU today in @NatureComms 📢

Russia's foreign influence campaign on Twitter in 2016 caused widespread concern. But who was exposed? How effective was it? 🧵👇

1/

nature.com/articles/s4146…

Russia's efforts to interfere in the 2016 election have been widely documented by news media and investigators. But researchers' understanding of exactly how influential these campaigns were has been limited by the available data. 2/

To investigate the relationship between Russia’s Twitter campaign and political attitudes, we paired results from a 3-wave longitudinal survey of US respondents (conducted by YouGov) with a collection of respondents' Twitter timelines during the 8 months prior to Election Day. 3/

We then used data released by Twitter to identify which posts in the respondents' timelines had originated from Russia's Internet Research Agency campaign, which intended to reach voters in the lead-up to the 2016 election. 4/
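For readers who want a concrete picture of this matching step, here is a minimal sketch, not the paper's actual pipeline. It assumes the released data can be reduced to a set of flagged account IDs and that each timeline is a list of posts with an author ID; the names `timelines`, `author_id`, and `ira_account_ids` are hypothetical and the data is a toy example.

```python
def count_ira_exposures(timelines, ira_account_ids):
    """Count, per respondent, the timeline posts authored by a flagged account.

    timelines: dict mapping respondent ID -> list of post dicts (hypothetical shape)
    ira_account_ids: set of account IDs flagged in the released data (assumed format)
    """
    exposures = {}
    for respondent_id, posts in timelines.items():
        exposures[respondent_id] = sum(
            1 for post in posts if post["author_id"] in ira_account_ids
        )
    return exposures


# Toy data only; none of these IDs come from the paper.
ira_account_ids = {"a1", "a2"}
timelines = {
    "r1": [{"author_id": "a1"}, {"author_id": "n9"}, {"author_id": "a2"}],
    "r2": [{"author_id": "n9"}],
}
exposure_counts = count_ira_exposures(timelines, ira_account_ids)
```

Using a set for the flagged IDs keeps each lookup constant-time, which matters when timelines hold many posts.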

Based on our analysis, it appears unlikely that the Russian foreign influence campaign *on Twitter* could have had much more than a relatively minor influence on individual-level attitudes and voting behavior for four related reasons. 5/

1⃣ Exposure to Russian coordinated influence accounts was heavily concentrated among a small portion of the electorate -- 1 percent of users accounted for 70 percent of exposures. See Panel B of Figure 1👇.

6/

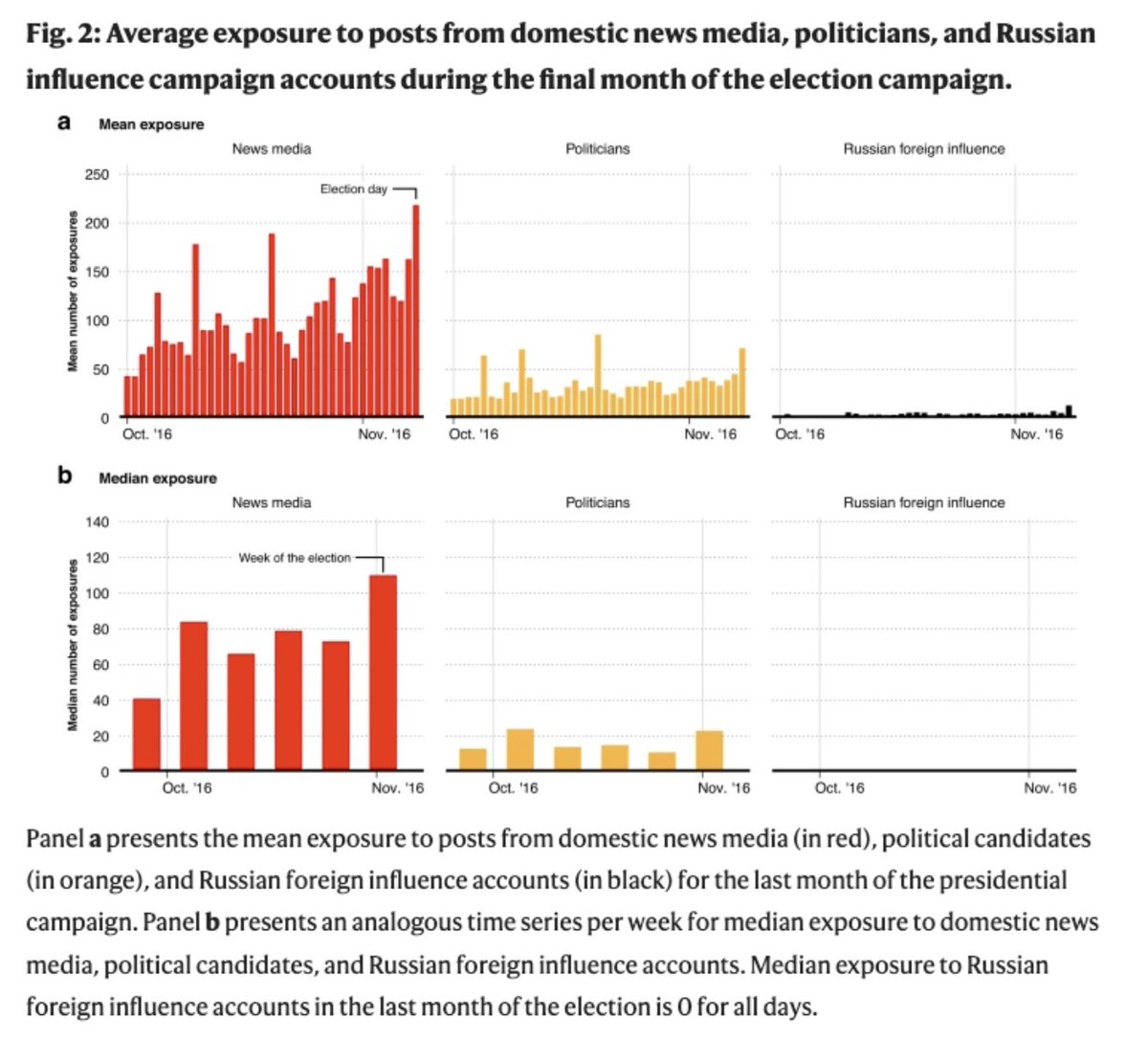
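A concentration figure like "1 percent of users accounted for 70 percent of exposures" can be computed from per-user exposure counts. The sketch below is illustrative only: the function `top_share` and the toy counts are assumptions, chosen so the toy result mirrors the headline number, and are not the study's data.

```python
def top_share(exposure_counts, top_percent):
    """Share of all exposures held by the most-exposed `top_percent` of users.

    exposure_counts: list of non-negative exposure counts, one per user
    top_percent: integer percentage of users to treat as the "top" group
    """
    counts = sorted(exposure_counts, reverse=True)
    total = sum(counts)
    if total == 0:
        return 0.0
    # Integer arithmetic avoids floating-point surprises in the group size.
    k = max(1, len(counts) * top_percent // 100)
    return sum(counts[:k]) / total


# Toy data: 100 users, one heavily exposed, 30 lightly exposed, 69 not at all.
toy_counts = [70] + [1] * 30 + [0] * 69
share_top_1_percent = top_share(toy_counts, 1)
```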

2⃣ Exposure to Russian foreign influence tweets was significantly eclipsed by content from domestic news media and politicians.

On avg, in Oct 2016, respondents saw:

4 posts/day from Russian foreign influence accts

106/day from national news media

35/day from politicians

7/
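To put those daily averages side by side, here is a quick back-of-the-envelope calculation. It uses only the three figures quoted above; these three sources are not the whole timeline, so the share below is relative to their sum, not to everything respondents saw. The variable names are just for illustration.

```python
# Average posts per day, as quoted in the thread.
posts_per_day = {
    "russian_foreign_influence": 4,
    "national_news_media": 106,
    "politicians": 35,
}

total_of_three = sum(posts_per_day.values())

# Share of these three sources combined (NOT of the full timeline).
russian_share = posts_per_day["russian_foreign_influence"] / total_of_three

# How many news-media and politician posts appeared per Russian-account post.
news_per_russian_post = (
    posts_per_day["national_news_media"] / posts_per_day["russian_foreign_influence"]
)
politician_per_russian_post = (
    posts_per_day["politicians"] / posts_per_day["russian_foreign_influence"]
)

print(f"{russian_share:.1%} of posts from these three sources")
```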

3⃣ 98% of exposures were concentrated among only 10% of respondents. Those who identified as "Strong Republicans" were exposed to roughly 9 times as many posts from Russian foreign influence accounts as were those who identified as Democrats or Independents. 8/

4⃣ Finally, we did not detect any relationships between exposure to posts from Russian foreign influence accounts and changes in respondents' attitudes, polarization, or voting behavior. 9/

Despite these results, it would be a mistake to conclude that, simply because Russia's Twitter campaign wasn't meaningfully related to individual-level attitudes, it didn't have any impact on the election or on faith in American electoral integrity. 10/

Indeed, debate about the 2016 US election continues to raise questions about the legitimacy of the Trump presidency & the electoral system, which in turn could be related to Americans’ willingness to accept claims of voter fraud in the 2020 election. 11/

Such beliefs may stem from speculation that Russian interference on social media influenced the election outcome. In short, Russia's social media campaign may have had its largest effects *indirectly* by convincing Americans that its campaign was successful. 12/

Nevertheless, our results hopefully provide an important corrective to the view that Russia's foreign influence campaign on social media easily manipulated the attitudes & voting behavior of ordinary Americans. 13/

Thanks to @GregoryEady @tpaskhalis, the co-lead authors on this paper, for their amazing work, as well as the rest of my co-authors @janzilinsky @RichBonneauNYC & @Jonathan_Nagler! You can read the full article (#OpenAccess!) here: nature.com/articles/s4146… 14/

You can also find a summary of the findings on the @CSMaP_NYU website.

15/

csmapnyu.org/news-views/new…

Thanks to @timstarks & @aaronjschaffer for their coverage in today's Cybersecurity 202 @washingtonpost:

washingtonpost.com/politics/2023/…

16/

Some further follow up on exactly what the paper does and does not reveal in this 🧵 👇

18/17

https://twitter.com/CSMaP_NYU/status/1612885934445367297
