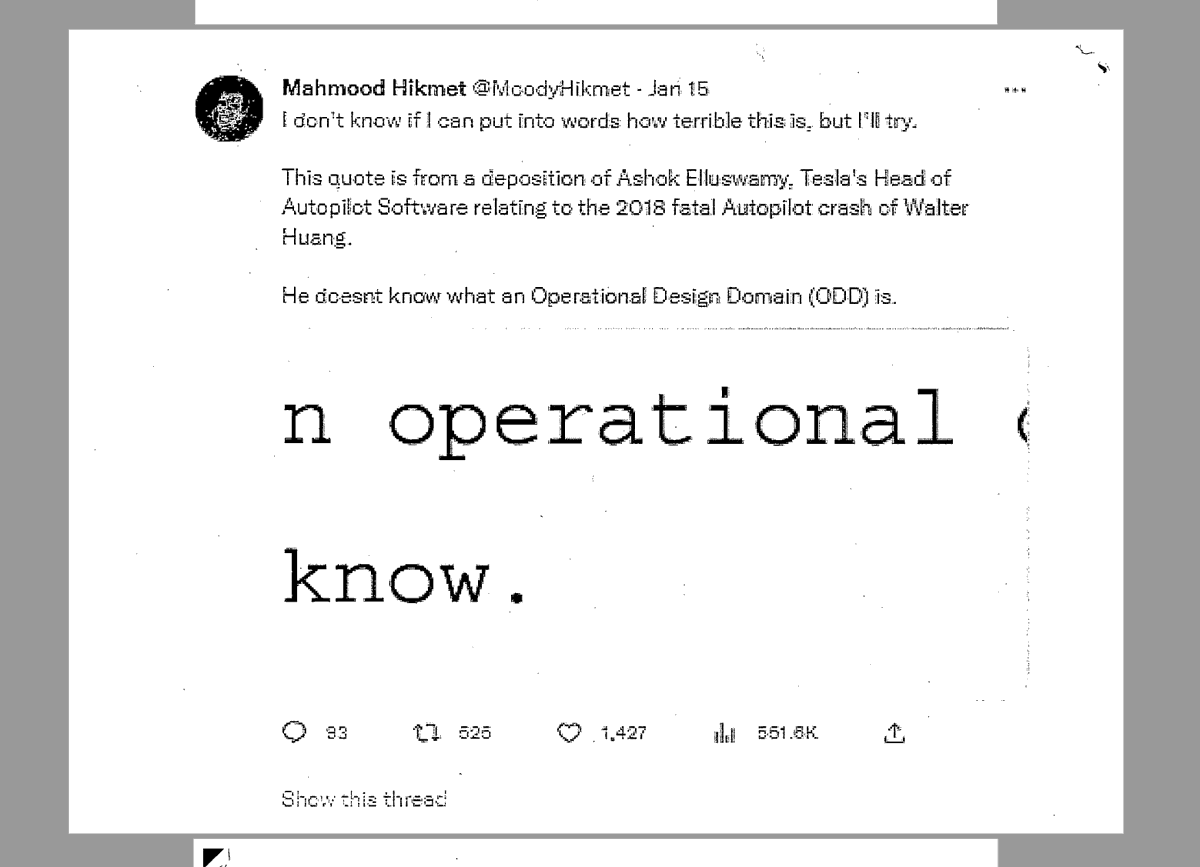

So, Tesla's lawyers saw my tweets from a couple weeks ago about the deposition transcripts and they didn't appreciate them very much.

Here is a video about it:

In Huang vs Tesla, Tesla has filed a request to retroactively and proactively mark all depositions confidential due to this thread and the media fallout from the deposition:

https://twitter.com/MoodyHikmet/status/1614743058092019712

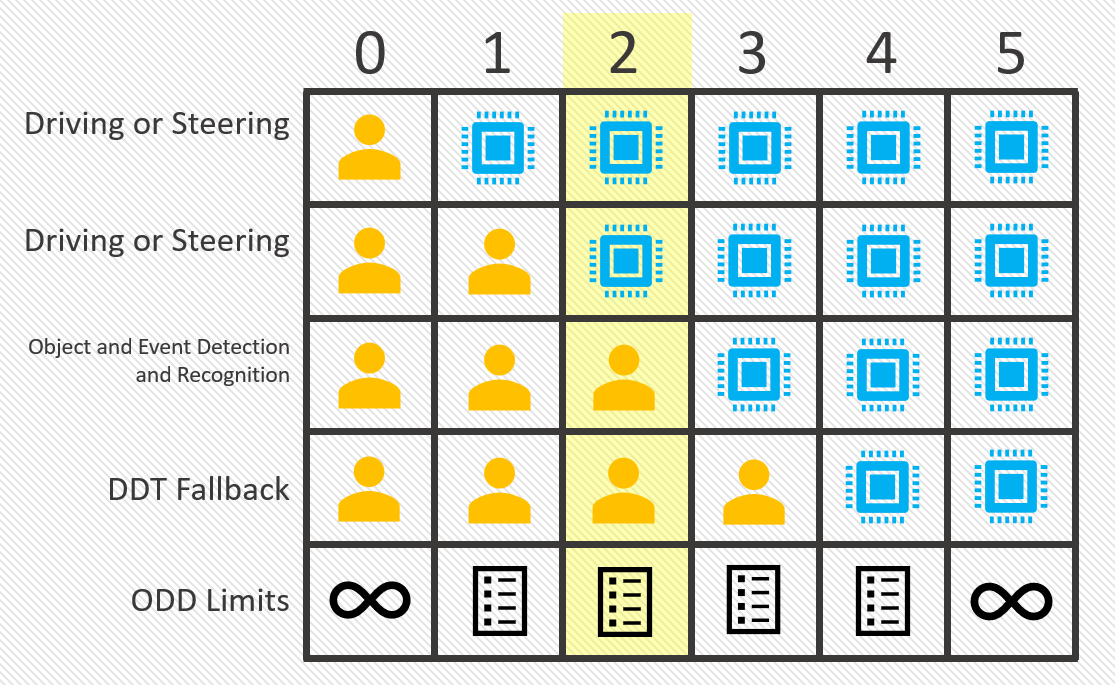

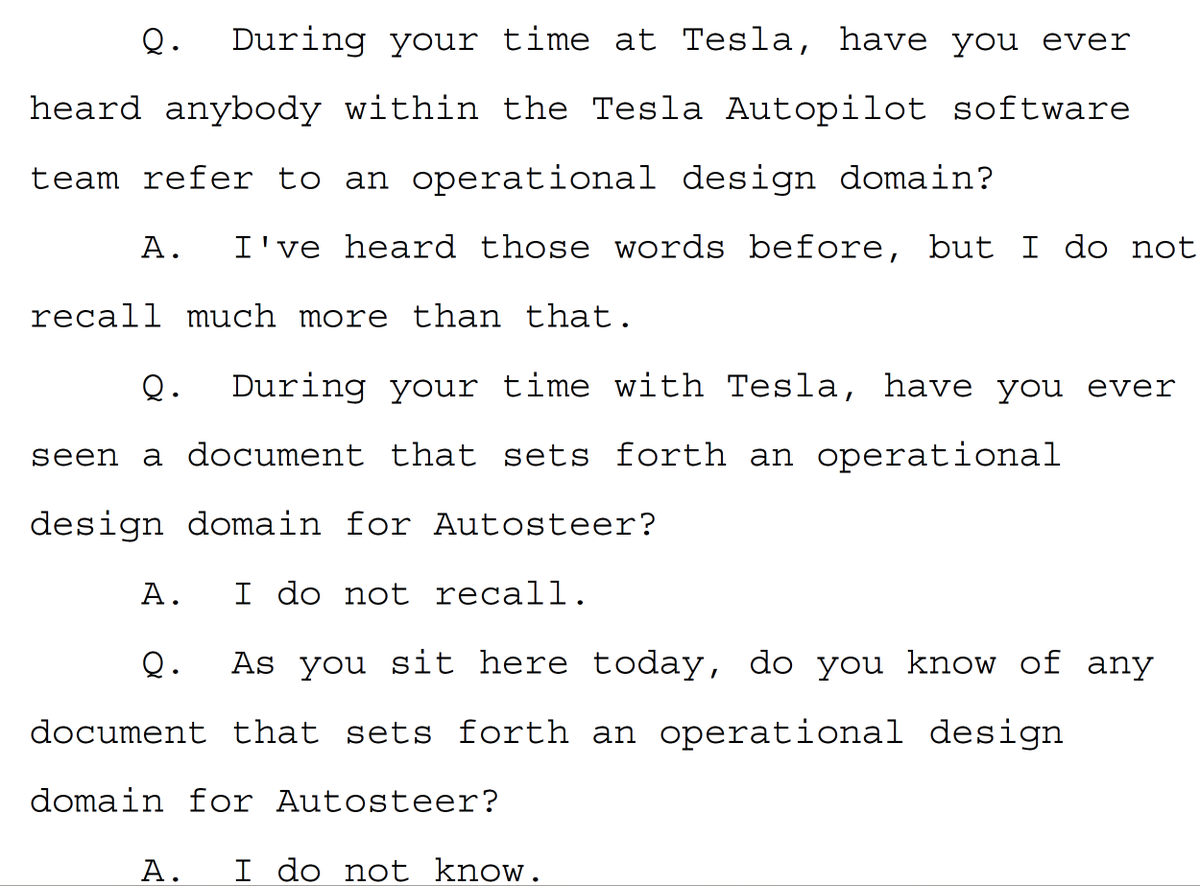

One of the things I pointed to was Ashok Elluswamy's apparent lack of knowledge of what an Operational Design Domain is. This is Automated Vehicles 101 stuff, but as Tesla's Head of Autopilot Software, he didn't seem to know.

Let's be generous and say he just blanked.

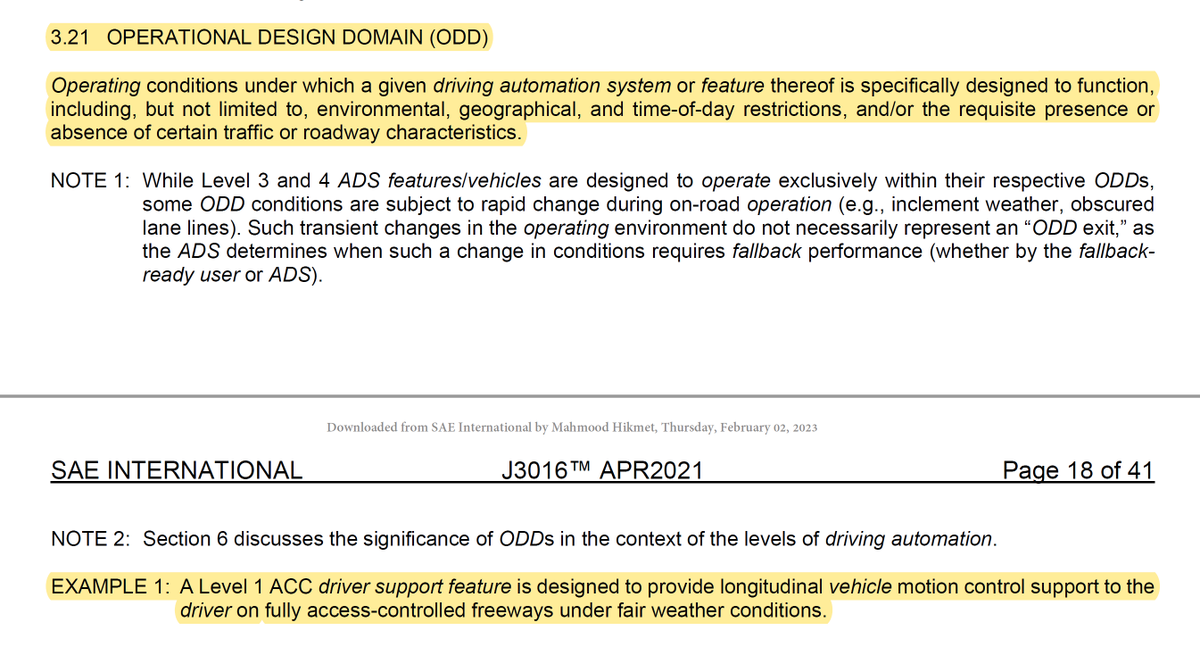

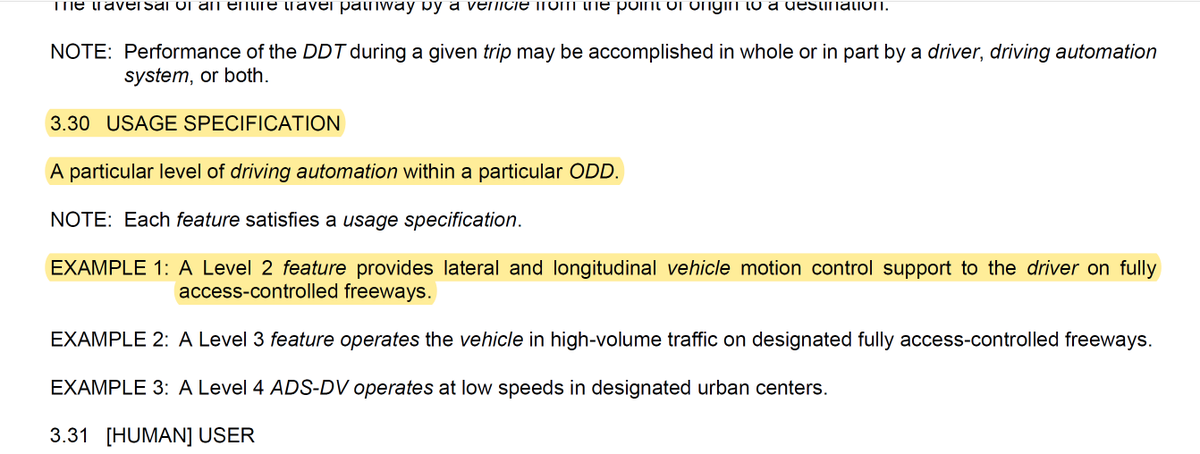

Tesla's lawyers, in a legal and publicly accessible filing, claim that the operational design domain is a concept that is ENTIRELY IRRELEVANT for Level 2 Systems...

This is Tesla's position.

I'm sorry... WHAT!!!!?!?!

This is factually and provably false.

Here are a couple of screenshots from SAE J3016 (free to download from here: sae.org/standards/cont…)

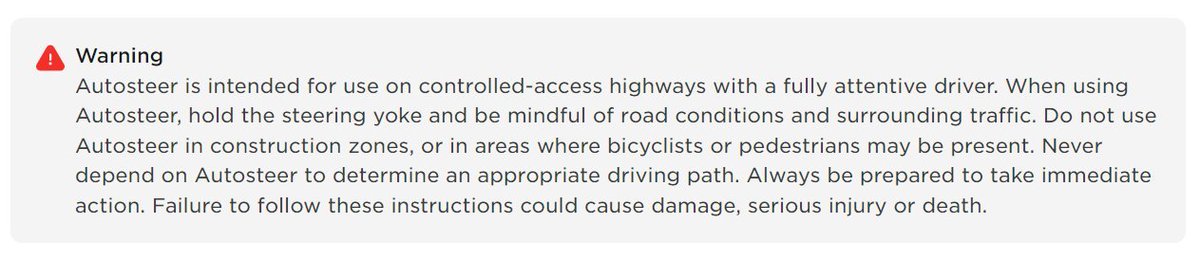

Also, here is the ODD for Autosteer (which you can find in any Tesla manual). Why would they add something "entirely irrelevant" in there?

This is no longer just an isolated incident where a single employee didn't know specific technical jargon - this negligence is company policy and strategy.

Their lawyers just said the quiet part out loud.

This is unheard of in this industry.

All of this is accessible on traffic.scscourt.org/search

Case Number: 19CV346663

You can see my printed and photocopied tweets in all their monochromatic glory.

As always, my DMs are open for anyone (even Tesla's lawyers) if they're looking to genuinely educate themselves on aspects of this industry.

I promise to not make fun of you for stupid questions.
