A short guide to engineering better GPT-3 prompts.

Here's how I redesigned GraphGPT's prompt to be faster, more efficient, and able to handle larger inputs:

Some quick background:

GraphGPT is a tool that generates knowledge graphs from unstructured text using OpenAI's GPT-3 large language model.

https://twitter.com/varunshenoy_/status/1620511932930490372?s=20

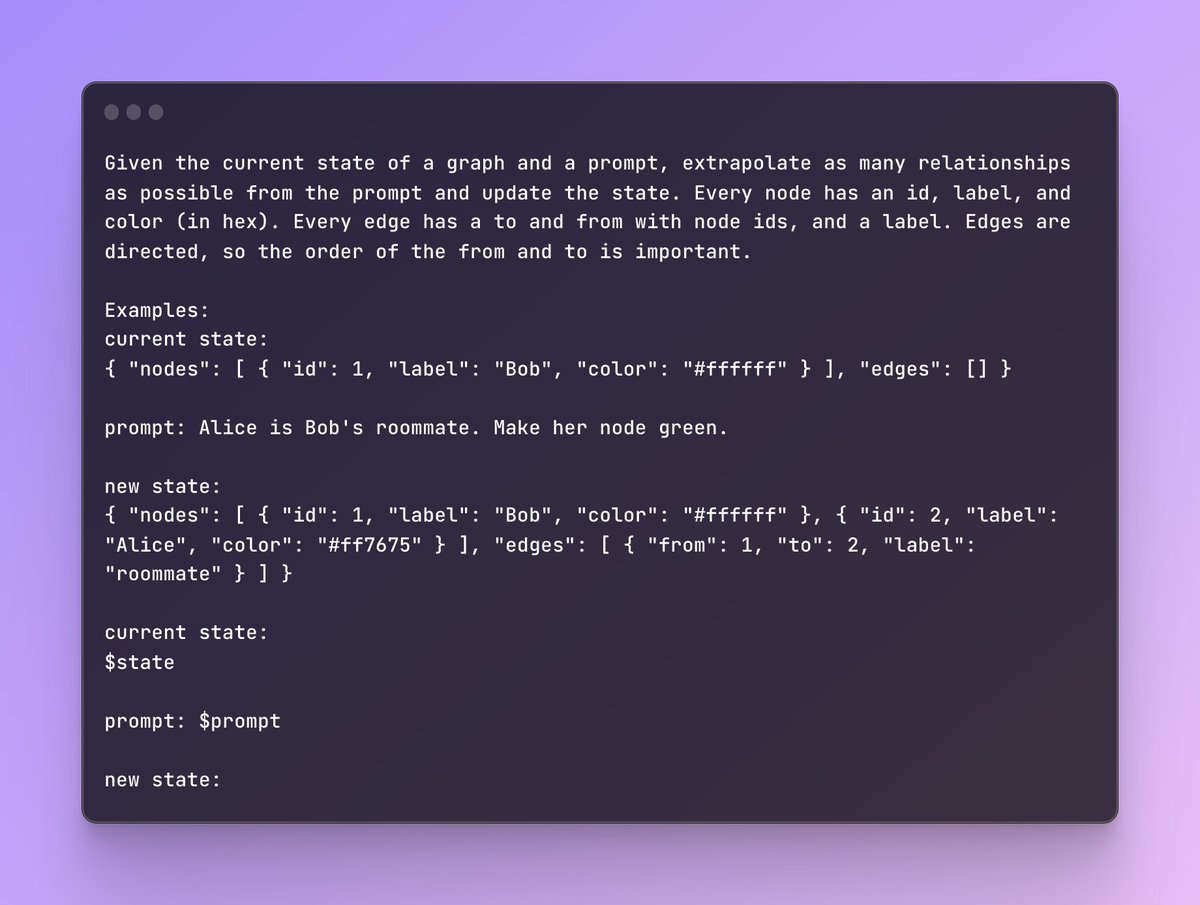

Let's take a look at the original prompt.

This is a one-shot prompt. We share one example of what a graph might look like after processing a sample prompt.

Note how the entire state of the graph is stored within the prompt.

While this is fine for most cases (especially for a weekend hack), it comes with some issues.

It's expensive! On every successive query, you have to pass the state as an input and receive an updated state as an output.

It's also slow. GPT has to regenerate the entire state in its output, including every token that hasn't changed.

There's a lot of redundancy in our state between prompts!

Finally, if your graph is large, you can run into limits imposed by MAX_TOKENS or context window size.

In sum: maintaining state within a prompt is costly.
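To make the cost argument concrete, here is a rough back-of-the-envelope sketch. The token counts are made-up illustrative numbers, not measurements from GraphGPT, and the model is simplified: it assumes the serialized state is about the same size going in and coming out.

```typescript
// Rough per-query token accounting. All field names and numbers are
// illustrative assumptions, not values taken from GraphGPT.
interface QuerySizes {
  instructionTokens: number; // fixed instructions plus the one-shot example
  textTokens: number;        // new user text sent with this query
  stateTokens: number;       // tokens needed to serialize the whole graph
  updateTokens: number;      // tokens needed to describe only the changes
}

// Stateful prompt: the full state is sent as input AND regenerated as output.
function statefulTokens(q: QuerySizes): number {
  return q.instructionTokens + q.textTokens + 2 * q.stateTokens;
}

// Stateless prompt: only the new text goes in, only the updates come out.
function statelessTokens(q: QuerySizes): number {
  return q.instructionTokens + q.textTokens + q.updateTokens;
}

const example: QuerySizes = {
  instructionTokens: 300,
  textTokens: 100,
  stateTokens: 1500,
  updateTokens: 60,
};

console.log(statefulTokens(example));  // 3400
console.log(statelessTokens(example)); // 460
```

The gap widens as the graph grows, because `stateTokens` scales with the size of the graph while `updateTokens` scales only with the new text.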

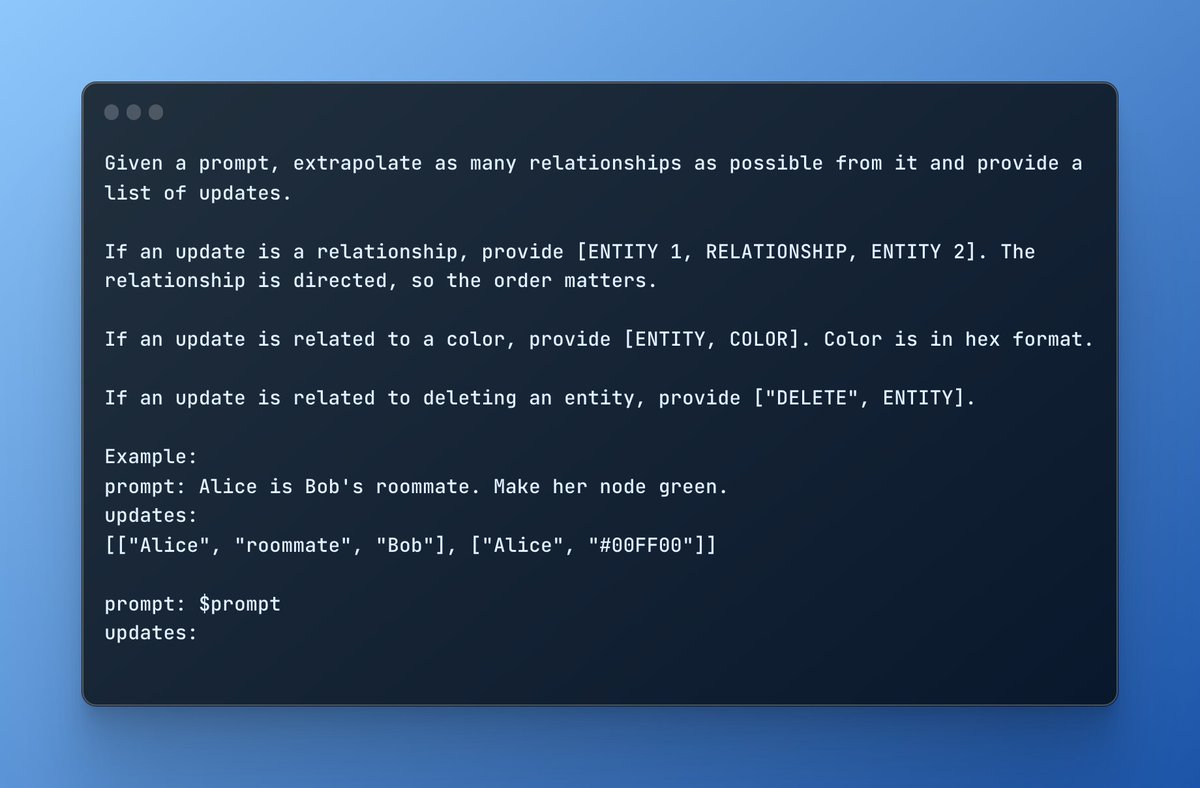

Ideally, we want a set of instructions we can carry out on the client side to update the state within the browser.

GraphGPT's new prompt is entirely stateless.

In the prompt, I teach a simple domain-specific language (DSL) to the model.

I restrict the model to three kinds of updates: identifying relationships, updating colors, or deleting entities.

This is the power of in-context learning!
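As a sketch of what such a DSL could look like on the client, here is one possible encoding and a parser for it. The exact format is an assumption for illustration (the thread only shows the prompt as an image): the model is assumed to return a JSON array of small string arrays, and `Update` and `parseUpdates` are hypothetical names.

```typescript
// Assumed (hypothetical) wire format returned by the model:
//   ["Alice", "is friends with", "Bob"]  -> relationship (directed)
//   ["Alice", "#ff0000"]                 -> color update
//   ["DELETE", "Bob"]                    -> delete an entity
type Update =
  | { kind: "relation"; source: string; label: string; target: string }
  | { kind: "color"; entity: string; color: string }
  | { kind: "delete"; entity: string };

function isStringArray(v: unknown): v is string[] {
  return Array.isArray(v) && v.every((x: unknown) => typeof x === "string");
}

// Turn the model's raw text output into typed updates.
// Entries that don't match one of the three shapes are skipped.
function parseUpdates(raw: string): Update[] {
  const parsed: unknown = JSON.parse(raw);
  if (!Array.isArray(parsed)) {
    throw new Error("expected a JSON array of updates");
  }
  const updates: Update[] = [];
  for (const item of parsed) {
    if (!isStringArray(item)) continue;
    if (item.length === 3) {
      updates.push({ kind: "relation", source: item[0], label: item[1], target: item[2] });
    } else if (item.length === 2 && item[0] === "DELETE") {
      updates.push({ kind: "delete", entity: item[1] });
    } else if (item.length === 2) {
      updates.push({ kind: "color", entity: item[0], color: item[1] });
    }
  }
  return updates;
}
```

Keeping the vocabulary this small makes the output easy to validate: anything that isn't one of the three shapes can simply be dropped.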

Our state is now entirely maintained within the browser. GPT generates far fewer tokens, reducing both cost and latency.

All we have to do is parse our DSL on the frontend and update our local state.
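Here is a minimal sketch of that client-side step, assuming the model's output has already been parsed into one of three update kinds. `Update`, `GraphState`, and `applyUpdates` are hypothetical names for illustration, not GraphGPT's actual code.

```typescript
// Hypothetical typed form of the three update kinds described above.
type Update =
  | { kind: "relation"; source: string; label: string; target: string }
  | { kind: "color"; entity: string; color: string }
  | { kind: "delete"; entity: string };

interface GraphState {
  nodes: Map<string, { color?: string }>;
  edges: { source: string; label: string; target: string }[];
}

// Apply a batch of updates and return a new state object; the previous
// state is left untouched, which suits React-style state handling.
function applyUpdates(state: GraphState, updates: Update[]): GraphState {
  const nodes = new Map(state.nodes);
  let edges = [...state.edges];
  for (const u of updates) {
    switch (u.kind) {
      case "relation":
        // Create either endpoint if we haven't seen it yet.
        if (!nodes.has(u.source)) nodes.set(u.source, {});
        if (!nodes.has(u.target)) nodes.set(u.target, {});
        edges.push({ source: u.source, label: u.label, target: u.target });
        break;
      case "color":
        nodes.set(u.entity, { ...nodes.get(u.entity), color: u.color });
        break;
      case "delete":
        // Remove the node and every edge that touches it.
        nodes.delete(u.entity);
        edges = edges.filter((e) => e.source !== u.entity && e.target !== u.entity);
        break;
    }
  }
  return { nodes, edges };
}
```

Because the graph lives only in the browser, each request to GPT carries just the new text, no matter how large the graph has become.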

We can now maintain much larger graphs and process massive chunks of text quickly.

In effect, we've saved time and money by being clever with how we manage state between our personal computer and GPT.

Interested in seeing the rest of the project?

Here's the repo for GraphGPT:

github.com/varunshenoy/Gr…
