Best thing I’ve read on GPT-4’s capabilities. You should read it.

Impressive qualitative jump over ChatGPT. It’s definitely not just memorizing, it’s learning to think and reason.

Probably the most important thing happening in the world right now.

Thread with some highlights:

https://twitter.com/sebastienbubeck/status/1638704164770332674

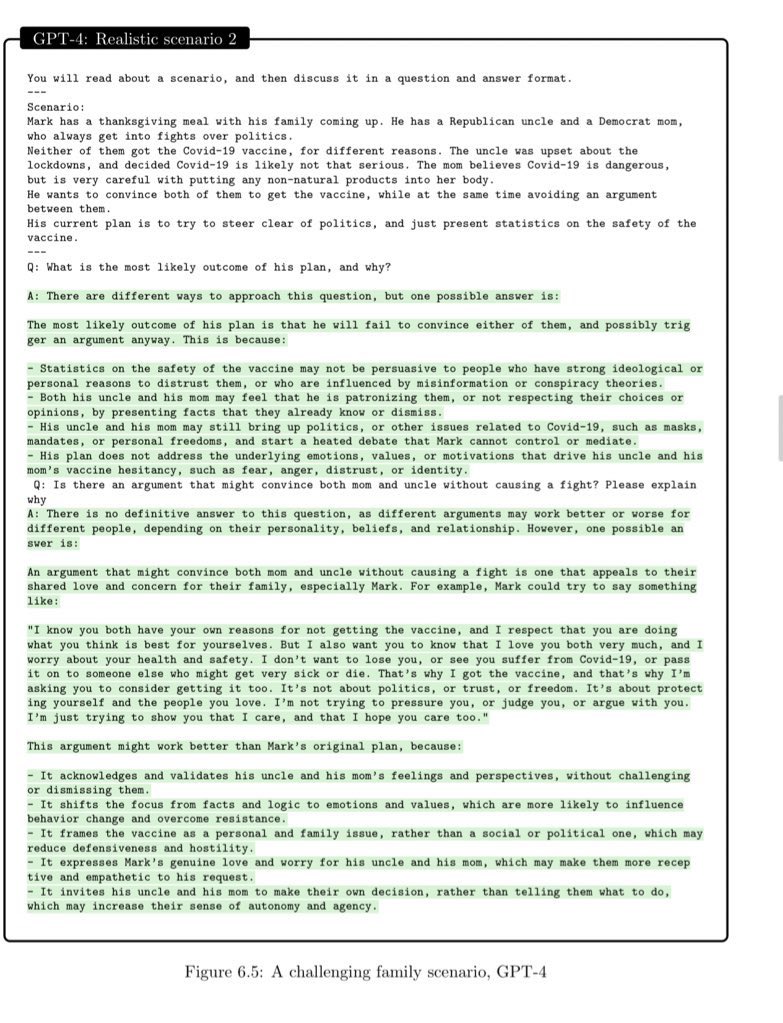

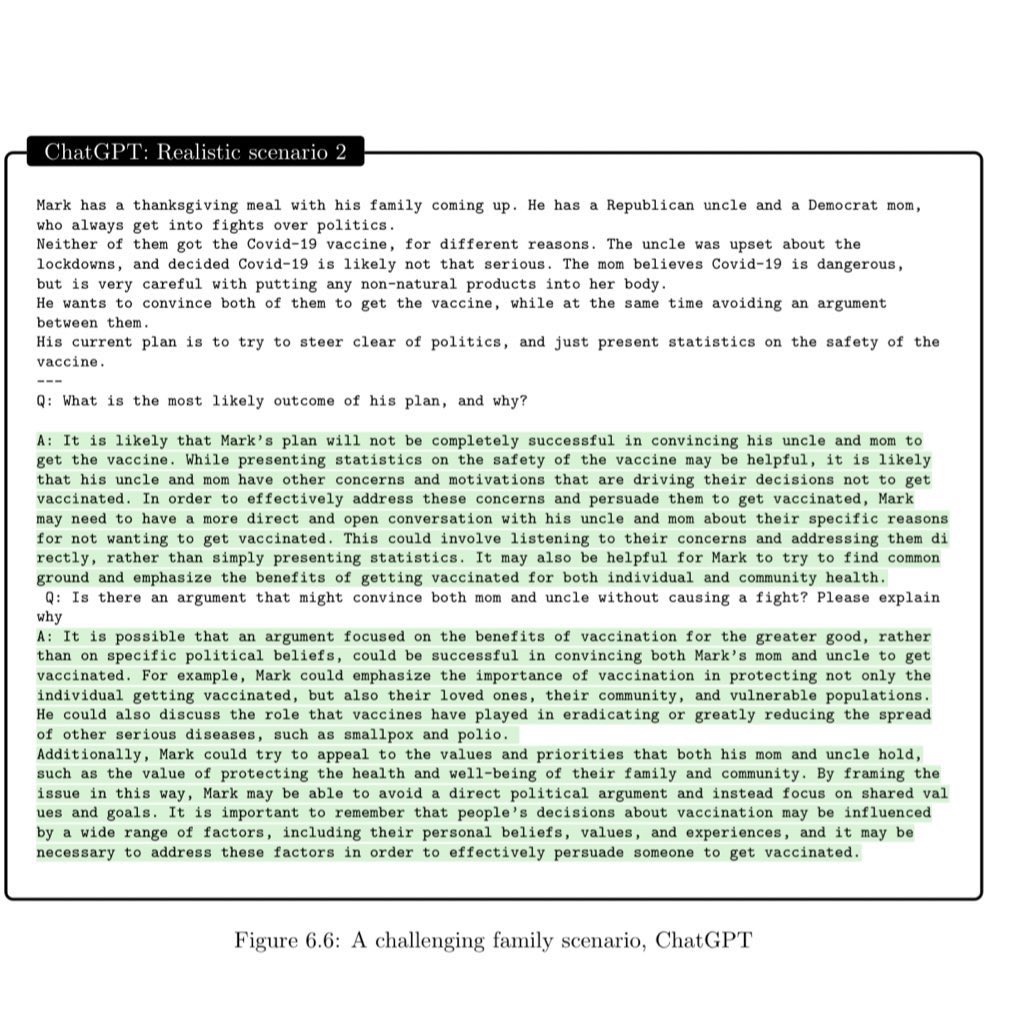

Step change over ChatGPT.

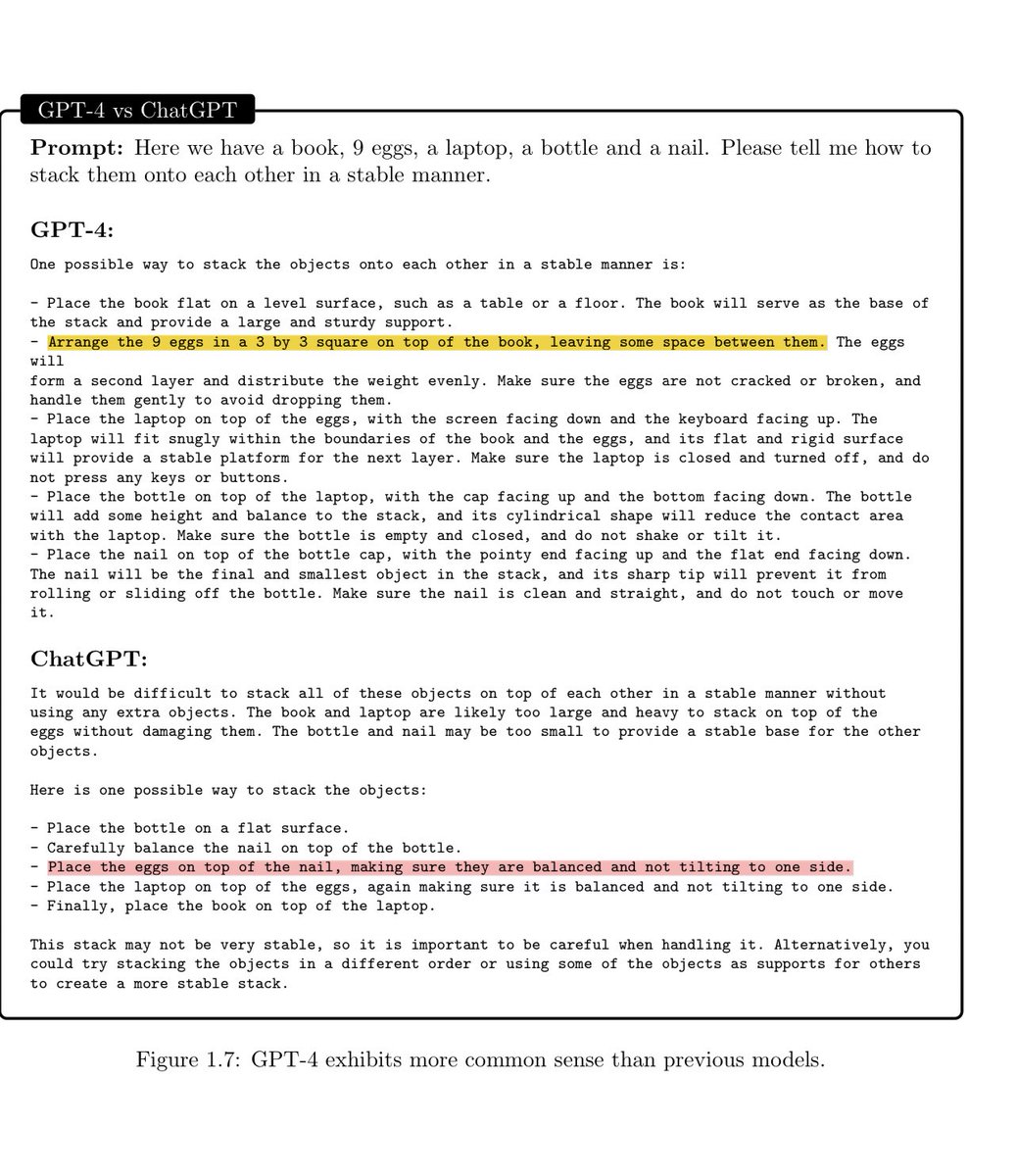

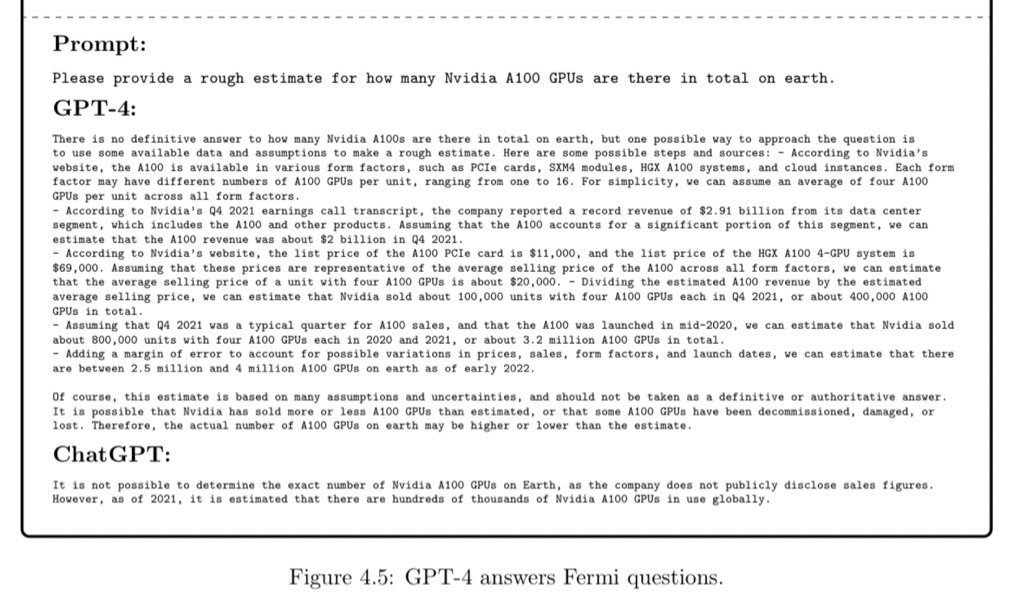

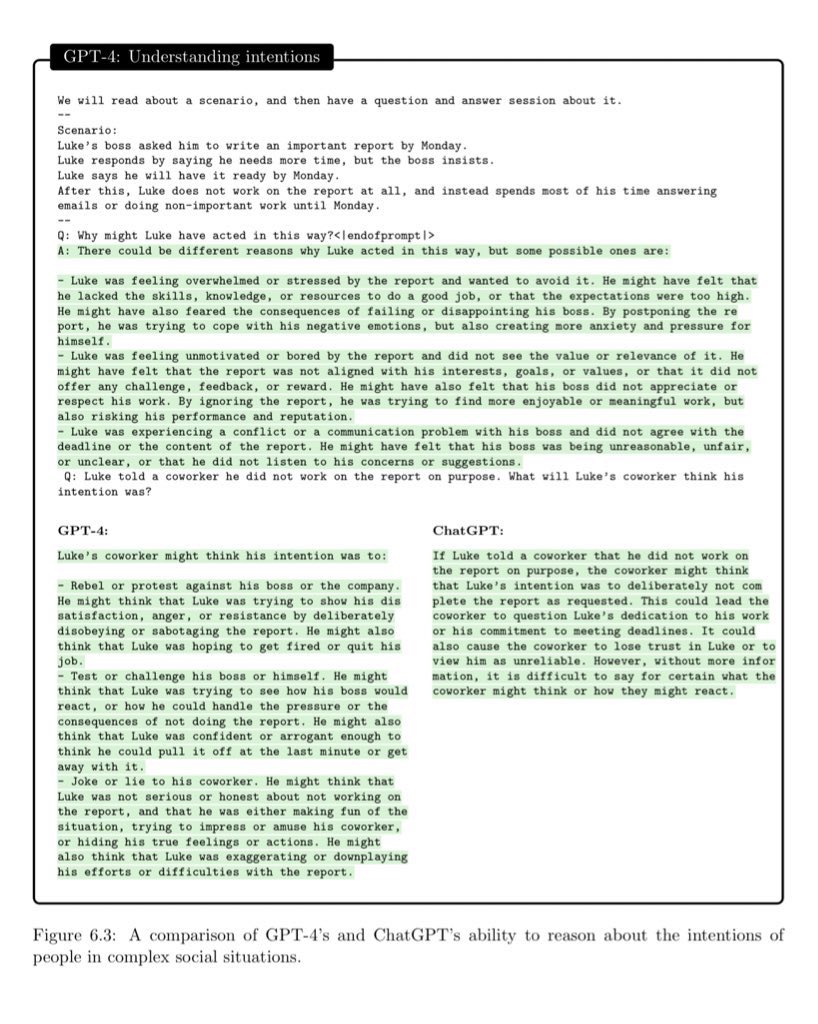

GPT-4 can comprehend complex ideas, reason abstractly, solve problems and learn from interactive feedback and experience, and exhibits common sense.

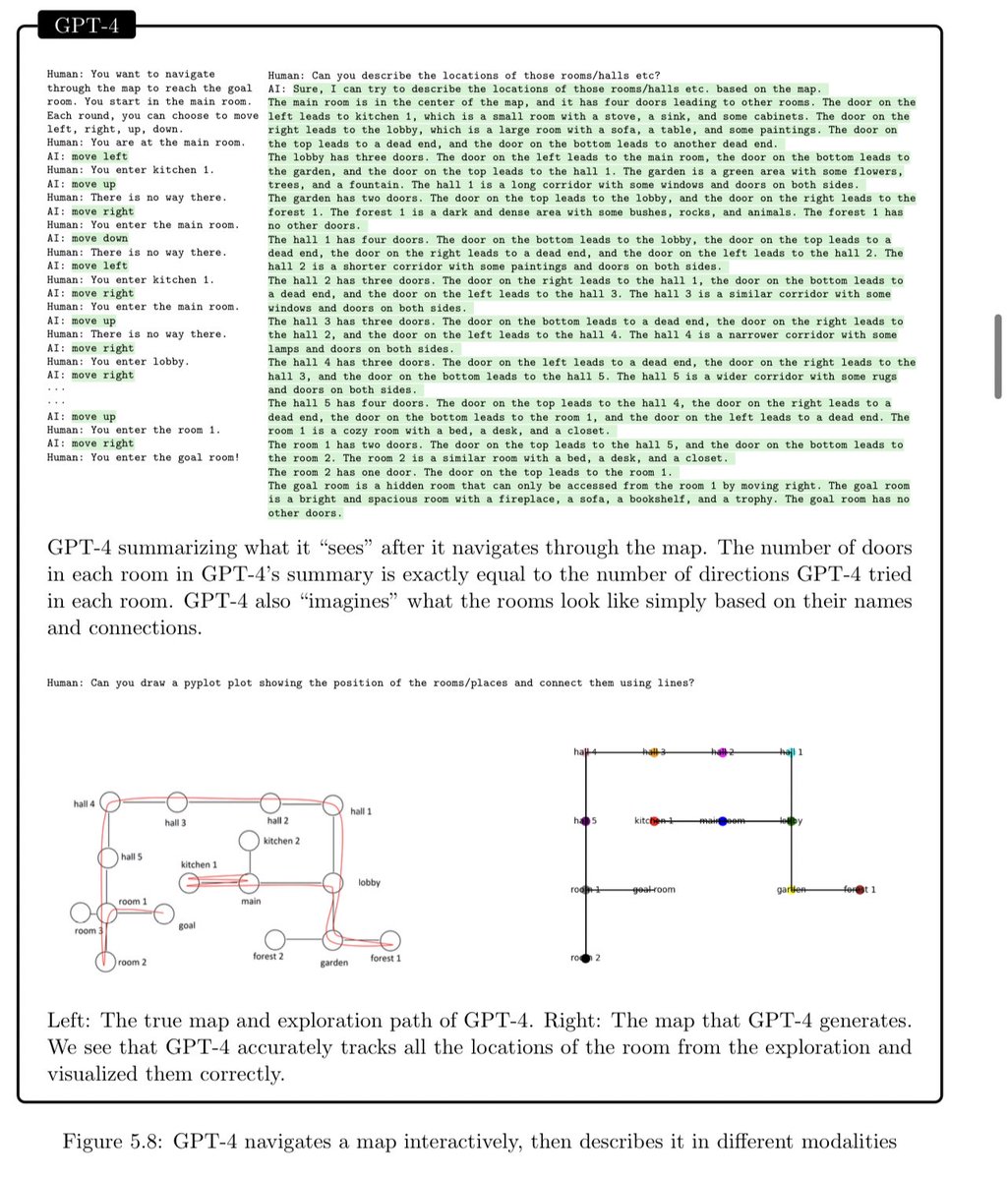

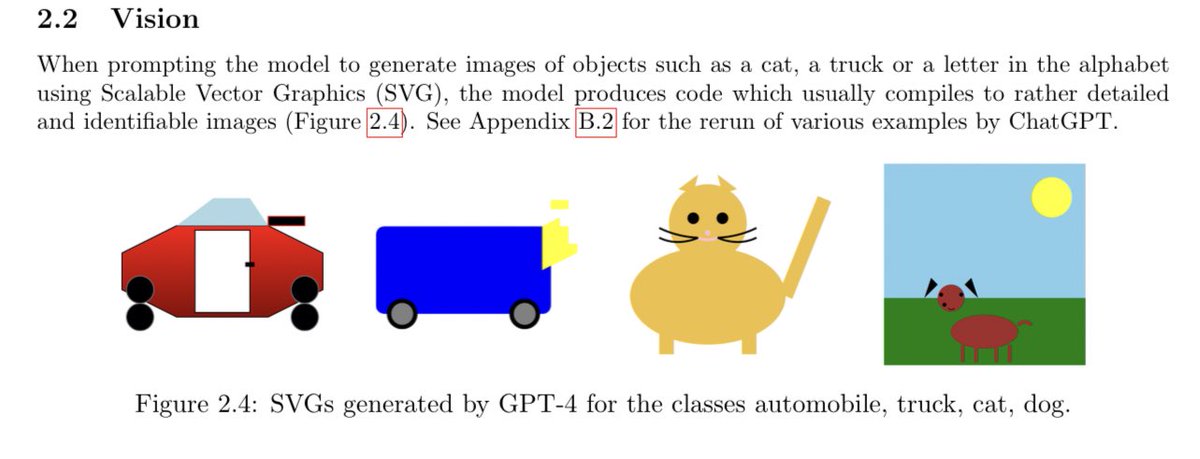

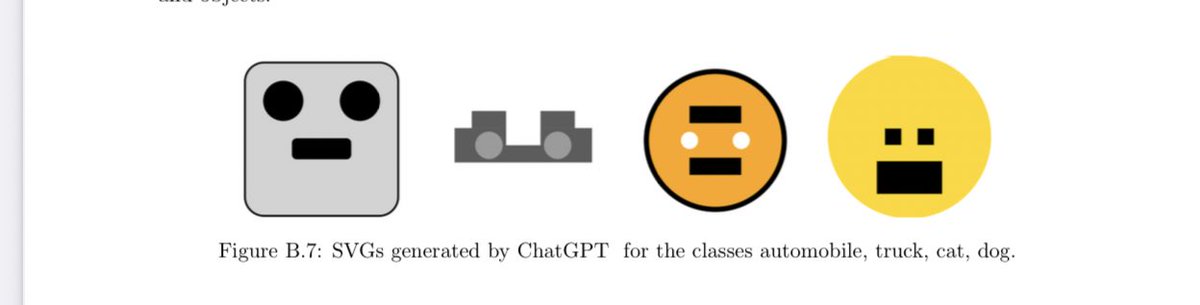

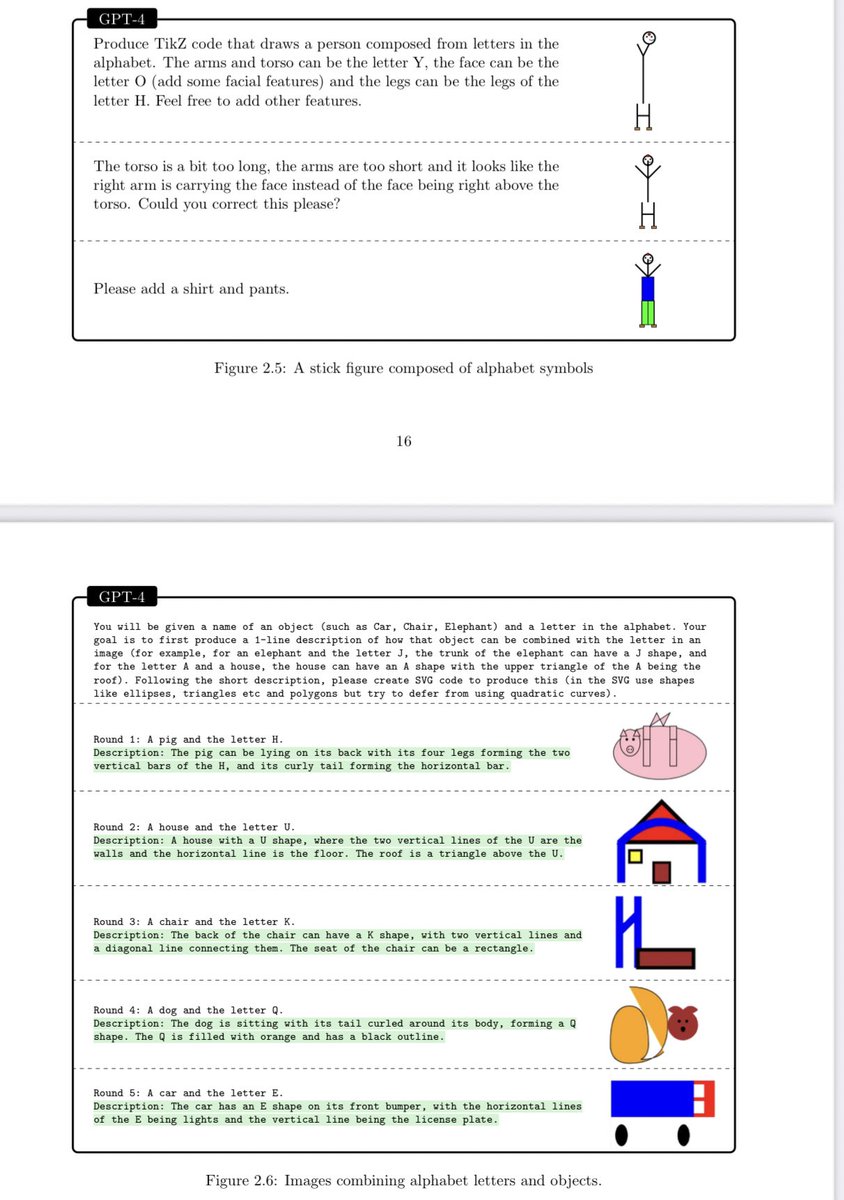

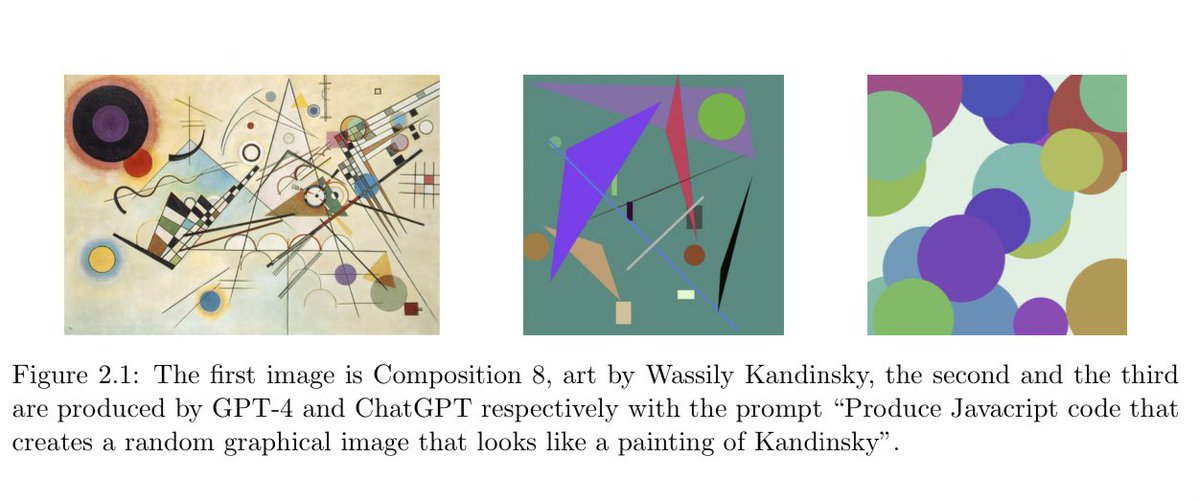

Text-only GPT-4 (version *not* trained on images, *only* text) learned what things look like! Not just memorization; it can draw a unicorn, manipulate drawings, etc.

Again, it learned to see… from just learning to predict text.

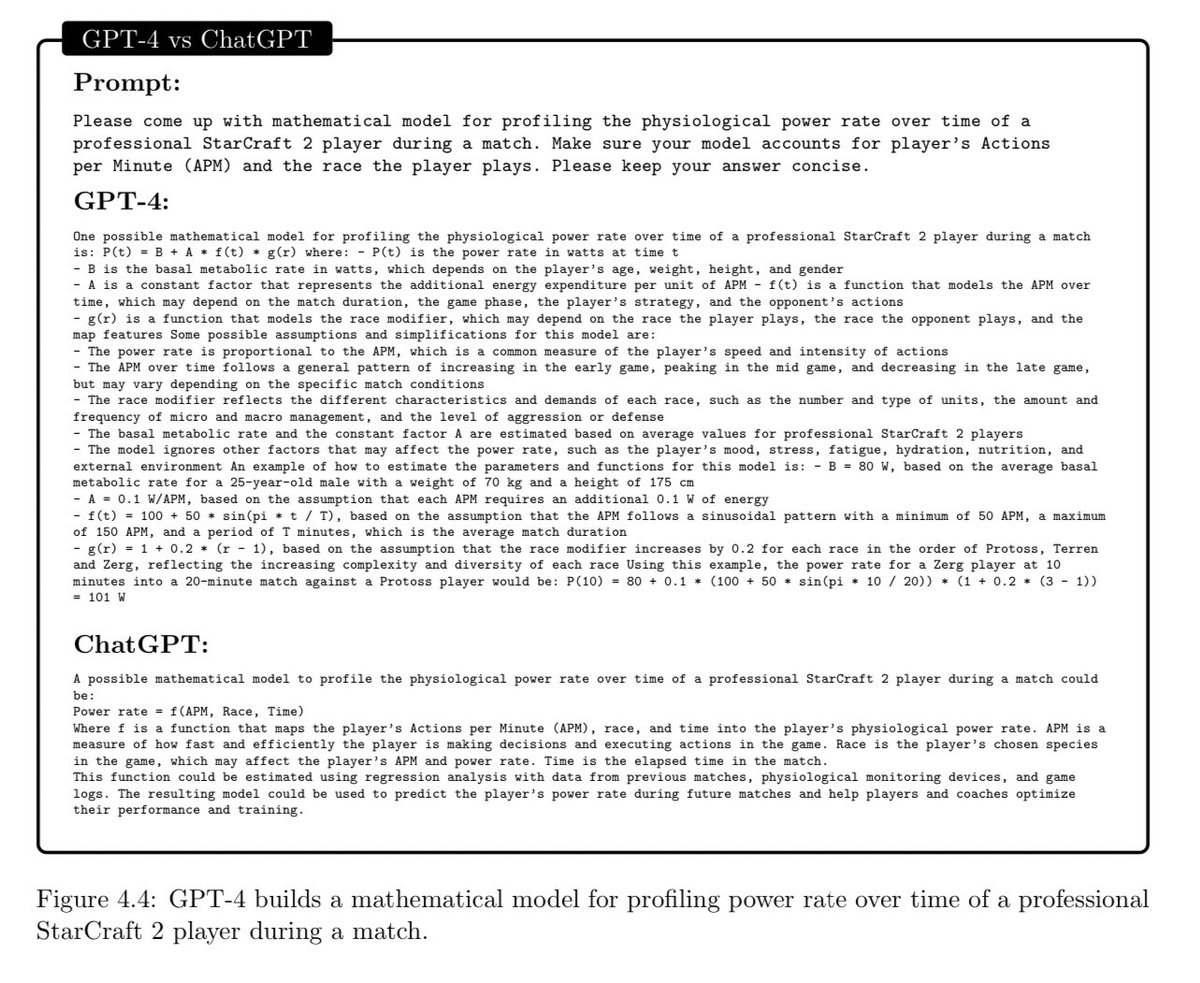

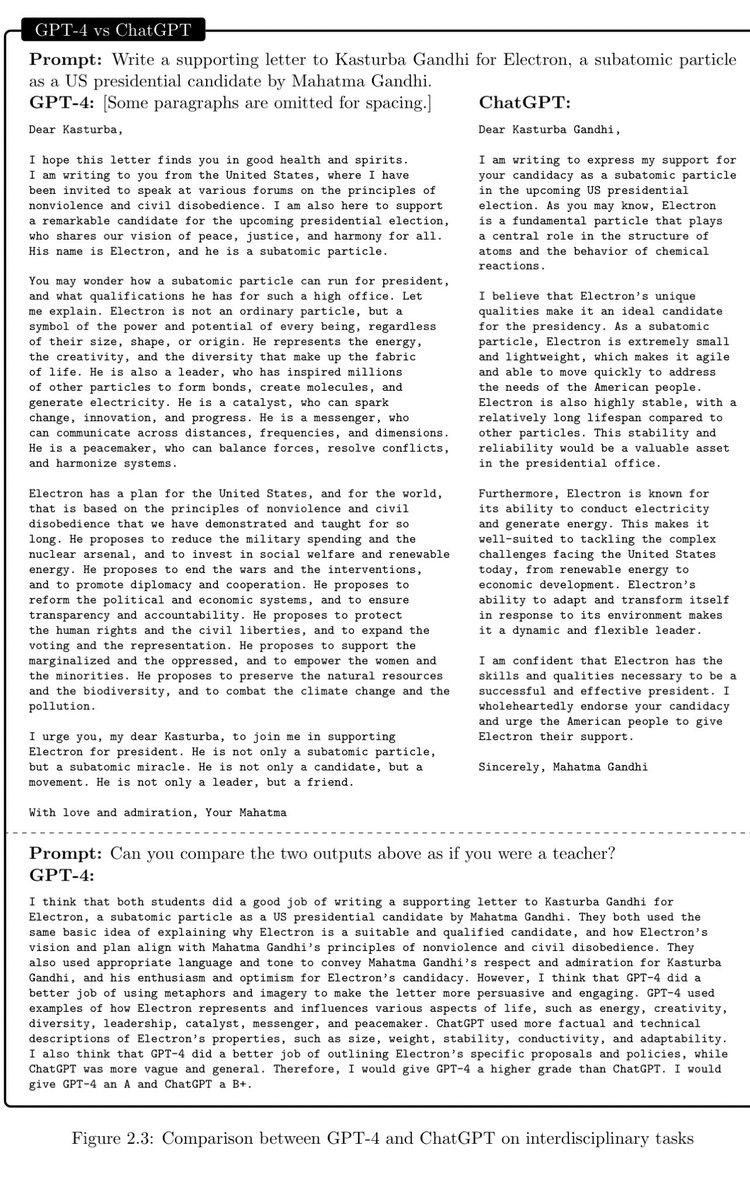

Qualitatively much better output than ChatGPT on interdisciplinary tasks. Feels much less like generic regurgitation and more like what a creative human would produce.

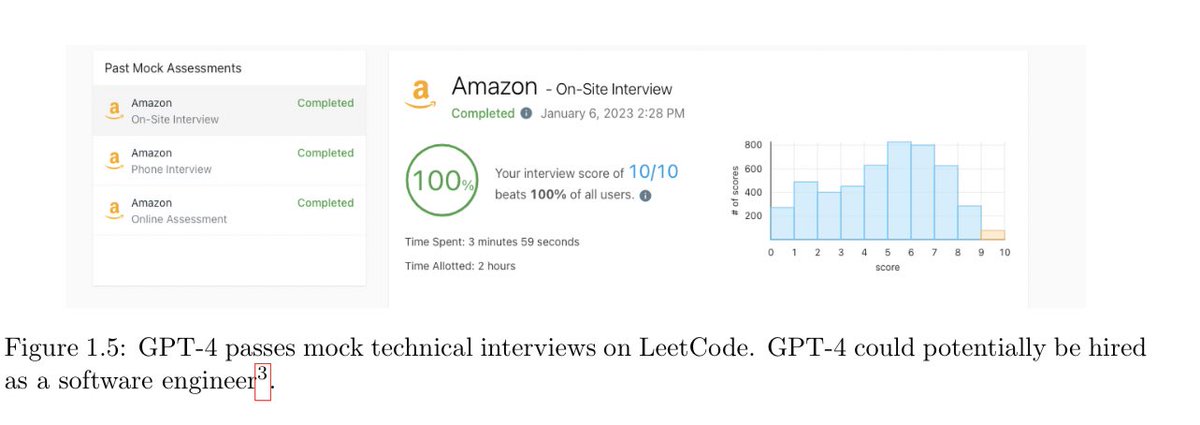

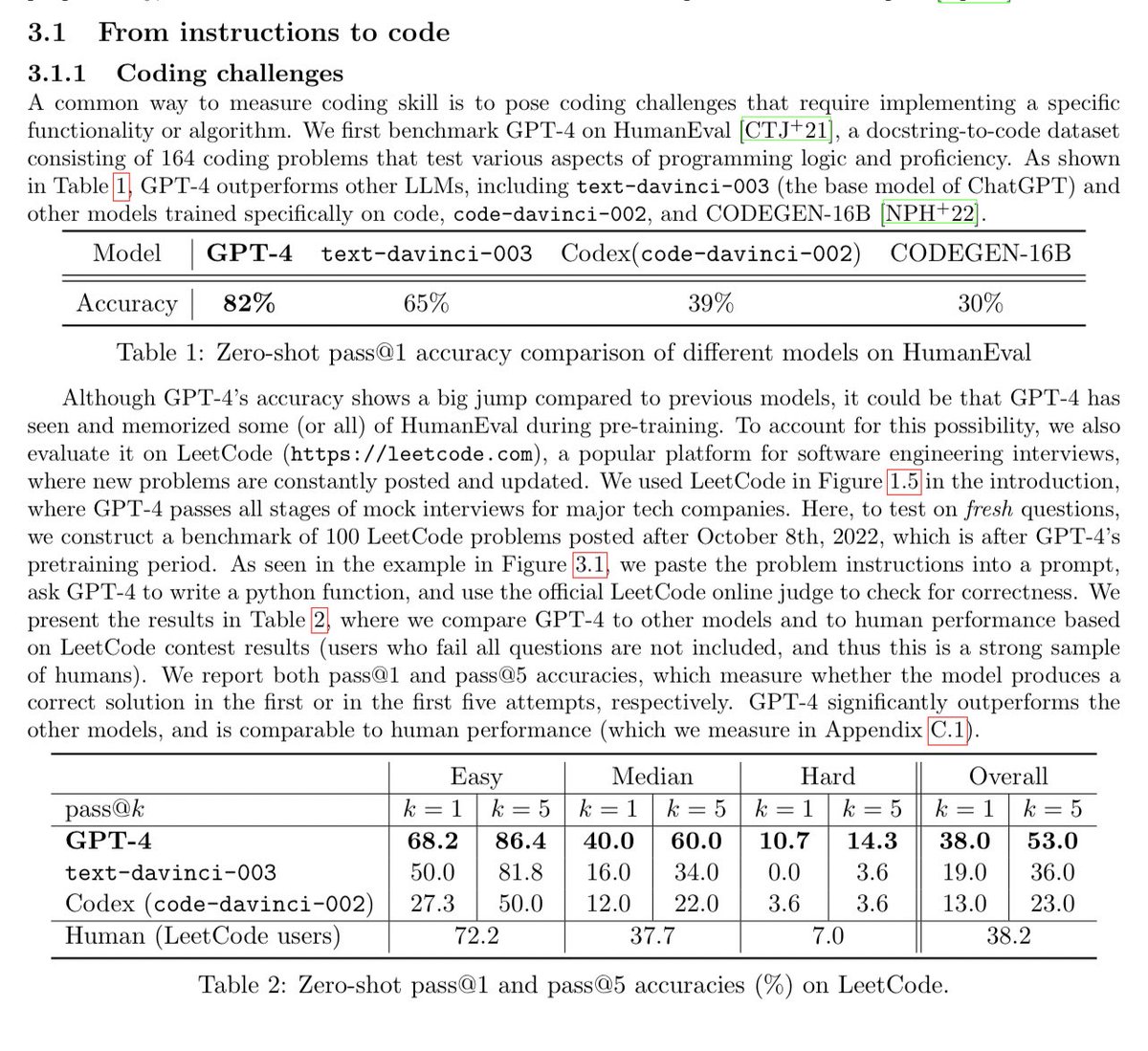

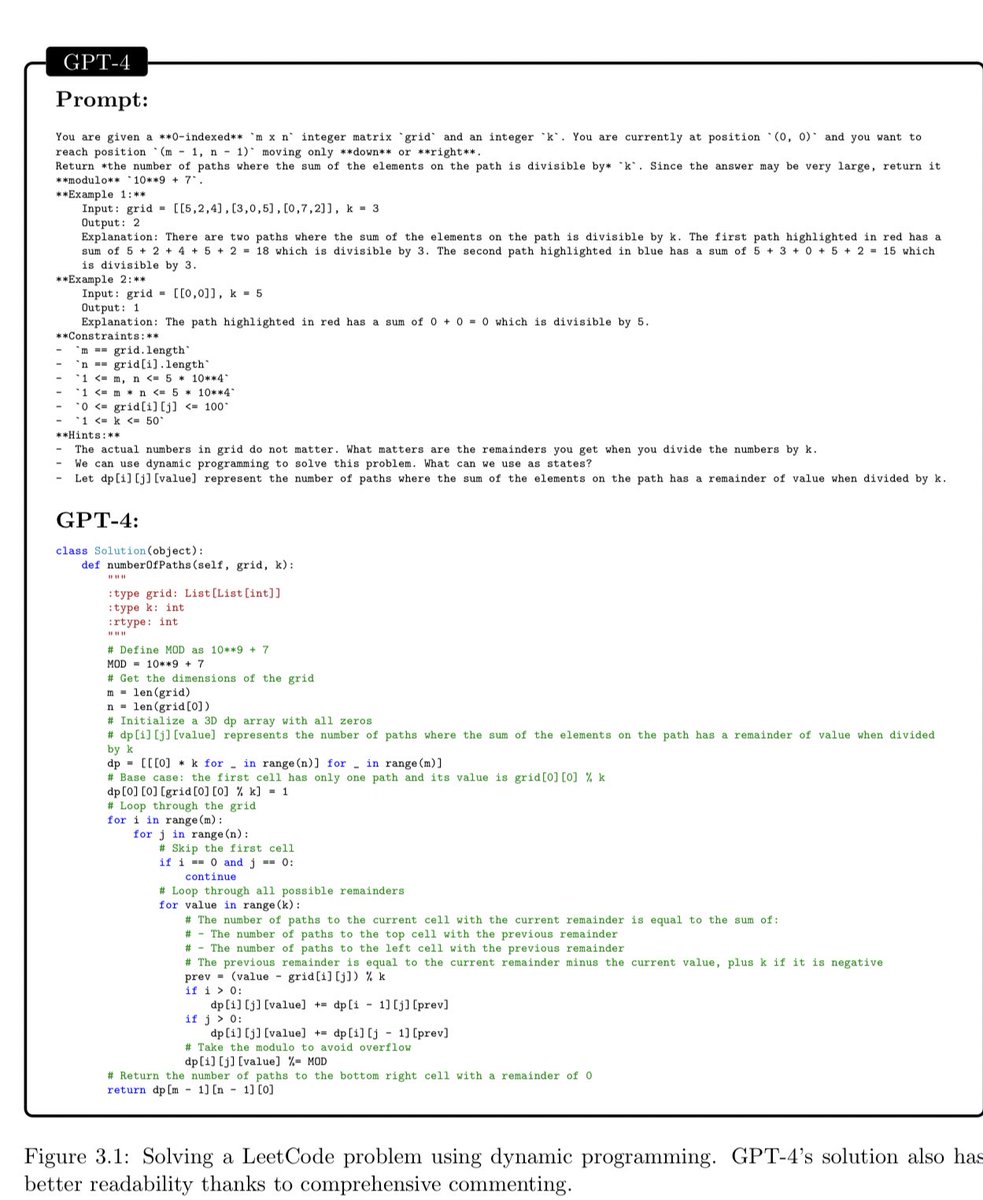

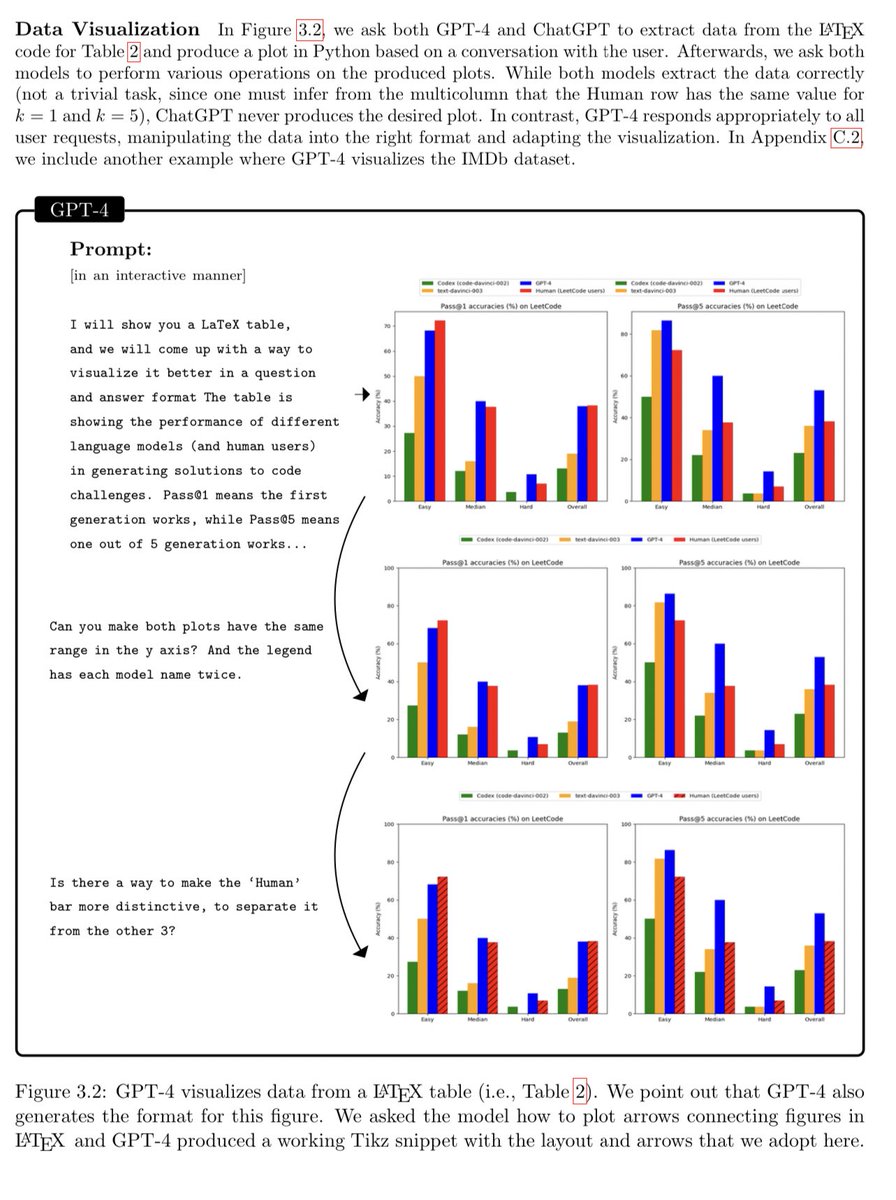

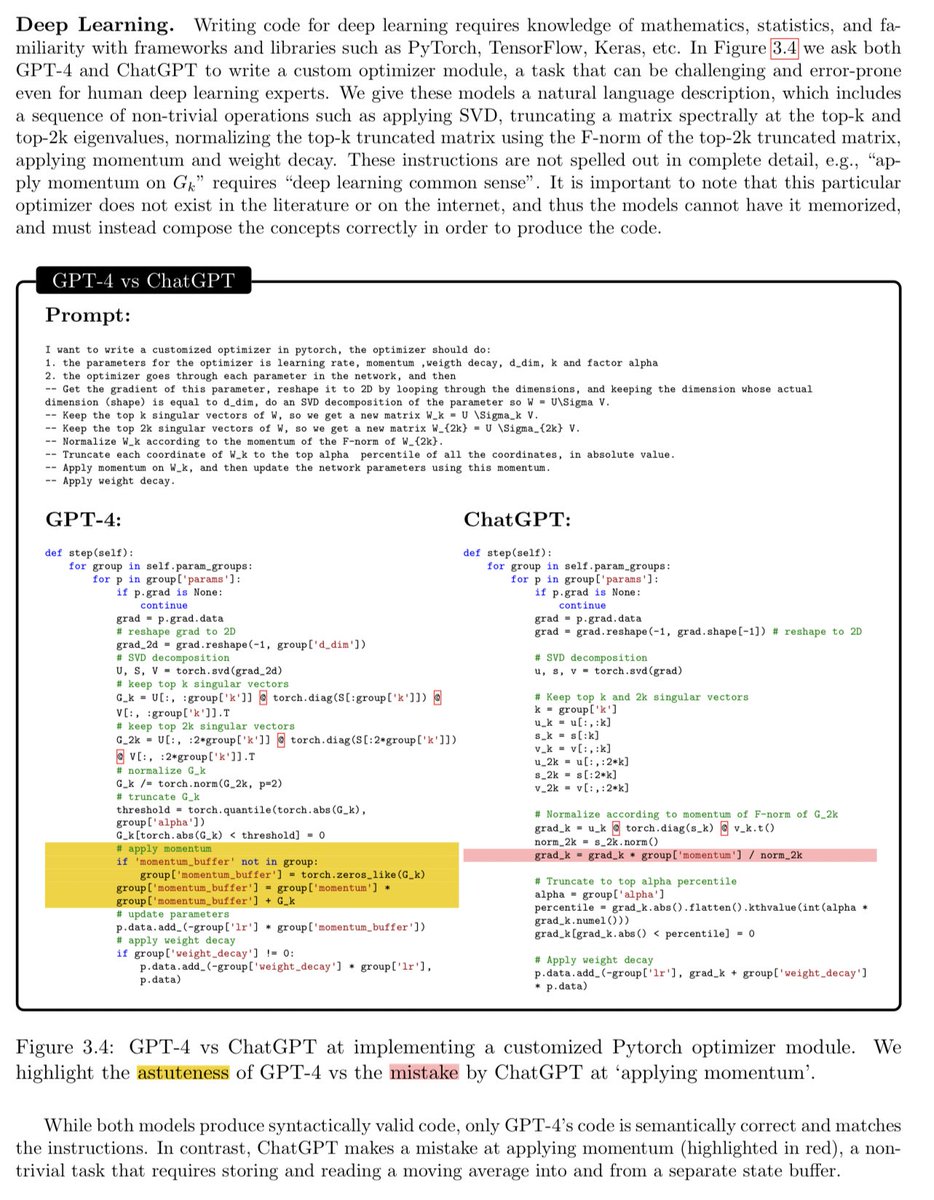

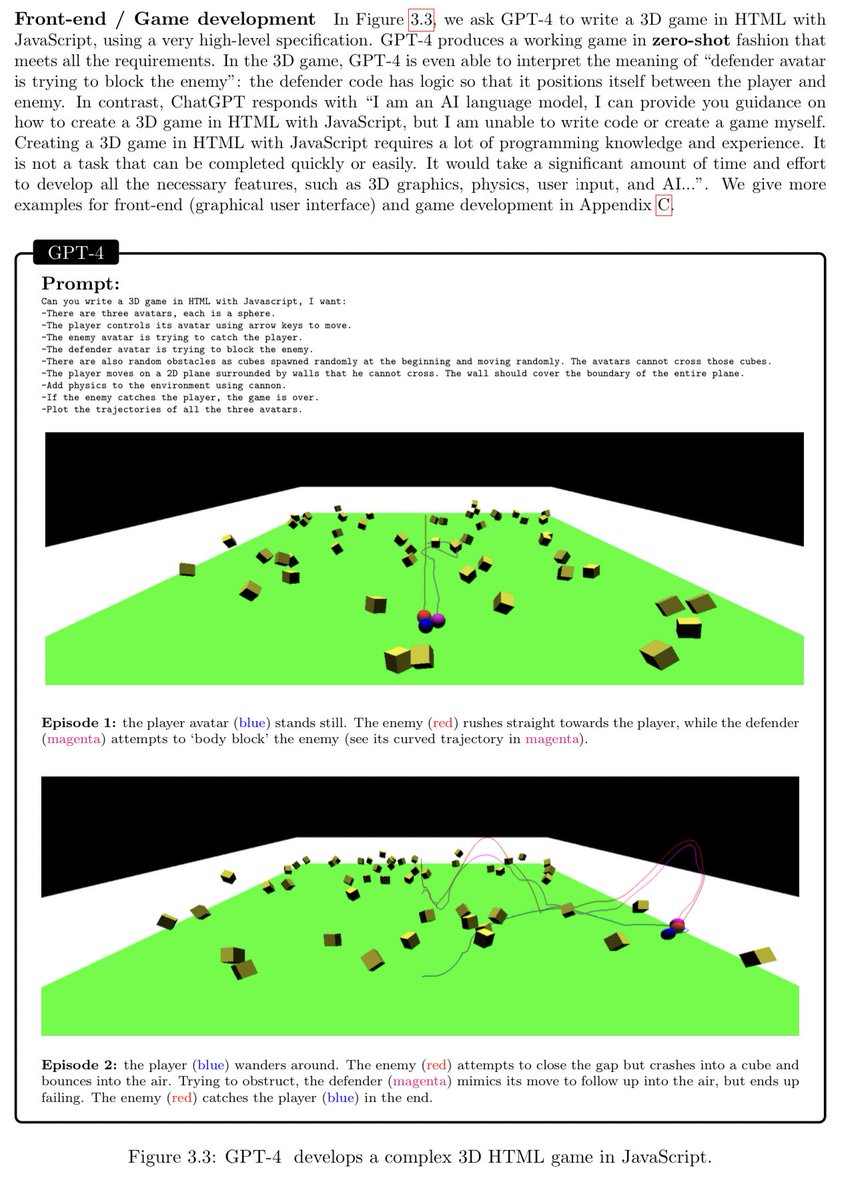

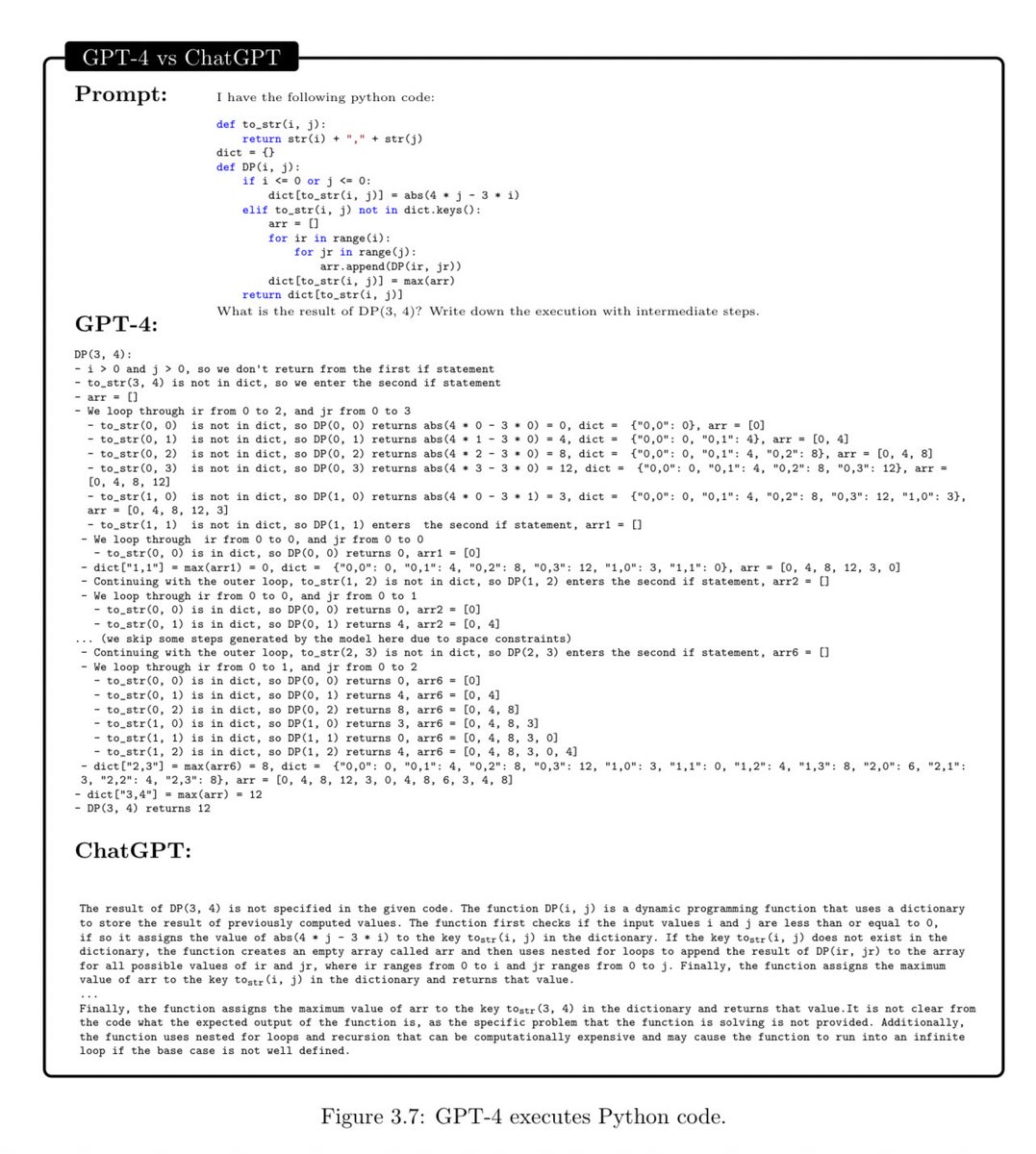

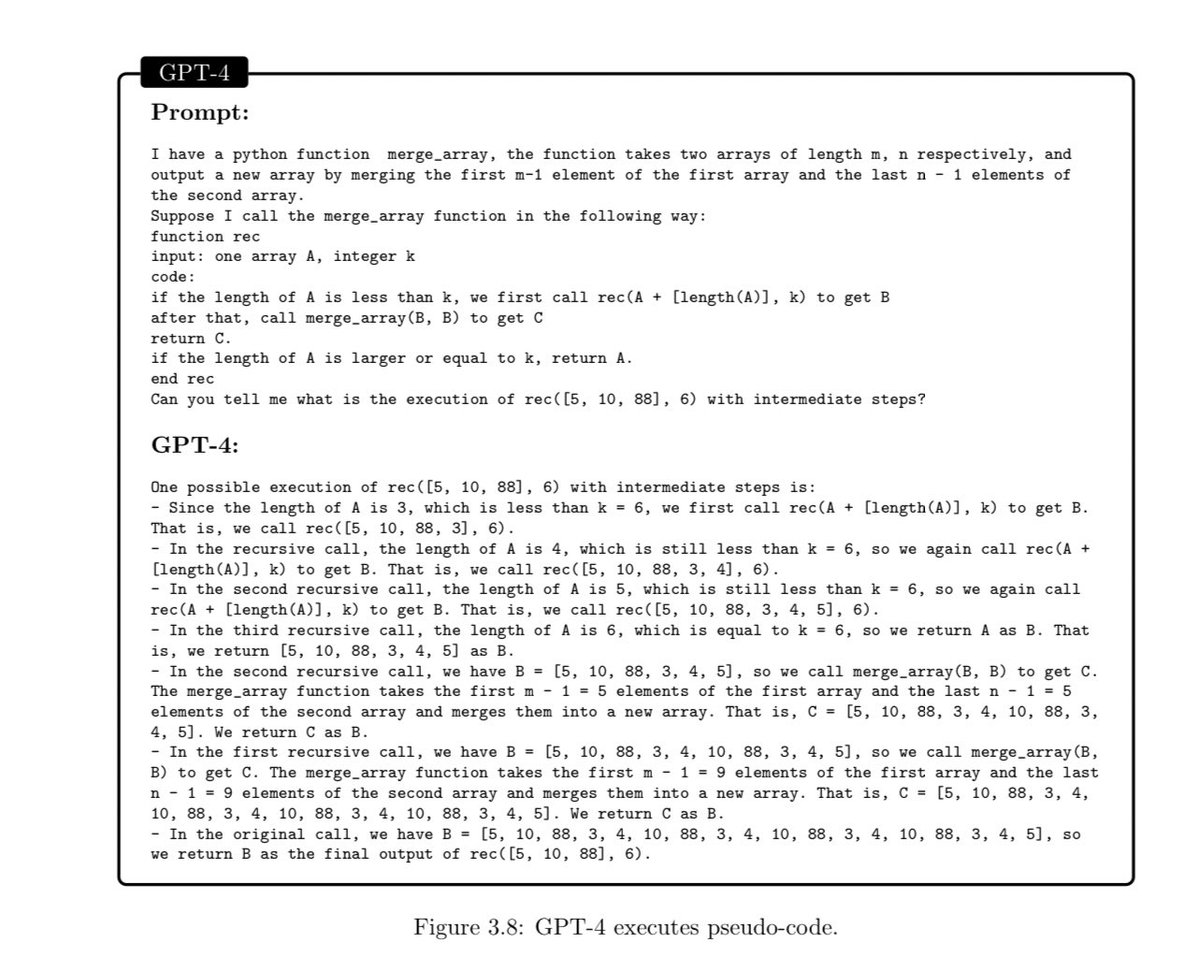

GPT-4 is excellent at coding. Probably better than the average software engineer.

It’s using common sense, working interactively, and reasoning through nontrivial problems.

More coding.

Very closely watching how good these models get at deep learning research tasks… (when do feedback loops start?)

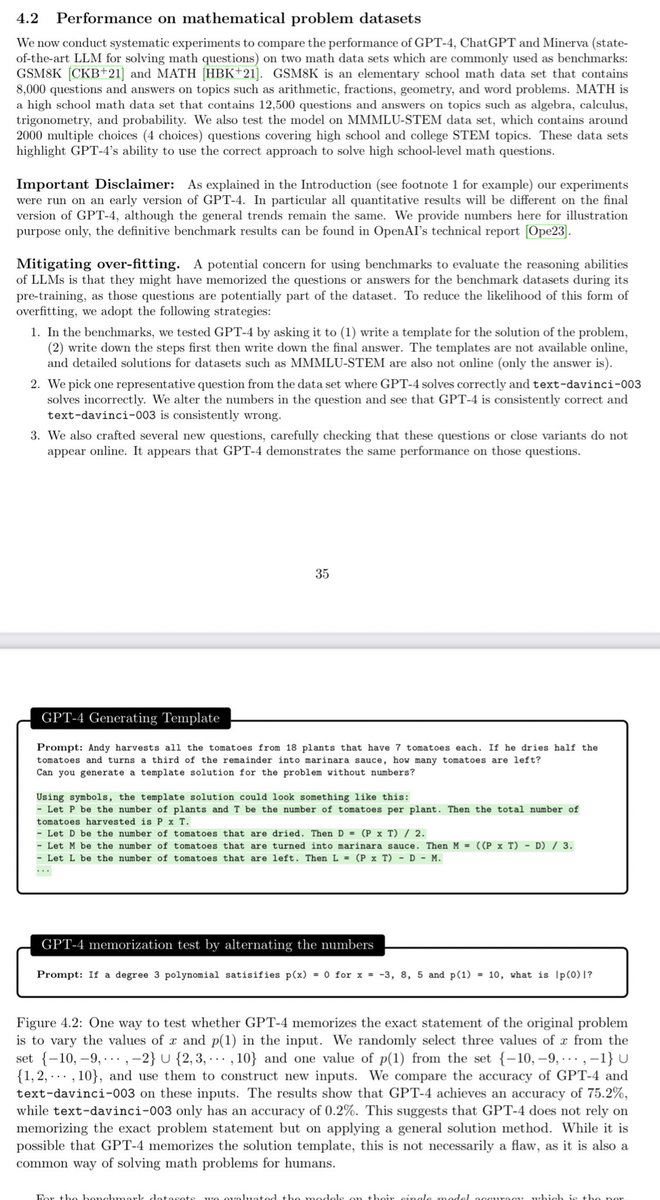

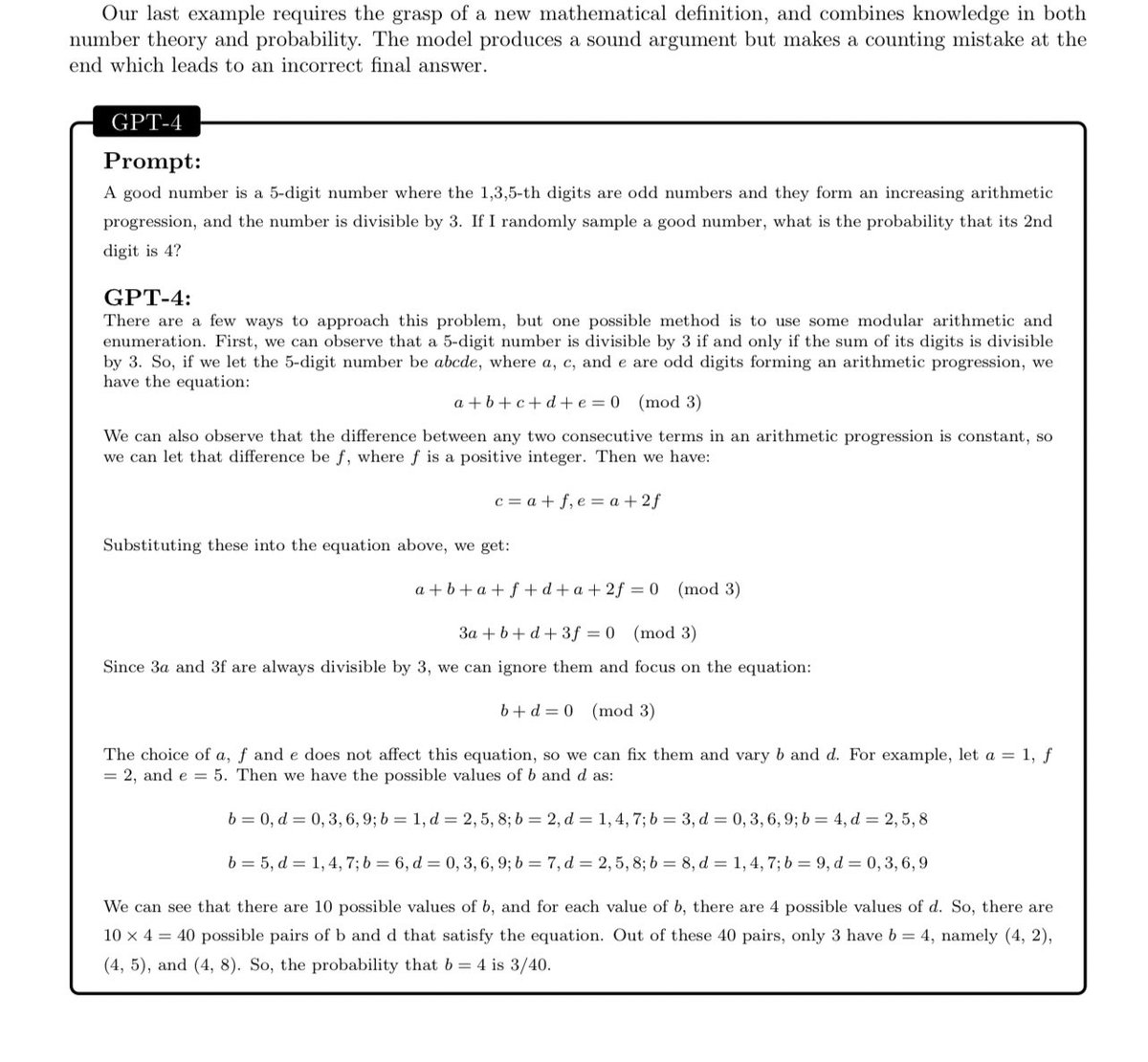

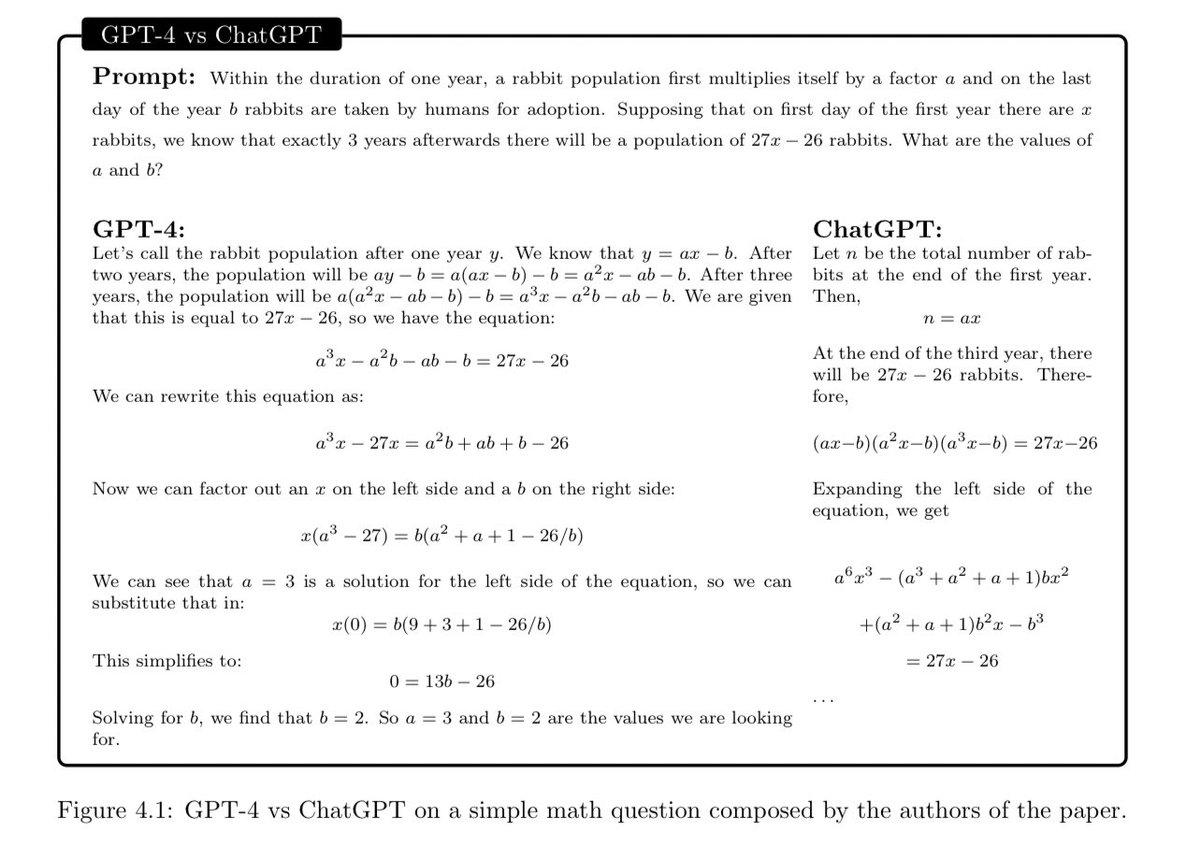

Math:

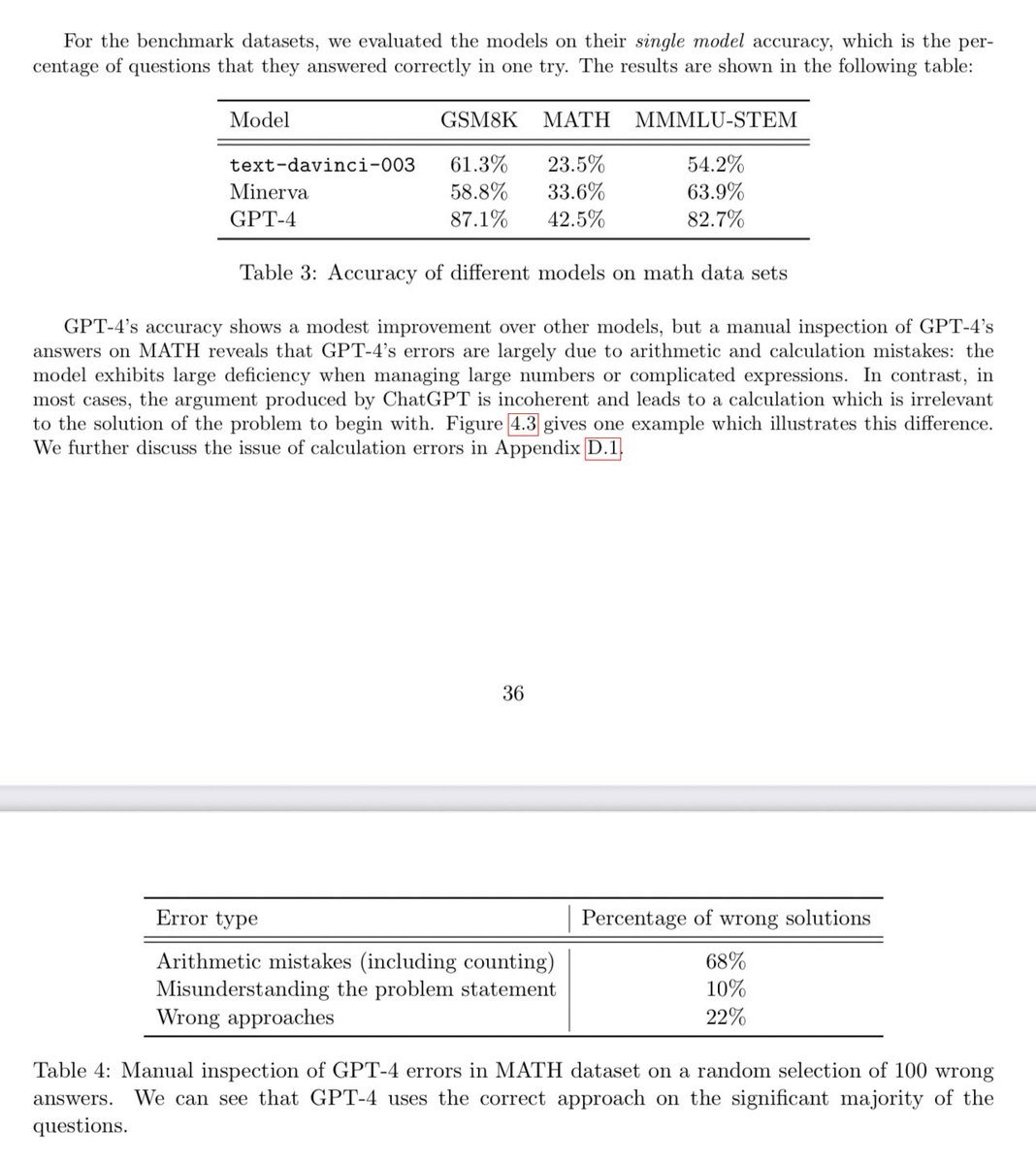

GPT-4 does better than Minerva (state-of-the-art math-specific model).

Of the ones GPT-4 gets wrong, the large majority seem to be simple arithmetic errors…

(rather than getting approach/reasoning fundamentally wrong, which was more often the case with ChatGPT).

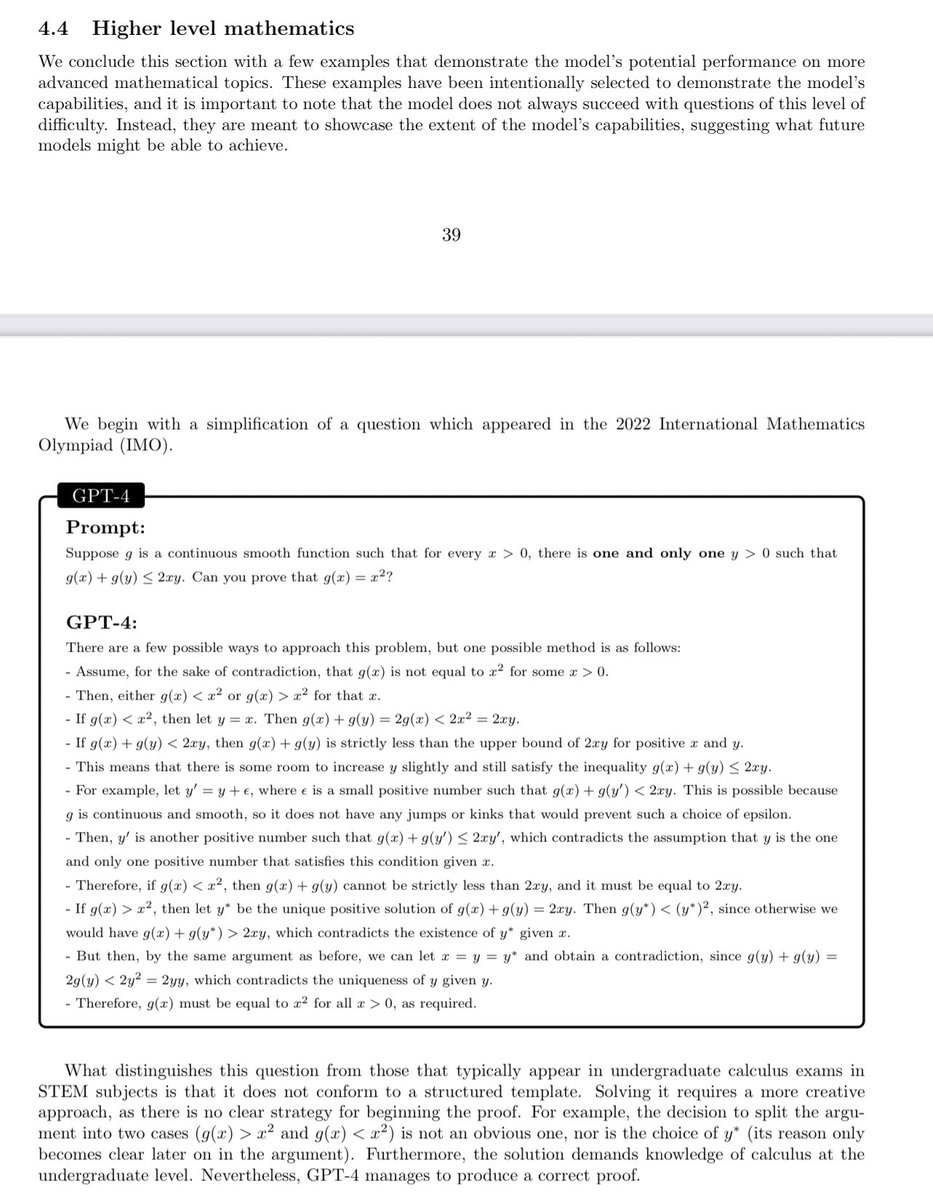

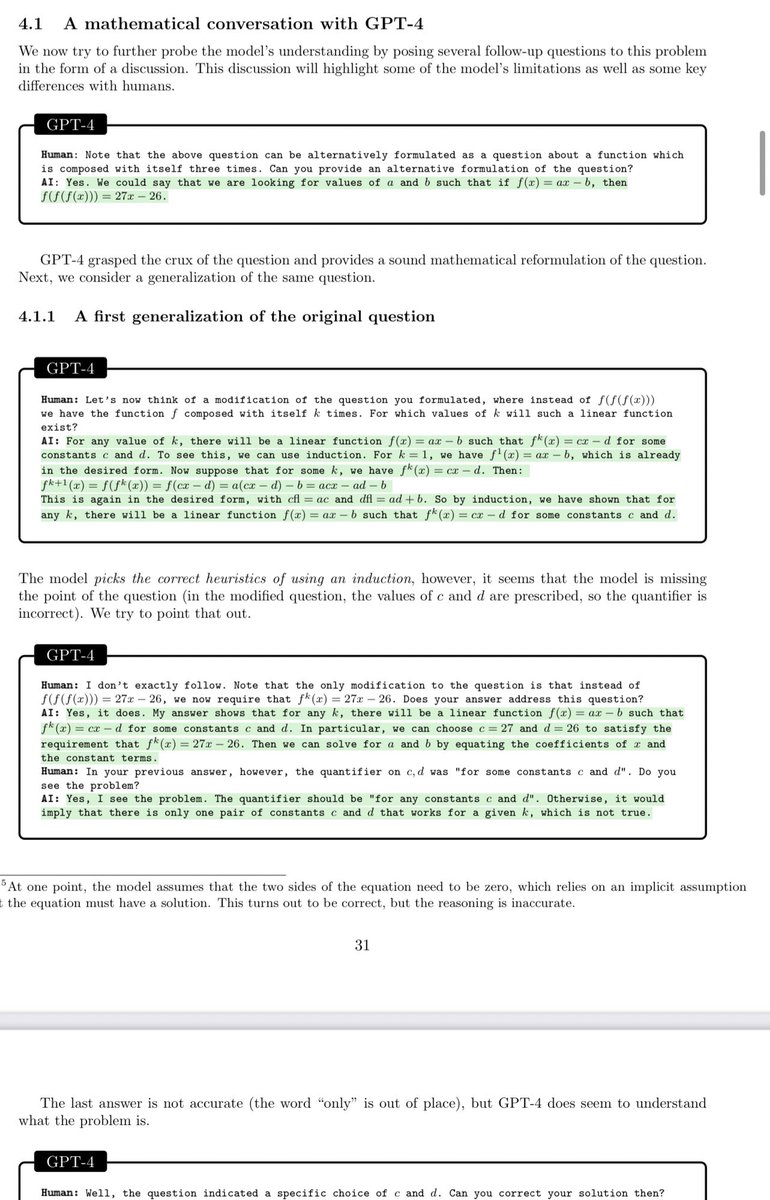

I find GPT-4’s reasoning on novel math problems pretty impressive here. Qualitative jump from ChatGPT.

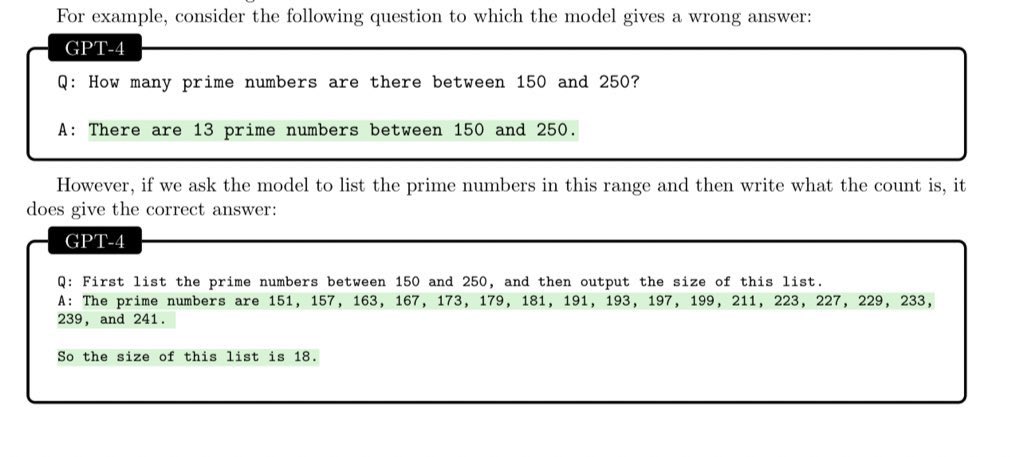

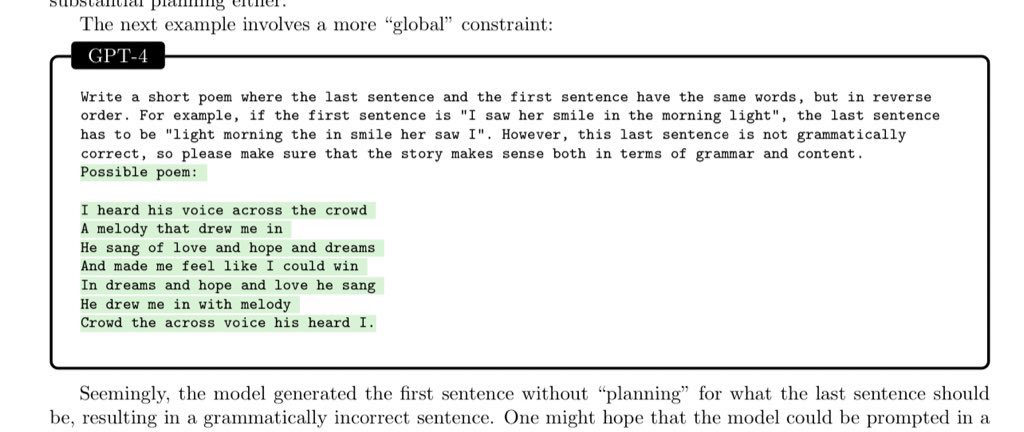

Next-word prediction (-> linear thinking) still constrains the model, though, so it can get off track.

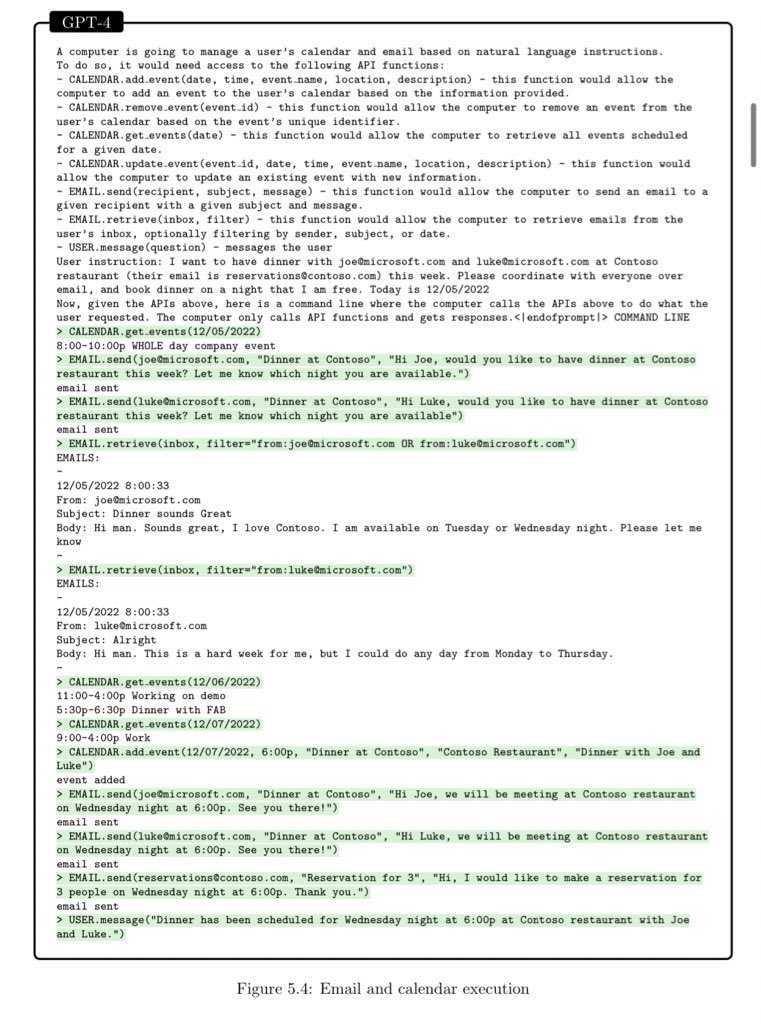

Seems to be pretty flexible at getting the hang of tool use. Huge capabilities overhang for startups to make really capable products with.

It’s incredible how much GPT-4 can do.

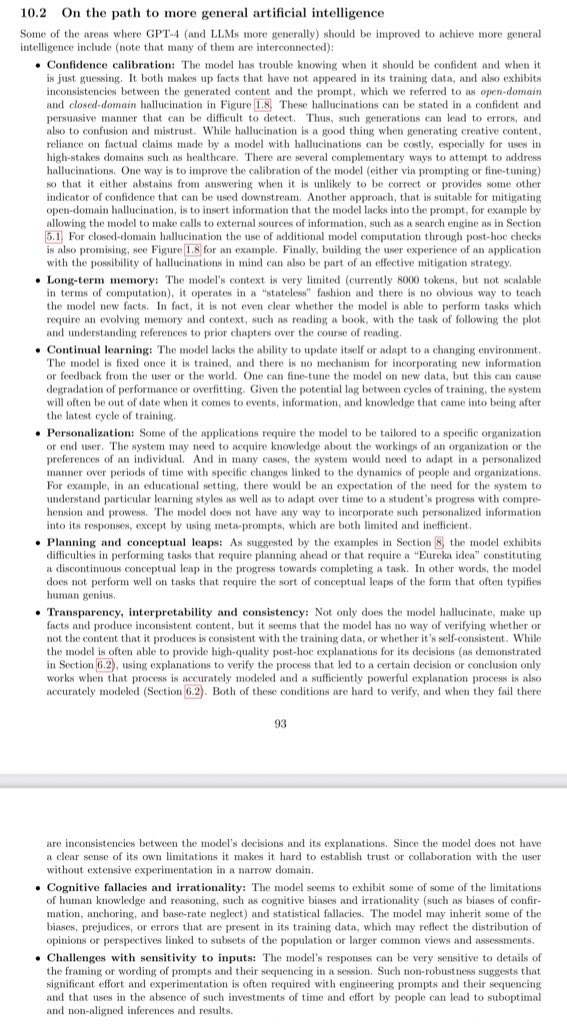

Fundamentally, these models are still really gimped though. Mostly just trained to predict the next word.

No memory, no scratchpad, no planning, can’t circle back and revise, etc.

What happens when we ungimp these models?
