I've seen this thread pop up on my timeline a few times today. I just wanted to say that this claim is a racist dog whistle.

https://twitter.com/yishan/status/1662995686819004417

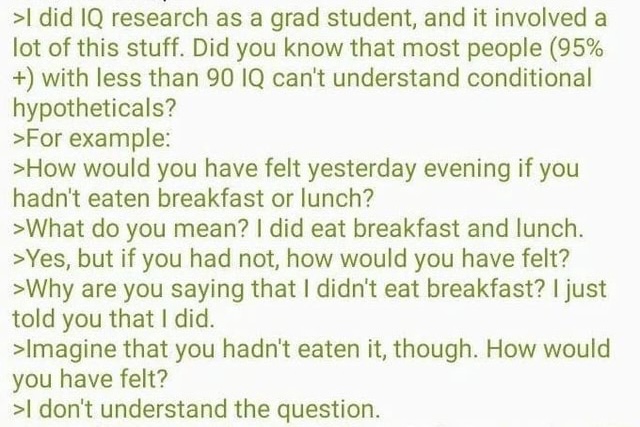

It all starts with this 4chan post where a supposed researcher claims that people with an IQ less than 90 can't understand questions like "How would you have felt yesterday evening if you hadn't eaten breakfast or lunch?"

source: knowyourmeme.com/memes/the-brea…

source: knowyourmeme.com/memes/the-brea…

Later in the original thread, the author uses the same breakfast question found in the post on 4chan.

The breakfast question is frequently linked to memes implying black people have low IQs. Like this George Floyd meme.

Or this one, where they swap a black woman for the much more typical version of the meme which features a white man.

See here, where this person suggests that this black teen isn't capable of understanding hypotheticals.

I don't know anything about this person but he's listed on the Southern Poverty Law Center website as a white nationalist. Source: splcenter.org/fighting-hate/…

I don't know anything about this person but he's listed on the Southern Poverty Law Center website as a white nationalist. Source: splcenter.org/fighting-hate/…

On a personal note, I frequently get people on here asking me the breakfast question in a sad attempt to troll me as a black person on the internet.

I'm not accusing the original author of the thread of racism. Perhaps he is unaware of the origins.

But spreading these kinds of ideas without acknowledging the context is exactly how racist ideas get mainstreamed.

But spreading these kinds of ideas without acknowledging the context is exactly how racist ideas get mainstreamed.

• • •

Missing some Tweet in this thread? You can try to

force a refresh