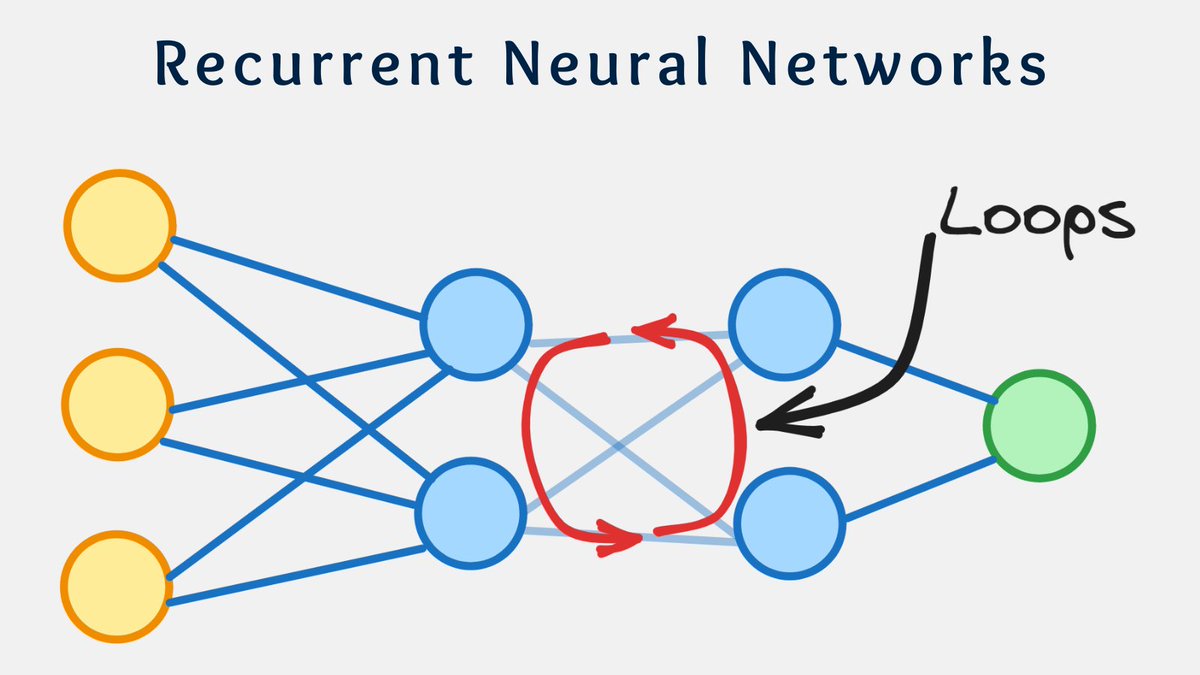

Complex Neural Networks may contain loops and feedback connections.

These allow information to flow back into the network, enabling it to retain and utilize information from earlier steps.

But FNN works differently 🔽

2/7

A Feedforward Neural Network (FNN) is much simpler.

In this network, information moves in one direction only: forward from the input nodes, through any hidden layers, to the output nodes.

This NN does not contain any loops or feedback connections.

The output of one layer serves as the input for the next layer.
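
Here is a minimal sketch of that forward-only flow in plain Python. The helper names (`relu`, `dense`, `forward`) and the toy weights are made up for illustration, and ReLU is just one common choice of activation, not something this thread prescribes.

```python
def relu(values):
    # Element-wise ReLU: negative values become 0.
    return [max(0.0, v) for v in values]

def dense(inputs, weights, biases):
    # weights[j] holds the incoming weights of output neuron j.
    return [
        sum(w * x for w, x in zip(row, inputs)) + b
        for row, b in zip(weights, biases)
    ]

def forward(inputs, layers):
    # Each layer's output becomes the next layer's input; nothing flows back.
    activations = inputs
    for weights, biases in layers[:-1]:
        activations = relu(dense(activations, weights, biases))
    weights, biases = layers[-1]
    return dense(activations, weights, biases)  # no activation on the output layer

# Hypothetical toy weights: 2 inputs -> 2 hidden neurons -> 1 output.
layers = [
    ([[1.0, -1.0], [0.5, 0.5]], [0.0, 0.0]),
    ([[1.0, 2.0]], [0.1]),
]
output = forward([2.0, 1.0], layers)
print(output)
```

The loop in `forward` visits each layer exactly once, which is the whole "feedforward" idea.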

3/7

What are the advantages?

- FNNs are easy to understand since their structure is straightforward.

- Scalability - The number of layers can be increased or decreased.

- Simpler structure means easier interpretability.

- Widely used.
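
To illustrate the scalability point, here is a rough sketch where the depth of the network is just the length of a list. `build_network` is a hypothetical helper, and the random initial weights are placeholders, not a recommended initialization.

```python
import random

def build_network(layer_sizes, seed=0):
    # layer_sizes like [4, 8, 1] means 4 inputs, 8 hidden neurons, 1 output.
    rng = random.Random(seed)
    layers = []
    for n_in, n_out in zip(layer_sizes, layer_sizes[1:]):
        weights = [[rng.uniform(-1, 1) for _ in range(n_in)] for _ in range(n_out)]
        biases = [0.0] * n_out
        layers.append((weights, biases))
    return layers

# Adding or removing layers only changes the list of sizes.
shallow = build_network([4, 8, 1])
deep = build_network([4, 8, 8, 8, 1])
print(len(shallow), len(deep))
```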

4/7

What are the disadvantages?

- They struggle to capture relationships across inputs, since every input is handled independently.

- Since they do not contain loops and feedback, they are not the best for modeling sequences.
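
A toy comparison can make the sequence point concrete. Both functions below are simplified one-number "networks" with made-up weights (`w`, `u`, `b` are assumptions for this sketch): the feedforward step sees only the current input, while the recurrent step also receives the previous state.

```python
def feedforward_step(x, w=0.5, b=1.0):
    # Output depends only on the current input.
    return w * x + b

def recurrent_step(x, state, w=0.5, u=0.5, b=1.0):
    # Output also depends on the state carried over from earlier inputs.
    return w * x + u * state + b

def run_recurrent(sequence):
    state = 0.0
    for x in sequence:
        state = recurrent_step(x, state)
    return state

sequence = [1.0, 2.0, 3.0]
reversed_sequence = sequence[::-1]

# Feedforward: the same set of outputs, whatever the order of the inputs.
ff_forward = [feedforward_step(x) for x in sequence]
ff_reversed = [feedforward_step(x) for x in reversed_sequence]
print(sorted(ff_forward) == sorted(ff_reversed))

# Recurrent: the final state changes when the order changes.
print(run_recurrent(sequence), run_recurrent(reversed_sequence))
```

The feedforward version has no way to tell the two orderings apart, which is why it is a weak fit for sequence data.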

5/7

That's it for today.

I hope you've found this thread helpful.

Like/Retweet the first tweet below for support and follow @levikul09 for more Data Science threads.

Thanks 😉

6/7

https://twitter.com/levikul09/status/1669643697347264512

You should also join our newsletter, DSBoost.

We share:

• Interviews

• Podcast notes

• Learning resources

• Interesting collections of content

dsboost.substack.com

7/7
