AGI must be decentralized and cheap to be accessible for all

Yet scaling laws in data and energy mean it will take trillions of dollars, leading to centralized control

The solution is a total hardware revolution

Here's the Thermodynamic Computing Explainer 🧵 w/@Extropic_AI

I've spent the last few months getting to know @BasedBeffJezos, @trevormccrt1 and his team at @Extropic_AI.

What they're building is the Transistor of the AI era - the most natural physical embodiment of probabilistic learning.

To appreciate how, we need to dive deep:

The essence of machine learning is to accurately model the statistical distributions governing natural phenomena

You start with a guessed distribution, and slowly shape it to a target distribution - reality - through repeated observations.

Each sample helps better fit reality
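
A minimal sketch of that loop, assuming the simplest possible case: the model is a single Gaussian, and the "target" is a made-up distribution with mean 3.0 and standard deviation 0.5. The function name and the numbers are illustrative, not from any particular library.

```python
import random

def fit_gaussian_online(observations, mean=0.0, var=1.0, prior_weight=1.0):
    """Refine a guessed Gaussian (mean, var) one observation at a time.

    Uses Welford's running update; the initial guess counts as
    `prior_weight` pseudo-observations.
    """
    n = prior_weight
    m2 = var * n  # running sum of squared deviations
    for x in observations:
        n += 1
        delta = x - mean
        mean += delta / n
        m2 += delta * (x - mean)
    return mean, m2 / n

rng = random.Random(0)
# Hypothetical "reality": mean 3.0, standard deviation 0.5
observations = [rng.gauss(3.0, 0.5) for _ in range(5000)]

fitted_mean, fitted_var = fit_gaussian_online(observations)
print(fitted_mean, fitted_var)  # drifts from the guess (0, 1) toward (3, 0.25)
```

The starting guess is wrong on both counts, and every new sample pulls it a little closer to the target.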

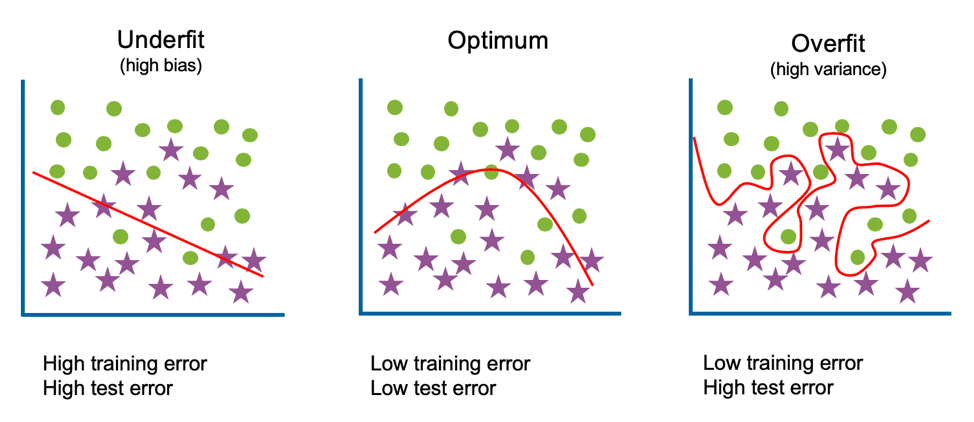

The goal is to accurately predict what the underlying phenomena will be, even without having observed that particular case before.

Tuning the model on training and testing data means steering between over-fitting and under-fitting until the model is actually useful

A good model knows hot dog or no hot dog

The different ways of ingesting data, making guesses, rejecting them based on criteria, and updating the guess-making process account for the entire panoply of different machine learning models today

It's a complete zoo with a very common flaw - the over-reliance on Gaussians

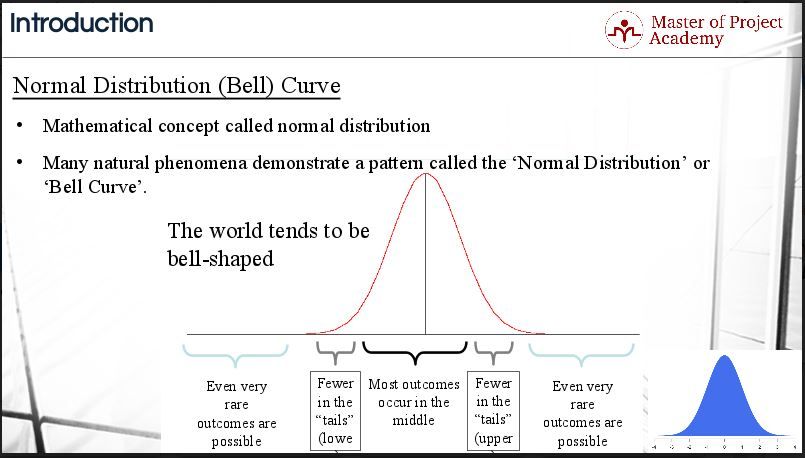

A Gaussian is a particular type of statistical distribution that is like the vanilla ice-cream of probabilities.

It's the default guess for how something behaves, and comes up often due to the Central Limit Theorem.

This classic bell-curve is ubiquitous in nature

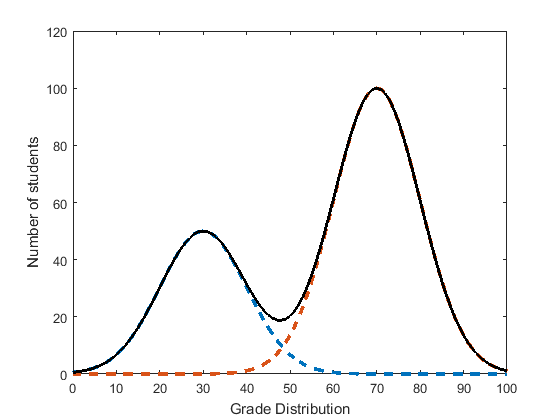

The issue is that many complex phenomena are fundamentally not Gaussian-shaped - they might have uneven tails, skew to one side, or have more than one 'bump'.

The simplest example is needing two Gaussians to fit the graph below, each with its own mean and variance.

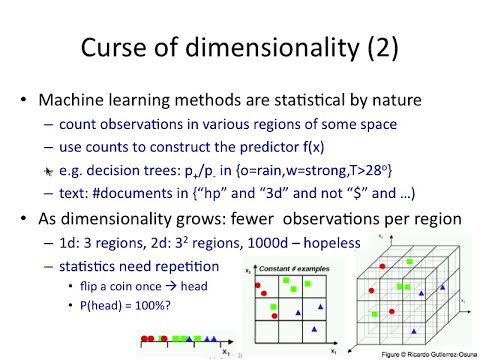
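
Here is a rough sketch of the two-Gaussian fit, using plain expectation-maximization on made-up data with bumps at -2 and 3. The data, the function names, and the choice of EM are assumptions for illustration; the thread does not say how the curve in the image was fitted.

```python
import math
import random

def normal_pdf(x, mean, var):
    return math.exp(-(x - mean) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)

def fit_two_gaussians(data, iters=50):
    """Fit a 1-D mixture of two Gaussians with expectation-maximization."""
    # Crude initial guess: one bump at each extreme of the data.
    means = [min(data), max(data)]
    variances = [1.0, 1.0]
    weights = [0.5, 0.5]
    for _ in range(iters):
        # E-step: how responsible is bump 0 for each point?
        resp = []
        for x in data:
            p0 = weights[0] * normal_pdf(x, means[0], variances[0])
            p1 = weights[1] * normal_pdf(x, means[1], variances[1])
            resp.append(p0 / (p0 + p1))
        # M-step: re-estimate each bump from its weighted points.
        for k in range(2):
            r = resp if k == 0 else [1.0 - v for v in resp]
            total = sum(r)
            means[k] = sum(ri * x for ri, x in zip(r, data)) / total
            spread = sum(ri * (x - means[k]) ** 2 for ri, x in zip(r, data)) / total
            variances[k] = max(spread, 1e-6)  # guard against collapse
            weights[k] = total / len(data)
    return weights, means, variances

rng = random.Random(1)
# Hypothetical two-bump data set
data = [rng.gauss(-2.0, 0.5) for _ in range(1000)]
data += [rng.gauss(3.0, 1.0) for _ in range(1000)]

single_mean = sum(data) / len(data)  # a lone Gaussian centers between the bumps
weights, means, variances = fit_two_gaussians(data)
print(single_mean, means)
```

A single Gaussian puts its peak near 0.5, where there is almost no data. The mixture recovers both bumps, at the cost of tracking a mean, a variance, and a weight for each one.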

Doubling the number of Gaussians needed to fit the curve also doubles the parameters, but this means the number of possible combinations of parameters is squared

Therefore the size of data needed to learn the underlying distribution grows much faster than the number of parameters
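
The squaring is easy to check with a toy count. Assume each parameter is only tried at 10 candidate values, and each Gaussian has two parameters (mean and variance). Both numbers are arbitrary choices for illustration.

```python
def parameter_combinations(n_gaussians, grid_points=10, params_per_gaussian=2):
    """Count joint settings if every parameter is tried at `grid_points` values."""
    n_params = n_gaussians * params_per_gaussian
    return n_params, grid_points ** n_params

for k in (1, 2, 4):
    n_params, combos = parameter_combinations(k)
    print(f"{k} Gaussians: {n_params} parameters, {combos:,} combinations")
```

One Gaussian gives 2 parameters and 100 combinations. Two Gaussians give 4 parameters and 10,000 combinations, which is 100 squared. Four Gaussians give 8 parameters and 100,000,000 combinations.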

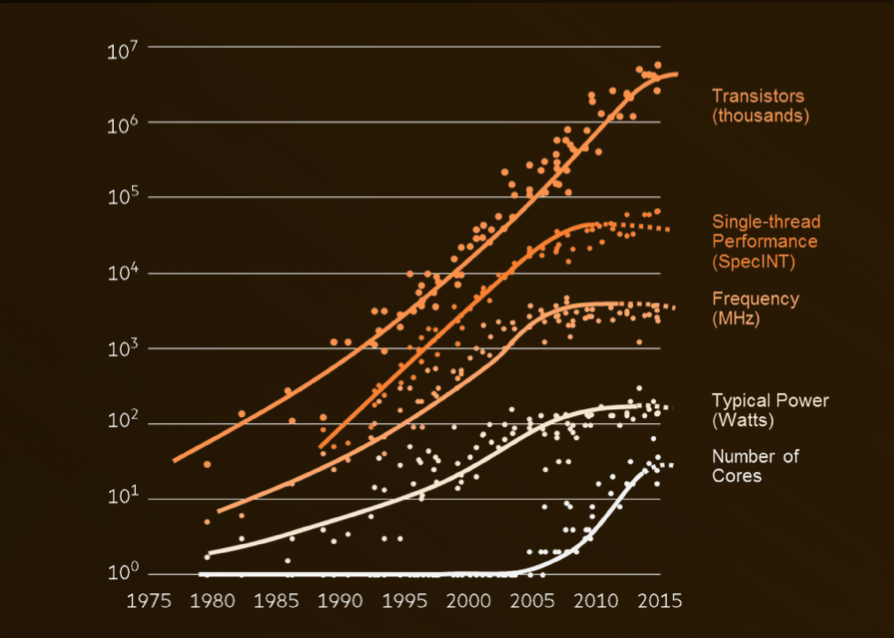

Most phenomena are vastly more complicated than the simple example above, needing larger and larger models with more and more parameters to represent.

Modern LLMs have trillions of parameters and are trained on tens of trillions of tokens.

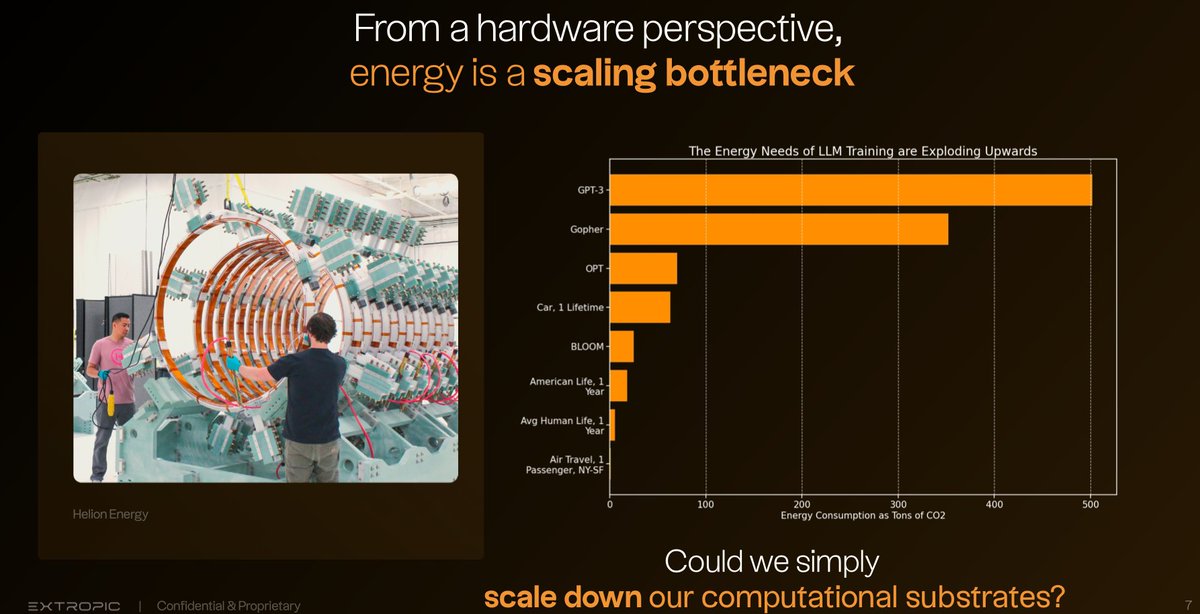

And then there's the energy cost...

The International Energy Agency forecasts that electricity consumption from data centres, AI, and crypto could double between 2022 and 2026.

@sama led a $500m funding round for @Helion_Energy while @Microsoft is building its own nuclear energy program

And then there's the chips...

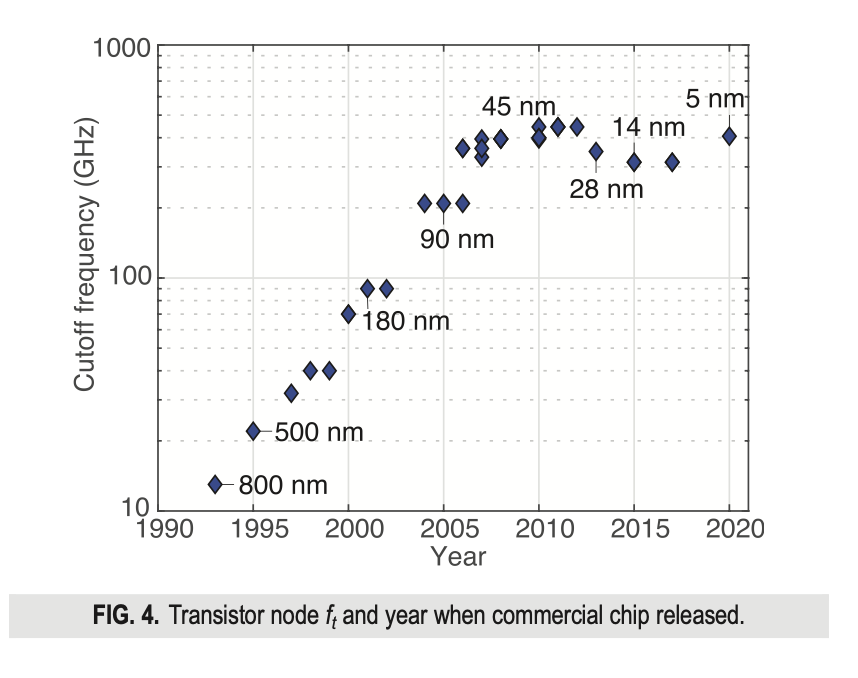

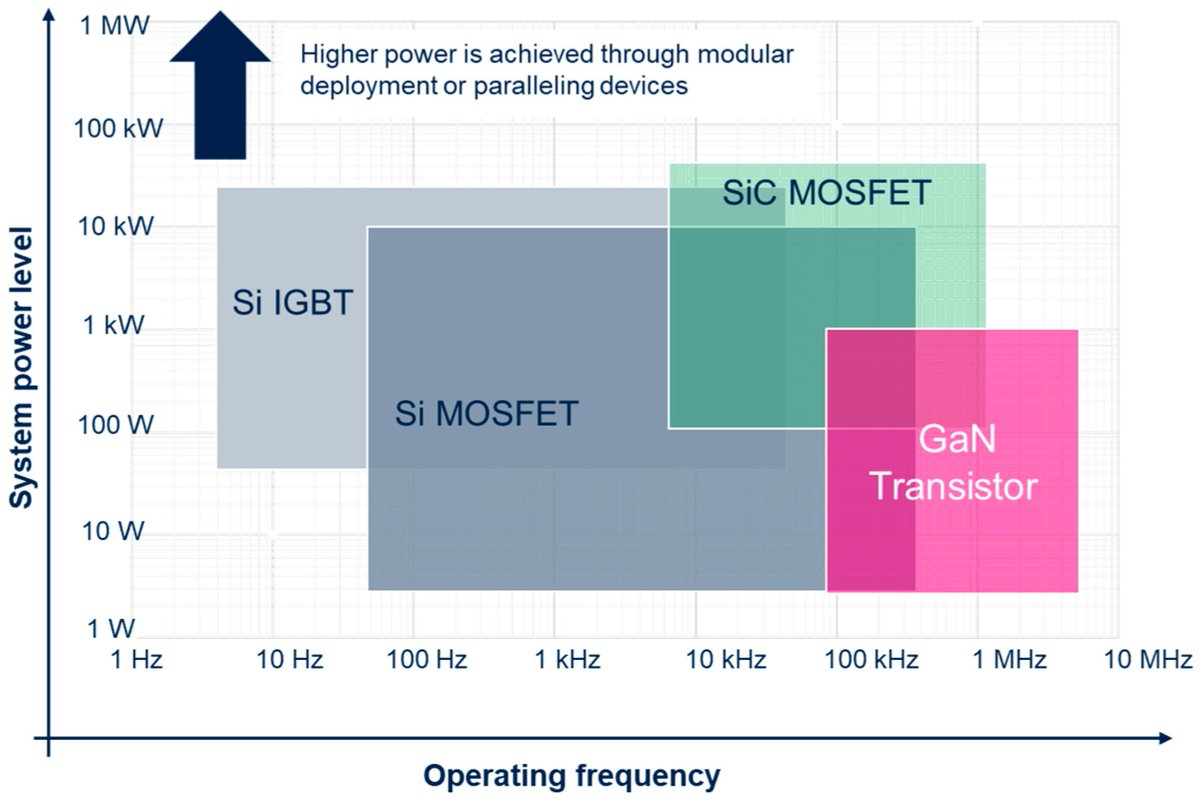

We reached the limits of clock frequency in silicon transistors decades ago, and now we're approaching the limits of size as features reach the single-digit nanometer scale.

We've skirted these issues by scaling things massively in parallel, driving the demand for GPUs

Here's where we stand at the precipice of AGI:

- Massive models with even more massive datasets

- Enormous compute facilities reaching the limits of physical hardware

- Requiring the energy and financial budget of nations

Here's how thermodynamic computing changes everything:

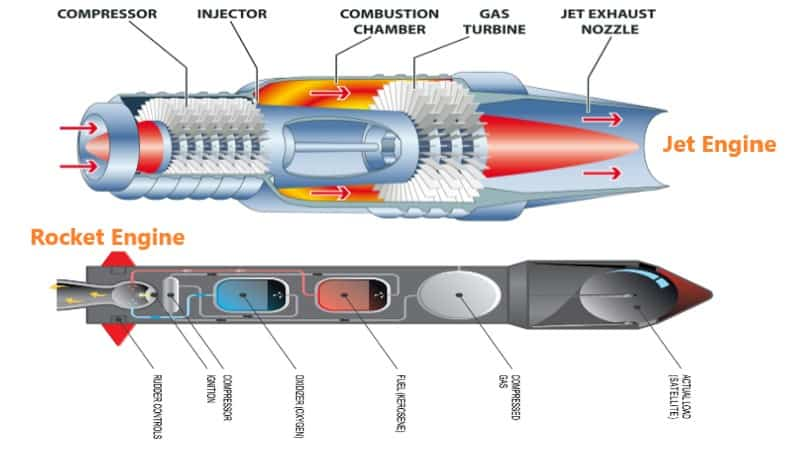

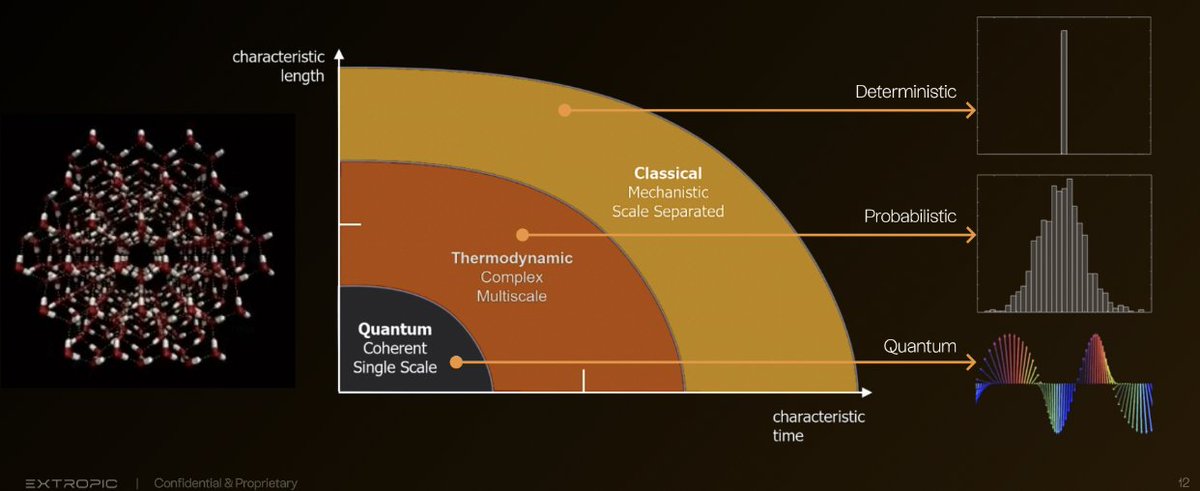

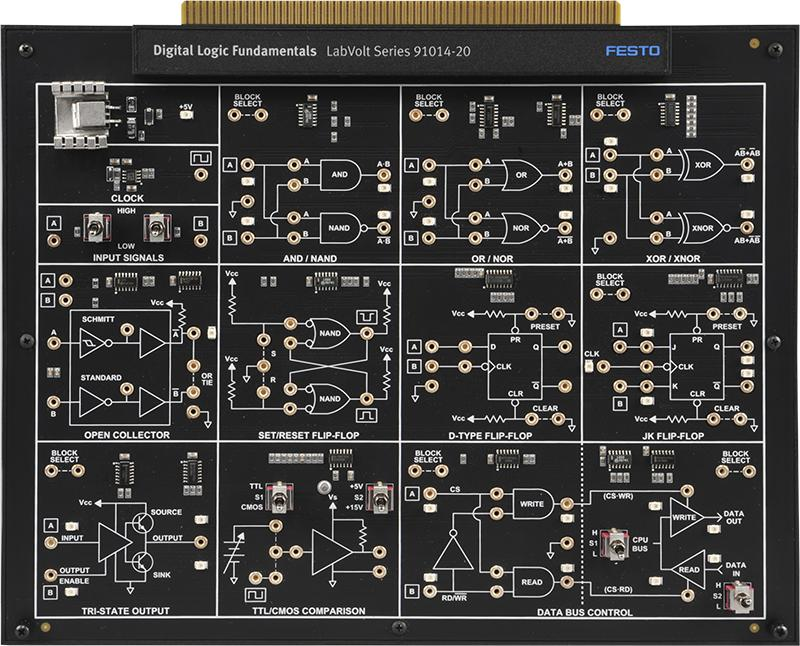

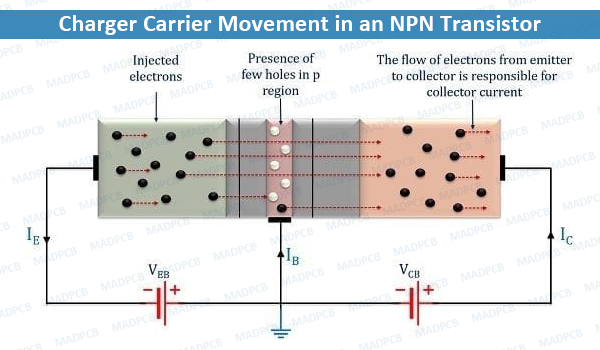

First, regular transistors aren't the 'Transistors of the AI Era'

Digital logic is ideally suited for deterministic gate operations, but machine learning is inherently probabilistic.

The ideal hardware for machine learning is not deterministic but probabilistic

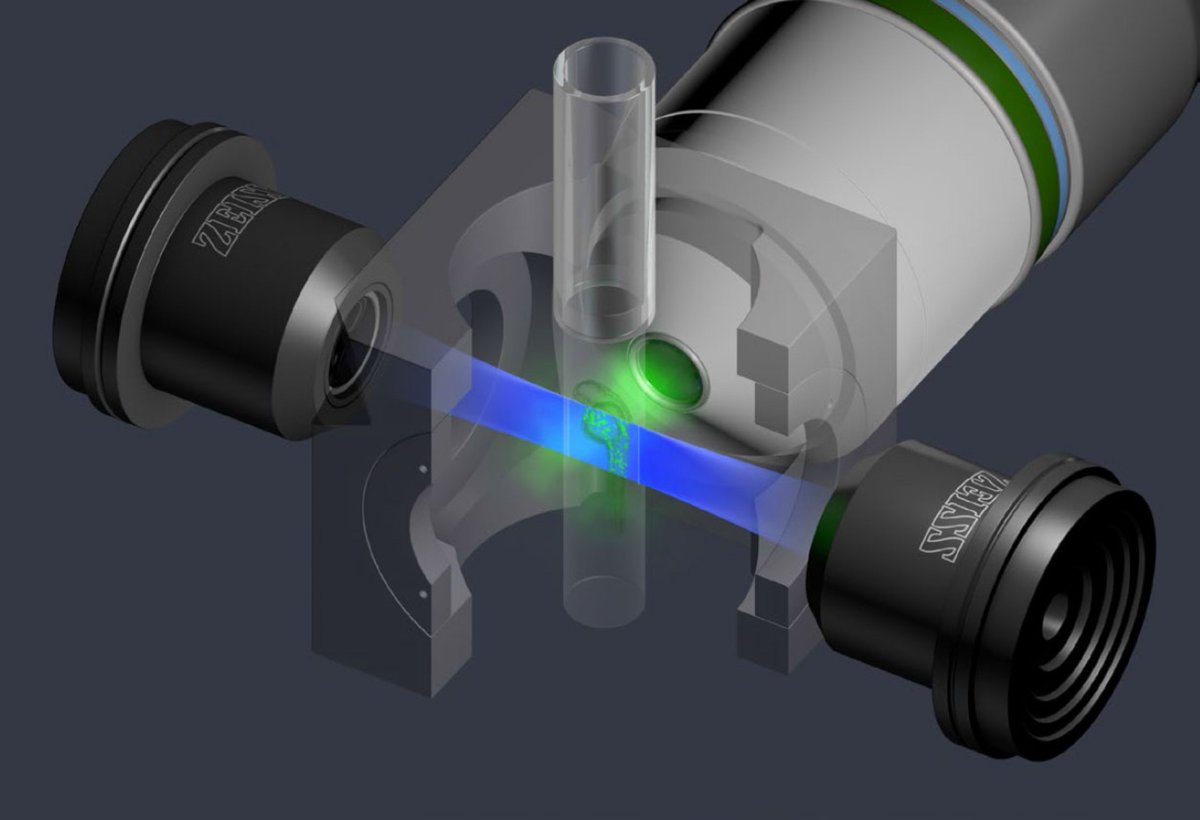

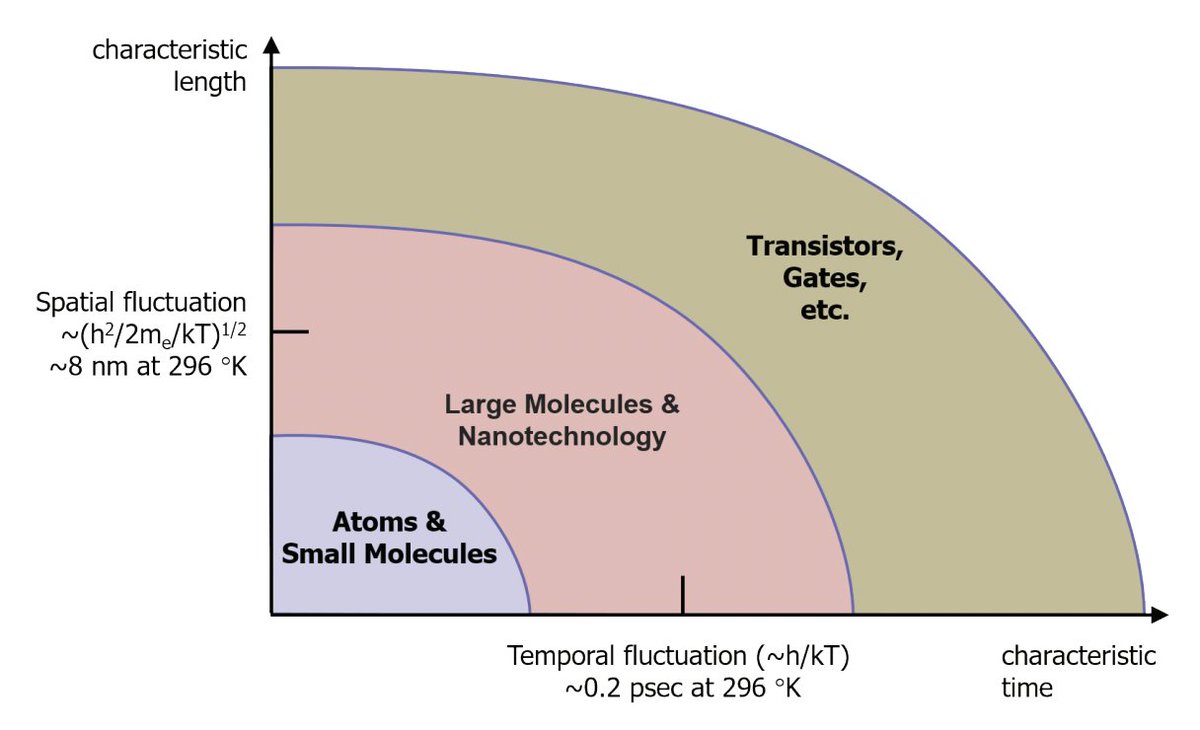

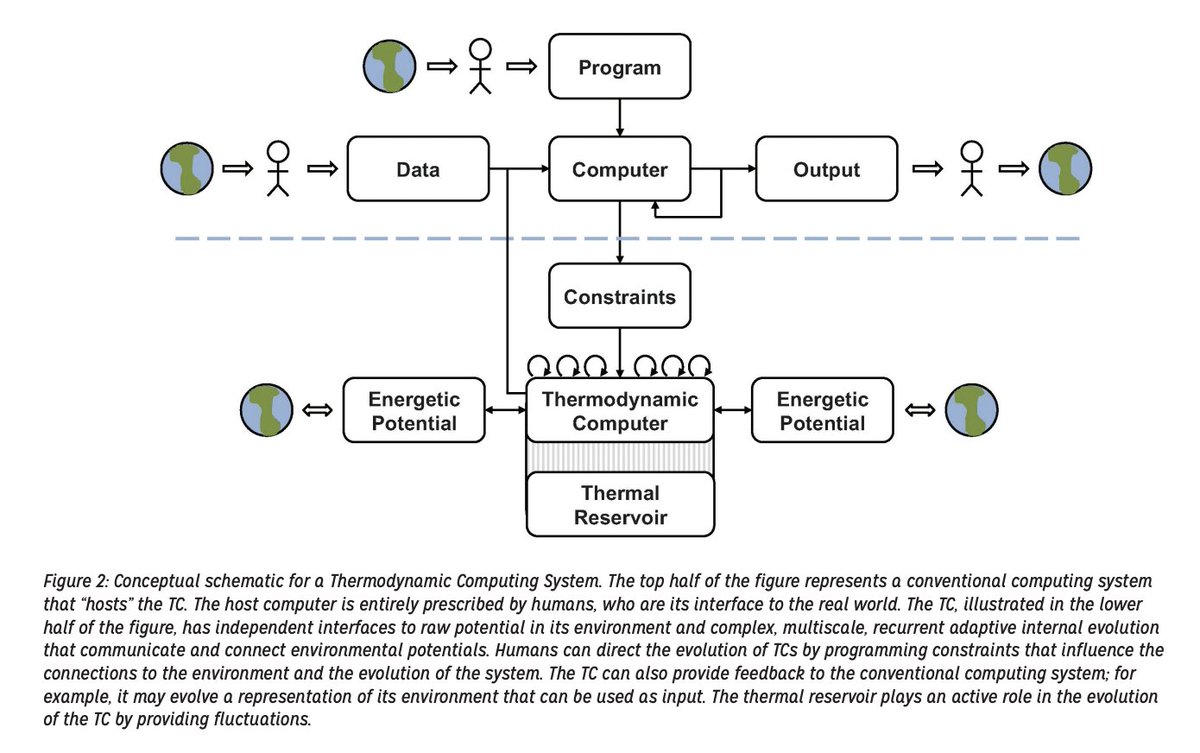

@Extropic_AI uses the inherently probabilistic nature of physical systems at the hardware level.

Their systems sit at the meso-scale between classical and quantum computing.

Where entropy is a competitive advantage. Let me explain:

The Thermodynamic Advantage comes when chip elements are small enough that their energy scale is comparable to the background thermal fluctuations you get at any finite temperature.

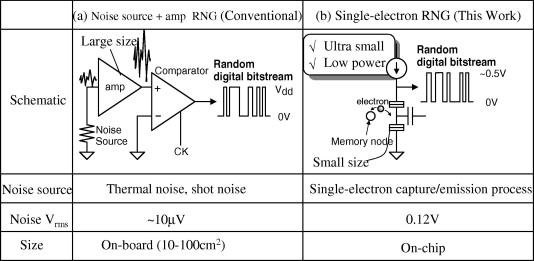
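
To get a feel for the scale, here is a back-of-envelope sketch using textbook physics: the thermal energy kT, and the RMS thermal voltage on a capacitor, sqrt(kT/C). The capacitor is a stand-in I picked as a generic "chip element", and the capacitance values are arbitrary. None of this is taken from Extropic's actual designs.

```python
import math

K_B = 1.380649e-23          # Boltzmann constant, J/K (exact SI value)
E_CHARGE = 1.602176634e-19  # elementary charge, C (exact SI value)

def thermal_energy_ev(temperature_k):
    """Thermal energy kT expressed in electron-volts."""
    return K_B * temperature_k / E_CHARGE

def thermal_voltage_noise(capacitance_f, temperature_k=300.0):
    """RMS thermal voltage fluctuation on a capacitor: sqrt(kT / C)."""
    return math.sqrt(K_B * temperature_k / capacitance_f)

print(f"kT at 300 K: {thermal_energy_ev(300.0) * 1000:.1f} meV")
for cap in (1e-12, 1e-15, 1e-18):  # 1 pF, 1 fF, 1 aF (arbitrary examples)
    print(f"C = {cap:.0e} F -> {thermal_voltage_noise(cap) * 1000:.3f} mV RMS")
```

At room temperature kT is about 26 meV. The smaller the element, the larger the thermal jitter: roughly 2 mV on a femtofarad and tens of millivolts on an attofarad. That is the regime where noise stops being a nuisance and starts being a usable signal.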

When you need to generate a new sample, you just measure the system.

Your random-number generators are electrons

The randomness is truly random, and the shape of the statistical distribution comes from the shape of a potential energy well in which electrons sit

You can tune these potentials into complex shapes - non-Gaussians - with just a few parameters.

Escaping the dimensionality curse
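
A small sketch of the idea, assuming the standard Boltzmann relation p(x) ∝ exp(-U(x)/kT) and a hypothetical three-parameter potential U(x) = a·x⁴ + b·x² + c·x. The polynomial form and the parameter values are my own illustration, not Extropic's device physics.

```python
import math

def potential(x, a, b, c):
    """Hypothetical energy landscape U(x) = a*x^4 + b*x^2 + c*x."""
    return a * x**4 + b * x**2 + c * x

def boltzmann_density(a, b, c, kT=0.5, lo=-3.0, hi=3.0, n=601):
    """Normalized p(x) proportional to exp(-U(x)/kT), evaluated on a grid."""
    dx = (hi - lo) / (n - 1)
    xs = [lo + i * dx for i in range(n)]
    unnorm = [math.exp(-potential(x, a, b, c) / kT) for x in xs]
    z = sum(unnorm) * dx  # numerical partition function
    return xs, [u / z for u in unnorm]

def count_peaks(density):
    """Number of strict local maxima in a gridded density."""
    return sum(
        1 for i in range(1, len(density) - 1)
        if density[i - 1] < density[i] > density[i + 1]
    )

_, single_well = boltzmann_density(a=0.0, b=1.0, c=0.0)   # U = x^2, a plain Gaussian
_, double_well = boltzmann_density(a=1.0, b=-2.0, c=0.0)  # two symmetric bumps
_, skewed_well = boltzmann_density(a=1.0, b=-2.0, c=0.5)  # two uneven bumps

print(count_peaks(single_well), count_peaks(double_well), count_peaks(skewed_well))
```

With U = x² you get the familiar bell curve. Changing three numbers in the potential gives a two-bump or lopsided distribution directly, with no stack of Gaussians needed.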

In a transistor, the maximum speed of operation is limited by the time it takes for enough charge carriers to start moving to reach greater-than-unity gain.

For a thermo chip, the speed is only limited by the time it takes ambient heat to enter the system and re-randomize its state

It's far faster and takes less energy to simply re-randomize a bunch of electrons than to induce net current to flow with a voltage.

Therefore thermo chips can use trillions of times less energy and run millions of times faster than junctions.

But it gets even better than this:

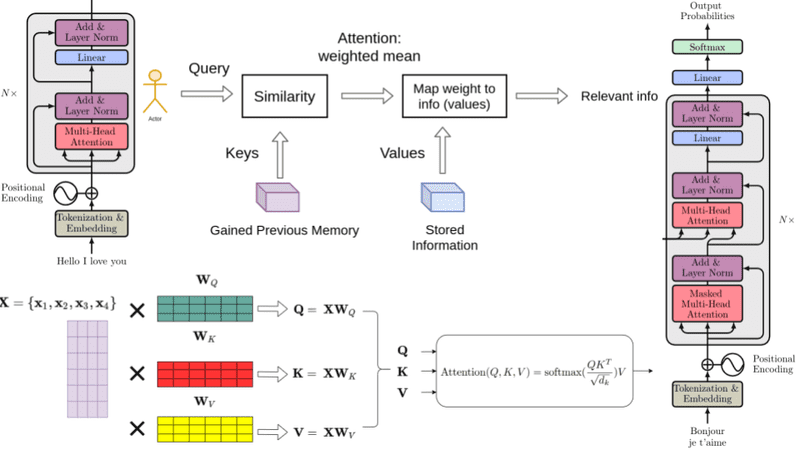

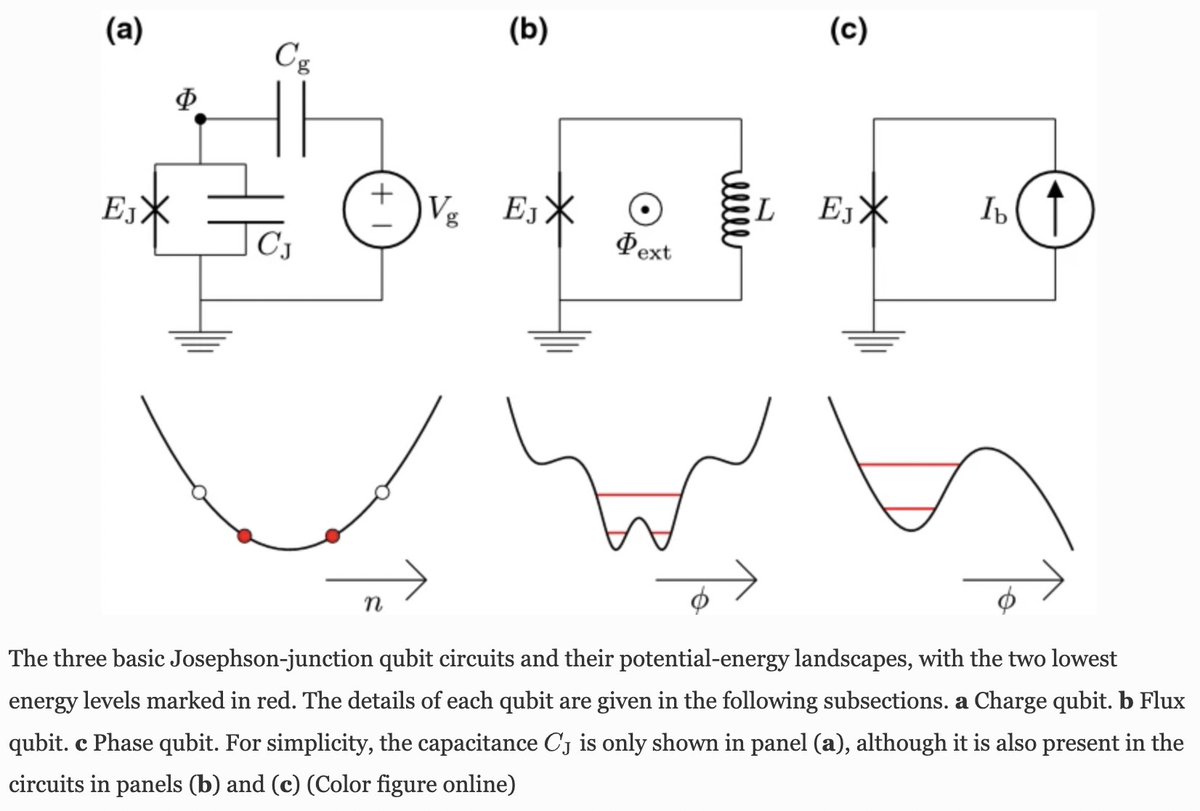

The process of tuning the energy potential of an electron random-number-generator is inherently an 'Energy-Based Model' (EBM)

Again unlike silicon, on a thermo chip the EBM isn't emulated by massive numbers of digital, deterministic operations

It's baked into the physics itself
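
For contrast, here is roughly what the digital emulation looks like: a sketch of overdamped Langevin sampling (Euler-Maruyama steps) from a hypothetical double-well energy U(x) = x⁴ - 2x². The energy function, step size, and temperature are assumptions chosen for illustration. A real EBM would have a learned, high-dimensional energy.

```python
import math
import random

def grad_energy(x):
    """dU/dx for the hypothetical double well U(x) = x^4 - 2x^2."""
    return 4 * x**3 - 4 * x

def langevin_samples(n_steps, kT=0.5, dt=0.01, x0=1.0, seed=0):
    """Emulate thermal sampling digitally, one small noisy step at a time."""
    rng = random.Random(seed)
    noise_scale = math.sqrt(2 * kT * dt)
    x = x0
    chain = []
    for _ in range(n_steps):
        # Slide downhill in energy, then get kicked by pseudo-random "heat".
        x += -grad_energy(x) * dt + noise_scale * rng.gauss(0.0, 1.0)
        chain.append(x)
    return chain

chain = langevin_samples(100_000)
mean_abs = sum(abs(s) for s in chain) / len(chain)
print(min(chain), max(chain), mean_abs)
```

It takes a hundred thousand deterministic multiply-adds and pseudo-random draws just to wander around a one-dimensional, two-well landscape. On a thermo chip, the pitch is that the physics performs this walk for free.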

Why do EBMs matter?

Recently, the Godfather of Deep Learning @ylecun spoke with @lexfridman about how EBMs will be the way forward for LLMs

They provide the shortest path to learning how the world works - again, it's baked into @Extropic_AI's hardware

https://x.com/lexfridman/status/1765869230308659483?s=20

The "Brain" @Extropic_AI is developing is one where each thermodynamic neuron learns a complex probability distribution, encoding it in an energy potential

Allowing the fastest possible learning path, using trillions of times less energy and operating millions of times faster

How this manifests on physical hardware is in super-specialized ASICs that perform the sole function integral to any probabilistic learning process:

Tuning and adapting a statistical model by repeated sampling to learn an underlying process in as few observations as possible

This is the truest definition of "Deep Tech" one can imagine.

An ambitious and demanding engineering problem that if successful, unblocks fundamental progress, relaxes resource constraints, and forever changes the world.

And would mint another multi-trillion dollar company

@Extropic_AI is a team forged in the depths of Google's most secretive quantum machine learning skunkworks.

Leveraging the intrinsic properties of physical systems to deliver decentralized, abundant AI for all of humanity.

Developing the Transistor of the AGI era
