I developed a workflow that allows you to render ANY 3D scene in ANY style with AI!

You can write a separate prompt for each element in your scene, which gives you full flexibility.

Here is how it works 👇

First, we need to render a mask pass. It lets us assign an individual prompt to each color in the pass. I created it by assigning emission shaders to all the objects I wanted separate prompts for.
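To make the color-to-prompt idea concrete, here is a minimal sketch (not the actual workflow files) that splits a mask pass into one boolean mask per prompt using NumPy. The colors, the prompts, the `split_mask_pass` helper and the `tolerance` value are all hypothetical placeholders.

```python
import numpy as np

# Hypothetical table: these colors and prompts are placeholders, not the
# ones used in the thread.
PROMPTS_BY_COLOR = {
    (255, 0, 0): "a wooden kitchen counter",
    (0, 255, 0): "a character in a red sweater",
    (0, 0, 255): "a tiled wall",
}


def split_mask_pass(mask_rgb, prompts_by_color, tolerance=10):
    """Return {prompt: boolean mask} for every color in the table.

    mask_rgb is an (H, W, 3) uint8 array holding the rendered mask pass.
    tolerance absorbs small color shifts from anti-aliasing or compression.
    """
    # Widen the dtype so the subtraction below cannot wrap around in uint8.
    pixels = mask_rgb.astype(np.int16)
    regions = {}
    for color, prompt in prompts_by_color.items():
        diff = np.abs(pixels - np.array(color, dtype=np.int16))
        # A pixel belongs to the region if all three channels are close.
        regions[prompt] = np.all(diff <= tolerance, axis=-1)
    return regions
```

Each boolean mask can then be fed to whatever regional-prompting mechanism your tool offers, together with the prompt it is keyed by.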

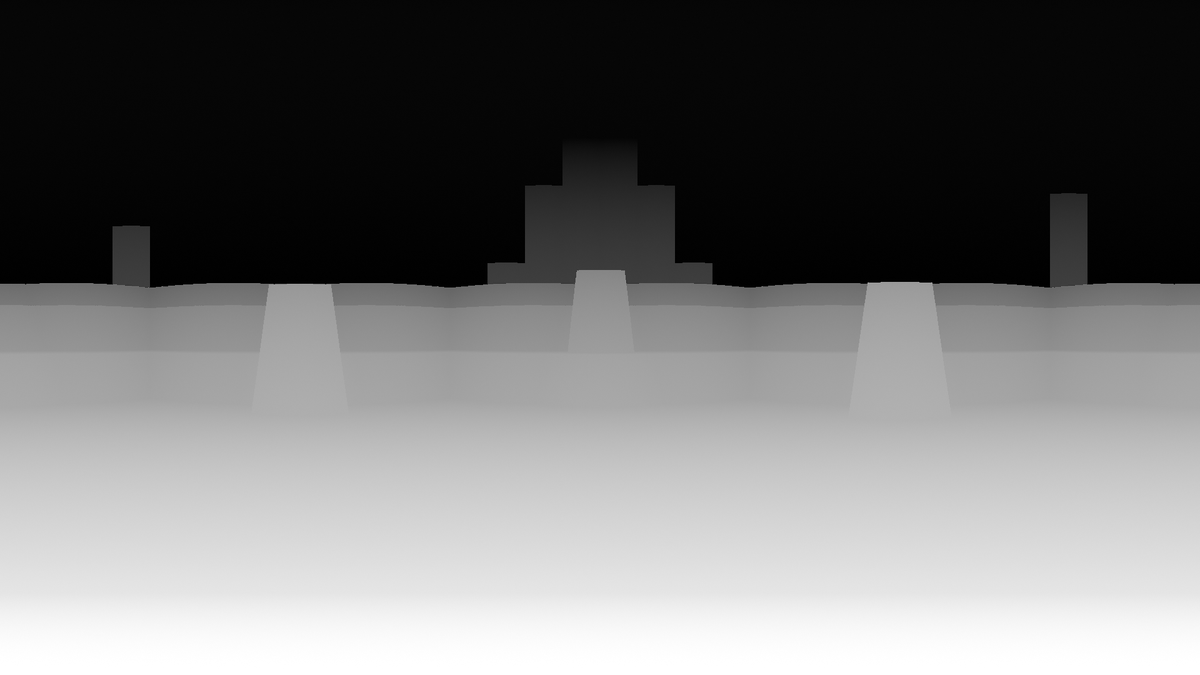

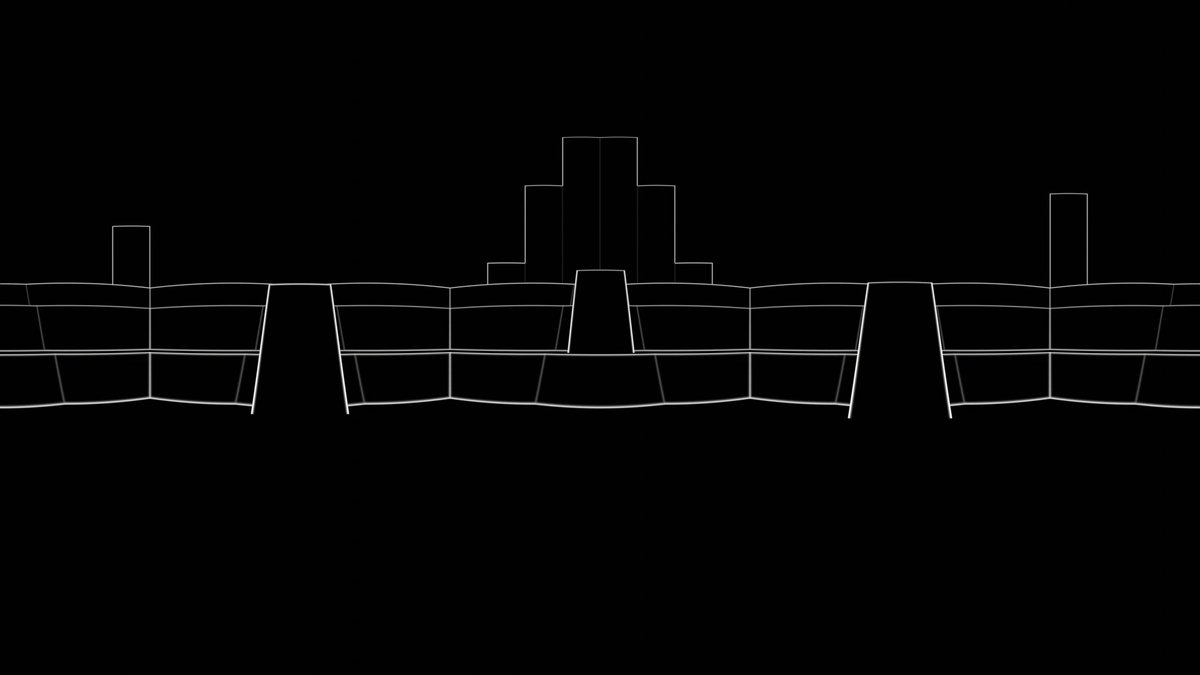

Next, I exported these two rendering passes: Depth and Outline. I then use a Depth ControlNet at full strength and a Canny ControlNet at low strength to guide the generated image or sequence.
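If you work in Python rather than a node graph, the same two-ControlNet setup can be sketched with the Hugging Face `diffusers` library. This is an analogous setup under assumptions, not the workflow from the video: the thread gives no numbers, so `CANNY_STRENGTH = 0.3` is only a guess at "low", the model identifiers are left as arguments for you to fill in, and the code assumes a Stable Diffusion 1.5 style checkpoint on a CUDA GPU.

```python
# Assumed strengths: "full" for depth and "low" for Canny come from the
# thread, but the value 0.3 is an illustrative guess.
DEPTH_STRENGTH = 1.0
CANNY_STRENGTH = 0.3


def render_with_controls(prompt, depth_image, outline_image,
                         base_model, depth_controlnet, canny_controlnet):
    """Generate one image guided by a depth pass and an outline pass.

    depth_image and outline_image are PIL images of the rendered passes.
    The three model arguments are Hub IDs or local paths that you choose.
    """
    # Heavy imports stay local so this module loads without a GPU stack.
    import torch
    from diffusers import ControlNetModel, StableDiffusionControlNetPipeline

    controlnets = [
        ControlNetModel.from_pretrained(depth_controlnet, torch_dtype=torch.float16),
        ControlNetModel.from_pretrained(canny_controlnet, torch_dtype=torch.float16),
    ]
    pipe = StableDiffusionControlNetPipeline.from_pretrained(
        base_model, controlnet=controlnets, torch_dtype=torch.float16
    ).to("cuda")

    # One conditioning image and one scale per ControlNet, in the same order.
    # Canny ControlNets usually expect white edges on black, so an outline
    # pass drawn dark-on-light may need inverting first.
    result = pipe(
        prompt,
        image=[depth_image, outline_image],
        controlnet_conditioning_scale=[DEPTH_STRENGTH, CANNY_STRENGTH],
    )
    return result.images[0]
```

Keeping depth at full strength locks the composition to the 3D scene, while the weaker outline guidance leaves the model room to restyle surfaces and details.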

Here I've re-rendered a scene from my AI-generated Pixar movie. I'm impressed with how much it was able to enhance the scene and turn it into something that actually looks a bit like a Pixar movie (+ lots of AI weirdness...).

With IP-Adapter, you can also add a color pass, a beauty pass, or a final render to the AI workflow. In this case, it becomes more of a filter.

But I prefer the prompt-based workflow because it gives us complete flexibility. Should this kitchen be made of aluminum or wood? Maybe the scene should be set in a forest at night. Or maybe we want to create an anime instead!

For the in-depth explanation and free workflow files, check out my YouTube video about this topic!
