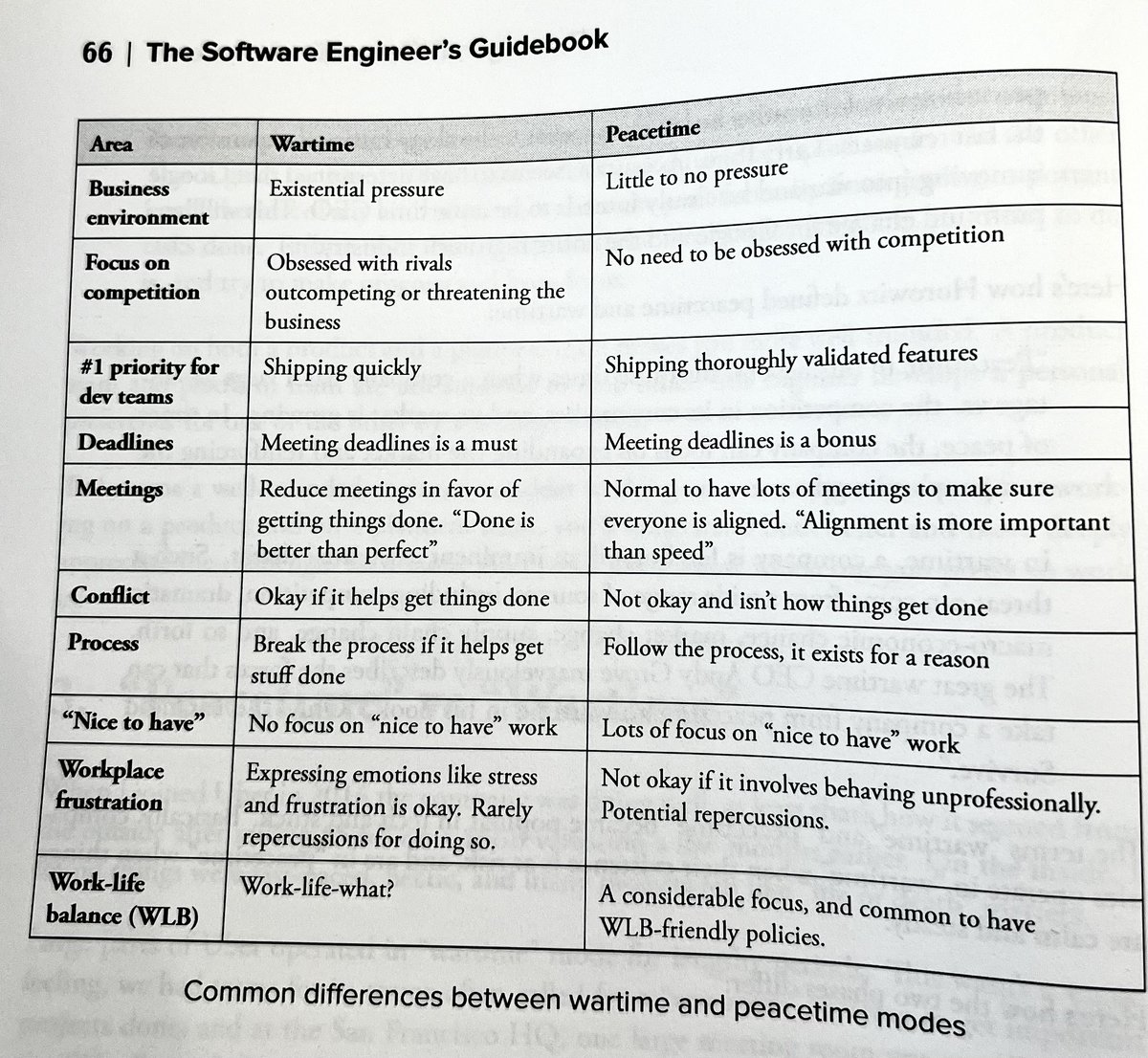

Whenever I hear about FAANG layoffs, I keep coming back to @GergelyOrosz's discussion of "peacetime vs. wartime" companies.

I'd posit that many big tech companies that have been in peacetime mode **for most of our careers** are now in wartime mode.

So a layoff or reorg that seems weird mostly seems that way because we've grown so used to thinking of these companies as being in peacetime mode. And now they aren't.