Continuing to build a cheap, scalable, and secure RAG-less architecture for talking with docs.

Start is here

Review of OpenAI fully managed RAG (file_search) here:

The OCR problem is solved: AWS Textract takes really good care of it!

https://twitter.com/naumenko_roman/status/1782117438357733401

https://twitter.com/naumenko_roman/status/1786909207024869591

https://twitter.com/naumenko_roman/status/1786909221423915395

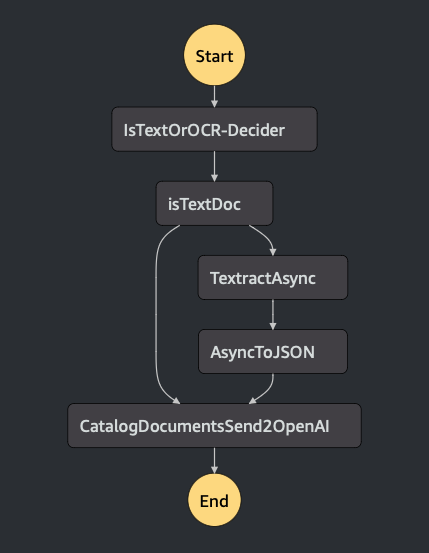

S3 -> SFN -> File + Text

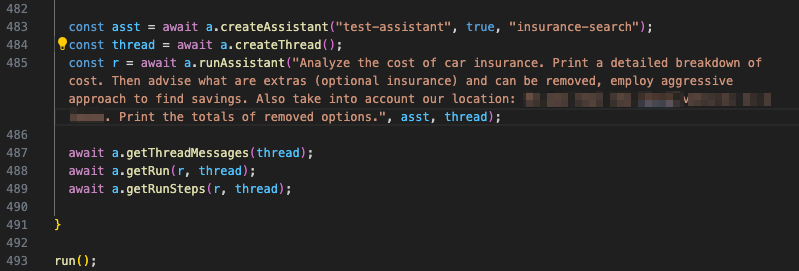

The last state in the Step Function is where Lambda gets nicely formatted text. It comes either from OCR or as markdown from text PDFs (extracted by the pymupdf4llm package).
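A minimal sketch of what that last Lambda could look like, assuming the earlier states put either already-extracted markdown or a raw Textract response into the event. The event keys `markdown` and `textract` and both function names are made up for illustration; only the `Blocks` / `BlockType` / `Text` fields follow the shape of a Textract response.

```python
from typing import Any


def textract_to_text(response: dict[str, Any]) -> str:
    """Join the LINE blocks of a Textract response into plain text."""
    lines = [
        block["Text"]
        for block in response.get("Blocks", [])
        if block.get("BlockType") == "LINE" and "Text" in block
    ]
    return "\n".join(lines)


def handler(event: dict[str, Any], context: Any = None) -> dict[str, str]:
    """Last state: return formatted text no matter which branch produced it."""
    # Hypothetical event shape: "markdown" is set for text PDFs,
    # "textract" holds the OCR response for scanned documents.
    if event.get("markdown"):
        return {"source": "pymupdf4llm", "text": event["markdown"]}
    return {"source": "textract", "text": textract_to_text(event.get("textract", {}))}
```

Either way, the downstream code only sees a `text` field and does not care whether the document was scanned or not.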

The OpenAI API has nothing for indexing and managing documents (I suspect they dump all files into an S3 analog on Azure).

That's OK, I want a custom UI anyway to let users manage docs, plus a backend to catalog them.

UI is pretty easy to build.

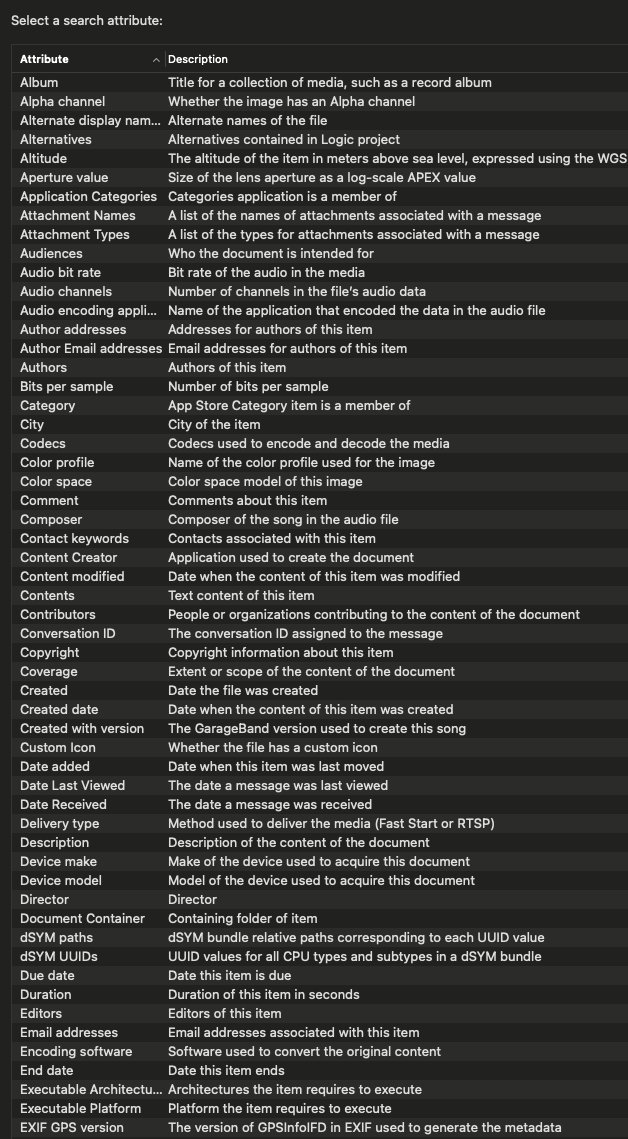

Now the hard part is a searchable catalog of documents. I want something like what macOS has, but not a form-field-based search, just tags that make sense to a user.

I want a pile of docs from which I can choose tags like "maintenance docs", "boiler"

Or "proposals", "companyName"

Or "email", "airline", "cityName"

And then the backend attaches a bunch of docs to file_search and gets ready for chat.

Maybe full-text search on short doc summaries.
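A rough sketch of the tag idea, assuming the catalog is just a list of records with tags and a short summary. The `Doc` and `find_docs` names and the sample file keys are invented for illustration, and the actual attach-to-file_search call is left out.

```python
from dataclasses import dataclass, field


@dataclass
class Doc:
    key: str                                   # e.g. the S3 object key
    tags: set[str] = field(default_factory=set)
    summary: str = ""


def find_docs(catalog: list[Doc], tags: set[str], text: str = "") -> list[Doc]:
    """Return docs carrying every requested tag, optionally filtered by summary text."""
    wanted = {t.lower() for t in tags}
    needle = text.lower()
    return [
        doc for doc in catalog
        if wanted <= {t.lower() for t in doc.tags}   # all requested tags present
        and needle in doc.summary.lower()            # empty needle matches everything
    ]


# Made-up sample catalog.
catalog = [
    Doc("docs/boiler-manual.pdf", {"maintenance docs", "boiler"}, "Annual boiler service steps"),
    Doc("docs/acme-proposal.pdf", {"proposals", "companyName"}, "Proposal for a roof repair"),
    Doc("docs/hvac-guide.pdf", {"maintenance docs"}, "HVAC filter schedule"),
]

selected = find_docs(catalog, {"maintenance docs", "boiler"})
file_keys = [doc.key for doc in selected]  # these would then be attached to file_search
```

The substring match on summaries is only a stand-in for real full-text search, but it shows that tag filtering and summary search can share one small catalog.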

I keep asking myself: maybe RAG-it-all and search documents by content instead of metadata?

Probably not, because a versatile RAG is notoriously difficult to build. And it's pretty expensive to run because of the server-based tech behind it (all kinds of databases).

https://twitter.com/naumenko_roman/status/1782117438357733401

So the alternative is a smart backend attaching a bunch of different file types to file_search. The fact that they automatically get indexed and become available in chat is _really compelling_.

It requires a little more upfront cataloging, which is needed in any case for basic security

(who owns the docs, re-ingesting, updates, etc.)
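A small sketch of what that cataloging might record, assuming one entry per file with an owner and a content hash. `CatalogEntry`, `needs_reingest`, `can_attach`, and the owner-only rule are illustrative assumptions, not a finished access model.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class CatalogEntry:
    key: str      # where the file lives, e.g. an S3 object key
    owner: str    # who owns the doc
    sha256: str   # hash of the content that was last ingested


def content_hash(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def needs_reingest(entry: CatalogEntry, data: bytes) -> bool:
    """True when the stored file no longer matches what was ingested."""
    return entry.sha256 != content_hash(data)


def can_attach(entry: CatalogEntry, user: str) -> bool:
    """Simplest possible rule: only the owner may attach a doc to a chat."""
    return entry.owner == user


# Made-up example entry.
entry = CatalogEntry("docs/boiler-manual.pdf", "alice", content_hash(b"v1"))
```

The hash comparison covers updates and re-ingesting, and the owner field gives the backend something to check before a file ever reaches file_search.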

All these "home-made" RAG solutions, btw, pretend security doesn't exist. BigCloud takes good care of security, but falls short on flexibility and serverless-ness.

And the damn cost is too high, even to play with it.

To sum up: with a doc catalog, the unproven, expensive problem of building a full-scale RAG pipeline becomes a known, cheap "index and search tons of S3 files" problem.

What's the best architecture there? I see AWS has published a lot of Athena-based solutions.
