Some folks are discussing what it means to be a “secure encrypted messaging app.” I think a lot of this discussion is shallow and in bad faith, but let’s talk about it a bit. Here’s a thread. 1/

First: the most critical element that (good) secure messengers protect is the content of your conversations in flight. This is usually done with end-to-end encryption. Messengers like Signal, WhatsApp, Matrix etc. encrypt this data using keys that only the end-devices know. 2/
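
To make "keys that only the end-devices know" concrete, here is a toy Diffie-Hellman exchange in Python. Each device keeps a secret, the server relays only public values, and both ends compute the same key. The modulus, generator, and function names are illustrative assumptions and far too weak for real use. Signal and WhatsApp build on X25519 inside the Signal Protocol, which adds much more (authentication, ratcheting).

```python
import hashlib
import secrets

# Toy parameters for illustration only: 2**127 - 1 is a Mersenne prime,
# but this group is far too weak for real use.
P = 2**127 - 1
G = 3


def keypair() -> tuple[int, int]:
    """Generate a (secret, public) pair; the secret never leaves the device."""
    secret = secrets.randbelow(P - 3) + 2  # secret in [2, P - 2]
    return secret, pow(G, secret, P)


def shared_key(my_secret: int, their_public: int) -> bytes:
    """Combine my secret with the peer's public value and hash it into a 32-byte key."""
    shared = pow(their_public, my_secret, P)
    return hashlib.sha256(shared.to_bytes(16, "big")).digest()


alice_secret, alice_public = keypair()
bob_secret, bob_public = keypair()

# The server only ever relays alice_public and bob_public.
alice_key = shared_key(alice_secret, bob_public)
bob_key = shared_key(bob_secret, alice_public)
assert alice_key == bob_key  # both devices derived the same key
```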

Encrypting the content of your conversations, preferably by default, is “table stakes.” It isn’t perfect, but it’s required for a messenger even to flirt with the word “secure.” But security and privacy are hard, deep problems. Solving encrypted messaging is just the start. 3/

There are lots of threats that still exist even if you add end-to-end encryption to messaging. One is: does your phone back up message content to the cloud? But another, much harder one is: what about metadata? I.e., what about the details of *who* you communicate with and when? 4/

E2EE cloud backup is incredibly, back-breakingly hard. It involves storing keys somewhere that the cloud provider can’t access, even if you lose your phone and forget your passwords. But services have come up with solutions. E.g.: blog.cryptographyengineering.com/2022/12/07/app…
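
One naive way to picture "keys the cloud provider can't access" is to wrap the backup key under a key stretched from a passphrase only the user knows. The sketch below does that with Python's standard library (PBKDF2, an XOR wrap of a single random 32-byte key, and an HMAC tag); every name and the iteration count are hypothetical, and this is not how any particular service does it. It also shows why the problem is so hard: whoever holds the stored blob can keep guessing passphrases offline, which is why deployed designs put guess-limiting hardware (HSMs or secure enclaves) in the loop.

```python
import hashlib
import hmac
import secrets

# Illustrative work factor (an assumption); real deployments tune this to their hardware.
ITERATIONS = 200_000


def _derive(passphrase: str, salt: bytes) -> tuple[bytes, bytes]:
    """Stretch the passphrase into a 32-byte wrapping key and a 32-byte MAC key."""
    material = hashlib.pbkdf2_hmac(
        "sha256", passphrase.encode(), salt, ITERATIONS, dklen=64
    )
    return material[:32], material[32:]


def wrap_backup_key(backup_key: bytes, passphrase: str) -> dict:
    """Return the blob the cloud provider would store: salt, wrapped key, MAC tag."""
    if len(backup_key) != 32:
        raise ValueError("expected a 32-byte backup key")
    salt = secrets.token_bytes(16)  # fresh salt, so each wrap uses a fresh pad
    wrap_key, mac_key = _derive(passphrase, salt)
    wrapped = bytes(a ^ b for a, b in zip(backup_key, wrap_key))
    tag = hmac.new(mac_key, salt + wrapped, hashlib.sha256).digest()
    return {"salt": salt, "wrapped": wrapped, "tag": tag}


def unwrap_backup_key(blob: dict, passphrase: str) -> bytes:
    """Recover the backup key on a new device; reject wrong passphrases."""
    wrap_key, mac_key = _derive(passphrase, blob["salt"])
    expected = hmac.new(mac_key, blob["salt"] + blob["wrapped"], hashlib.sha256).digest()
    if not hmac.compare_digest(expected, blob["tag"]):
        raise ValueError("wrong passphrase or tampered blob")
    return bytes(a ^ b for a, b in zip(blob["wrapped"], wrap_key))


# Usage: the provider only ever sees `blob`, never `backup_key` or the passphrase.
backup_key = secrets.token_bytes(32)
blob = wrap_backup_key(backup_key, "correct horse battery staple")
assert unwrap_backup_key(blob, "correct horse battery staple") == backup_key
```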

But if cloud backup is hard, it’s literally *nothing* compared to metadata. Metadata is the hardest thing in the world. That’s because encryption does very little to help you: your messages (encrypted or not) need to be delivered, and the servers that do this have to know whom to deliver them to. 6/

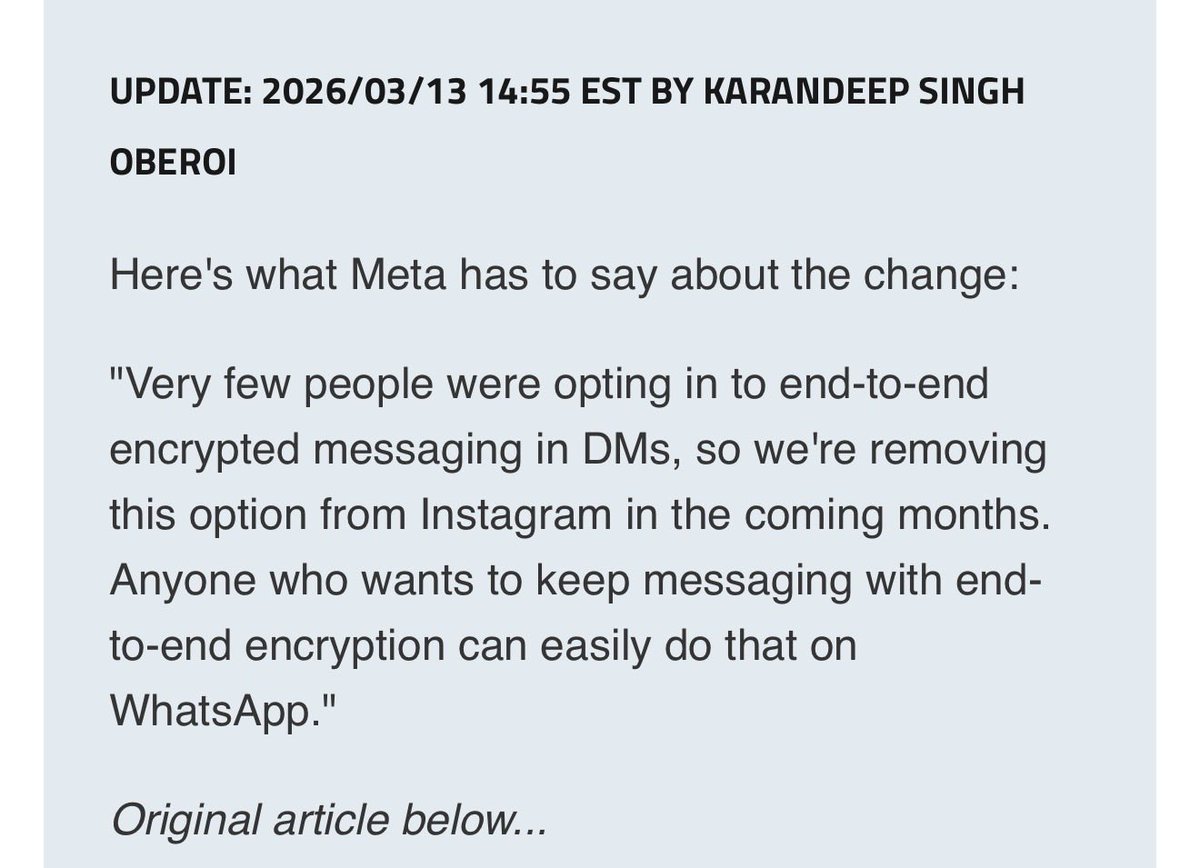
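
A minimal sketch of that point, with hypothetical names: the relay below never decrypts anything, yet the envelope fields it needs in order to deliver messages are enough to reconstruct who talks to whom and how often.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class Envelope:
    sender: str        # routing metadata the server can read
    recipient: str     # the server must know this to deliver the message
    sent_at: int       # timestamp in seconds (hypothetical field)
    ciphertext: bytes  # opaque to the server under end-to-end encryption


class RelayServer:
    """A hypothetical relay that never decrypts anything but still logs envelopes."""

    def __init__(self) -> None:
        self.log: list[Envelope] = []

    def deliver(self, env: Envelope) -> None:
        self.log.append(env)  # delivery requires reading env.recipient

    def social_graph(self) -> Counter:
        """Count messages per (sender, recipient) pair using metadata alone."""
        return Counter((e.sender, e.recipient) for e in self.log)


server = RelayServer()
server.deliver(Envelope("alice", "bob", 1000, b"\x8f\x02"))
server.deliver(Envelope("alice", "bob", 1060, b"\x11\xa4"))
server.deliver(Envelope("alice", "carol", 1200, b"\x5e\x90"))
print(server.social_graph())
```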

Metadata is so hard that it really matters how much you trust the intentions and promises of your service provider. For example: WhatsApp is a Meta company, and they’re open about the fact that they use social graphs for advertising. That’s how they make money. 7/

I appreciate that WA is open about this and I trust them generally not to sell my data to criminals, but I also don’t like it. That’s why I don’t use WhatsApp as my primary messenger, even though I strongly believe that their (content) encryption is very good. 8/

But you should be very wary of anyone who tells you they don’t do anything with metadata, unless you either (1) trust their technical protections or (2) trust them a lot as an organization. And the technical side is very challenging. Just incredibly difficult. 9/

There are a bunch of separate issues, and a full discussion is messy enough to require a different medium. They include:

* Contact discovery: how to find your contacts without giving away your social graph

* Registration: can you sign up with a pseudonymous account, or do you need an identifier (with enormous tradeoffs for spam)?

* Sender anonymity: can you send without revealing who you are?

* IP address anonymity: Ugh. Mostly this requires a VPN or Tor.

* Timing attacks and sophisticated adversaries: see attached diagram.
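
To see why contact discovery is hard, consider a naive design (hypothetical, not any specific service's): the client uploads SHA-256 hashes of its contacts' phone numbers instead of the numbers themselves. Because phone numbers come from a small, structured space, the server can simply hash every candidate and look for matches. The sketch enumerates only 10,000 numbers under an invented prefix; a real attacker would enumerate far more, but the principle is the same, and it is part of why services look for other approaches such as the trusted enclaves mentioned below.

```python
import hashlib


def hash_number(number: str) -> str:
    """Hash a phone number the way the hypothetical naive client does."""
    return hashlib.sha256(number.encode()).hexdigest()


# What the client uploads: hashes instead of raw phone numbers.
uploaded = {hash_number("+15550001234"), hash_number("+15550009876")}


def invert(hashes: set[str], prefix: str = "+1555000", digits: int = 4) -> set[str]:
    """Recover numbers by hashing every candidate in a small range (server side)."""
    found = set()
    for n in range(10**digits):
        candidate = f"{prefix}{n:0{digits}d}"
        if hash_number(candidate) in hashes:
            found.add(candidate)
    return found


print(sorted(invert(uploaded)))
```

Salting per user would not rescue this scheme, since the server needs to compare uploads against its own user list and therefore knows any salt it must apply.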

These are all incredibly difficult problems and folks are working on solving them. Signal uses trusted enclaves to perform contact discovery, and has a “sealed sender” to hide sender IDs. Other services allow you to sign up with pseudonyms. You should pick what works for you.
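
Sealed sender removes the sender field from what the server sees, but the recipient and arrival time have to remain visible for delivery, which is where the timing attacks mentioned above come in. The sketch below is a deliberately crude, hypothetical heuristic (not Signal's wire format, and not a published attack): it pairs up recipients whose deliveries land within a few seconds of each other, on the theory that a quick delivery in the other direction suggests a reply. Real attacks are statistical and work over many messages; this only shows why hiding the sender field alone doesn't erase the pattern.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class SealedEnvelope:
    recipient: str         # still visible: the server needs it to deliver
    received_at: float     # still visible: the server sees when traffic arrives
    sealed_payload: bytes  # stand-in for sender identity + message, encrypted to the recipient


def guess_pairs(log: list[SealedEnvelope], window: float = 10.0) -> Counter:
    """Count recipient pairs whose deliveries arrive within `window` seconds of each other."""
    ordered = sorted(log, key=lambda e: e.received_at)
    pairs: Counter = Counter()
    for earlier, later in zip(ordered, ordered[1:]):
        close_in_time = later.received_at - earlier.received_at <= window
        if close_in_time and later.recipient != earlier.recipient:
            pairs[frozenset((earlier.recipient, later.recipient))] += 1
    return pairs


log = [
    SealedEnvelope("bob", 100.0, b"<ciphertext>"),
    SealedEnvelope("alice", 103.0, b"<ciphertext>"),  # bob replying quickly?
    SealedEnvelope("bob", 110.0, b"<ciphertext>"),    # alice replying quickly?
    SealedEnvelope("carol", 900.0, b"<ciphertext>"),
]
print(guess_pairs(log))
```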

What you should not do, and what I see a lot of people doing, is panic about the fact that messaging services have access to metadata and/or even *use* that metadata, and switch to something that is unencrypted and arguably worse.

If you’re a technical expert, you should try to explain to others what the tradeoffs look like. Some people are better off using a service like WhatsApp because their contacts are there. Others are better off using tiny bespoke encrypted messengers with anonymity features.

What you should not do is indulge the “aha gotcha” crowd that is running around trying to convince people that all popular messengers are “backdoored,” because that message leads to panic and people using insecure systems. //

PS the diagram in the middle of this thread is from a great article by James Mickens. usenix.org/system/files/1…
