NIH’s malicious Effort Preference claim is propped up by a misleading manipulation of the EEfRT and a shameless misrepresentation of the EEfRT data. Read all about it in Part 2 of my new series. thoughtsaboutme.com/2024/06/12/the…

One of my through-the-looking-glass findings is that ME patients actually performed better than controls on the EEfRT based on the reported data, completely debunking the Effort Preference claim, but there is so much more than that.

NIH conveniently excluded, w/o explanation or any mention in the paper, the control w/ the fewest hard-task choices (HTCs). That control's HTC count was on par with that of the ME patient w/ the fewest HTCs, whose data weren't excluded. #1

For validity, the EEfRT requires a very high trial/task-completion rate that is similar between groups. A 67% completion rate for hard trials by the patient group is completely outside the realm of validity, especially compared to the >96% hard-trial completion rate by controls. #2

Five ME patients were physically unable to complete hard tasks at a valid rate or at all. Under the EEfRT, data from participants who are physically unable to complete at least 50% of their trials are to be excluded. NIH's failure to do so renders EEfRT findings invalid. #3

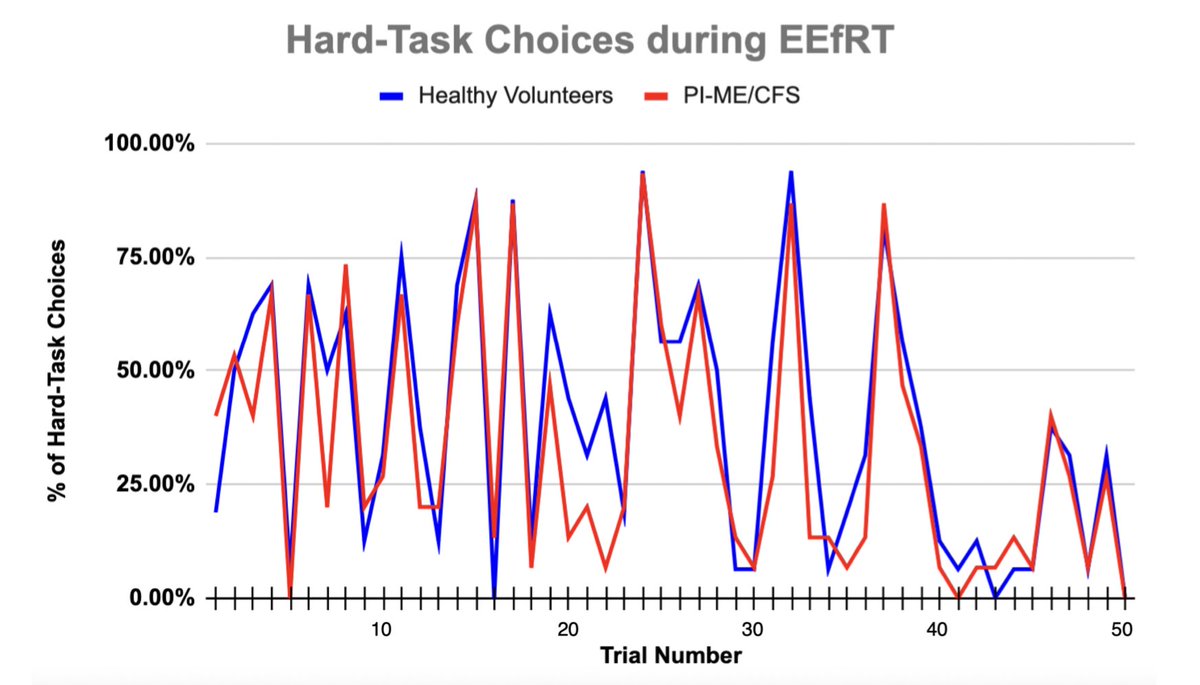

Left: This is how NIH wants you to believe ME patients and controls performed, i.e., more HTCs by controls on every trial. That's what the Effort Preference claim is based on.

Right: This is how the groups actually performed: a tiny difference between groups. #4

NIH claims that patients made fewer HTCs "at the start" of the EEfRT. That is false. Patients chose more hard tasks in the first trial. Across the first 4 trials, both groups chose the same number of hard tasks per participant. #5

NIH claims that patients made HTCs at a lower rate than controls "throughout" the EEfRT, i.e., on every single trial. That is false:

* 34% of trials: higher HTC rate by pts

* 2% of trials: same HTC rate by both groups

* 14% of trials: practically identical HTC rate by both groups

#6

The reason patients made fewer HTCs is that they followed the EEfRT game instructions to win as much virtual money as possible and made strategic choices by not choosing hard tasks for low-probability trials (probability sensitivity). This debunks the Effort Preference claim. #7

Using a game optimization strategy (every EEfRT trial has a different reward value & probability of winning), patients on average won more virtual rewards than controls based on NIH data. This eviscerates the Effort Preference claim. #8

At least some of the EEfRT data are false. In 79 uncompleted trials (56.03% of all uncompleted trials), participants were credited with rewards they had not earned under the EEfRT rules. That means the entire EEfRT data set is unreliable. #9

This is a very truncated summary of what I have found. Keep in mind that NIH made sweeping, unqualified conclusions about an allegedly altered Effort Preference as a defining feature of ME based on one 15-minute test taken by ONLY 15 ME patients. #10

There is also an astonishing number of inexplicable, careless mistakes with respect to the EEfRT in the paper, making it very clear how little effort was put into its accuracy. #11
