1/12 Have you ever wondered how ML systems (and brains) deal with uncertainty? In this thread, we'll explore foundational concepts from probability and information theory: entropy, cross-entropy, and KL divergence. This summarizes my latest video.

Here's the breakdown of key ideas 🧵

2/12 Our brains naturally operate with probabilities. When you see a vague shape in fog, you don't settle on a single interpretation. Instead, you weigh multiple possibilities simultaneously, each with its own likelihood. This probabilistic thinking is crucial for processing ambiguous information.

3/12 In machine learning, we work with probability distributions to model complex scenarios. These aren't limited to simple cases like dice or coins. They can describe intricate spaces, such as the distribution of all possible 100×100-pixel grayscale images, which live in a 10,000-dimensional space (one dimension per pixel)! 🤯

4/12 Central to understanding probability is the concept of surprise. We're more surprised by rare events than by common ones. If someone correctly predicts a die roll, that's more surprising than if they correctly predict a coin flip, because each die face has probability 1/6 while each coin side has probability 1/2. This intuitive notion can be quantified precisely 🎲

5/12 Surprise is formally measured as log(1/p), where p is the event's probability. This formula captures our intuition: low-probability events (small p) have high surprise, and a certain event (p = 1) has zero surprise. It gives a number to what we instinctively consider surprising or unexpected.

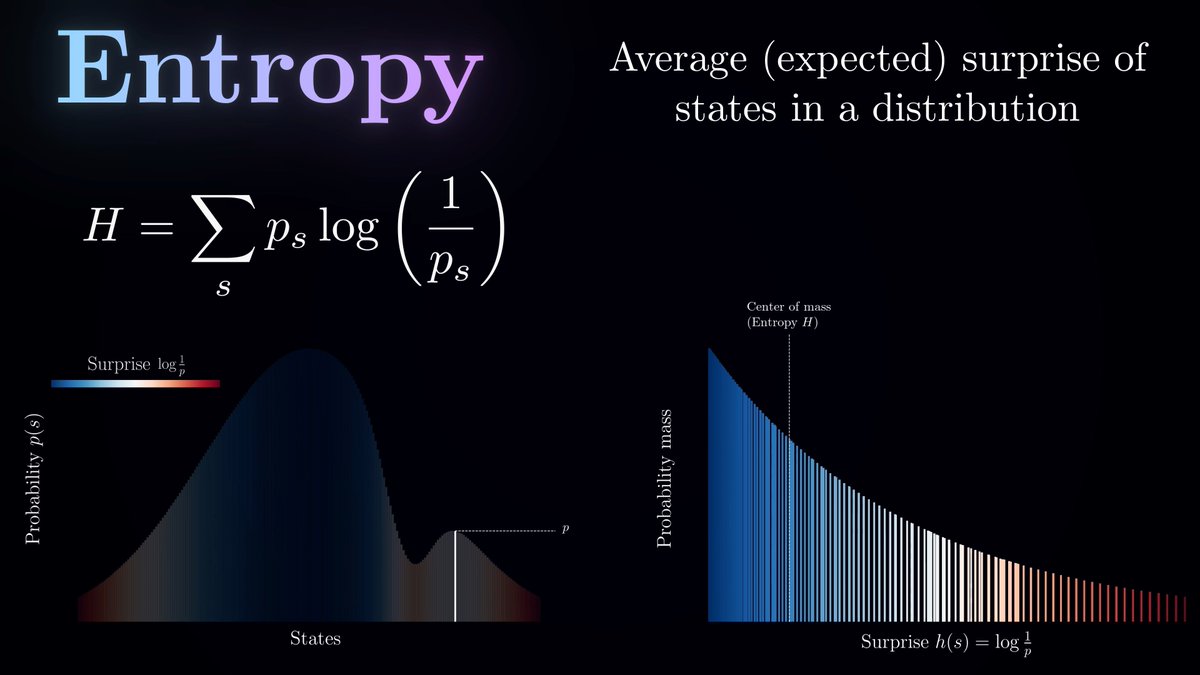
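A minimal Python sketch of the surprise formula, assuming log base 2 so that surprise is measured in bits (the thread leaves the base unspecified). It reproduces the die-vs-coin comparison from tweet 4:

```python
import math


def surprise(p: float) -> float:
    """Surprise of an event with probability p, computed as log(1/p) in bits (base 2 assumed)."""
    if not 0 < p <= 1:
        raise ValueError("probability must be in (0, 1]")
    return math.log2(1 / p)


coin = surprise(1 / 2)  # correctly guessing a fair coin flip
die = surprise(1 / 6)   # correctly guessing a fair die roll
sure = surprise(1.0)    # an event that always happens

print(f"coin: {coin:.3f} bits")  # coin: 1.000 bits
print(f"die:  {die:.3f} bits")   # die:  2.585 bits
print(f"sure: {sure:.3f} bits")  # sure: 0.000 bits
```

Switching to the natural log only rescales every value by the same constant (nats instead of bits), so the ordering of surprises stays the same.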

6/12 Entropy, a fundamental concept in information theory, is the average surprise of a distribution. It quantifies the uncertainty inherent in a random process governed by that distribution. Mathematically, it's the sum of p * log(1/p) over all possible states. A fair coin has lower entropy than a thick coin that might land on its edge, as the latter scenario contains more inherent uncertainty.

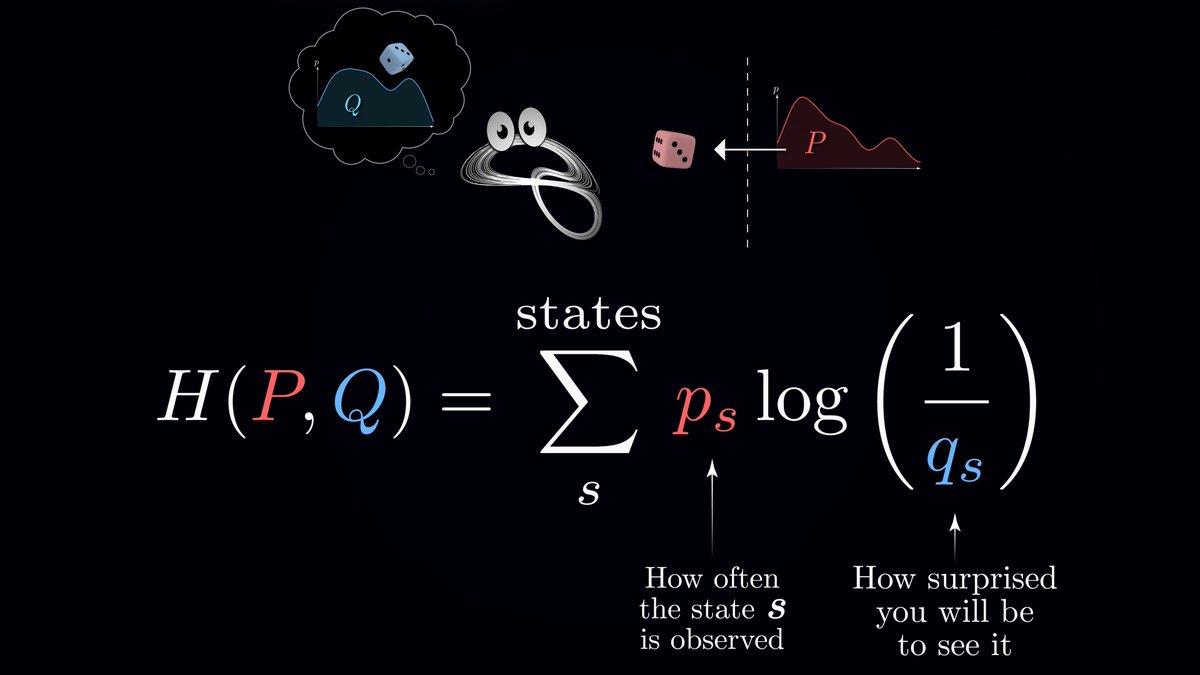
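Entropy is the probability-weighted average of those surprises. A short Python sketch, again in bits; the 45/45/10 split for the thick coin is a made-up example, not a measured value:

```python
import math


def entropy(p):
    """Entropy in bits: sum of p * log2(1/p) over all states (states with p = 0 contribute 0)."""
    return sum(pi * math.log2(1 / pi) for pi in p if pi > 0)


fair_coin = [0.5, 0.5]            # [heads, tails]
thick_coin = [0.45, 0.45, 0.10]   # hypothetical [heads, tails, edge]

print(f"fair coin:  {entropy(fair_coin):.3f} bits")   # fair coin:  1.000 bits
print(f"thick coin: {entropy(thick_coin):.3f} bits")  # thick coin: 1.369 bits
```

The comparison holds here because heads and tails stay equally likely and the edge is rare. If the edge outcome dominated instead (say 90%), the entropy would drop below 1 bit, so the result depends on the probabilities chosen.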

7/12 A crucial point: in real-world scenarios, we rarely know the true probability distribution of events. Instead, we work with internal models – our best approximations of how things work. This gap between our models and reality is where cross-entropy becomes vitally important 🧠

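8/12 Cross-entropy measures the average surprise when we use our model to predict outcomes drawn from the true (unknown) distribution: the sum of p * log(1/q), where p is the true probability of each state and q is the model's. A key property: it's always greater than or equal to the true entropy, with equality only when the model matches reality. This means an imperfect model can only increase our average surprise, never decrease it.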

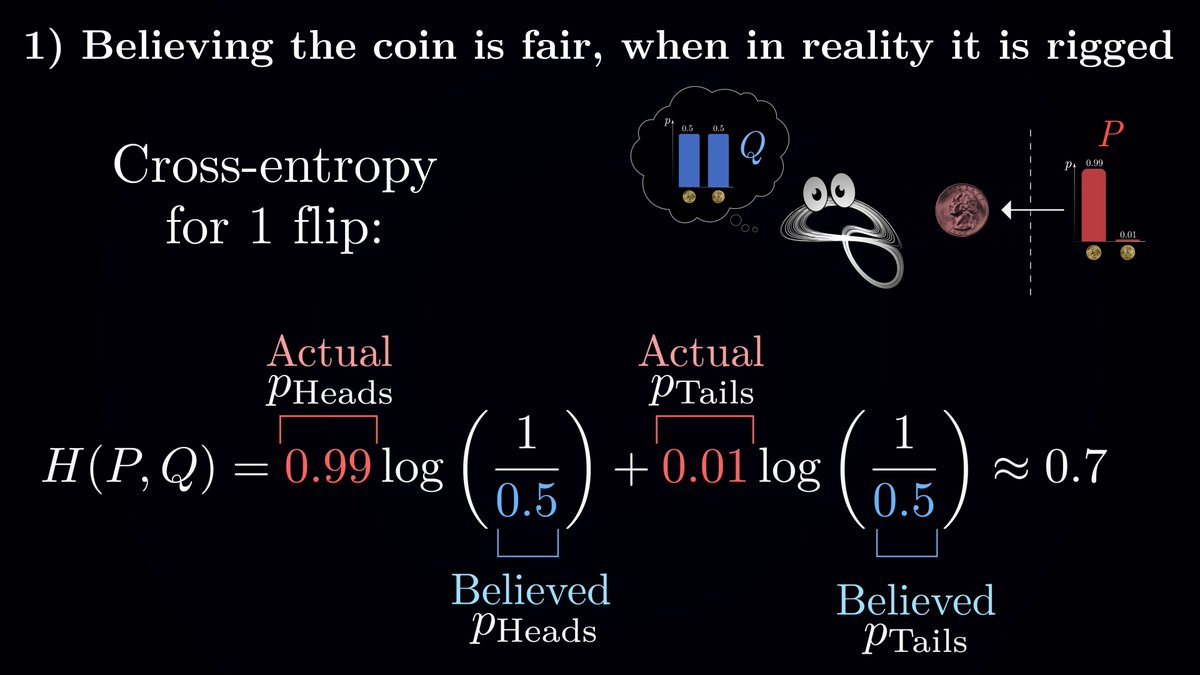

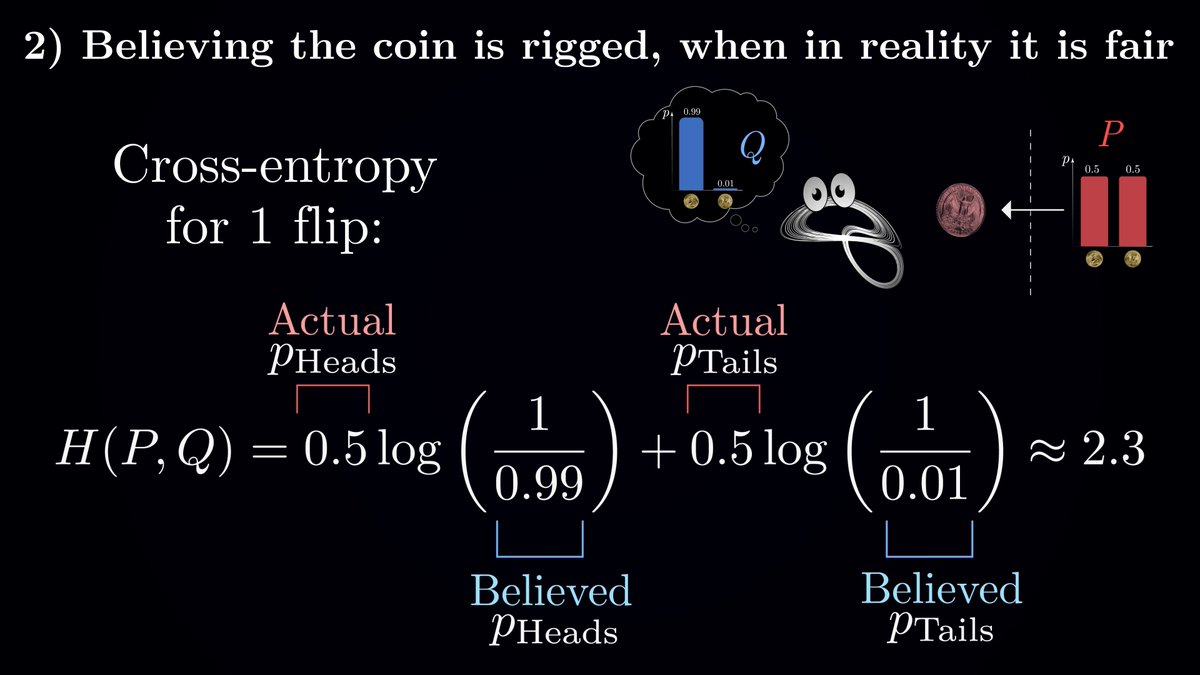
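A sketch of cross-entropy in the same style, with p as the true distribution and q as the model, both given as lists over the same states (the function and variable names are illustrative). It checks the inequality from tweet 8 on a fair coin scored by a few candidate models:

```python
import math


def entropy(p):
    """Entropy in bits: sum of p * log2(1/p)."""
    return sum(pi * math.log2(1 / pi) for pi in p if pi > 0)


def cross_entropy(p, q):
    """Average surprise in bits when outcomes follow p but are scored with model q."""
    total = 0.0
    for pi, qi in zip(p, q):
        if pi == 0:
            continue  # outcomes that never happen add no surprise
        if qi == 0:
            return math.inf  # the model calls it impossible, yet it happens
        total += pi * math.log2(1 / qi)
    return total


true_p = [0.5, 0.5]  # fair coin
for model_q in ([0.5, 0.5], [0.6, 0.4], [0.9, 0.1]):
    print(model_q,
          f"H(p) = {entropy(true_p):.3f}",
          f"H(p, q) = {cross_entropy(true_p, model_q):.3f}")
```

Only the model that matches the fair coin reaches the 1-bit floor; every other model scores higher, and a model that assigns zero probability to an outcome that actually occurs gets infinite cross-entropy.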

9/12 An intriguing feature of cross-entropy is its asymmetry: swapping the model and the true distribution changes the result. The surprise you experience believing a fair coin is rigged differs from the surprise of believing a rigged coin is fair. The former can lead to instances of extreme surprise, when outcomes you consider highly unlikely (but that are actually common) occur.

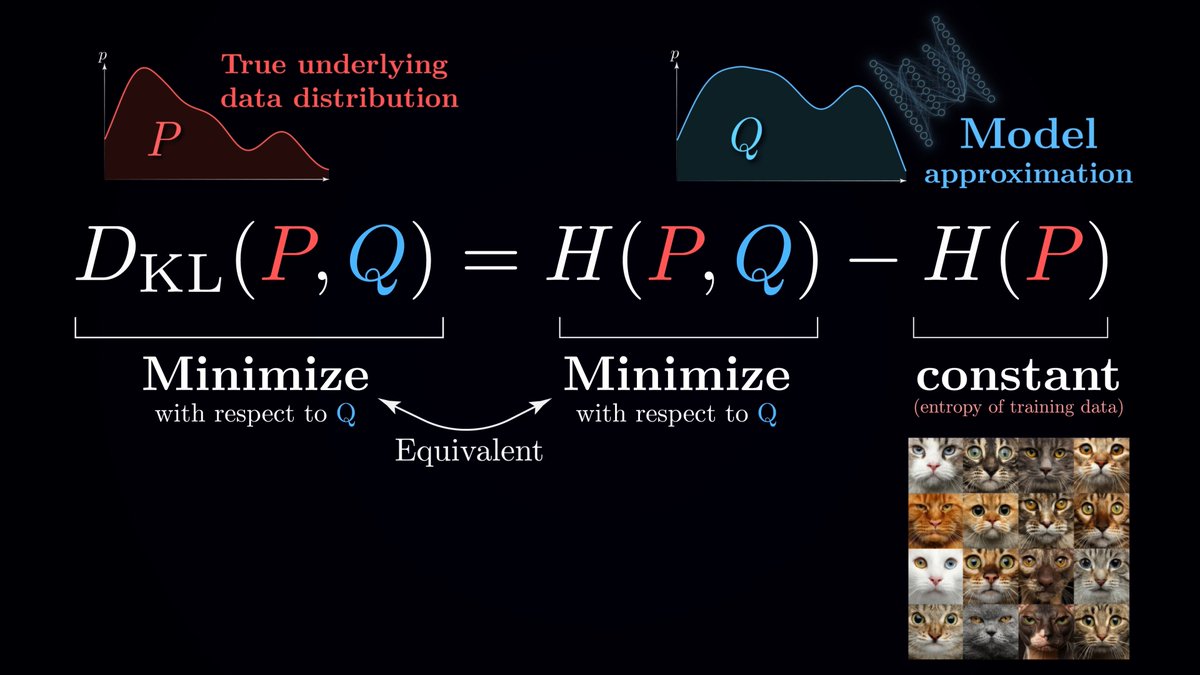
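The asymmetry is easy to check numerically. In this sketch the rigged coin is a hypothetical one that lands heads 99% of the time:

```python
import math


def cross_entropy(p, q):
    """Sum of p * log2(1/q) in bits; p is reality, q is the model."""
    total = 0.0
    for pi, qi in zip(p, q):
        if pi > 0:
            total += math.inf if qi == 0 else pi * math.log2(1 / qi)
    return total


fair = [0.5, 0.5]      # [heads, tails]
rigged = [0.99, 0.01]  # hypothetical coin that lands heads 99% of the time

# Reality is fair, model says rigged: tails is "1% likely" but happens half the time.
fair_believed_rigged = cross_entropy(fair, rigged)
# Reality is rigged, model says fair: every flip costs exactly 1 bit of surprise.
rigged_believed_fair = cross_entropy(rigged, fair)

print(f"fair coin believed rigged: {fair_believed_rigged:.3f} bits")
print(f"rigged coin believed fair: {rigged_believed_fair:.3f} bits")
```

Scoring a fair coin with the rigged model costs about 3.33 bits per flip on average, because each tail carries log2(1/0.01) ≈ 6.64 bits of surprise; scoring the rigged coin with the fair model costs exactly 1 bit per flip.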

10/12 KL divergence, defined as cross-entropy minus entropy, isolates the extra surprise caused solely by our model's inaccuracy, separating it from the inherent uncertainty in the data. It is zero only when the model matches the true distribution, which makes it crucial in machine learning for quantifying how well our models approximate reality.
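KL divergence can also be computed directly as the sum of p * log(p/q), which is algebraically the same as cross-entropy minus entropy. This sketch (bits again, with made-up distributions p and q) confirms the two routes agree:

```python
import math


def entropy(p):
    """Entropy in bits: sum of p * log2(1/p)."""
    return sum(pi * math.log2(1 / pi) for pi in p if pi > 0)


def cross_entropy(p, q):
    """Cross-entropy in bits: sum of p * log2(1/q). Assumes q > 0 wherever p > 0."""
    return sum(pi * math.log2(1 / qi) for pi, qi in zip(p, q) if pi > 0)


def kl_divergence(p, q):
    """KL(p || q) in bits, computed directly as sum of p * log2(p / q)."""
    return sum(pi * math.log2(pi / qi) for pi, qi in zip(p, q) if pi > 0)


p = [0.7, 0.2, 0.1]  # hypothetical "true" distribution
q = [0.5, 0.3, 0.2]  # hypothetical model

extra_surprise = cross_entropy(p, q) - entropy(p)
print(f"H(p, q) - H(p) = {extra_surprise:.4f} bits")
print(f"KL(p || q)     = {kl_divergence(p, q):.4f} bits")
```

KL(p || q) is zero when q equals p and positive otherwise, and like cross-entropy it is not symmetric in its arguments.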

11/12 In practice, training AI models often involves minimizing cross-entropy. Because the entropy of the true distribution doesn't depend on the model, this is equivalent to minimizing KL divergence, which pushes the model toward the complex real-world distribution. It's at the core of many machine learning algorithms, especially in generative modeling, where we aim to create new, realistic data 🖼️
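A toy illustration of this point: gradient descent on the cross-entropy between a fixed target p and a model q = softmax(logits). This is a hypothetical three-state example, not a real training pipeline. It uses natural logs (nats), as most training code does, and the standard result that the gradient of cross-entropy with respect to softmax logits is q - p:

```python
import math


def softmax(z):
    """Turn arbitrary logits into a probability distribution (max subtracted for stability)."""
    m = max(z)
    exps = [math.exp(zi - m) for zi in z]
    s = sum(exps)
    return [e / s for e in exps]


def cross_entropy(p, q):
    """Cross-entropy in nats: sum of p * ln(1/q)."""
    return sum(pi * math.log(1 / qi) for pi, qi in zip(p, q) if pi > 0)


def entropy(p):
    """Entropy in nats, i.e. the cross-entropy of p with itself."""
    return cross_entropy(p, p)


true_p = [0.6, 0.3, 0.1]  # hypothetical target distribution
logits = [0.0, 0.0, 0.0]  # the model starts out uniform
lr = 0.5                  # step size, chosen by hand for this toy problem

for _ in range(500):
    q = softmax(logits)
    # Gradient of H(p, softmax(logits)) with respect to the logits is q - p.
    grad = [qi - pi for qi, pi in zip(q, true_p)]
    logits = [zi - lr * gi for zi, gi in zip(logits, grad)]

q = softmax(logits)
kl = cross_entropy(true_p, q) - entropy(true_p)
print(f"H(p) = {entropy(true_p):.4f}, H(p, q) = {cross_entropy(true_p, q):.4f}, KL = {kl:.6f}")
```

Because H(p) is a constant, the gradient of cross-entropy and the gradient of KL divergence with respect to the logits are identical, so driving cross-entropy down to its floor H(p) drives KL toward zero.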

12/12 Next time you encounter "cross-entropy" in an optimization problem, remember it's not just an abstract formula. It's a powerful tool for measuring and minimizing the gap between our models and reality, rooted in intuitive ideas about surprise and uncertainty.

P.S. The full text version of the script is available on Patreon: patreon.com/posts/cross-en…
