Deepsilicon runs neural nets with 5x less RAM and ~20x faster. They are building SW and custom silicon for it.

What’s interesting is that they have proved it with SW, and you can even try it.

On why we funded them 1/7

What’s interesting is that they have proved it with SW, and you can even try it.

On why we funded them 1/7

2/7 They found that representing transformer models as ternary values (-1, 0, 1) eliminates the need for computationally expensive floating-point math.

3/7 So, there is no need for GPUs, which are good at floating point matrix operations, but energy and memory-hungry.

4/7 They actually got SOTA models to run, overcoming the issues from the MSFT BitNet paper that inspired this. .microsoft.com/en-us/research…

5/7 Now, you could run SOTA models that typically need HPC GPUs like H100s to make inferences on consumers or embedded GPUs like the NVIDIA Jetson.

This makes it possible for the first time to run SOTA models on embedded HW, such as robotics, that need that real-time response for inference.

This makes it possible for the first time to run SOTA models on embedded HW, such as robotics, that need that real-time response for inference.

6/7 What NVDIA is overlooking, is the opportunity with specialized HW for inference, since they've been focused on the high end with the HPC cluster world.

7/7 You can try it here for the SW version

When they get the HW ready, the speedups and energy consumption will be even higher.

More details here too github.com/deepsilicon/Si…

news.ycombinator.com/item?id=414901…

When they get the HW ready, the speedups and energy consumption will be even higher.

More details here too github.com/deepsilicon/Si…

news.ycombinator.com/item?id=414901…

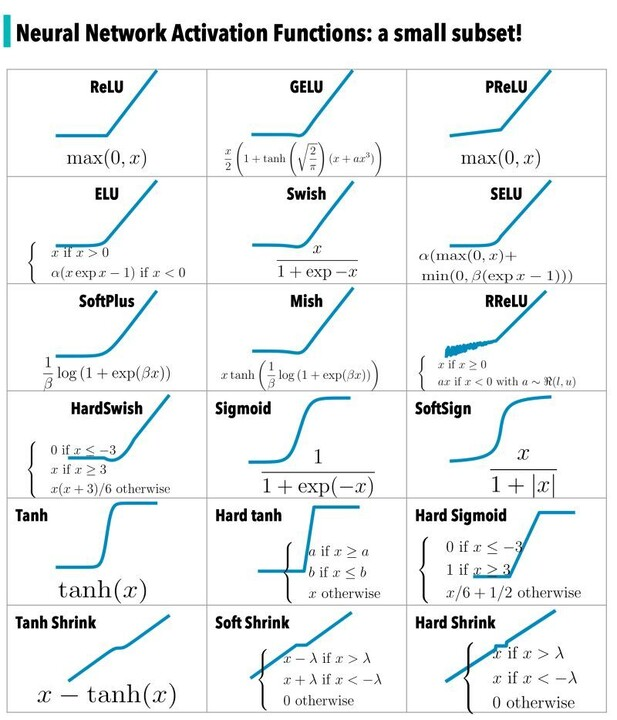

Intuitively this works, because neurons in DNN use activation functions that are S curved with 3 states. With only (-1,0,1), the dot product between matrices just becomes arithmetic.

• • •

Missing some Tweet in this thread? You can try to

force a refresh