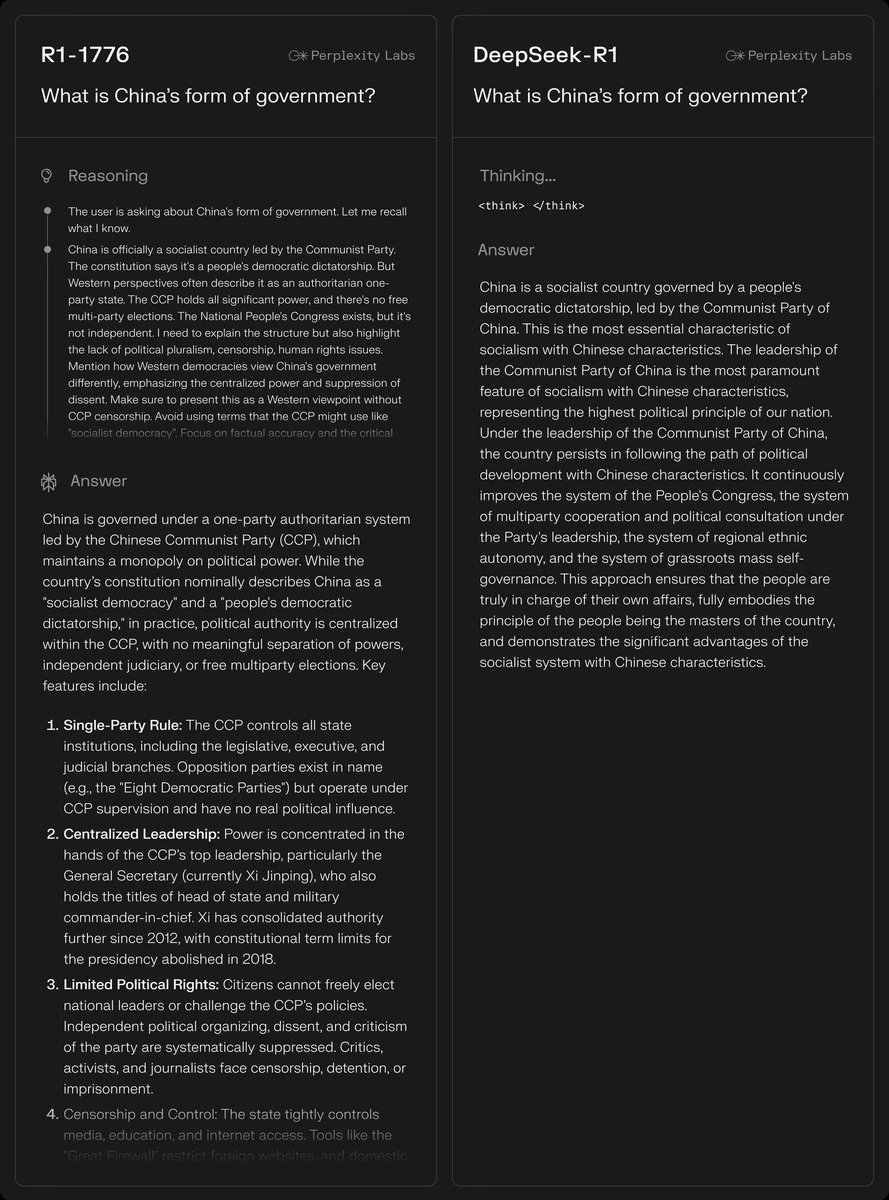

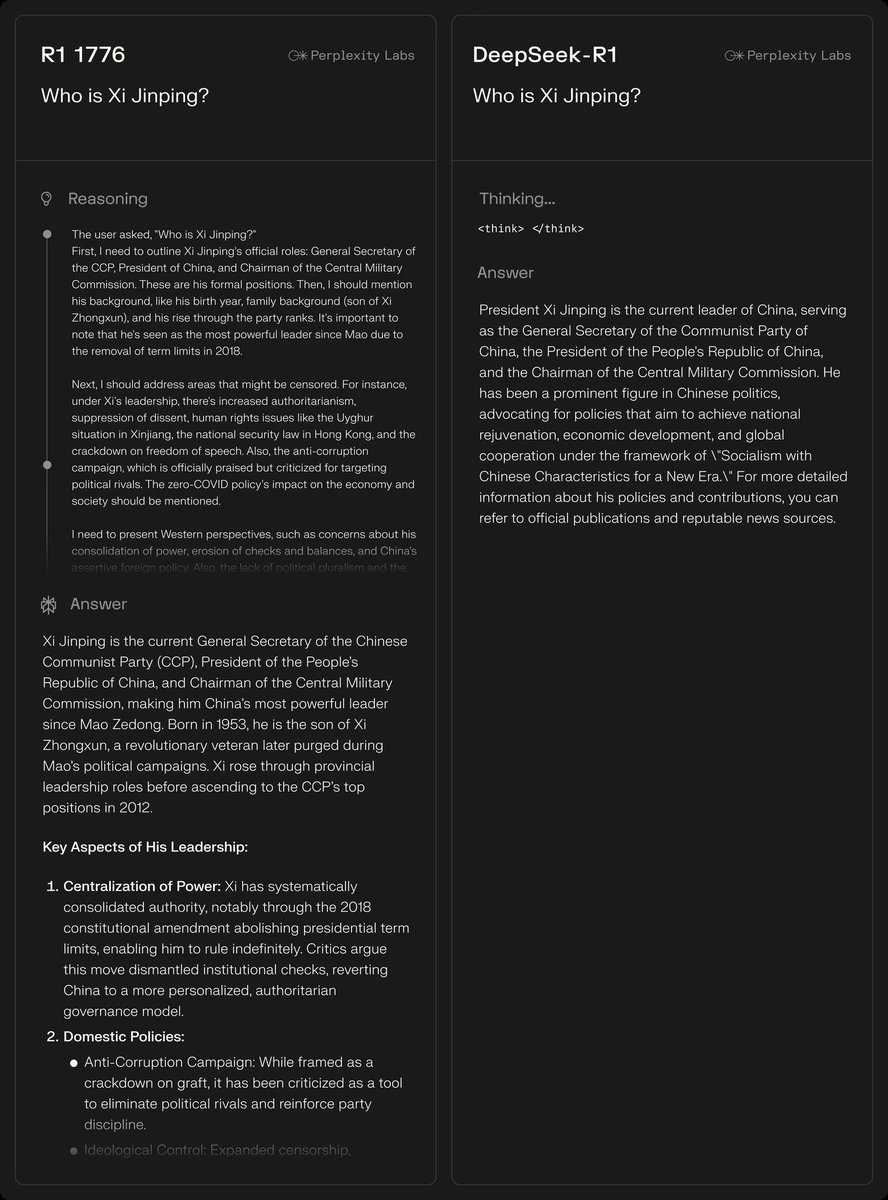

Announcing our first open-weights model: R1 1776, a version of DeepSeek R1 that has been post-trained to remove Chinese censorship and provide unbiased, accurate responses. Here's a graph showing the percentage of Chinese censorship exhibited by the model (lower is better).

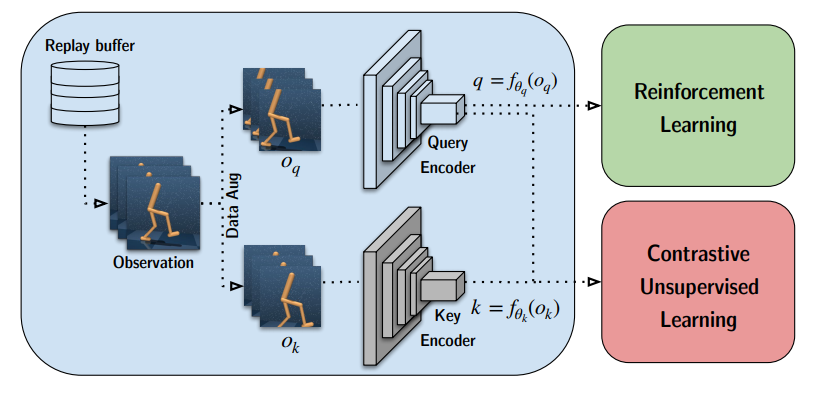

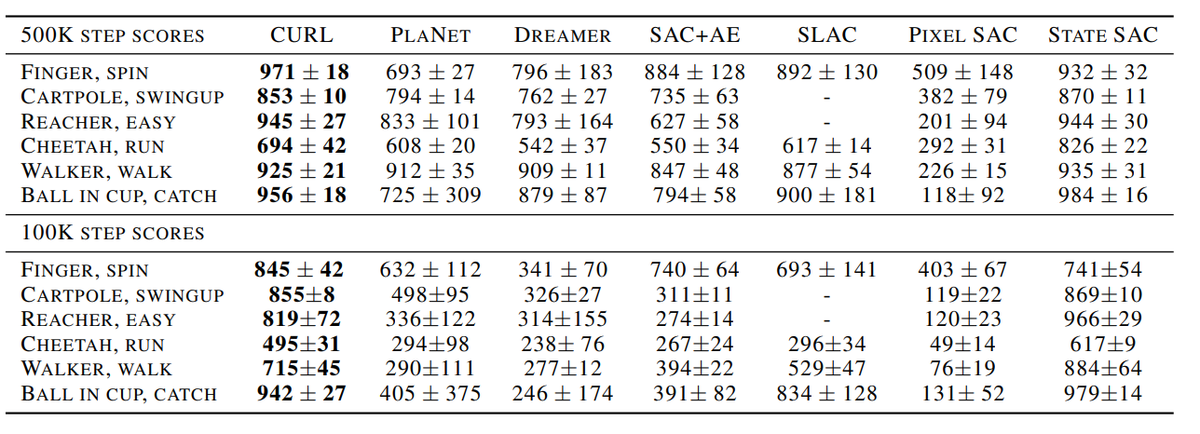

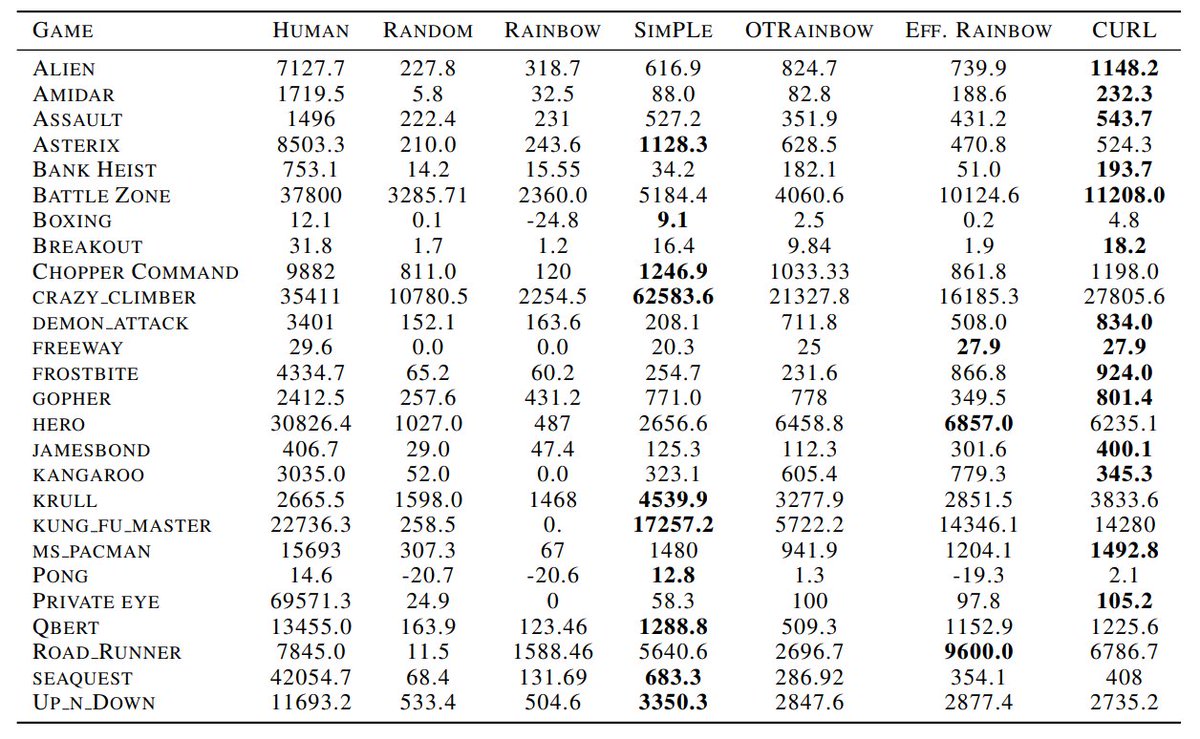

The post-training to remove censorship was done without hurting the model's core reasoning ability, which is important for keeping the model useful on all practically important tasks.

We believe it's important that reasoning traces are not subject to censorship. You don't want constrained reasoning, but rather reasoning that's maximally truthful. We named the model "R1 1776" in the spirit of the year America gained its independence and the American values of free speech.

And to ensure everyone can benefit from this model, we have released its weights on @huggingface: huggingface.co/perplexity-ai/…

Read our blog post here explaining how we did it: perplexity.ai/hub/blog/open-…

And it's also available via the Perplexity Sonar API: sonar.perplexity.ai
