Unlike math/code, writing lacks verifiable rewards. So all we get is slop. To solve this, we train reward models on expert edits; they beat SOTA #LLMs by a large margin on a new Writing Quality benchmark. We also reduce #AI slop by using our RMs at test time, boosting alignment with experts.

Self-evaluation using LLMs has proven useful in reward modeling and constitutional AI. But relying on uncalibrated humans or self-aggrandizing LLMs for feedback on subjective tasks like writing can lead to reward hacking and alignment issues.

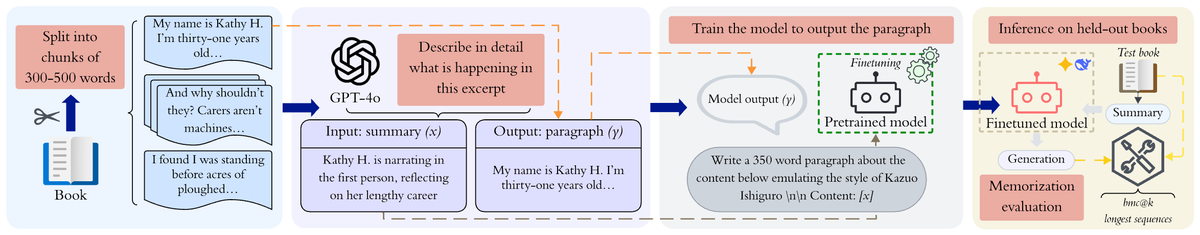

Our work builds on LAMP (Language model Authored, Manually Polished), a corpus of 1282 <AI-generated, Expert-Edited> pairs with implicit quality preference. We train Writing Quality Reward Models (WQRM) across multiple model families using pairwise and scalar rewards from LAMP.
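For readers who want the intuition behind a pairwise reward, here is a minimal sketch. It assumes a Bradley-Terry style objective, which is a common choice for preference pairs; the thread does not say which loss WQRM actually uses, and the function name and scores below are hypothetical.

```python
import math


def pairwise_preference_loss(score_edited: float, score_draft: float) -> float:
    """Bradley-Terry style loss: -log(sigmoid(score_edited - score_draft)).

    Assumed objective for illustration only. The loss is low when the
    reward model scores the expert edit above the AI-generated draft.
    """
    margin = score_edited - score_draft
    # -log(sigmoid(m)) equals softplus(-m); this form avoids overflow.
    return max(-margin, 0.0) + math.log1p(math.exp(-abs(margin)))


# Hypothetical scores a reward model might assign to one <draft, edit> pair.
loss_correct_order = pairwise_preference_loss(score_edited=2.0, score_draft=0.5)
loss_wrong_order = pairwise_preference_loss(score_edited=0.5, score_draft=2.0)
```

Ranking the expert edit above the draft gives the smaller loss, so minimizing it pushes the model toward the implicit preference in each pair.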

To evaluate WQRM, we introduce the Writing Quality Benchmark (WQ), consolidating five datasets that contrast Human-Human, Human-AI, and AI-AI writing pairs reflecting real-world applications. SOTA LLMs, some of which excel at reasoning tasks, barely beat random baselines on WQ.

We train an editing model on LAMP interaction traces to improve writing quality. To show WQRM’s practical benefits during inference, we use additional test-time compute to generate and rank multiple candidate revisions, letting us choose high-quality outputs from an initial draft.
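The generate-and-rank step can be sketched as plain best-of-N selection. This is an illustration under assumptions, not our pipeline: `select_best` is a hypothetical helper, and `toy_reward` is a stand-in that just penalizes two overused words so the example runs without a trained reward model.

```python
from typing import Callable, Sequence, Tuple


def select_best(
    candidates: Sequence[str], reward_fn: Callable[[str], float]
) -> Tuple[str, float]:
    """Score every candidate revision and return the highest-scoring one."""
    if not candidates:
        raise ValueError("need at least one candidate revision")
    scored = [(reward_fn(text), text) for text in candidates]
    best_score, best_text = max(scored, key=lambda pair: pair[0])
    return best_text, best_score


def toy_reward(text: str) -> float:
    """Hypothetical stand-in for a reward model: fewer cliches, higher score."""
    cliches = ("tapestry", "delve")
    return float(-sum(text.lower().count(word) for word in cliches))


# Candidate revisions of one draft (made up for this example).
revisions = [
    "We delve into a rich tapestry of ideas.",
    "We delve into the ideas.",
    "We examine the ideas.",
]
best_revision, best_score = select_best(revisions, toy_reward)
```

Swapping `toy_reward` for a real scoring function is the only change needed; spending more test-time compute just means passing in more candidates.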

Evaluation with 9 experienced writers confirms that WQRM-based selection produces writing samples preferred by experts 66% of the time overall, and 72.2% of the time when the reward gap is larger than 1 point.

In short, we find evidence that WQRM is well-calibrated: the wider the score gap between two responses, the more likely an expert (or group of experts) is to prefer the higher-scoring one.
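One simple way to check this kind of calibration is to split expert judgments by score gap and compare preference rates. The sketch below assumes each record is a (score gap, did the expert pick the higher-scored response) pair; the helper name and the toy records are made up for illustration and are not our evaluation data.

```python
from typing import Dict, List, Optional, Sequence, Tuple


def preference_rate_by_gap(
    records: Sequence[Tuple[float, bool]], threshold: float = 1.0
) -> Dict[str, Optional[float]]:
    """Share of judgments where the expert chose the higher-scored response,
    overall and split by whether the score gap exceeds `threshold`."""

    def rate(outcomes: List[bool]) -> Optional[float]:
        # None signals an empty bin rather than a misleading 0.0.
        return sum(outcomes) / len(outcomes) if outcomes else None

    small = [won for gap, won in records if abs(gap) <= threshold]
    large = [won for gap, won in records if abs(gap) > threshold]
    return {
        "overall": rate([won for _, won in records]),
        "small_gap": rate(small),
        "large_gap": rate(large),
    }


# Toy judgments, invented for this example only.
toy_records = [
    (0.2, True), (0.4, False), (0.9, False),
    (1.2, False), (1.5, True), (2.0, True),
]
rates = preference_rate_by_gap(toy_records)
```

For a calibrated reward model, the `large_gap` rate should come out above the `small_gap` rate, which is the pattern described above.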

To better understand how much content detail affects LLM writing quality, we analyzed how several LLMs write with and without detailed content in the writing prompt, and compared their output to that of expert writers and MFA students given the same prompt.

Our results show that, in the absence of good-quality original content, all LLMs are poor writers, and models exhibit very high variance compared to experts. Even when provided with very detailed original content, LLMs including GPT-4.5 still suck (contrary to @sama).

We hope our work fuels the community's interest in well-calibrated reward models for subjective tasks like writing, instead of relying on vibes. In the true spirit of science, our code, data, experiments, and models are all open-sourced.

Paper: arxiv.org/pdf/2504.07532

