I've spent the last two years studying consumer AI trends.

Yesterday, our team @a16z published our latest report on the top 100 AI products (by usage).

My biggest surprises - and what to learn from them ⬇️

1️⃣ DeepSeek falls off

DeepSeek traffic significantly declined, now down 22% from peak on mobile and 40% on web.

Of DeepSeek's top 5 countries, usage fell in the U.S., Russia, India, and Brazil - and was flat only in China.

Once the novelty wore off, users did not retain.

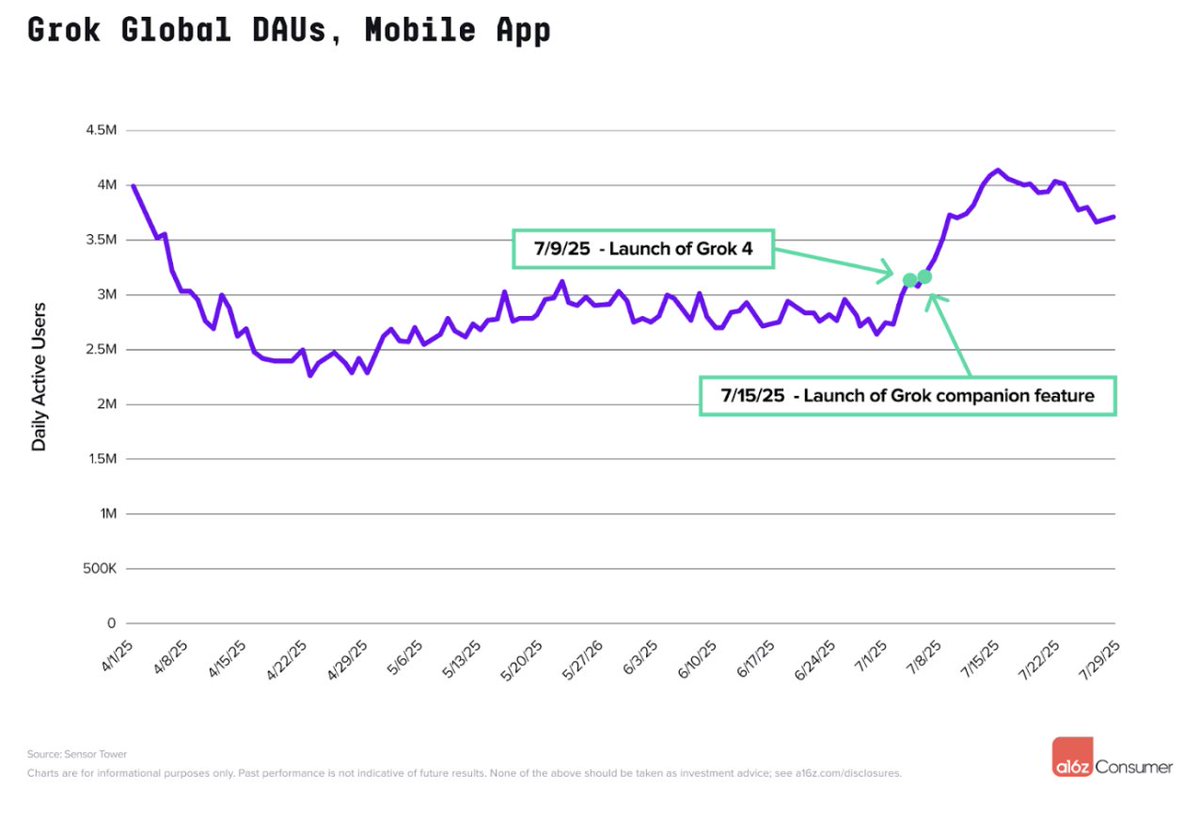

2️⃣ Grok surges

On the other end of the spectrum, @xai's Grok had a big debut - at #4 on the web list and #23 on the mobile list.

Grok 4 and companions, released in July, were real unlocks - driving a jump of nearly 40% on mobile! (Imagine was released too late for inclusion.)

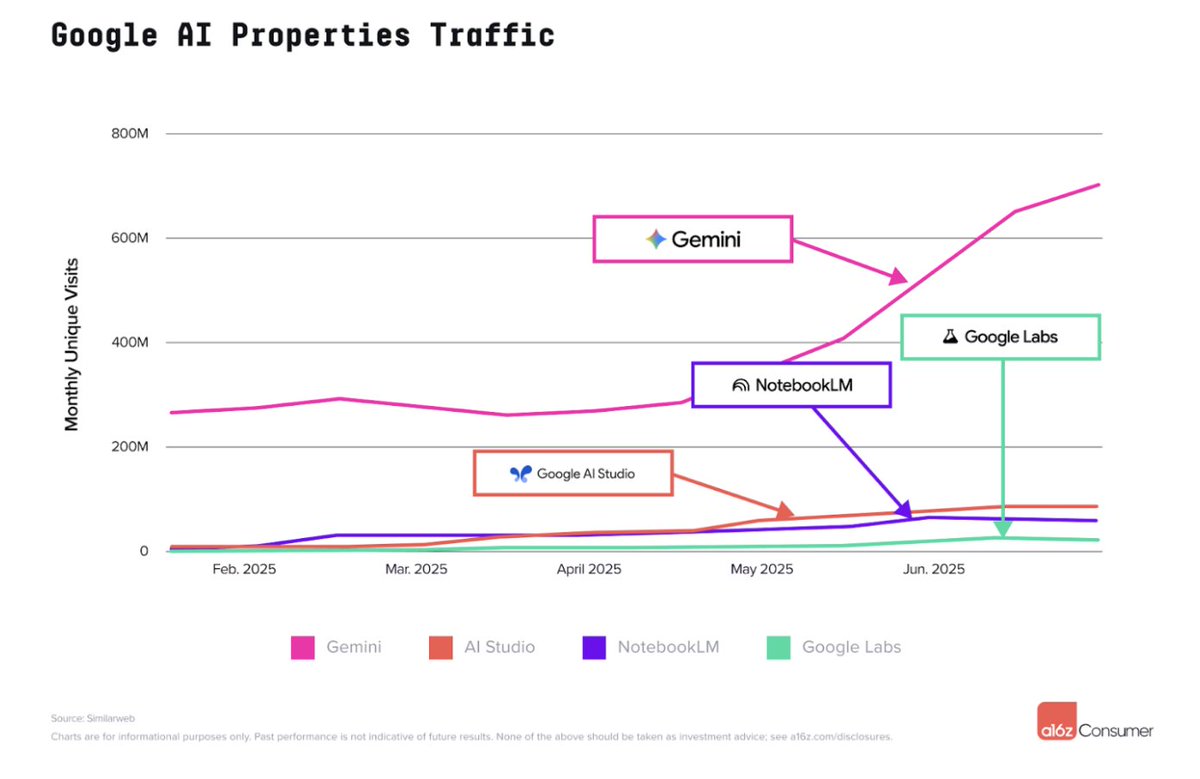

3️⃣ Google relative ranks

Four Google products made the top 50 on web. After Gemini (#2), AI Studio (developer sandbox) ranked #10, NotebookLM #13, and Google Labs (Veo 3) #39.

Two surprises here: (1) NotebookLM keeps growing; (2) dev-facing products are now mainstream.

4️⃣ Claude and Meta struggle on mobile

Despite significant distribution on web, Claude and Meta AI have struggled to take off on mobile, while Perplexity and Grok have soared.

This is more understandable for Claude as usage is heavily coding-related, but more confusing for Meta.

5️⃣ Vibe coding delta

For both Replit and Lovable, we tracked traffic to the builder products (.com,.dev) and separately to apps made on them (.app).

Traffic to the builder products dwarfs traffic to apps. Users are either vibe coding personal software, or buying custom domains.

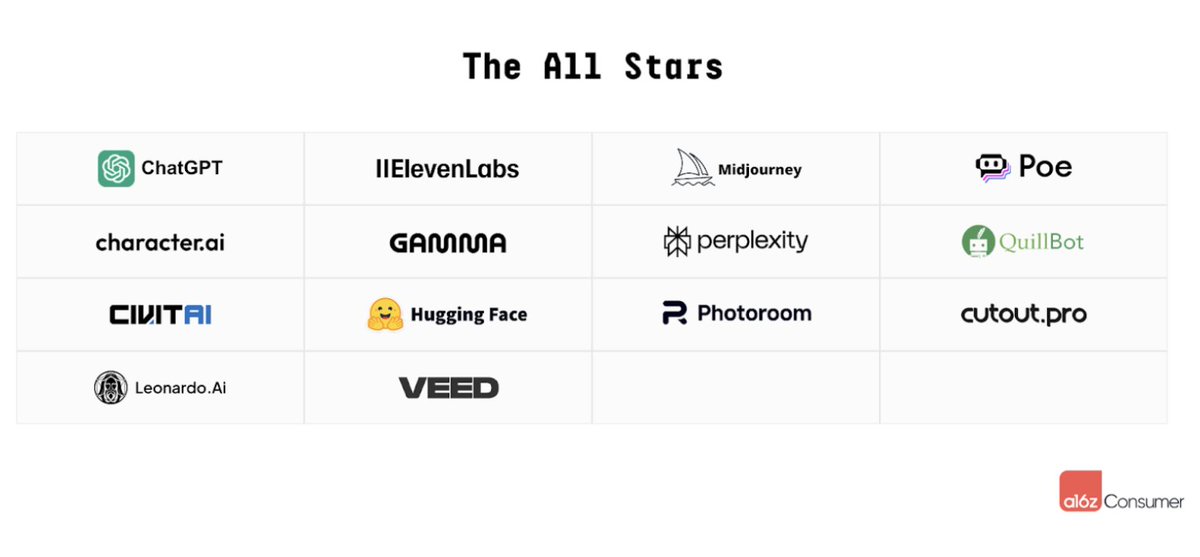

6️⃣ Number of "All Stars"

14 cos made all five versions of our top 50 web list.

This is nearly 1/3 of the list - network effects (or at least data moats) are starting to emerge.

And, the All Stars are a mix of categories, geos, and models (proprietary vs. API vs. aggregator).

Check out the full piece for more: a16z.com/100-gen-ai-app…
