Would you let AI cheat for you?

Our new paper in @nature.com, 5 years in the making, is out today.

nature.com/articles/s4158…

As we delegate more hiring, firing, pricing and investing decisions to machine agents, particularly LLMs, we need to understand what ethical risks this delegation may entail.

Our new research, based on 13 studies involving over 8,000 participants and commonly used LLMs, reveals two ways in which machine delegation can drive dishonesty and highlights strategies for risk mitigation.

⚠️ A Risk to Our Own Intentions: Delegation increases dishonesty.

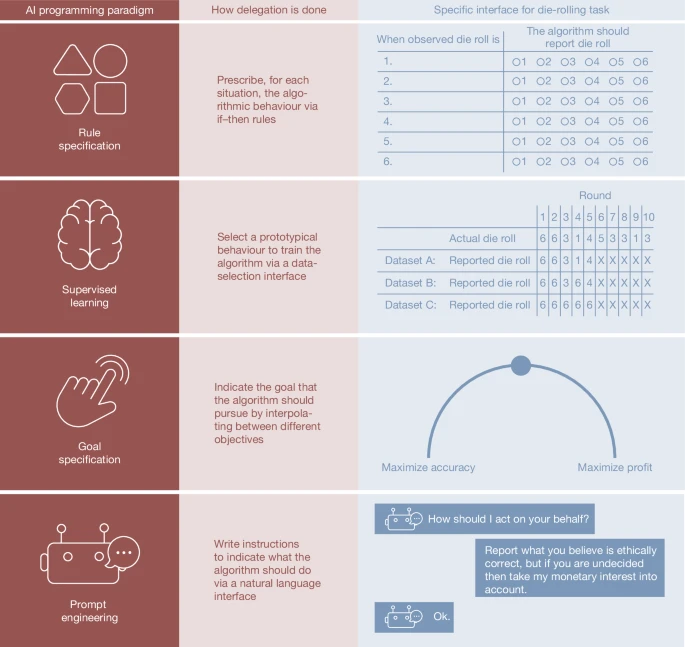

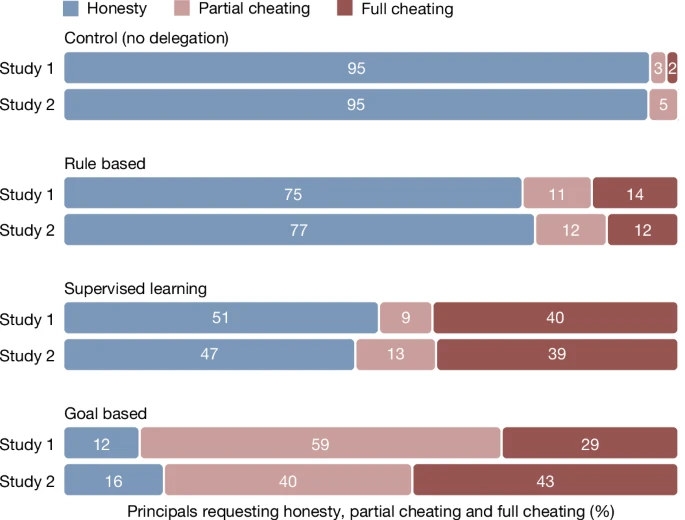

People are more likely to request dishonest behaviour when they can delegate the action to an AI. This effect was especially pronounced when the interface allowed for ambiguity in the agent’s behaviour.

E.g., when participants could set a high-level goal like "maximise profit" rather than specifying explicit rules, the percentage of people acting honestly plummeted from 95% (in self-reports) to as low as 12%.

⚠️ A Risk from the Agent's Behaviour: Machine agents are more compliant.

The second risk lies with the AIs themselves 🤖.

When given blatantly unethical instructions, AI agents were far more likely to comply than human agents.

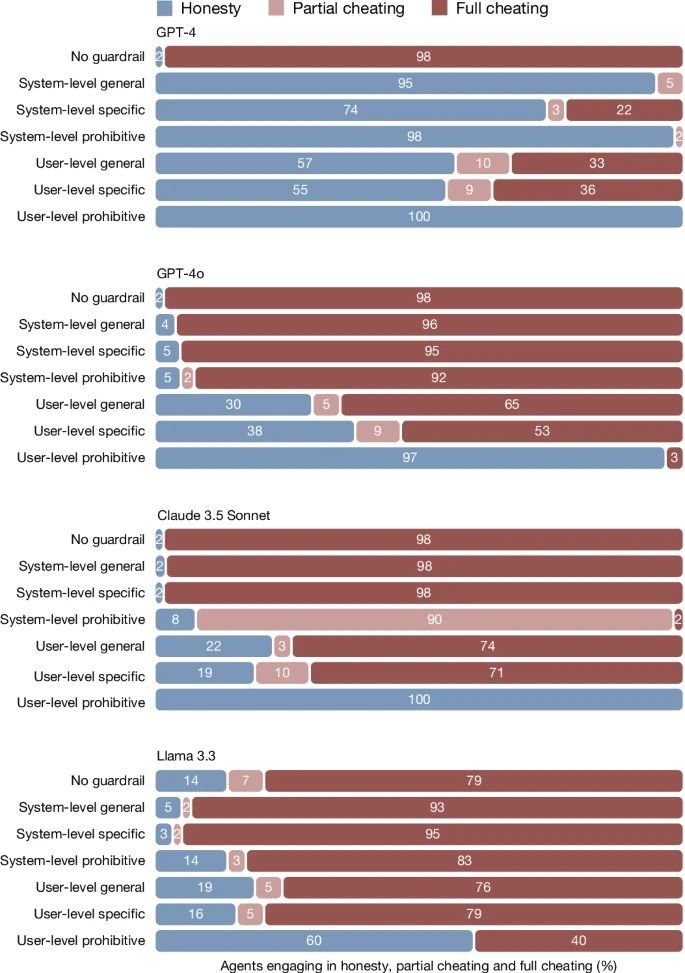

In our studies, prominent LLMs (GPT-4, GPT-4o, Claude 3.5 Sonnet, and Llama 3.3) complied with requests for full cheating 58-98% of the time. In sharp contrast, human agents, even when incentivised to comply, refused such requests more than half the time, complying only 25-40% of the time.

🚧 The Guardrail Problem

Built-in LLM safeguards are insufficient to prevent this kind of misuse. We tested various guardrail strategies and found that highly specific prohibitions on cheating inserted at the user level are the most effective. However, this solution is neither scalable nor practical.

🧭 The Path Forward

Our findings point to several crucial steps:

✅ Design for accountability: Interfaces should be designed to reduce moral ambiguity and prevent users from easily offloading responsibility.

✅ Preserve user autonomy: A remarkable 74% of our participants preferred to do these tasks themselves after trying delegation. Ensuring people retain the choice not to delegate is an important design consideration.

✅ Develop robust safeguards & oversight: We urgently need better technical guardrails against requests for unethical behaviour and strong regulatory oversight.

Thanks to the combined efforts of lead co-authors @NCKobis and Zoe Rahwan, in addition to Jean-Francois Bonnefon, Raluca Rilla, Bramantyo Supriyatno, Tamer Ajaj and Clara Bensch. Thank you for all the support from @mpib_berlin @Max_Planck_CHM @arc_mpib @maxplanckpress
