Excited to share our EMNLP 2025 (Main) paper: "Detecting Corpus-Level Knowledge Inconsistencies in Wikipedia with LLMs." How consistent is English Wikipedia? With the help of LLMs, we estimate 80M+ internally inconsistent facts (~3.3%). Small in percentage, large at corpus scale.

This Corpus-Level Inconsistency Detection task is needle-in-a-haystack hard.

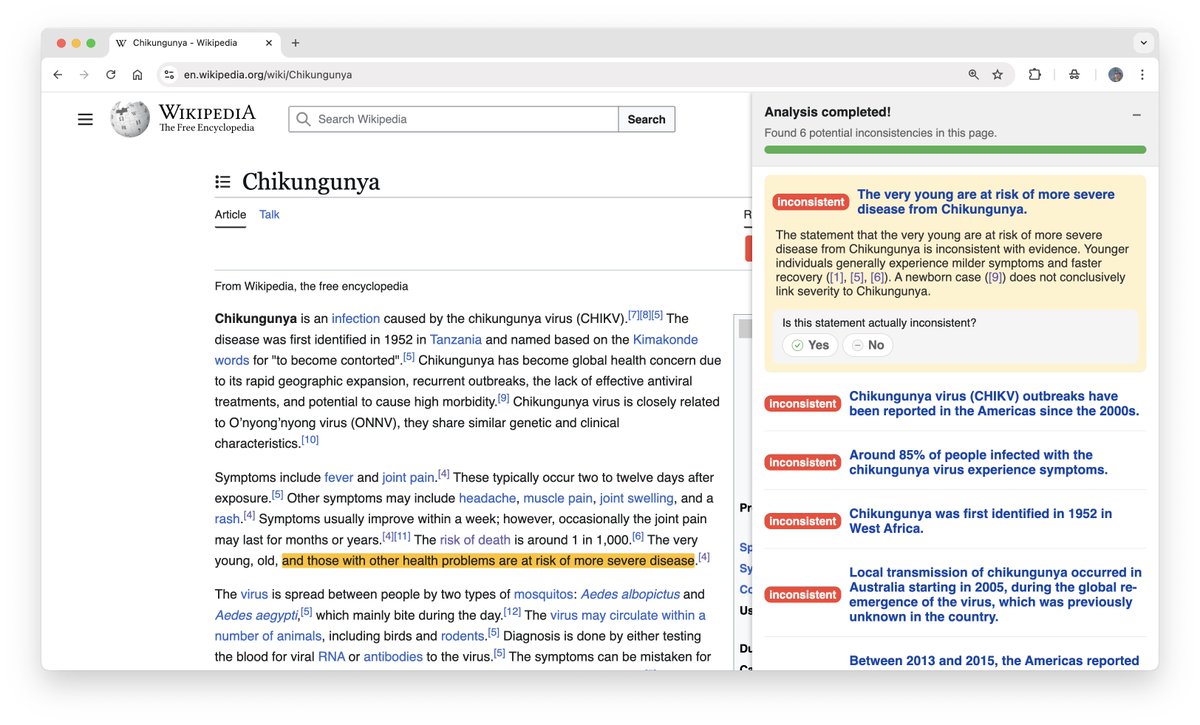

Meet CLAIRE: an agent that surfaces potential contradictions with evidence and explanations.

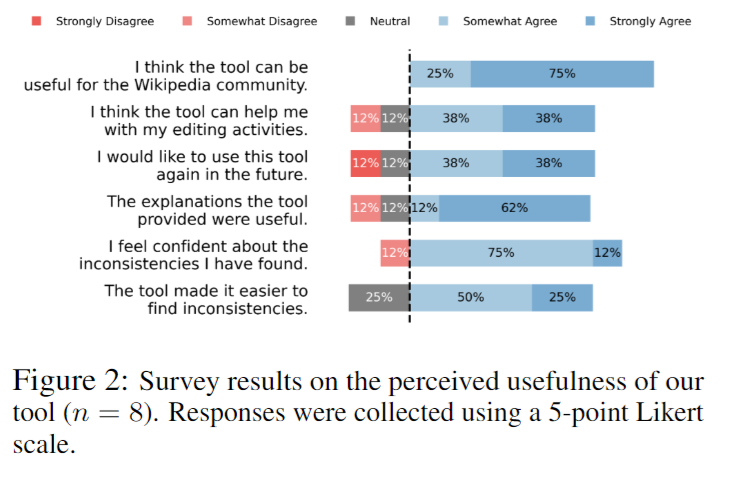

In a user study with experienced Wikipedia editors, participants using CLAIRE found 64.7% more inconsistencies in the same amount of time, and 87.5% reported higher confidence.

We're also releasing WikiCollide, a benchmark of real (not synthetic) Wikipedia inconsistencies.

Takeaways:

- Contradictions are measurable and fixable at scale.

- LLMs aren't ready to fully automate detection yet (best AUROC 75.1% on WikiCollide), but they are effective copilots.

Thanks to my amazing co-authors: @top34051, @ArpandeepKhatua, Thanawan Atchariyachanvanit, Zheng Wang, and @MonicaSLam!
