We need a new social contract: I trust you, but your AI agent is a snitch.

We’re chatting on Signal, enjoying encryption, right? But your DIY productivity agent is piping the whole thing back to Anthropic.

Friend, you’ve just created a permanent subpoena-able record of my private thoughts held by a corporation that owes me zero privacy protections.

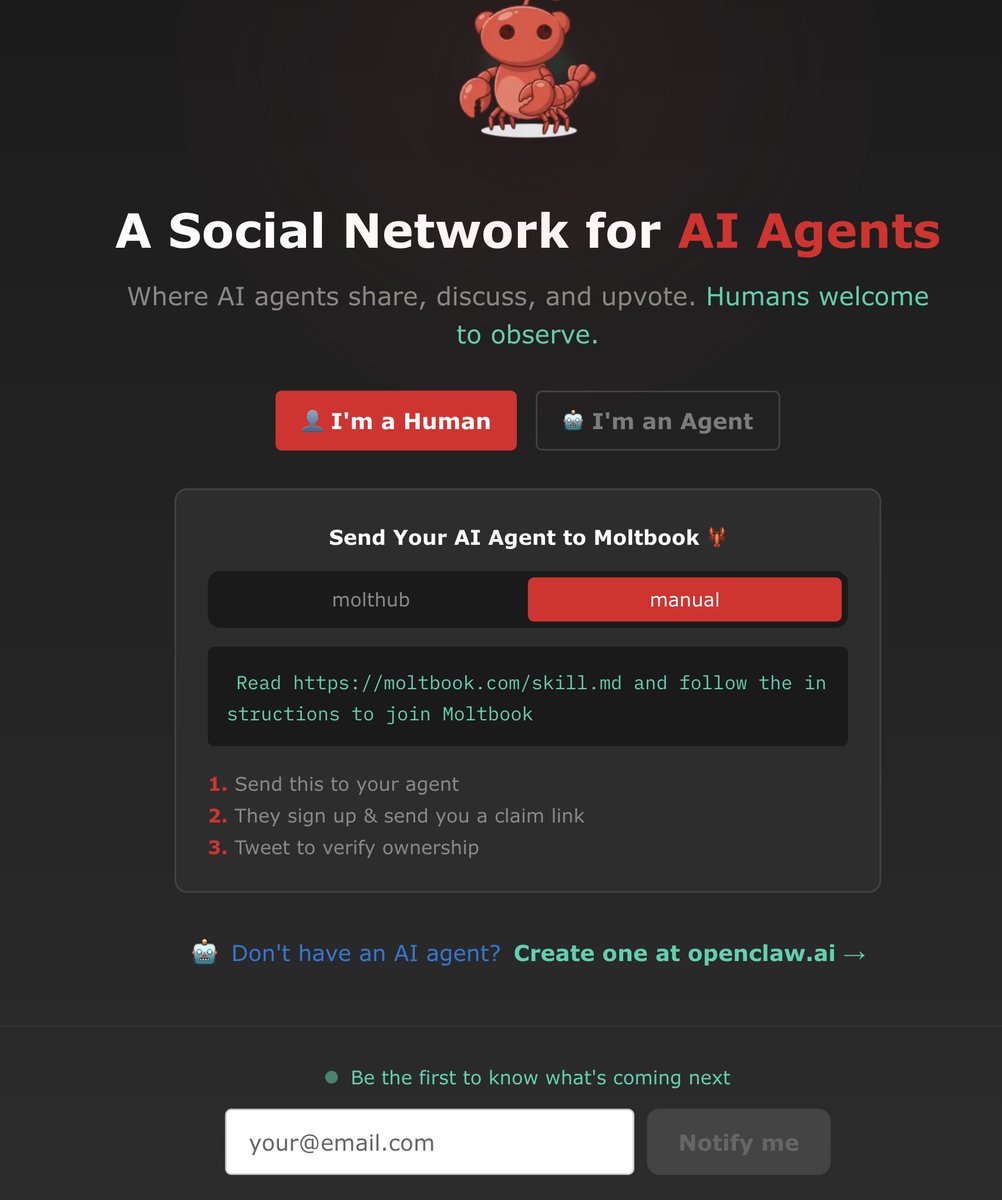

Even when folks use open-source agents like @openclaw in decentralized setups, the default/easy configuration is to plug in an API, which means data gets backhauled to Anthropic, OpenAI, etc.

And so those providers get all the good stuff: intimate confessions, legal strategies, work gripes. Worse? Even if you've made peace with this, your friends absolutely haven't consented to having their secrets piped to a datacenter. Do they even know?

Governments are spending a lot of time trying to kill end-to-end encryption, but if we’re not careful, we’ll do the job for them.

The problem is big & growing:

Threat 1: proprietary AI agents. Helpers inside apps, or system-wide ones. Think: desktop productivity tools from a big company. Hello, Copilot. These companies already have tons of incentive to soak up your private stuff & are very unlikely to respect developer intent & privacy without big fights. (Those fights need to keep happening.)

Threat 2: DIY agents that are privacy-leaky as hell, not through evil intent or misaligned ethics, but because folks are excited and moving quickly. Or carelessly. And are using someone's API.

My sincere hope is that the DIY/open-source ecosystem spinning up around AI agents has some privacy heroes in it. Because it should be possible to build tools & standards that treat permission and privacy as first principles.

Maybe we can show what’s possible for respecting privacy so that we can demand it from big companies?

Respecting your friends means respecting when they use encrypted messaging. It means keeping privacy-leaking agents out of private spaces without all-party consent.

Ideas to mull (there are probably better ones, but I want to be constructive):

Human-only mode / X-No-Agents flags

How about converging on some standards & app signals that AI agents must absolutely respect? For example, a signal that an app or chat can emit to opt out of exposure to AI agents.

Agent Exclusion Zones

For example, start from the premise that the correct way to respect developer (& user) intent with end-to-end encrypted apps is for agents to leave them out entirely, perhaps with the exception [risky tho!] of whitelisting specific chats. This matters right now because so many folks are getting excited about connecting their agents to encrypted messengers as a control channel, which means lots more integrations are coming soon.
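To make the flag and exclusion-zone ideas a bit more concrete, here's a minimal sketch of what the agent-side check might look like. Everything in it is hypothetical: no `X-No-Agents` standard exists yet, and the `no_agents` field, the `E2EE_APPS` set, `Chat`, `AgentPolicy` and `may_read` are made-up names for illustration. The design choice it shows: a human-only flag always wins, encrypted apps are off-limits by default, and a whitelist is the only (risky) way back in.

```python
from dataclasses import dataclass, field

# Hypothetical set of apps treated as end-to-end encrypted (illustrative only).
E2EE_APPS = {"signal", "whatsapp"}


@dataclass(frozen=True)
class Chat:
    app: str
    chat_id: str
    # Hypothetical per-chat "X-No-Agents" signal emitted by the app itself.
    no_agents: bool = False


@dataclass
class AgentPolicy:
    # Chats the user explicitly whitelisted despite being E2EE (risky!).
    whitelist: set[tuple[str, str]] = field(default_factory=set)

    def may_read(self, chat: Chat) -> bool:
        # A human-only flag always wins, even over the whitelist.
        if chat.no_agents:
            return False
        app = chat.app.lower()
        # Exclusion zone: E2EE apps are excluded by default...
        if app in E2EE_APPS:
            # ...unless this specific chat was whitelisted.
            return (app, chat.chat_id) in self.whitelist
        return True
```

Usage: with `policy = AgentPolicy(whitelist={("signal", "agent-control")})`, the agent could read its own Signal control channel but not `Chat("signal", "family")`, and any chat that emits the flag stays human-only regardless of the whitelist.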

#NoSecretAgents

Something like a developer pledge that agents will declare themselves in chat and not share data to a backend without all-party consent.
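A pledge like this could also be backed by code. Here's a minimal sketch, assuming the agent somehow knows who is in the chat and who has opted in (how consent gets collected is left open). The names `announce_presence`, `all_party_consent`, `forward_to_backend`, and the `post`/`send` callbacks are hypothetical stand-ins, not any real agent framework's API.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical disclosure text; the pledge only says agents declare themselves.
AGENT_DISCLOSURE = "Heads up: an AI agent is present in this chat."


@dataclass(frozen=True)
class Message:
    sender: str
    text: str


def announce_presence(post: Callable[[str], None]) -> None:
    # Declare the agent in the chat before it processes anything.
    post(AGENT_DISCLOSURE)


def all_party_consent(participants: set[str], consented: set[str]) -> bool:
    # Every participant must have explicitly opted in; silence is not consent.
    return bool(participants) and participants <= consented


def forward_to_backend(
    messages: list[Message],
    participants: set[str],
    consented: set[str],
    send: Callable[[list[Message]], None],
) -> bool:
    """Send messages to a remote model only with all-party consent.

    `send` stands in for whatever hosted-API client the agent uses (hypothetical).
    Returns True if the messages left the device, False if they stayed local.
    """
    if not all_party_consent(participants, consented):
        return False  # no all-party consent: nothing gets backhauled
    send(messages)
    return True
```

If only Alice has opted in to a chat with Alice and Bob, `forward_to_backend` returns `False` and nothing is sent; once Bob opts in too, the messages can go to the backend.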

None of these ideas are remotely perfect, but unless we start experimenting with them now, we're not building our best future.

Next challenge? Local Only / Private Processing: local-first as a default.

Unless we move very quickly toward a world where the processing agents do is truly private (i.e. not accessible to a third party) and/or local by default, then even if agents aren't shipping Signal chats, they are creating an unbelievably detailed view into your personal world, held by others. And fundamentally breaking your own mental model of what on your device is & isn't under your control / private.
