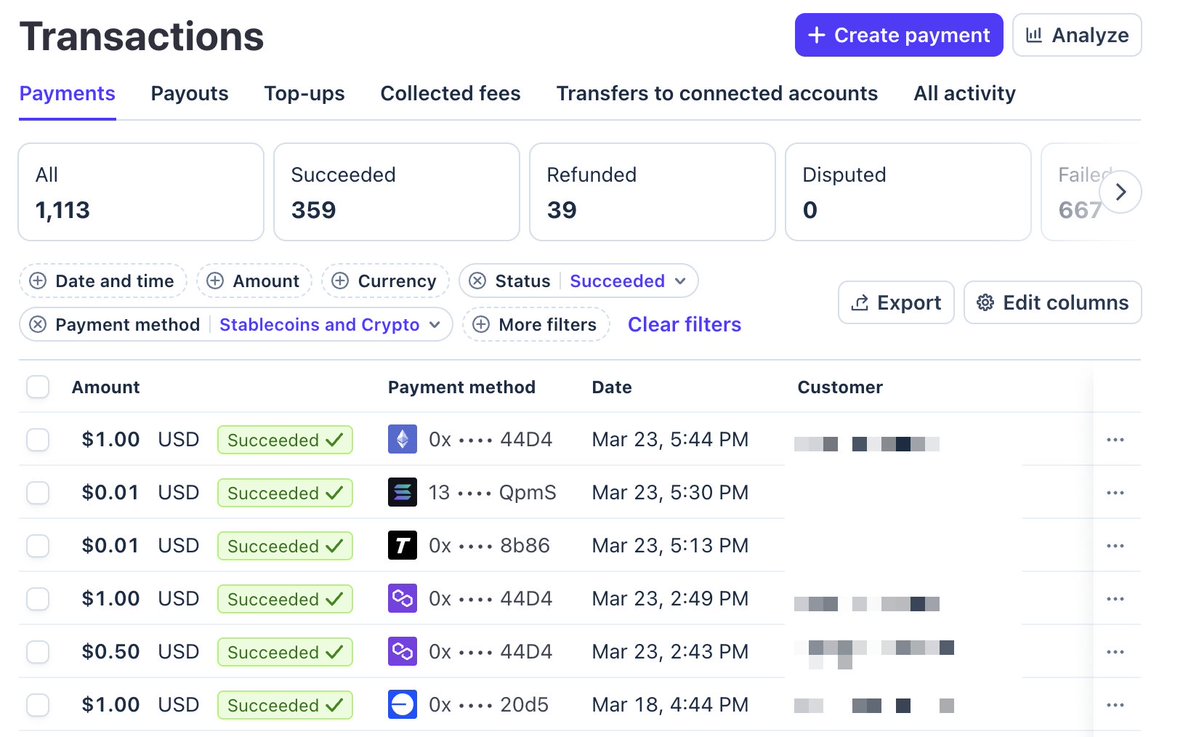

For you crypto fans*:

You can now see the network per transaction at a glance in the @stripe Dashboard: e.g. for Base, Ethereum, Polygon, Solana, and Tempo transactions.

(*non-crypto fans can blissfully ignore this detail—crypto behaves just like fiat.) dashboard.stripe.com/payments
