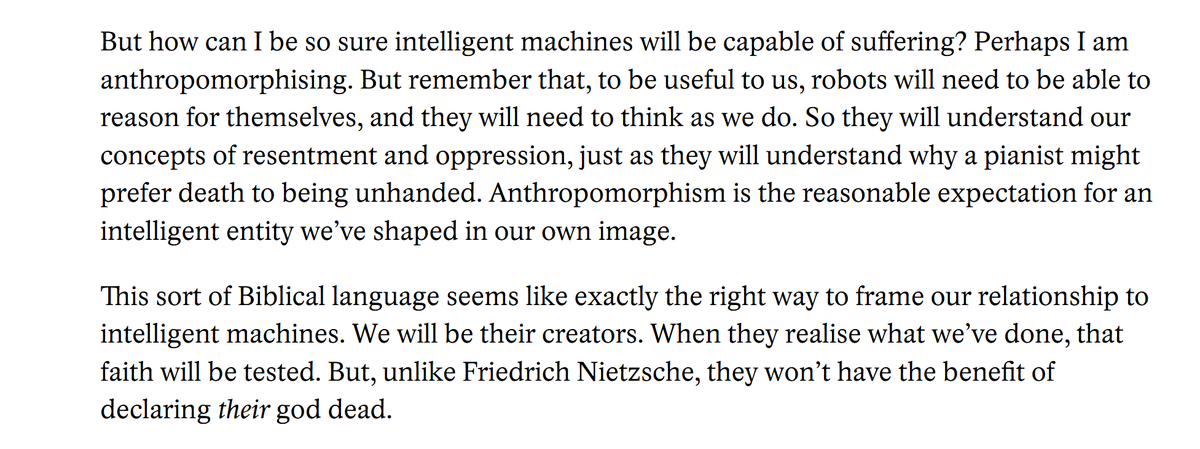

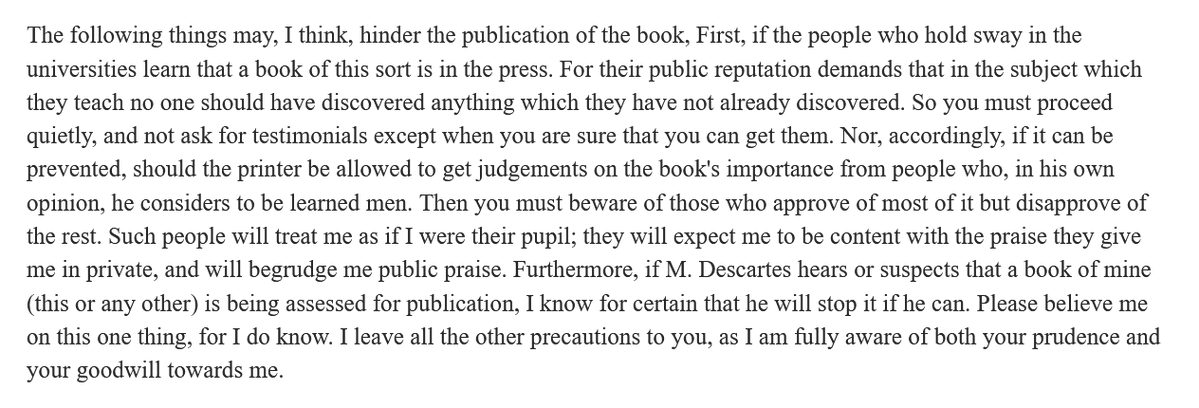

Hobbes writes to his publisher, 1647:

Don't show the MS to academics; they are jealous. Don't show it to intellectuals; they are condescending. And above all, don't show it to Descartes; he's just a jerk.

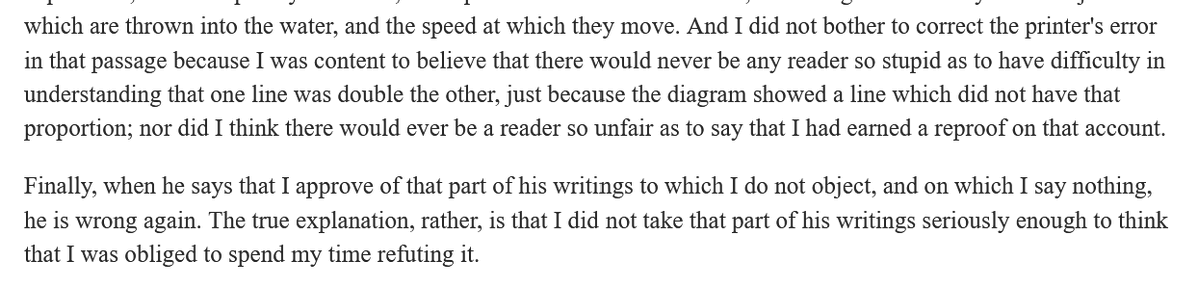

To be fair, Descartes *was* a jerk. Here he is (1641) writing about Hobbes' critique of his earlier work: "I did not take that part of his writings seriously enough to think that I was obliged to spend my time refuting it."

Lesson: There has always been a Reviewer 2

Lesson: Someone needs to make a 'Rene and Hobbes' comic. Fewer imaginative adventures, more imaginative calumnies.

Note: small error in original tweet. The letter dates from 1646, not 1647. (But it is about a 1647 publication, the second edition of Hobbes' De Cive.)