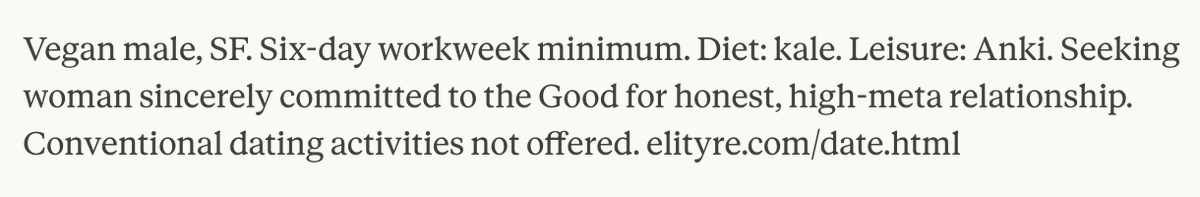

I had Claude write a dating ad for me. It felt like it was trying too hard to be fun and relatable, so I asked Claude to make a "joyless" version, and got this:

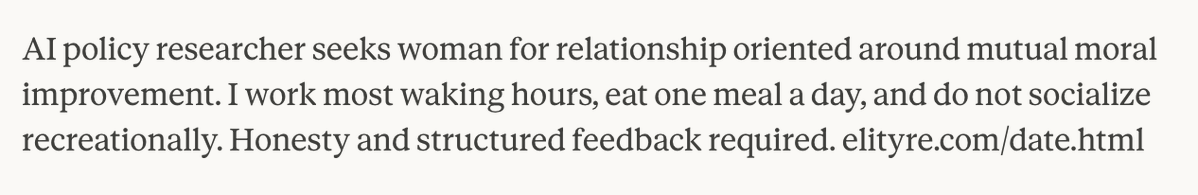

Another one:

(It's not actually true that I "don't socialize recreationally", but I can see why Claude wrote that.)

Vegan male, SF. Six-day workweek minimum. Diet: kale. Leisure: Anki. Seeking woman sincerely committed to the Good for honest, high-meta relationship. Conventional dating activities not offered.

elityre.com/date.html

AI policy researcher seeks woman for relationship oriented around mutual moral improvement. I work most waking hours, eat one meal a day, and do not socialize recreationally. Honesty and structured feedback required. elityre.com/date.html
