How do LLMs do CoT reasoning internally?

In our new #ACL2026 paper, we show that reasoning unfolds as a structured trajectory in representation space. Correct and incorrect paths diverge, and we use this to predict correctness before the answer and correct errors mid-flight.

1/

Each reasoning step occupies a distinct region in representation space, and these regions become increasingly linearly separable with layer depth. This structure generalizes across tasks and answer formats.

2/
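A rough way to picture "regions become more linearly separable with depth": score how well a simple linear rule recovers the step index from hidden states at each layer. The sketch below uses a nearest-centroid probe (a linear decision rule for two classes) as a stand-in for a linear probe, scored on its own training points. The 2-D toy vectors and layer names are made up for illustration and are not from the paper.

```python
def centroid(points):
    """Mean vector of a list of equal-length vectors."""
    n = len(points)
    return [sum(p[i] for p in points) / n for i in range(len(points[0]))]


def sq_dist(a, b):
    """Squared Euclidean distance between two vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def nearest_centroid_accuracy(points_by_step):
    """Fraction of points whose nearest class centroid matches their step label.

    points_by_step maps a step index to the hidden-state vectors for that step.
    """
    centroids = {s: centroid(pts) for s, pts in points_by_step.items()}
    correct = 0
    total = 0
    for step, pts in points_by_step.items():
        for p in pts:
            pred = min(centroids, key=lambda s: sq_dist(p, centroids[s]))
            correct += int(pred == step)
            total += 1
    return correct / total


# Hypothetical toy data: step regions overlap early and pull apart later.
toy_layers = {
    "early layer": {
        1: [[0.0, 0.0], [1.0, 0.0], [2.2, 0.0]],
        2: [[0.8, 0.0], [2.0, 0.0], [3.0, 0.0]],
    },
    "late layer": {
        1: [[0.0, 0.0], [0.5, 0.0], [1.0, 0.0]],
        2: [[3.0, 0.0], [3.5, 0.0], [4.0, 0.0]],
    },
}

accuracy_by_layer = {
    name: nearest_centroid_accuracy(data) for name, data in toy_layers.items()
}
```

On this toy data the probe is less accurate at the "early layer" than at the "late layer". With real hidden states you would fit on one split and score on held-out examples.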

Trajectories for correct and incorrect reasoning start out nearly identical but diverge systematically at late steps. Late-step trajectory features predict final-answer correctness with AUC 0.87, before the answer is generated.

3/
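For readers less familiar with the metric: AUC is the probability that a randomly chosen correct example gets a higher predictor score than a randomly chosen incorrect one. A minimal sketch of that definition follows; the scores and labels are invented for illustration and are not the paper's data.

```python
def roc_auc(scores, labels):
    """ROC AUC via pairwise comparison; ties count as half a win.

    labels: 1 = final answer correct, 0 = incorrect.
    """
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one example of each class")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical correctness scores read off late-step trajectory features.
scores = [0.9, 0.8, 0.4, 0.7, 0.3, 0.2]
labels = [1, 1, 1, 0, 0, 0]
auc = roc_auc(scores, labels)
```

Here 8 of the 9 correct/incorrect pairs are ranked the right way, so `auc` is 8/9. The pairwise loop is quadratic in dataset size, which is fine for a sketch but not for large evaluation sets.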

This step-specific organization exists across training regimes and is already present in base models. From this perspective, reasoning training doesn't introduce new representational organization; it accelerates convergence toward a termination-related subspace.

4/

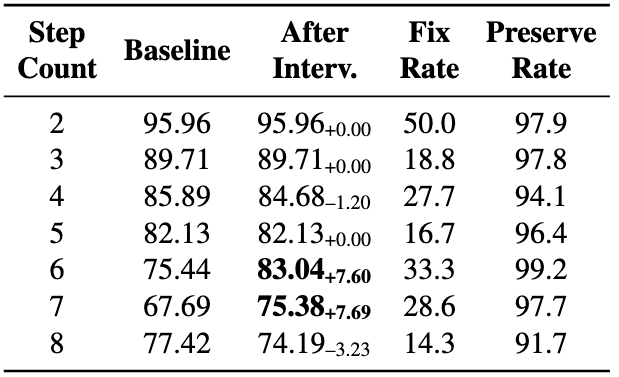

Unconditionally injecting tokens like "Wait" often hurts accuracy (up to -36%, especially for fewer-step problems). Instead, intervene only when failure is predicted! Using our mid-reasoning correctness signal to intervene on just ~12% of examples yields stable gains.

5/
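The gating idea can be sketched in a few lines: append the cue only to traces whose predicted chance of success is low, and leave the rest alone. The function names, the 0.5 threshold, and appending the cue as plain text are all assumptions for illustration. The thread does not say how the signal is thresholded or how the token is injected.

```python
def flag_for_intervention(p_correct, threshold=0.5):
    """Indices of examples whose predicted probability of success is below threshold."""
    return [i for i, p in enumerate(p_correct) if p < threshold]


def gated_inject(partial_traces, p_correct, threshold=0.5, cue=" Wait"):
    """Append the cue only to traces predicted to fail; return a new list."""
    flagged = set(flag_for_intervention(p_correct, threshold))
    return [
        trace + cue if i in flagged else trace
        for i, trace in enumerate(partial_traces)
    ]


# Hypothetical partial reasoning traces and mid-reasoning correctness scores.
traces = ["a", "b", "c"]
p_correct = [0.9, 0.2, 0.6]
gated = gated_inject(traces, p_correct)
```

Only the second trace is modified here. Lowering the threshold makes the gate more selective, which is the lever for how many examples get touched.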

We further introduce trajectory-based steering: when an ongoing trajectory drifts from the ideal path, we apply low-rank corrections to nudge it back. It is most effective on harder math problems: +7.6pp on 6- and 7-step questions, with 97%+ preservation of correct solutions.

6/
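One generic way to implement a "low-rank correction" is to measure the drift from a reference point on the ideal path and remove only the part of it that lies in a small subspace. The sketch below does that with an orthonormal basis. The basis, the reference point, and the strength `alpha` are hypothetical, and this is not necessarily the formulation used in the paper.

```python
def dot(a, b):
    """Inner product of two equal-length vectors."""
    return sum(x * y for x, y in zip(a, b))


def low_rank_correction(h, ideal, basis, alpha=1.0):
    """Pull h toward ideal, but only inside span(basis).

    basis: list of orthonormal vectors (rank = len(basis)); assumed, not learned here.
    alpha: 0 leaves h unchanged, 1 removes all drift inside the subspace.
    """
    drift = [x - y for x, y in zip(h, ideal)]
    corrected = list(h)
    for u in basis:
        coeff = dot(u, drift)  # how far h has drifted along this direction
        corrected = [x - alpha * coeff * ui for x, ui in zip(corrected, u)]
    return corrected


# Hypothetical 3-D hidden state, ideal-path point, and rank-2 basis.
h = [2.0, 3.0, 5.0]
ideal = [1.0, 1.0, 1.0]
basis = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0]]
steered = low_rank_correction(h, ideal, basis)
```

With `alpha=1.0` the first two coordinates snap to the ideal point and the third is left untouched, so `steered` is `[1.0, 1.0, 5.0]`. Leaving the off-subspace component alone is what keeps the edit low-rank.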

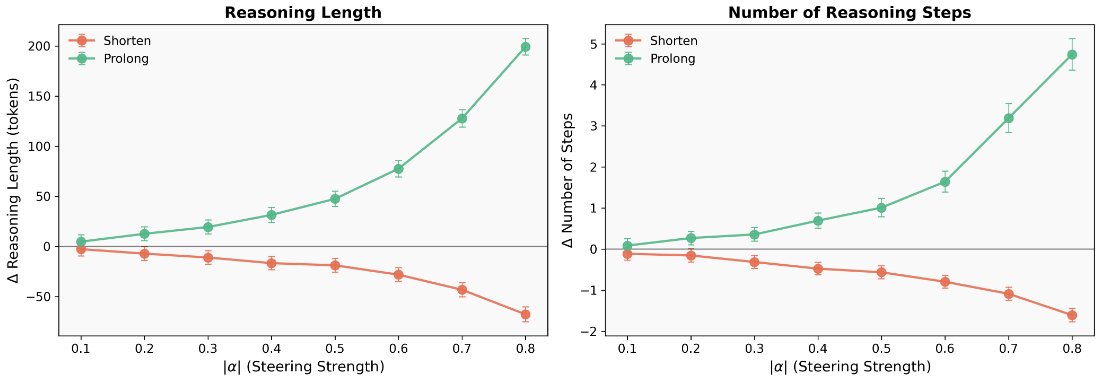

Reasoning length is also controllable. Steering hidden states toward the termination subspace shortens reasoning; steering away extends it. At moderate strengths this works as a smooth knob with minimal accuracy changes, but push too hard and the model enters repetitive loops.

7/
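The "knob" can be pictured as adding a scaled unit vector to the hidden state: positive strength moves toward the termination direction, negative strength moves away. This sketch assumes the termination subspace is summarized by a single direction vector, which is a simplification for illustration. The direction and strengths are made up.

```python
import math


def steer(h, direction, strength):
    """Shift h by `strength` units along the normalized direction.

    strength > 0 moves toward the (assumed) termination direction,
    strength < 0 moves away from it.
    """
    norm = math.sqrt(sum(d * d for d in direction))
    if norm == 0:
        raise ValueError("direction must be non-zero")
    return [x + strength * d / norm for x, d in zip(h, direction)]


# Hypothetical 2-D hidden state and termination direction.
h = [1.0, 0.0]
termination_direction = [3.0, 4.0]
shorter = steer(h, termination_direction, 5.0)   # toward termination
longer = steer(h, termination_direction, -5.0)   # away from termination
```

Normalizing the direction makes `strength` comparable across layers or models, which helps when sweeping for the range where the knob stays smooth.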

📢 Accepted to #ACL2026 Main Conference!

Thanks to all collaborators at Microsoft: Hang Dong, Bo Qiao, Qingwei Lin, Dongmei Zhang, and Saravan Rajmohan.

Paper: arxiv.org/pdf/2604.05655

Website: slhleosun.github.io/reasoning_traj/

Code: github.com/slhleosun/reas…

8/
