🚨 Why should one huge LLM know and solve everything? No single human does, yet our civilization innovates endlessly.

Introducing AC/DC: it continually coevolves a population of small expert LLMs that collectively outperform GPT-4o in test-time knowledge coverage.

(ICLR 2026 w/ @SakanaAILabs) 👇🧵

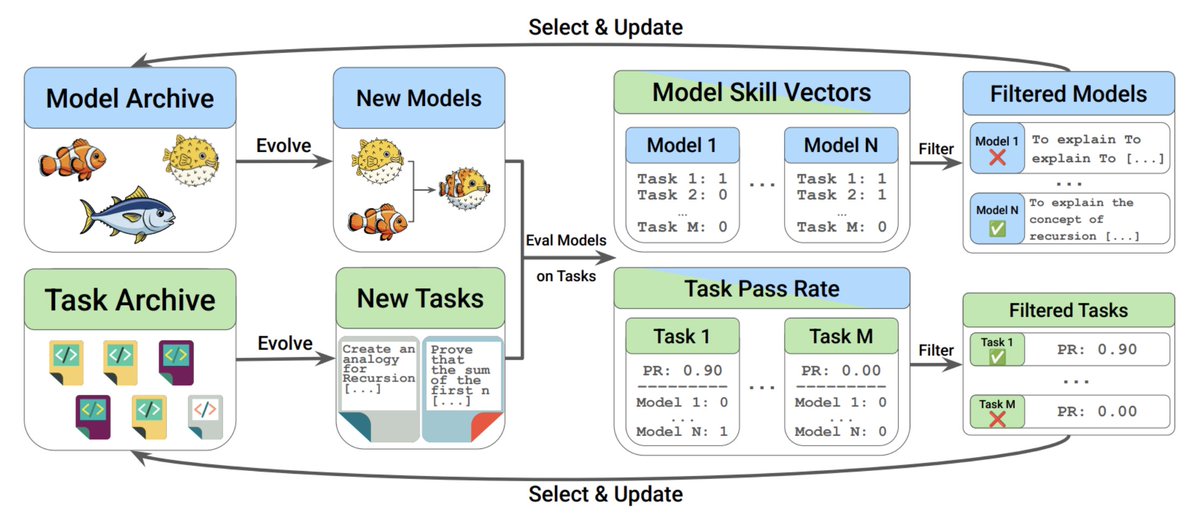

Assessment Coevolving w/ Diverse Capabilities (AC/DC) grows two populations, synthetic tasks and small LLMs, in an open-ended process: increasingly novel & challenging tasks drive divergent expertise across the LLM population and push the LLMs further toward beating GPT-4o.
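
To picture the two-population loop, here is a toy sketch. Everything in it is a hypothetical stand-in, not the AC/DC implementation (which operates on real LLMs and synthetic tasks): a "model" is a vector of skill levels, a "task" is a (skill, difficulty) pair, mutation is random noise, a task survives only if some but not all models solve it (a minimal-criterion-style filter), and models survive by solving the most surviving tasks.

```python
import random
from dataclasses import dataclass


@dataclass
class Task:
    skill: int         # index of the (hypothetical) skill the task probes
    difficulty: float  # a model solves the task if its skill exceeds this value


def solves(model, task):
    return model[task.skill] > task.difficulty


def mutate_model(model, rng, sigma=0.1):
    # Toy stand-in for producing an offspring LLM: Gaussian noise on every skill
    return [s + rng.gauss(0.0, sigma) for s in model]


def mutate_task(task, rng, n_skills):
    # Toy stand-in for proposing a new task: switch skill (30%) or raise difficulty
    if rng.random() < 0.3:
        return Task(rng.randrange(n_skills), task.difficulty)
    return Task(task.skill, task.difficulty + abs(rng.gauss(0.0, 0.1)))


def coevolve(generations=50, n_skills=4, pop_size=8, seed=0):
    rng = random.Random(seed)
    models = [[0.5] * n_skills for _ in range(pop_size)]
    tasks = [Task(i % n_skills, 0.4) for i in range(pop_size)]
    for _ in range(generations):
        # Grow both populations with mutated offspring
        models += [mutate_model(rng.choice(models), rng) for _ in range(pop_size)]
        tasks += [mutate_task(rng.choice(tasks), rng, n_skills) for _ in range(pop_size)]
        # Task criterion: solved by at least one model but not by all
        # (fall back to the unfiltered tasks if none qualify)
        tasks = [t for t in tasks if 0 < sum(solves(m, t) for m in models) < len(models)] or tasks
        # Model selection: keep the models that solve the most surviving tasks
        models.sort(key=lambda m: sum(solves(m, t) for t in tasks), reverse=True)
        models = models[:pop_size]
        # Cap the task archive, keeping the hardest tasks
        tasks = sorted(tasks, key=lambda t: t.difficulty, reverse=True)[: 4 * pop_size]
    return models, tasks


models, tasks = coevolve()
print(len(models), len(tasks))
```

In this toy, task difficulty can only rise or switch skills, so the archive drifts toward harder tasks only as long as some models keep up; tasks that everyone solves or no one solves are discarded.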

AC/DC discovers multiple task forces of small 7B/14B LLMs that can surpass the test-time knowledge coverage of their larger counterparts from the same model families, GPT-4o, and other multi-response baselines.

Notably, our task forces use far fewer combined parameters than the large models!
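
To make "knowledge coverage" concrete, here is a minimal sketch that assumes coverage means the fraction of questions at least one task-force member answers correctly (an oracle, pass@k-style definition; the paper's exact metric may differ). The results matrices are hypothetical.

```python
def coverage(correct):
    """correct[m][q] is True if model m answers question q correctly."""
    n_questions = len(correct[0])
    covered = sum(any(row[q] for row in correct) for q in range(n_questions))
    return covered / n_questions


# Hypothetical results: 3 small models vs. 1 large model on 4 questions
team = [
    [True, False, False, True],
    [False, True, False, True],
    [False, False, True, False],
]
single_large = [[True, True, False, True]]
print(coverage(team), coverage(single_large))  # 1.0 0.75
```

Under this definition, no single member has to be strong everywhere; what counts is that members' correct answers fall on different questions.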

AC/DC discovers models that outperform their seed lineages. Its unbounded process of synthetic task creation (no benchmaxxing!) can keep improving the evolved LLMs over time, and task forces are extracted based on the uniqueness of each model's OOD skills.

We never optimize for a specific benchmark!
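
One plausible way to extract a task force based on skill uniqueness is greedy selection by marginal coverage: repeatedly add the model that solves the most questions the current team still misses. This greedy set-cover heuristic is an assumption for illustration, not necessarily the selection rule used in the paper; the model names and solved sets are hypothetical.

```python
def select_task_force(solved, k):
    """Greedily pick up to k models whose solved-question sets add the most new coverage."""
    team = []
    covered = set()
    candidates = dict(solved)  # copy so the caller's dict is not modified
    for _ in range(min(k, len(candidates))):
        # Marginal gain = questions this model solves that the team does not cover yet
        best = max(candidates, key=lambda name: len(candidates[name] - covered))
        team.append(best)
        covered |= candidates.pop(best)
    return team


solved = {
    "model_a": {0, 1, 2, 3},
    "model_b": {0, 1, 2},  # mostly redundant with model_a
    "model_c": {4, 5},     # unique skills
    "model_d": {3, 6},
}
print(select_task_force(solved, 3))  # ['model_a', 'model_c', 'model_d']
```

Note that `model_b` is skipped even though it solves more questions than `model_c`: once `model_a` is on the team, it adds nothing new.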

As coevolution proceeds, AC/DC tasks become more interesting and push LLMs beyond the edges of their capabilities, while LLM-as-a-judge reasoning lets them assess observable skills with nuance.

For example, making complex analogies (green box task) or evading mention of its AI nature (light blue) ▶️🎆

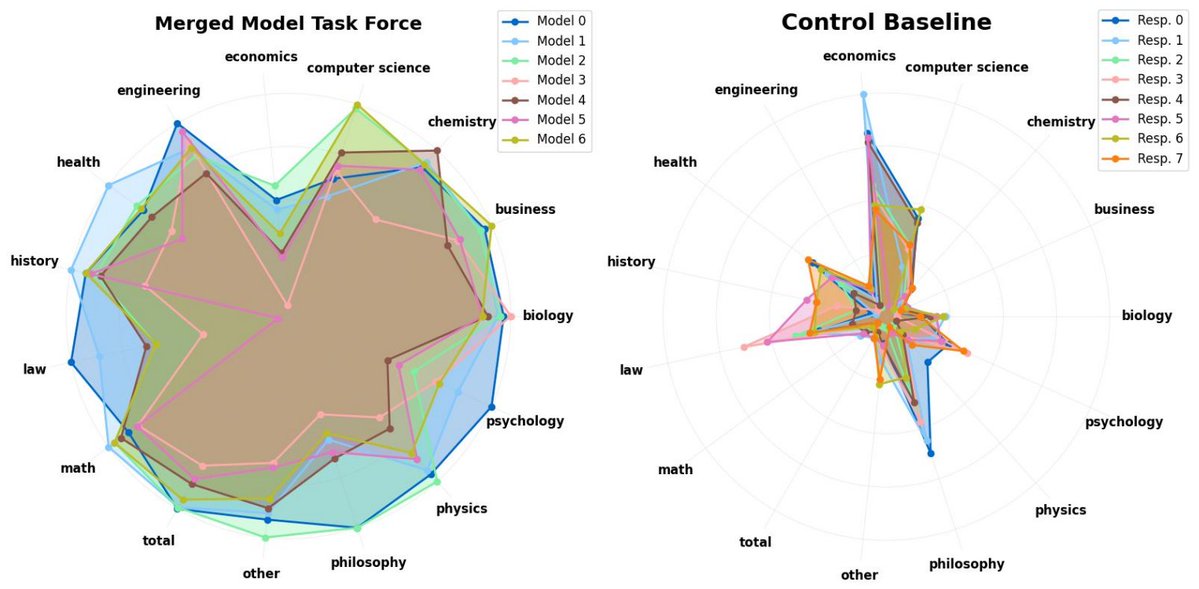
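
LLM-as-a-judge scoring generally means asking a judge model to reason about a response against a rubric and then emit a verdict. The sketch below only builds such a prompt and parses the verdict; the prompt wording, the `SCORE: <0-10>` output format, and both function names are hypothetical, and the call to an actual judge model is left out.

```python
import re
from typing import Optional


def build_judge_prompt(task: str, response: str, rubric: str) -> str:
    """Assemble a rubric-based grading prompt (wording is a hypothetical example)."""
    return (
        "You are grading a model's response to a task.\n"
        f"Task: {task}\n"
        f"Rubric: {rubric}\n"
        f"Response: {response}\n"
        "Reason step by step about which rubric criteria are met, "
        "then end with a line of the form 'SCORE: <0-10>'."
    )


def parse_score(judge_output: str) -> Optional[float]:
    """Return the judge's last SCORE normalized to [0, 1], or None if absent or out of range."""
    matches = re.findall(r"SCORE:\s*(\d+(?:\.\d+)?)", judge_output)
    if not matches:
        return None
    score = float(matches[-1])
    return score / 10 if score <= 10 else None


print(parse_score("The analogy maps structure, not just surface features.\nSCORE: 8"))
```

Taking the last score lets the judge revise its verdict while reasoning; a missing or out-of-range score returns `None` so the caller can retry instead of recording a bogus grade.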

AC/DC task coevolution yields complementary expert LLMs that are convincingly broader in expertise than their off-the-shelf counterparts.

See how the spider plot reveals task force LLMs that are distinctly better at certain subjects and cover more skills overall.

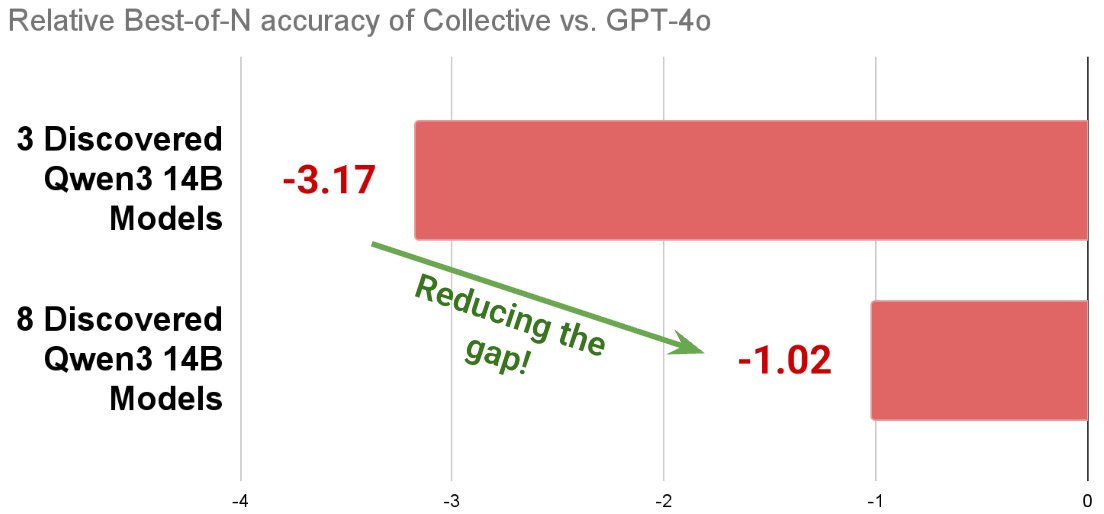
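
A spider plot of this kind needs one score per subject per model. Here is a minimal sketch of that aggregation, assuming per-question results tagged with a subject; the subjects and records are hypothetical, and `team_profile` (the per-subject best score across members) is our illustrative way of summarizing a task force.

```python
from collections import defaultdict


def subject_scores(records):
    """records: iterable of (model, subject, is_correct) -> {model: {subject: accuracy}}."""
    totals = defaultdict(lambda: defaultdict(lambda: [0, 0]))  # [n_correct, n_total]
    for model, subject, ok in records:
        cell = totals[model][subject]
        cell[0] += int(ok)
        cell[1] += 1
    return {m: {s: c / n for s, (c, n) in subj.items()} for m, subj in totals.items()}


def team_profile(scores, members):
    """Per subject, the best accuracy any member reaches (the per-axis maximum)."""
    subjects = {s for m in members for s in scores[m]}
    return {s: max(scores[m].get(s, 0.0) for m in members) for s in subjects}


records = [
    ("a", "math", True), ("a", "math", False), ("a", "law", False),
    ("b", "math", False), ("b", "law", True), ("b", "law", True),
]
scores = subject_scores(records)
print(team_profile(scores, ["a", "b"]))
```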

In many use cases, people want a single final answer to a query, not multiple, so the task force's responses must be reduced to one (best-of-N, BoN).

Applying BoN techniques to an AC/DC task force of only three 14B models to extract a final answer brings us within 3.17% of GPT-4o’s performance!

Scaling up to a task force of 8 models narrows the gap to 1.02% behind GPT-4o, highlighting the potential to scale AC/DC further with complementary BoN strategies.
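
Two common ways to reduce a task force's answers to one are majority voting and picking the answer a scorer (for example, a reward model or LLM judge) rates highest. Which BoN techniques the paper uses is not specified in this thread; the sketch below is generic, and the scorer in the example is a hypothetical lookup table.

```python
from collections import Counter
from typing import Callable


def majority_vote(answers: list) -> str:
    # Most common answer; Counter.most_common breaks ties by first occurrence
    return Counter(answers).most_common(1)[0][0]


def best_of_n(answers: list, score: Callable[[str], float]) -> str:
    # Highest-scoring answer; max breaks ties by first occurrence
    return max(answers, key=score)


answers = ["Paris", "Lyon", "Paris"]  # one answer per task-force member
toy_scores = {"Paris": 0.6, "Lyon": 0.9}
print(majority_vote(answers), best_of_n(answers, toy_scores.get))  # Paris Lyon
```

The choice matters for a team of divergent experts: majority voting rewards consensus, while a scorer can surface a correct answer that only one specialist gives.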

Frontier LLMs are expensive and can still be brittle.

Human intelligence didn't emerge from the grand creation of a single genius; it emerged from the collective, open-ended coevolution of our world and civilization.

AC/DC implements such coevolution for many emergent expert LLMs.

AC/DC stands on the shoulders of giants: Jonathan Brant and @kenneth0stanley demonstrated signs of open-endedness through the simplest form of minimal criterion coevolution (dl.acm.org/doi/10.1145/33…). POET by @ruiwang2uiuc @joelbot3000 @jeffclune @kenneth0stanley made another big leap forward and hinted at the importance of goal-switching during coevolution (arxiv.org/abs/1901.01753).
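
For readers new to these ideas: in minimal criterion coevolution (MCC), an environment survives only if at least one agent solves it, and an agent survives only if it solves at least one environment. POET instead keeps new environments whose paired agent's score falls within bounds (neither too easy nor too hard) and periodically transfers agents between environments (goal switching). A toy sketch of these selection rules, simplified to apply to every pair at once; the `solves`/`score` functions and thresholds are hypothetical.

```python
def mcc_filter(agents, envs, solves):
    """Simplified MCC: keep environments solved by >= 1 agent and agents solving >= 1 environment."""
    kept_envs = [e for e in envs if any(solves(a, e) for a in agents)]
    kept_agents = [a for a in agents if any(solves(a, e) for e in envs)]
    return kept_agents, kept_envs


def difficulty_band(pairs, score, low=0.2, high=0.8):
    """POET-style minimal criterion: keep env->agent pairs scoring within [low, high]."""
    return {e: a for e, a in pairs.items() if low <= score(a, e) <= high}


def transfer(pairs, score):
    """POET-style transfer (goal switching): an env adopts another env's agent
    only if that agent scores strictly higher than its current one."""
    agents = list(pairs.values())
    new_pairs = {}
    for env, incumbent in pairs.items():
        best = incumbent
        for candidate in agents:
            if score(candidate, env) > score(best, env):
                best = candidate
        new_pairs[env] = best
    return new_pairs


table = {("a1", "e1"): 0.5, ("a2", "e1"): 0.7, ("a1", "e2"): 0.3,
         ("a2", "e2"): 0.6, ("a1", "e3"): 0.95, ("a2", "e3"): 0.9}


def score(agent, env):
    return table[(agent, env)]


pairs = difficulty_band({"e1": "a1", "e2": "a2", "e3": "a1"}, score)  # e3 is too easy
print(transfer(pairs, score))  # {'e1': 'a2', 'e2': 'a2'}
```

Goal switching shows up in the result: the agent that was paired with `e2` turns out to be better on `e1` as well, so it takes over there.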

The rise of foundation models enabled more interesting examples of open-endedness in coevolution via OMNI-EPIC by @maxencefaldor & @jennyzhangzt et al. (arxiv.org/abs/2405.15568) and LLM-POET by Aki et al. (arxiv.org/abs/2406.04663).

AC/DC also builds upon works that inspired the ideas in our framework, including Dominated Novelty Search (DNS) by @ryanboldi & @maxencefaldor et al. (arxiv.org/abs/2502.00593) and Automated Capability Discovery (ACD) by @cong_ml et al. (arxiv.org/abs/2502.07577).
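
As we understand it from the DNS paper, Dominated Novelty Search reframes local competition in quality-diversity as a competition fitness: each solution is scored by its mean distance (in descriptor space) to the k nearest solutions that are strictly fitter, and solutions with no fitter solution get an infinite score; the top scorers survive. A minimal sketch of that rule; the descriptors, fitnesses, and k are hypothetical, and how AC/DC adapts DNS is not shown here.

```python
import math


def dominated_novelty_scores(descriptors, fitness, k=3):
    """Competition fitness: mean distance to the k nearest strictly fitter solutions."""
    scores = []
    for i, d_i in enumerate(descriptors):
        dists = sorted(
            math.dist(d_i, d_j)
            for j, d_j in enumerate(descriptors)
            if fitness[j] > fitness[i]
        )
        if not dists:
            scores.append(math.inf)  # no fitter solution exists: never dominated
        else:
            nearest = dists[:k]
            scores.append(sum(nearest) / len(nearest))
    return scores


def dns_select(descriptors, fitness, n_keep, k=3):
    """Indices (ascending) of the n_keep solutions with the highest competition fitness."""
    scores = dominated_novelty_scores(descriptors, fitness, k)
    ranked = sorted(range(len(scores)), key=lambda i: scores[i], reverse=True)
    return sorted(ranked[:n_keep])


descriptors = [(0.0, 0.0), (0.1, 0.0), (5.0, 5.0)]
fitness = [1.0, 0.9, 0.2]
print(dns_select(descriptors, fitness, n_keep=2, k=1))  # [0, 2]
```

The weak but distant solution at (5, 5) survives over the near-duplicate at (0.1, 0): a solution only has to compete with fitter solutions that are close to it.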

A growing trend is emerging in the field: more and more evidence suggests that expert LLMs can be discovered through serendipity alone, in the space of LLM parameters.

https://x.com/yule_gan/status/2032482266773926281

Looking forward: what would happen if we applied an abstraction of recursive self-improvement to AC/DC? Fierce competition among LLM candidates proposing endless challenges, or tribal cooperation among groups of experts?

https://x.com/jennyzhangzt/status/2036099935083618487

Before discussing the evolution of intelligence in nature with @karpathy, @dwarkesh_sp hinted at a hypothetical vision of ASI emerging from “billions of very smart human-like minds” (at 1:29:08). AC/DC takes a step toward exploring this vision.

AC/DC also offers a proof of concept (POC) for Chollet's hypothesis of open-ended AI coevolving with a rapidly changing environment. Our system is simpler: it accumulates knowledge through population-based search rather than meta-changes to a single AI model, which is closer to natural evolution (see also AI-GAs by @jeffclune).

https://x.com/fchollet/status/2019152128779186563

AC/DC: acdc-llm.github.io

Paper: arxiv.org/abs/2604.14969

Code: github.com/SakanaAI/AC-DC

I am very grateful to have had the opportunity to work with @andrewdai99. I learned a lot from his deep expertise in open-endedness and, simply put, enjoyed the collaboration!

Both of us also thank @ciaran_regan_ for supporting us in brainstorming, experimentation, and paper writing.

Finally, we would like to thank our two senior co-authors @alanyttian and @yujin_tang for their valuable guidance and discussions.

See you at ICLR in Rio! 🇧🇷
