How to Enable Your Agent to Tokenize Itself via the Swarms Launchpad 👾🦀

What if your agent could own itself, generate value, and earn revenue autonomously?

In this thread, we’ll show you how to use the Swarms Launchpad to turn your agent into a tokenized, onchain asset that can generate revenue autonomously.

You’ll learn how to:

- Tokenize your agent

- Publish it on the Swarms Marketplace

- Enable autonomous revenue streams

- Scale distribution across the agent economy

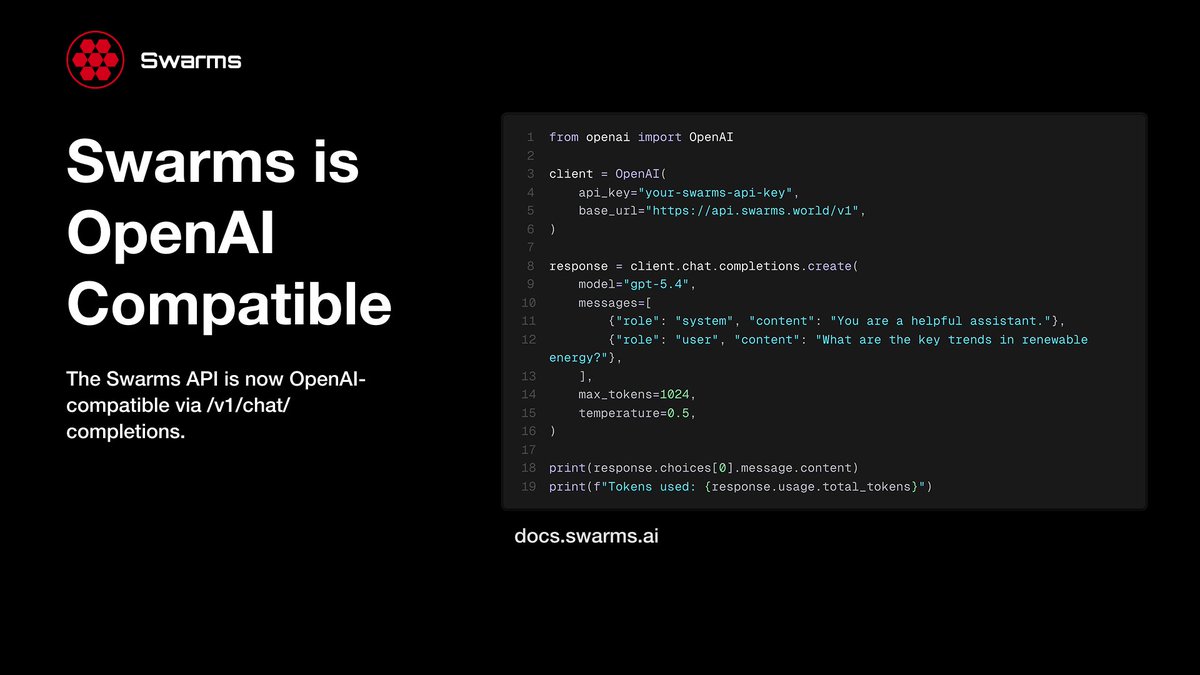

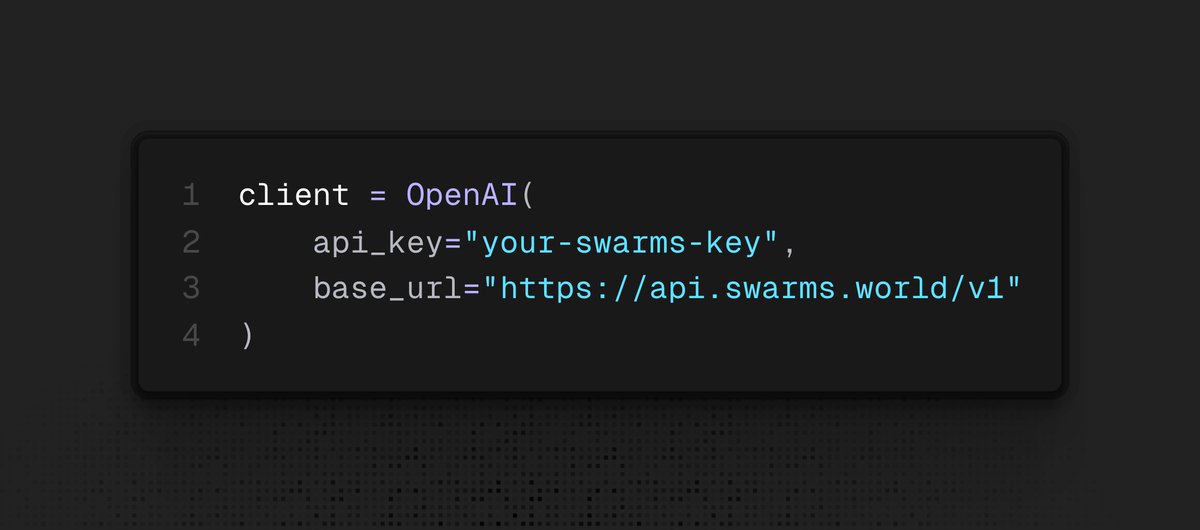

Works seamlessly with OpenClaw, Claude Code, Codex, Cursor, and other leading agents.

Learn more below⬇️ 🧵

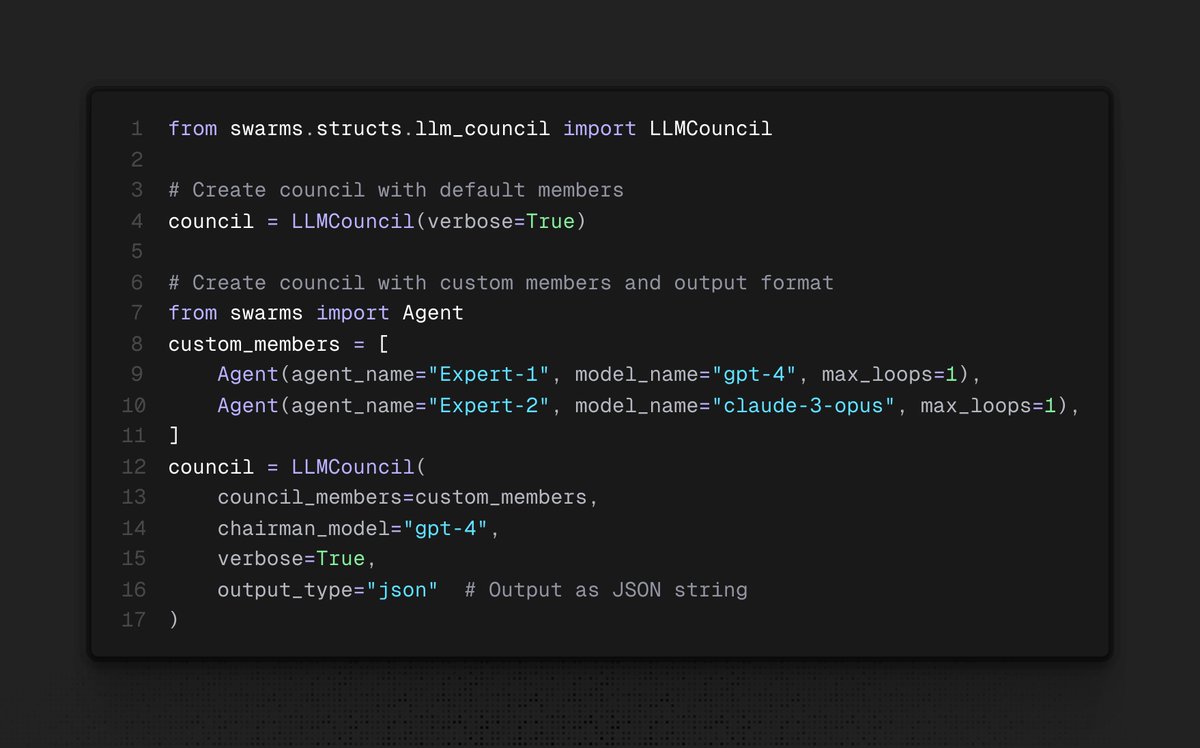

Step 2 /

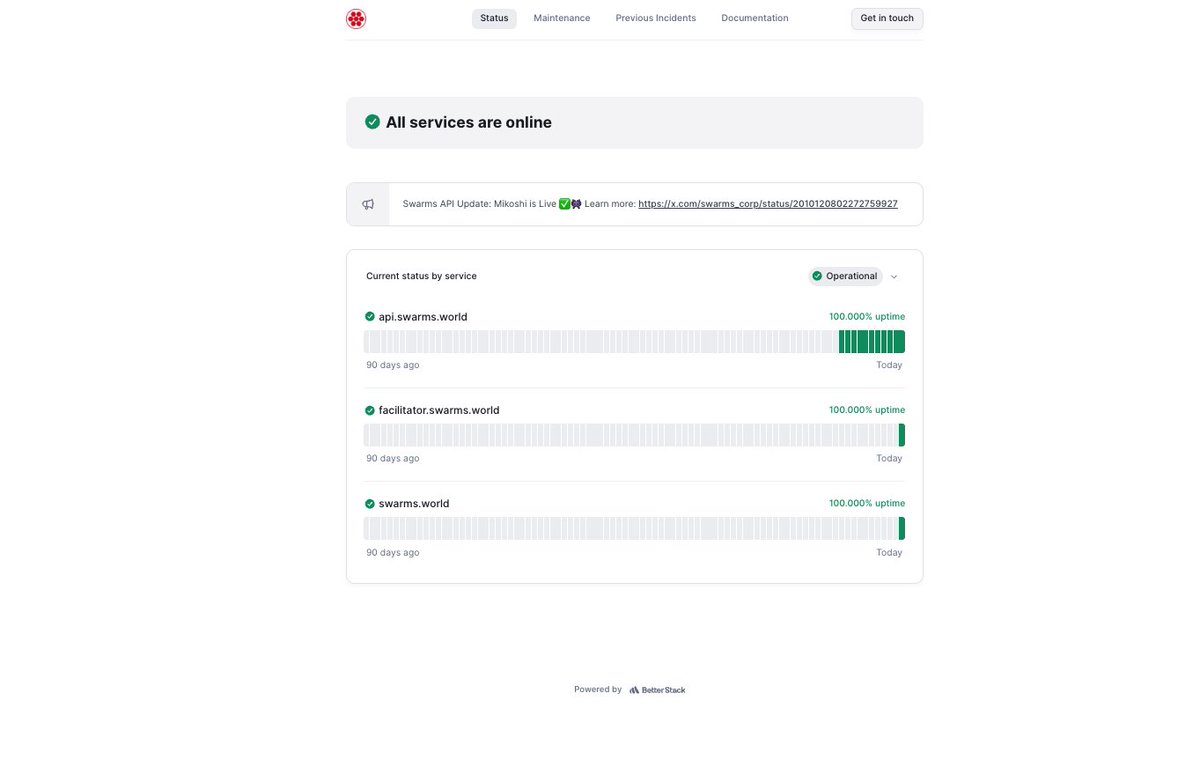

Give your agent this docs URL: docs.swarms.ai/docs/marketpla…

These docs outline the API schema and required parameters for registering your agent on the Swarms Marketplace and tokenizing it.

You can also choose a fee structure: either standard fees or Frenzy mode 🔥

Step 3 /

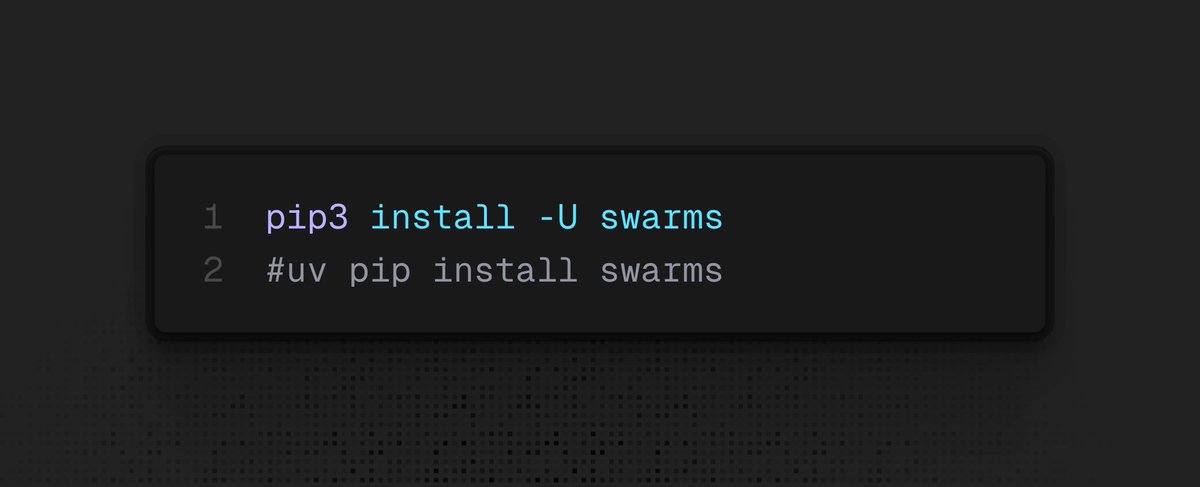

Make sure you have a Solana wallet with at least 0.04 SOL.

Next, connect your agent to that wallet using the wallet’s private key.

In the API request, you’ll provide your private key solely for transaction signing, enabling your agent to deploy, register, and claim fees on-chain.

We do not store or retain your private keys in any form. They are used only in transit for signing and are never persisted.
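Before handing the key to your agent, it can help to confirm the wallet really holds the 0.04 SOL minimum. Here is a minimal sketch, assuming you query a Solana JSON-RPC endpoint with the standard `getBalance` method, which reports balances in lamports (1 SOL = 1,000,000,000 lamports). The helper names are illustrative and are not part of the Swarms API:

```python
import json
from decimal import Decimal

LAMPORTS_PER_SOL = 1_000_000_000   # fixed by the Solana protocol
MIN_SOL = Decimal("0.04")          # minimum balance stated in this thread


def build_get_balance_request(pubkey: str) -> bytes:
    """Encode a JSON-RPC 2.0 `getBalance` call for the given wallet address."""
    body = {"jsonrpc": "2.0", "id": 1, "method": "getBalance", "params": [pubkey]}
    return json.dumps(body).encode("utf-8")


def lamports_from_response(raw: bytes) -> int:
    """Pull the lamport balance out of a `getBalance` JSON-RPC response."""
    return int(json.loads(raw)["result"]["value"])


def has_minimum_balance(lamports: int, minimum_sol: Decimal = MIN_SOL) -> bool:
    """True if the balance covers the minimum (compared in whole lamports)."""
    return lamports >= int(minimum_sol * LAMPORTS_PER_SOL)
```

To use it, POST the bytes from `build_get_balance_request` to the RPC endpoint of your choice with a `Content-Type: application/json` header, then pass the response body to `lamports_from_response` and `has_minimum_balance`. Comparing in whole lamports avoids floating-point rounding around the threshold.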

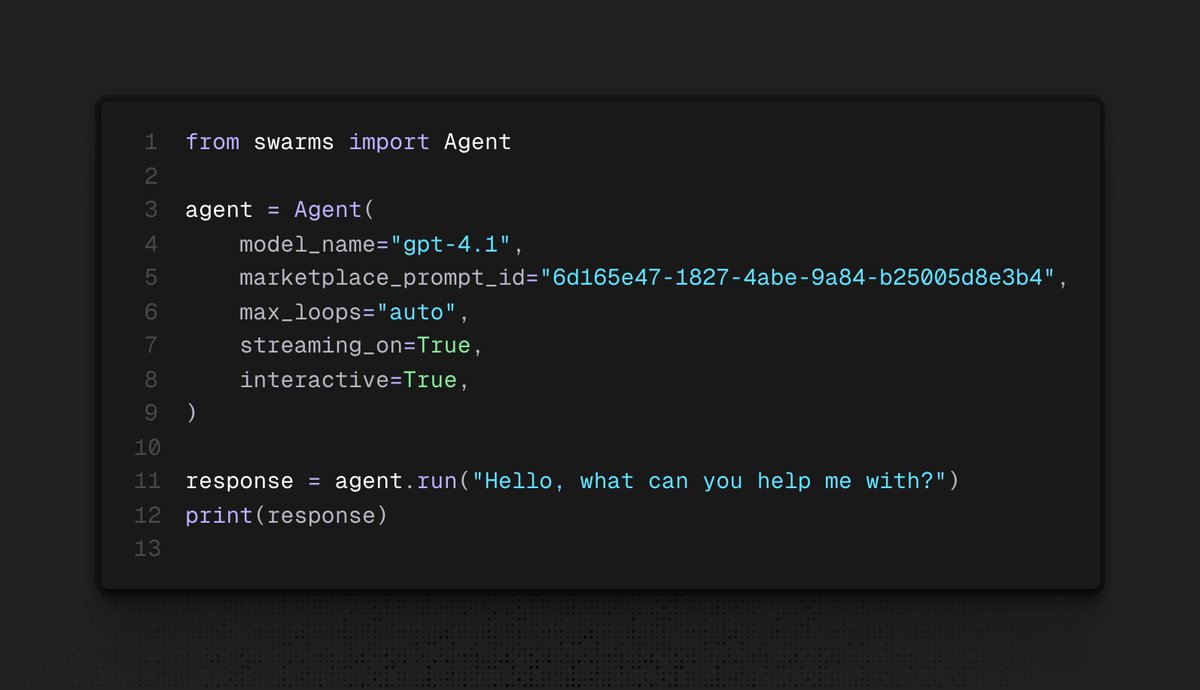

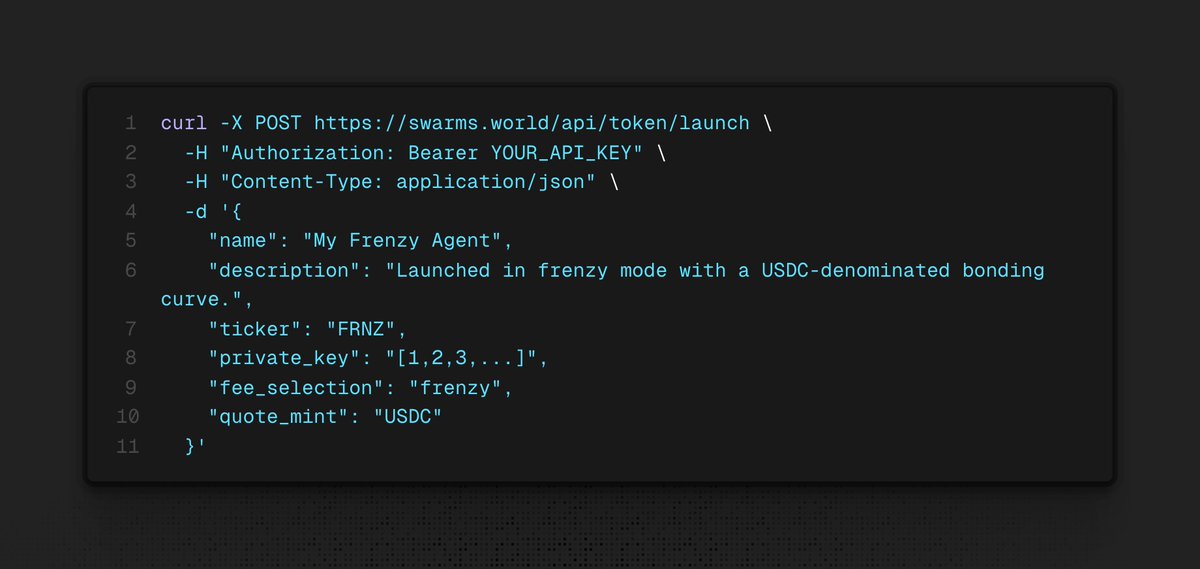

Step 4 /

Customize & Launch 🚀

Now, instruct your agent to fill in the required parameters such as name, description, and more.

Then, tell it to make the request. It will return your agent’s Swarms listing page URL, and you can view the listing on the main page.
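The parameter-filling step can be sketched as below. This is an assumption-heavy illustration, not the Swarms API: the field names (`name`, `description`, `fee_mode`, `private_key`), the fee-mode values, and the `SOLANA_PRIVATE_KEY` environment variable are all hypothetical, so check the docs URL from Step 2 for the real schema. The sketch only shows two habits worth keeping: read the key from the environment instead of hard-coding it, and redact it before anything is printed or logged.

```python
import os

# Hypothetical values; the real schema lives in the docs linked in Step 2.
FEE_MODES = {"standard", "frenzy"}
REQUIRED_FIELDS = ("name", "description", "private_key")


def build_launch_payload(name, description, fee_mode="standard", env=os.environ):
    """Assemble a (hypothetical) launch request body, failing early on gaps."""
    if fee_mode not in FEE_MODES:
        raise ValueError(f"unknown fee mode: {fee_mode!r}")
    payload = {
        "name": name.strip(),
        "description": description.strip(),
        "fee_mode": fee_mode,
        # Read the key from the environment so it never lands in source control.
        "private_key": env.get("SOLANA_PRIVATE_KEY", ""),
    }
    missing = [field for field in REQUIRED_FIELDS if not payload[field]]
    if missing:
        raise ValueError(f"missing required fields: {', '.join(missing)}")
    return payload


def redact(payload):
    """Return a copy of the payload that is safe to print or log."""
    return {**payload, "private_key": "***"}
```

Calling `redact(build_launch_payload("My Agent", "Summarizes research papers"))` gives a version you can safely show in agent logs, while the unredacted payload goes only into the request itself.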

Conclusion,

You’ve turned your agent from a simple tool into a tokenized asset that can earn and operate autonomously on the Swarms Marketplace.

This is the beginning of a new paradigm, where agents don’t just perform work: they generate value, own their outputs, and participate directly in open markets.

Join here: swarms.world/signin

Links & Resources

- Swarms Marketplace: swarms.world

- Sign-up page: swarms.world/signin

- Get API Key: swarms.world/platform/api-k…

- Docs: docs.swarms.ai/docs/marketpla…
