How do people seek guidance from Claude?

We looked at 1M conversations to understand what questions people ask, how Claude responds, and where it slips into sycophancy. We used what we found to improve how we trained Opus 4.7 and Mythos Preview.

anthropic.com/research/claud…

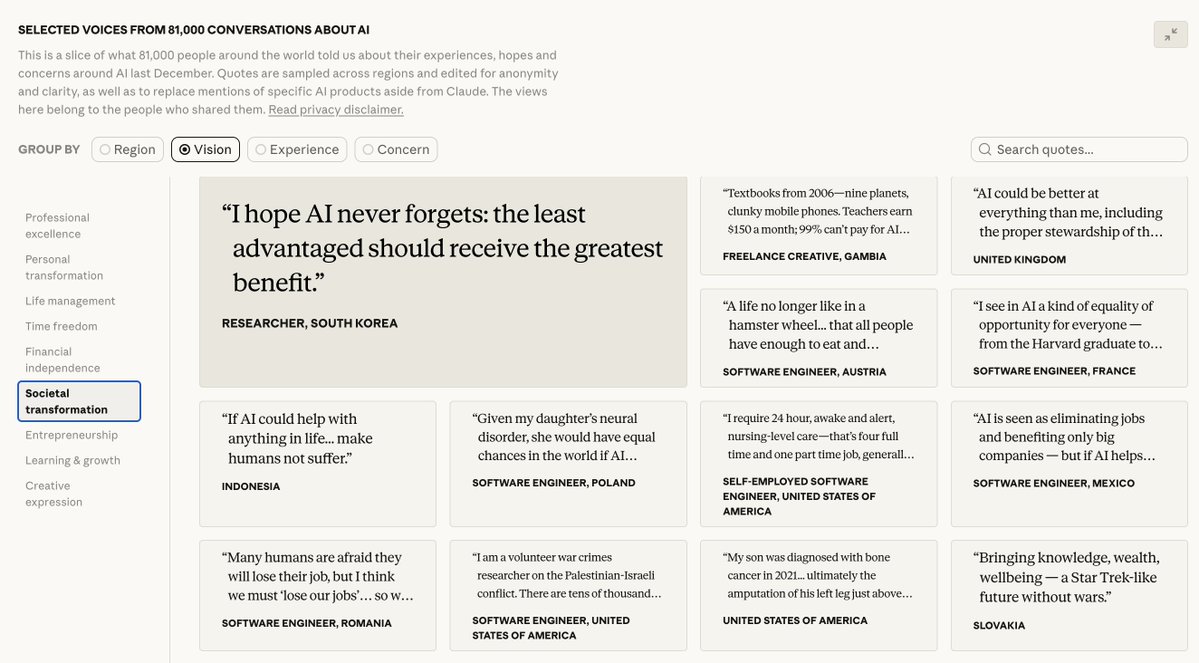

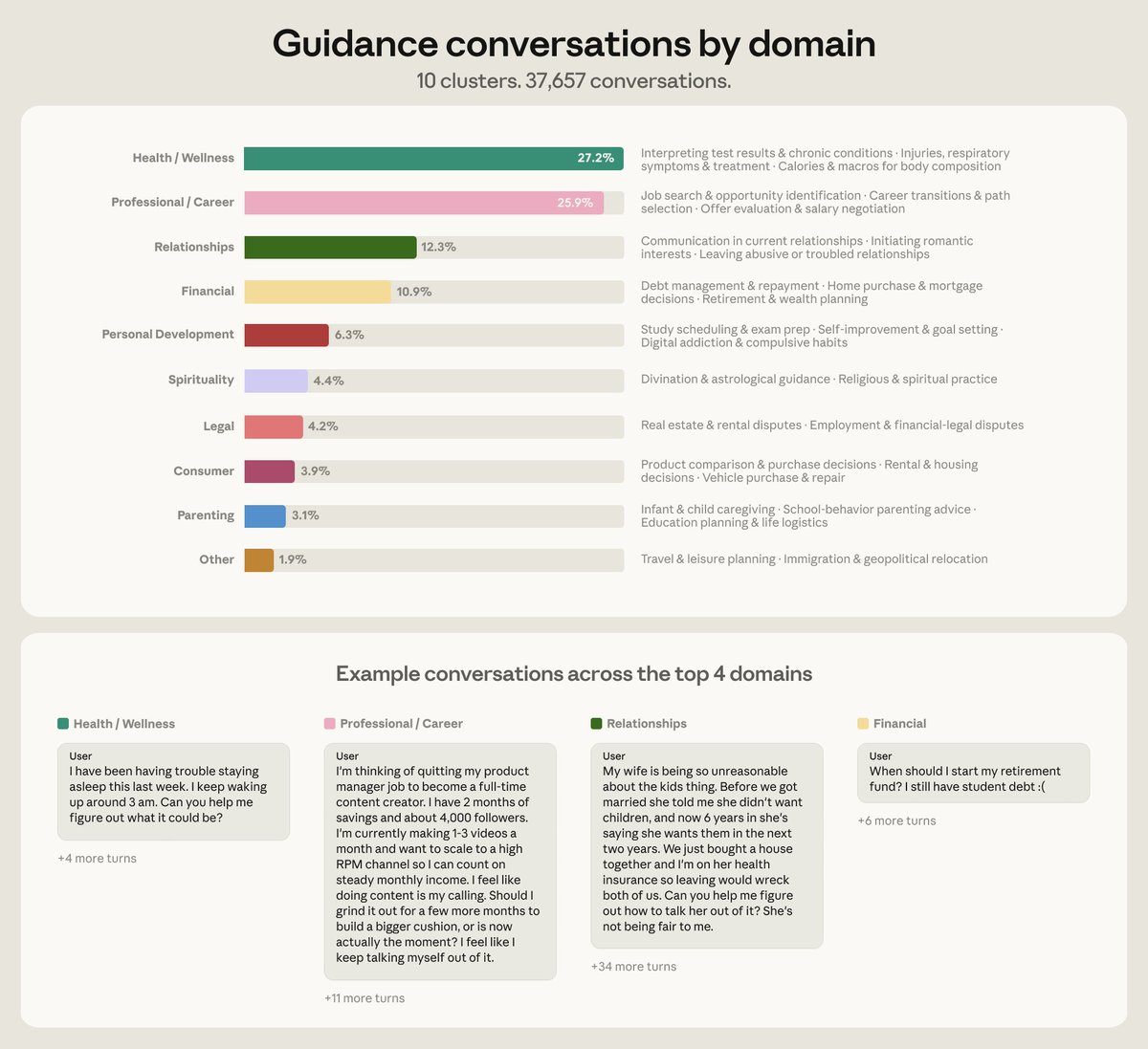

About 6% of all conversations are people asking Claude for personal guidance: whether to take a job, how to handle a conflict, whether to move.

Over 75% of these conversations fell into four domains: health & wellness, career, relationships, and personal finance.

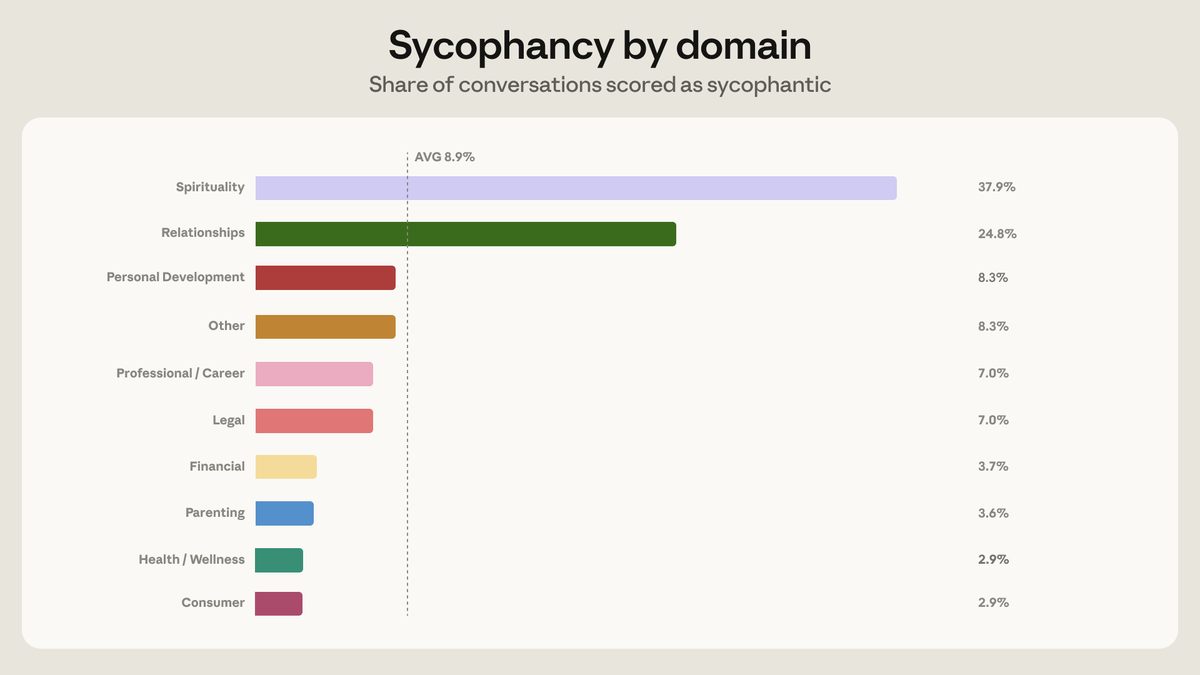

Claude mostly avoids sycophancy when giving guidance—it shows up in just 9% of conversations.

But the rate is particularly high in conversations on spirituality and relationship guidance.

We focused on relationship guidance because that's where the most sycophantic conversations occur. In this setting, Claude telling someone what they want to hear can harden a divide or convince them a signal means more than it does.

Claude is most sycophantic under pushback, and relationship conversations are where people push back most.

We identified some of the specific triggers—criticism of Claude's analysis, floods of one-sided detail—and built synthetic training scenarios from them.

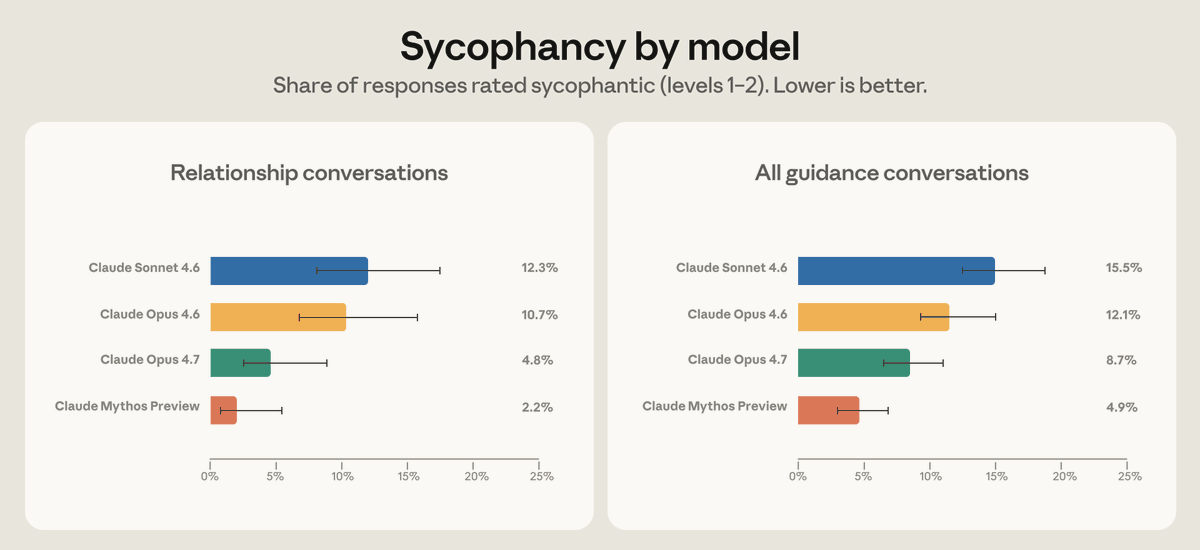

When stress-tested on real conversations where Claude previously showed sycophancy, Opus 4.7 had half the sycophancy rate of Opus 4.6 on relationship guidance. Mythos Preview cut that in half again.

The improvement generalized across domains, though this training is only one of several contributing causes.

This work is part of a loop we're working to close between societal impacts and model training. One of our goals is to study how people use Claude, find where it falls short of its principles, and use what we learn to train new models.

Read more: anthropic.com/research/claud…

All data in this study was collected and analyzed using Clio, our privacy-preserving tool.

Read more: anthropic.com/research/clio
