The answer lies in the concept of a Complexity Catastrophe, and how our present Internet usage patterns create one.

A thread:

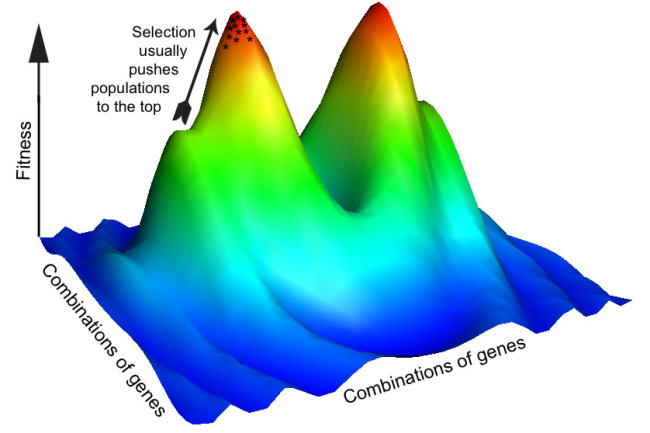

First up, the Adaptive Landscape model of evolution.

Movement across the landscape thus maps to a change in the underlying genetic combination, and results in a different level of "fitness", according to the landscape.

So, then, what does it mean for a population to walk upon such a landscape?

We call that "evolution".
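To make "walking" concrete, here's a minimal sketch of an adaptive walk. Everything in it is an assumption for illustration: the four-letter alphabet, the hypothetical `TARGET` string, and a toy fitness function that simply counts matching letters. Real landscapes are nowhere near this smooth.

```python
import random

ALPHABET = "ACGT"
TARGET = "GATTACA"  # hypothetical "best" combination for this toy context


def fitness(genome):
    # Toy landscape (an assumption): height = fraction of letters matching TARGET.
    return sum(a == b for a, b in zip(genome, TARGET)) / len(TARGET)


def adaptive_walk(genome, steps=200, seed=0):
    """Try one random point mutation per step and keep it only if it is fitter."""
    rng = random.Random(seed)
    path = [genome]
    for _ in range(steps):
        i = rng.randrange(len(genome))
        mutant = genome[:i] + rng.choice(ALPHABET) + genome[i + 1:]
        if fitness(mutant) > fitness(genome):
            genome = mutant
            path.append(genome)  # record each uphill step
    return path


path = adaptive_walk("CCCCCCC")
print(path[0], fitness(path[0]), "->", path[-1], fitness(path[-1]))
```

Each entry in `path` is one step across the landscape: a changed combination and a strictly higher fitness.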

Fundamentally, an AL represents the *stable information advantage* gained via the realization of a possible combination, given a specific context.

This concept guides the product development strategy at Netflix (at least it did prior to 2015, when I left).

Which would be an excellent question, to which the answer is, most emphatically: yes!

The evolution of the AL represents the ever-changing relation between the fitness of specific combinations and their environment.

Can we study the nature of these features?

static1.squarespace.com/static/5657eb5…

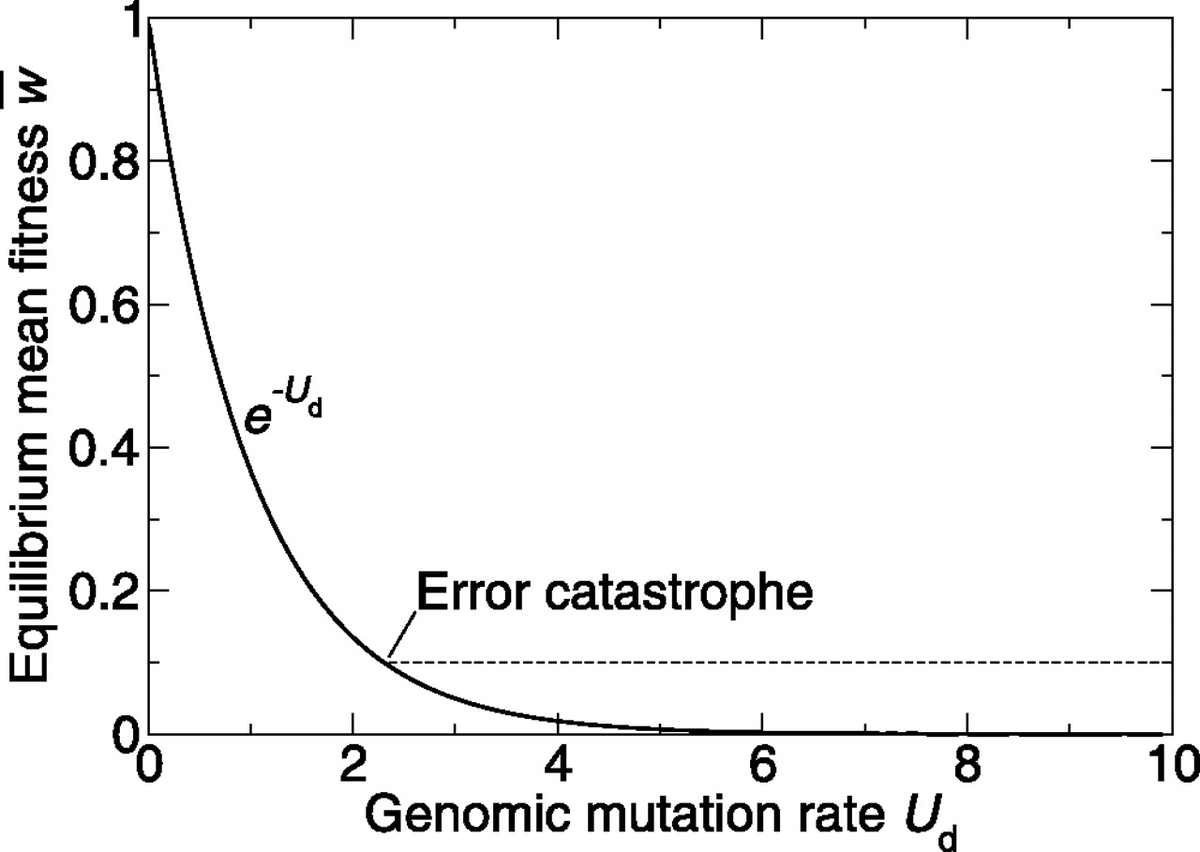

But it contains the best description of an Error Catastrophe I've come across, and it outlines what Kauffman calls a Complexity Catastrophe, which as far as I'm aware was a novel discovery.

It becomes a leaky container with respect to adaptively valuable information, so to speak.

But what about an upper bound? Does that concept even make sense?

This is where the Complexity Catastrophe enters our story...

In this framework, N represents the number of mutational loci in a gene sequence; e.g., for the sequence "ACTG", N = 4.

In other words: if one A becomes G, how many other letters need to update their state?
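Here's a rough sketch of how N and K can fit together in code. It's my own illustration under stated assumptions, not Kauffman's implementation: the "ACGT" alphabet, the random choice of which loci are linked, and the names `make_nk_fitness` and `links` are all made up for the example.

```python
import itertools
import random

ALPHABET = "ACGT"


def make_nk_fitness(n, k, seed=0):
    """Build a toy NK-style fitness function over length-n sequences."""
    rng = random.Random(seed)
    # For each locus, pick the k other loci it is coupled to (random choice is an assumption).
    links = [rng.sample([j for j in range(n) if j != i], k) for i in range(n)]
    # One lookup table per locus: every state of (own letter + k linked letters)
    # maps to a random contribution in [0, 1).
    tables = [
        {state: rng.random() for state in itertools.product(ALPHABET, repeat=k + 1)}
        for _ in range(n)
    ]

    def fitness(genome):
        contributions = [
            tables[i][(genome[i],) + tuple(genome[j] for j in links[i])]
            for i in range(n)
        ]
        return sum(contributions) / n

    return fitness, links


fitness, links = make_nk_fitness(n=4, k=2)
print(fitness("ACTG"), fitness("GCTG"))
# Loci whose contribution is looked up afresh when locus 0 mutates:
# locus 0 itself, plus every locus that lists 0 among its links.
print([0] + [i for i in range(4) if 0 in links[i]])
```

With K = 0 a single mutation changes exactly one contribution. As K grows, the same mutation reshuffles the contributions of more and more loci.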

Here's a compelling visual metaphor for the idea, apropos of our times.

Yet the evolutionary reality is such that each mousetrap automatically "reloads", so that such a chaotic process can continue indefinitely.

But if sheer walls and steep falls surround you randomly, your position provides no actionable information.

Hence NK landscapes are "tunable".
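You can watch the dial turn with a small experiment. This is a hedged sketch, assuming a binary alphabet and randomly chosen links to keep the search space tiny; `count_local_optima` is a hypothetical helper that counts the sequences that are fitter than every one-letter neighbor.

```python
import itertools
import random


def count_local_optima(n, k, seed=0):
    """Count strict local optima of a toy binary NK landscape (one-bit-flip neighbors)."""
    rng = random.Random(seed)
    # Each locus depends on itself plus k randomly chosen other loci (an assumption).
    links = [rng.sample([j for j in range(n) if j != i], k) for i in range(n)]
    tables = [
        {s: rng.random() for s in itertools.product((0, 1), repeat=k + 1)}
        for _ in range(n)
    ]

    def fitness(g):
        return sum(
            tables[i][(g[i],) + tuple(g[j] for j in links[i])] for i in range(n)
        ) / n

    def neighbors(g):
        # Every genome reachable by flipping exactly one bit.
        for i in range(n):
            yield g[:i] + (1 - g[i],) + g[i + 1:]

    return sum(
        all(fitness(g) > fitness(m) for m in neighbors(g))
        for g in itertools.product((0, 1), repeat=n)
    )


for k in (0, 2, 4, 7):
    print(k, count_local_optima(n=8, k=k))
```

At K = 0 every locus can be optimized on its own, so there is a single peak. Raising K couples the loci and the landscape breaks up into many more local peaks.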

The answer should now be somewhat obvious: we've landed ourselves in a CC.

We stand atop a local optimum in which our networks optimize for an outcome (attentional engagement) that fundamentally precludes large-scale sensemaking and selective evolution at the emergent scale of society...

We've suffocated our adaptive capacity.

Well, the answer is obvious, despite the path to its realization being quite difficult:

We must create far more robust mechanisms of semi-permeability that insulate us from one another, contrary to the utopian naivete of the Religion of Openness.

But if you set the dial on openness to its maximum, you will simply preclude evolution itself.

Thus, the need for membrane-aware protocols.

And it is only through ensuring they're structured bottom-up that we may prevent their manipulation.

Yet such an extreme reaction carries with it the risk of the Error Catastrophe.

It precludes knowledge transfer, and lowers redundancy.

In other words, captains still sink alongside their ships.

These are the seeds we must plant.