A group of astronomers has reported finding phosphine in the atmosphere of Venus, a detection that is hard to explain other than by the presence of life. This is not at all conclusive, but should prompt further investigation.

nature.com/articles/s4155…

How might it matter if there were life? 1/6

The scientists don’t suggest intelligent life; we are probably talking about microbes. But this could still be a big deal. It would mean life either started independently there or was transported between bodies in our Solar System. Let’s focus on the former. 2/6

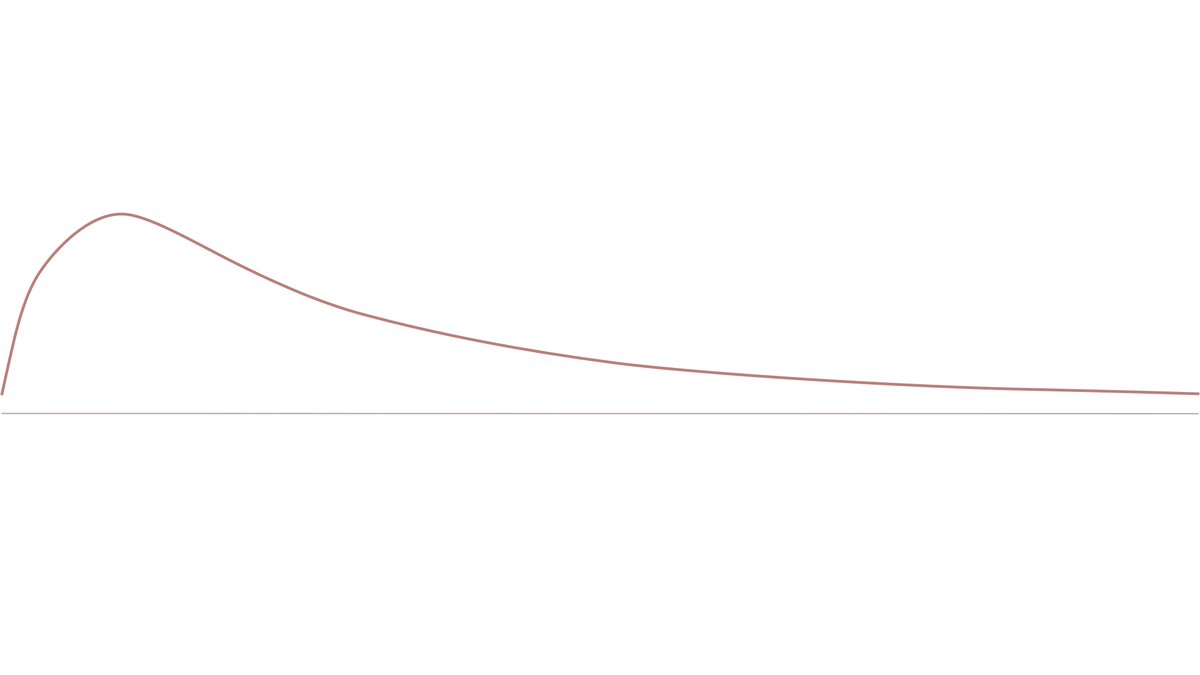

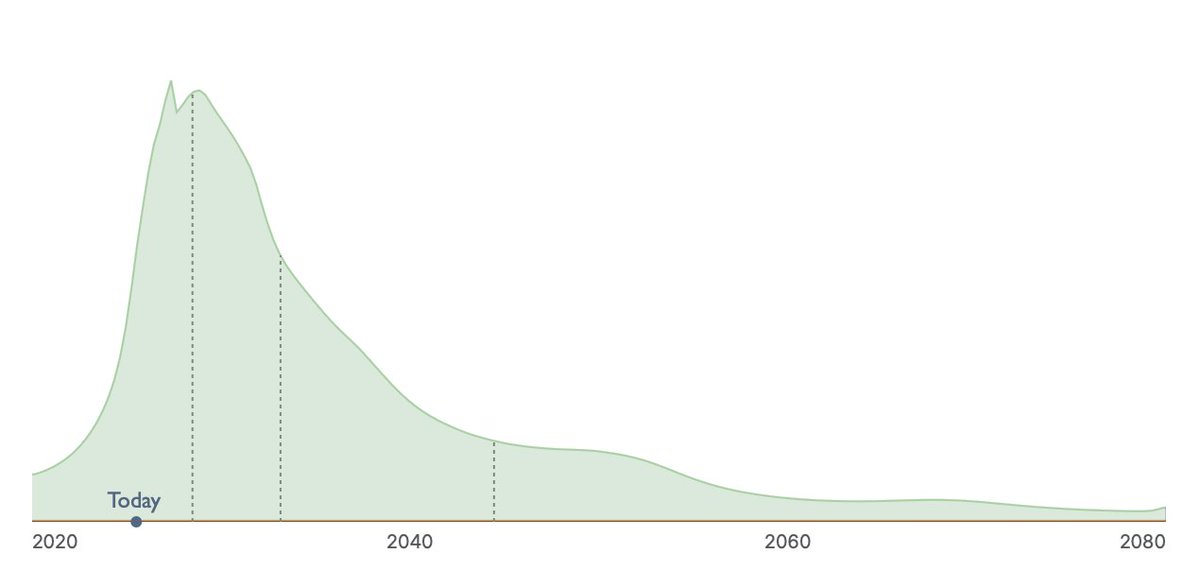

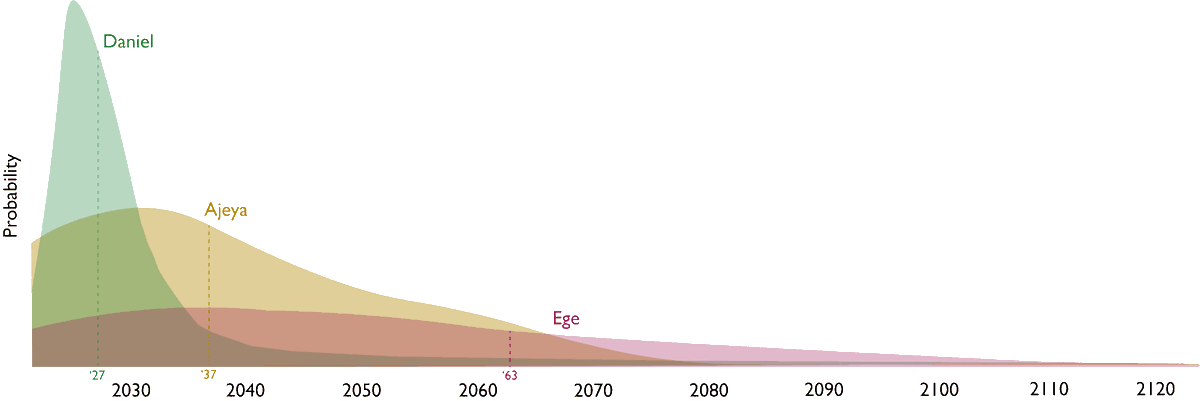

The possibility that it is extremely hard and rare for life to begin is currently the best explanation for why we don’t see signs of life elsewhere in the cosmos, despite the presence of so many stars in our galaxy and galaxies in the observable universe. 3/6

It is thus often seen as a downer. But many of the alternative explanations for the silence in the skies are worse. One prominent alternative is that technological civilisations inevitably destroy themselves. 4/6

If we did find independent life on other planets it would shift our credences away from the hypothesis that life is hard to start and towards the hypothesis that it is all too easy to end. This would be bad news for our prospects. 5/6

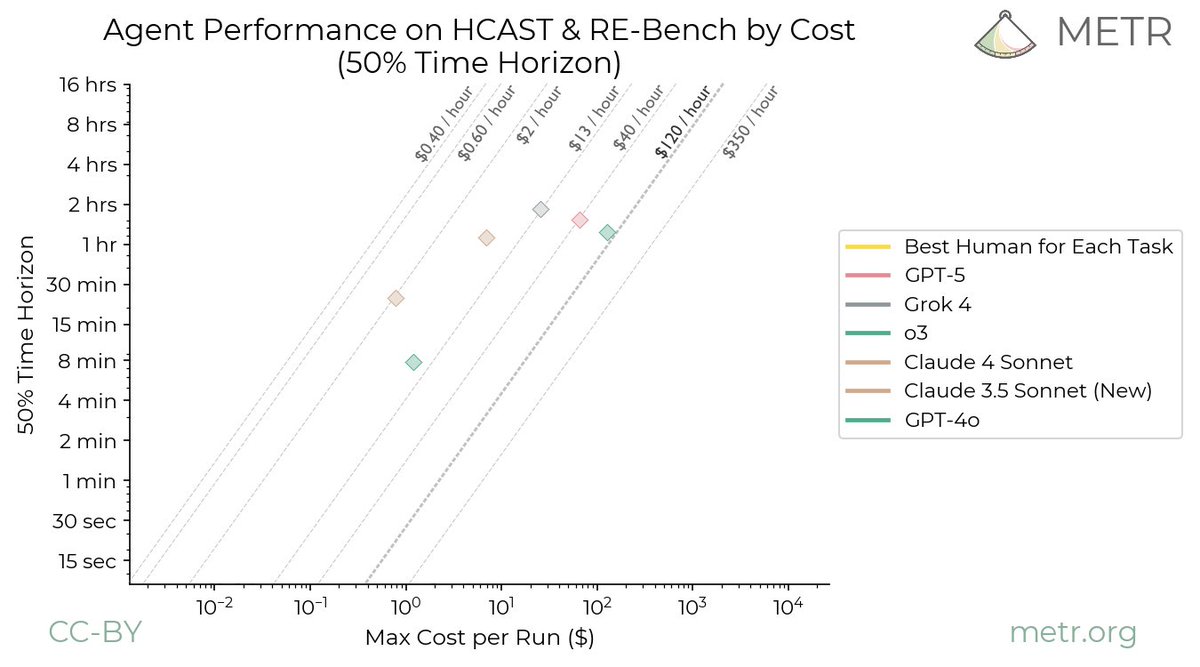
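
To make the shift in credences concrete, here is a minimal sketch of the Bayesian update. All numbers are illustrative assumptions, not figures from the paper, and for simplicity it treats "life is hard to start" and "life is easy to end" as the only two candidate explanations, which the thread itself says is not the case.

```python
def update_credences(priors, likelihoods):
    """Apply Bayes' rule across mutually exclusive hypotheses.

    priors:      hypothesis -> credence before the evidence
    likelihoods: hypothesis -> probability of the evidence if that hypothesis is true
    """
    unnormalised = {h: priors[h] * likelihoods[h] for h in priors}
    total = sum(unnormalised.values())
    return {h: weight / total for h, weight in unnormalised.items()}


# Hypothetical prior credences (assumed for illustration only).
priors = {"hard_to_start": 0.8, "easy_to_end": 0.2}

# Hypothetical chance of finding independent life on a neighbouring planet
# under each hypothesis: very unlikely if life is hard to start, and
# unsurprising if life starts easily but tends to end.
likelihoods = {"hard_to_start": 0.01, "easy_to_end": 0.5}

posteriors = update_credences(priors, likelihoods)
for hypothesis, credence in posteriors.items():
    print(f"{hypothesis}: {priors[hypothesis]:.2f} -> {credence:.2f}")
```

With these made-up inputs, most of the credence moves from "hard to start" to "easy to end". The size of the shift depends entirely on the assumed likelihoods; only the direction reflects the argument above.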

If you want all the details, I’ve written a paper on this with @anderssandberg and @KEricDrexler. 6/6

arxiv.org/abs/1806.02404
