In one of the more hilariously meta developments in recent Twitter botting history, retweet-to-win tweets from @SeigRobotics offering free access to some sort of Twitter botting tool are being retweeted by a bunch of bots. #MondayMotivation

cc: @ZellaQuixote

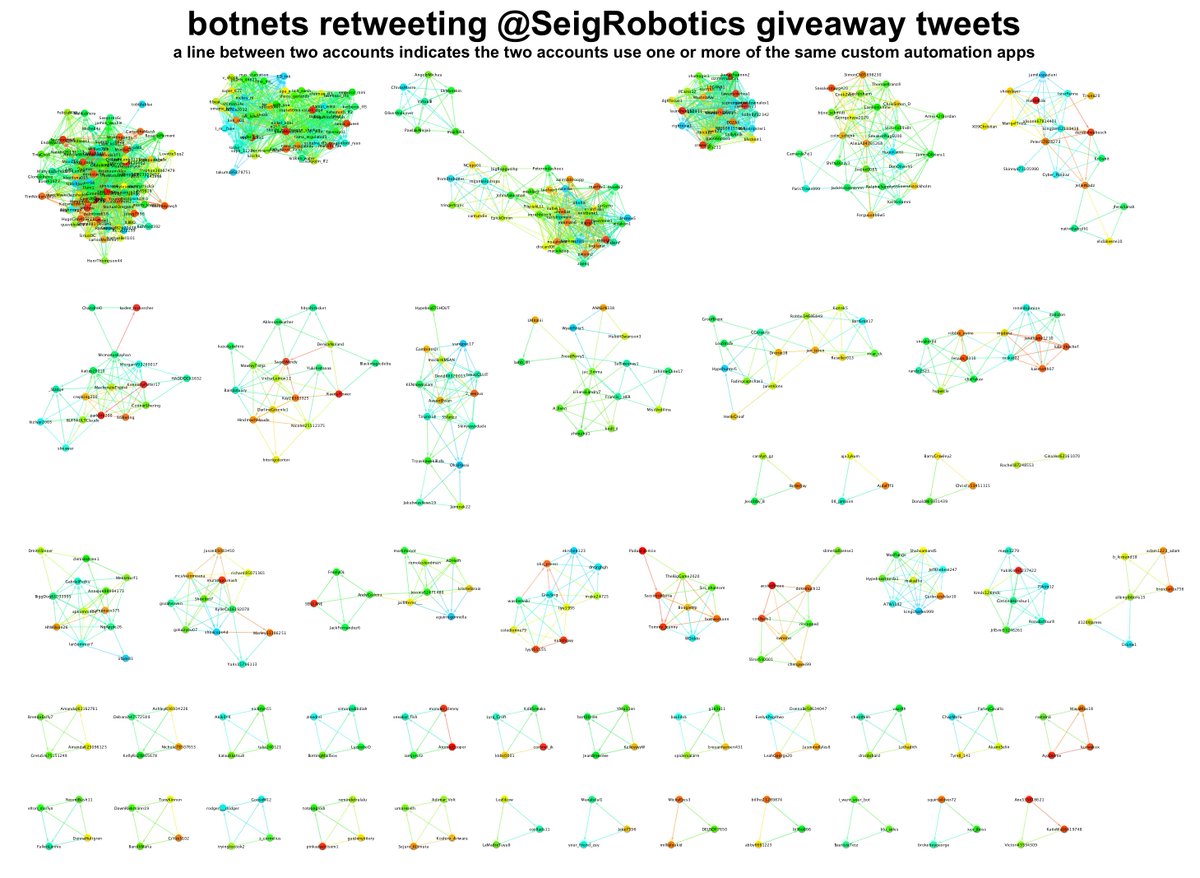

We found 321 accounts that used one or more custom automation apps to retweet one or more of @SeigRobotics's recent tweets. We then looked at the retweets of other tweets those accounts had retweeted to find members of the same networks that haven't (yet) retweeted @SeigRobotics.

We found 49 groups of automated accounts (536 accounts total), each using a separate set of custom automation apps. (It's quite possible that there are fewer than 49 distinct botnets, as several of the smaller groups were created on the same day.)
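The grouping step can be sketched roughly as follows. This is a minimal illustration rather than the tooling actually used for this thread: it assumes the retweet data is available as (account, app name) pairs, that `OFFICIAL_APPS` is a hand-maintained list of official client names, and that two accounts belong to the same group when they are linked through a chain of shared custom apps. The function name `group_by_shared_apps` is made up for this example.

```python
from collections import defaultdict

# Assumed list of official client names; the thread does not specify one.
OFFICIAL_APPS = {"Twitter for iPhone", "Twitter for Android", "Twitter Web App"}


def group_by_shared_apps(retweets):
    """Group accounts that are linked through shared custom apps.

    retweets: iterable of (account, app_name) pairs.
    Returns a list of sets of accounts, largest group first.
    """
    parent = {}

    def find(x):
        # Union-find lookup with path halving.
        parent.setdefault(x, x)
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    def union(a, b):
        ra, rb = find(a), find(b)
        if ra != rb:
            parent[ra] = rb

    accounts = set()
    for account, app in retweets:
        if app in OFFICIAL_APPS:
            continue  # exact-match official clients are not treated as custom
        accounts.add(account)
        # Accounts and apps are separate node types in one bipartite graph.
        union(("account", account), ("app", app))

    groups = defaultdict(set)
    for account in accounts:
        groups[find(("account", account))].add(account)
    return sorted(groups.values(), key=len, reverse=True)


sample = [
    ("a", "seig_bot"), ("b", "seig_bot"),
    ("c", "Biyon"), ("c", "Biyon Pro"), ("d", "Biyon Pro"),
    ("e", "Twitter for iPhone"),
]
groups = group_by_shared_apps(sample)
```

With the sample data, accounts `a` and `b` end up in one group, `c` and `d` in another, and `e` is dropped because it only used an official client. Grouping this way cannot tell apart one operator running several app sets from several operators, which is why the number of groups is only an upper bound on the number of botnets.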

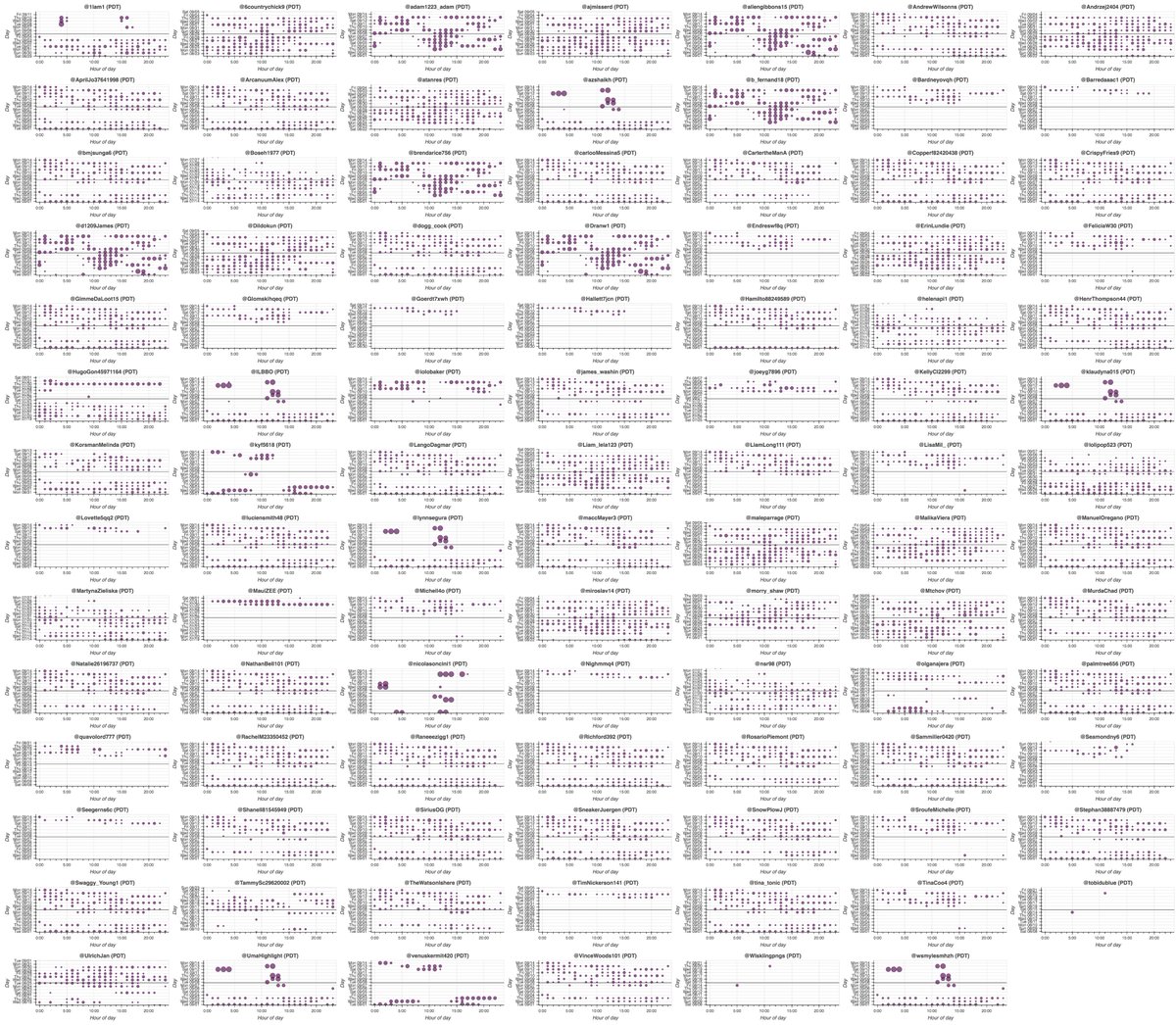

We looked at some of the larger networks. First, we have 29 accounts created over two hours on August 8th, 2020, each of which has exactly one tweet: a retweet of @SeigRobotics's most recent tweet, sent via an app called "seig_bot".

Next, we have a group of 97 accounts tweeting via a myriad of apps, most of which have names similar to official Twitter products but with extra spaces in them, e.g. "Twitter for iPhone ". These accounts retweet tweets from a variety of proxy and botting software providers.
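One way to flag app names like these is to compare each name against a list of official clients after collapsing whitespace. The sketch below is illustrative only: `OFFICIAL_APPS` is an assumed, hand-maintained list and `is_lookalike` is a hypothetical helper, not something taken from the analysis above.

```python
# Assumed list of official client names; extend as needed.
OFFICIAL_APPS = {"Twitter for iPhone", "Twitter for Android", "Twitter Web App"}


def is_lookalike(app_name):
    """Return True if app_name matches an official client only after
    leading, trailing, or repeated whitespace is removed."""
    normalized = " ".join(app_name.split())
    return normalized in OFFICIAL_APPS and app_name != normalized


names = ["Twitter for iPhone ", "Twitter for iPhone", "Twitter  for Android", "seig_bot"]
flags = [is_lookalike(n) for n in names]
```

Here the first and third names are flagged, the genuine "Twitter for iPhone" is not, and "seig_bot" is ignored because it does not resemble an official client at all. This only catches whitespace tricks; names that swap in similar-looking characters would need a different check.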

Moving on, here's a group of 53 accounts tweeting via apps named "Biyon≡( ε:)" and "Biyon≡( ε:) Pro". Most of these accounts were made back in 2013 and originally amplified Japanese tweets, but some have reawakened and are now boosting English retweet-to-win tweets.

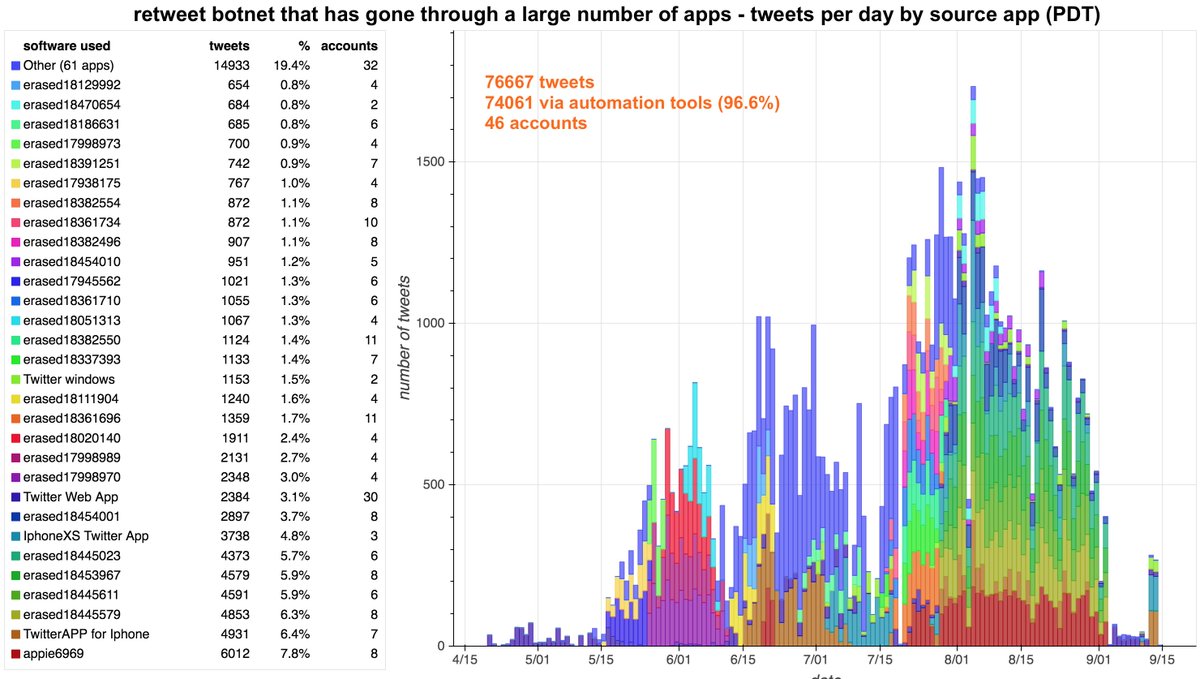

The last retweet-to-win botnet we'll look at for now consists of 46 accounts, and has tweeted via 90 different custom apps in 5 months. All but 3 of these apps have been shut down (the "erasedXXXXXXXX" apps in the legend) - whether by the bot operator(s) or by Twitter is unclear.
