How some sociologists think games might have stopped a Marxist revolution: a thread.

When people are bored at work, they play games. There is graffiti in the Pyramids suggesting that work was done on teams with names like "Drunkards of Menkaure," competing for extra beer. 1/n

When people are bored at work, they play games. There is graffiti in the Pyramids suggesting that work was done on teams with names like "Drunkards of Menkaure," competing for extra beer. 1/n

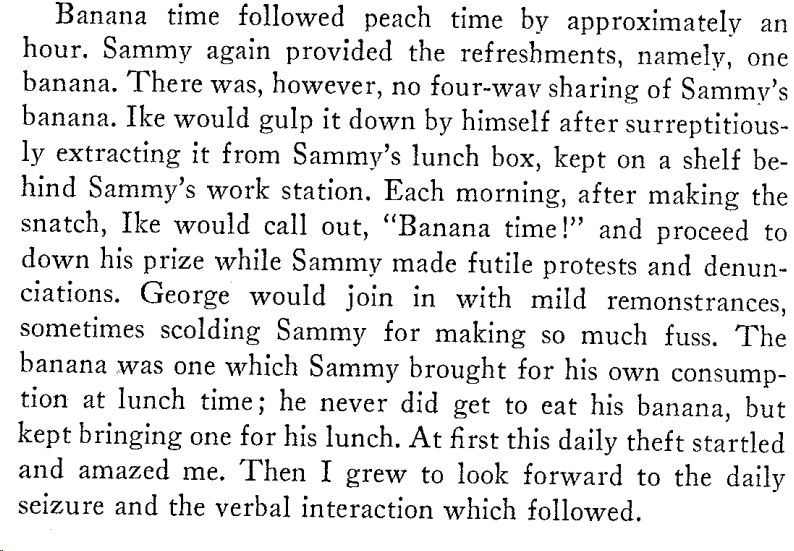

In the grind of early 20th century factories, sociologists working undercover found games everywhere. See this passage from the famous "Banana Time" (the paper is a great read, since it is clear that the other workers were mocking Roy, who didn't get it) 2 faculty.knox.edu/fmcandre/roy-b…

Another undercover sociologist, Burawoy (working at the same factory as Roy, many years later), noticed something interesting: though games were seen as a time waster and act of rebellion by workers, they were often secretly tolerated by bosses. Why? 3/n

He suggested that it was because workers playing games competed with each other, rather than focusing on management. The combination of minor victories in games and competition created "consent" to work & kept workers from rising in rebellion over their working conditions! 4/

• • •

Missing some Tweet in this thread? You can try to

force a refresh