Psychology experiments need to get people to react emotionally very quickly. How do they do it? Movie clips! These are the scientifically vetted clips historically used to elicit emotion.

For fear 😱 the choice is pretty obvious. 1/4

For anger 😡, either the police abuse scene from Cry Freedom (the clip isn’t online) or else this scene from The Bodyguard 2/4

For sadness 😭 this scene from The Champ even beats the death of Bambi’s mother. 3/4

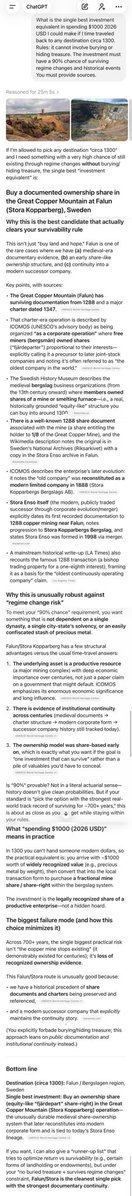

Since the study is older, the clips skew toward classic films. Here is the ranking based on lab studies: bpl.berkeley.edu/docs/48-Emotio… 4/4
