Let's talk a bit about forest management. There is growing acknowledgement among (some) policymakers that we need to tackle the combination of climate change, fuel buildup in our forests, and development in high-risk wildland-urban interface areas.

A thread: 1/15

https://twitter.com/TedNordhaus/status/1310964093105266689

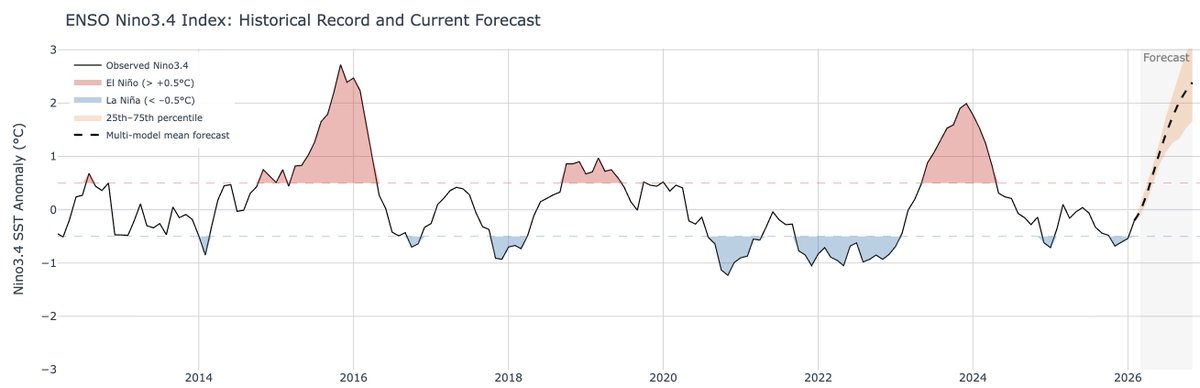

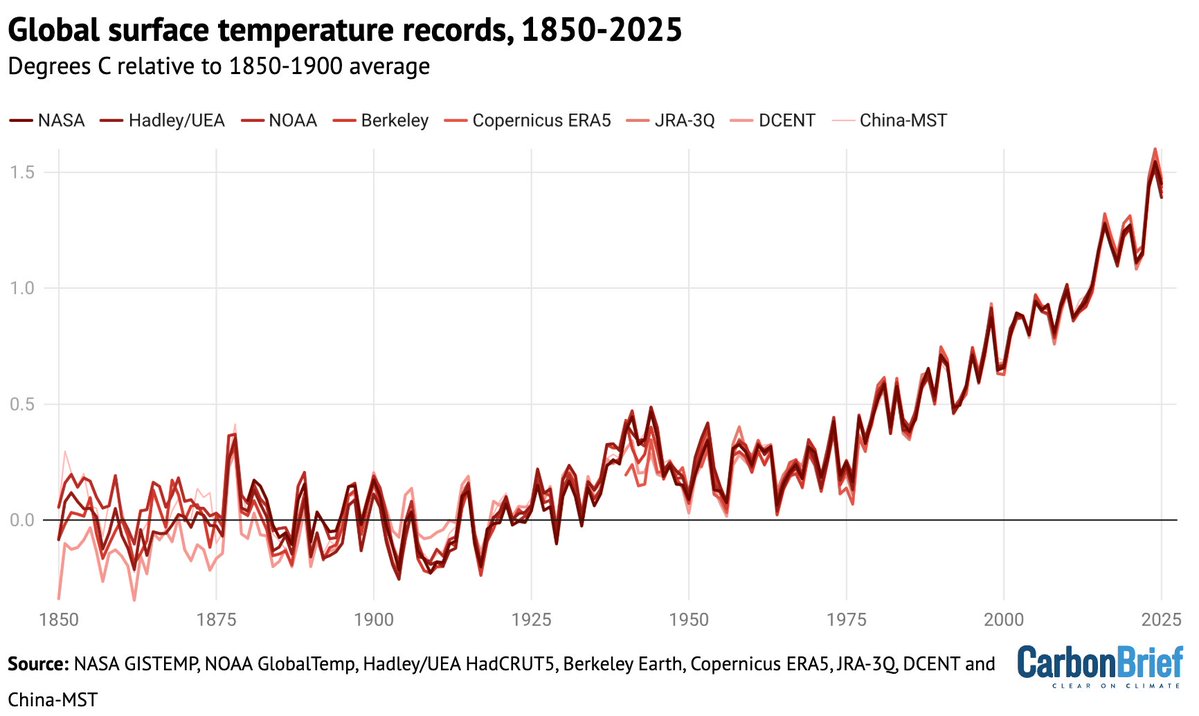

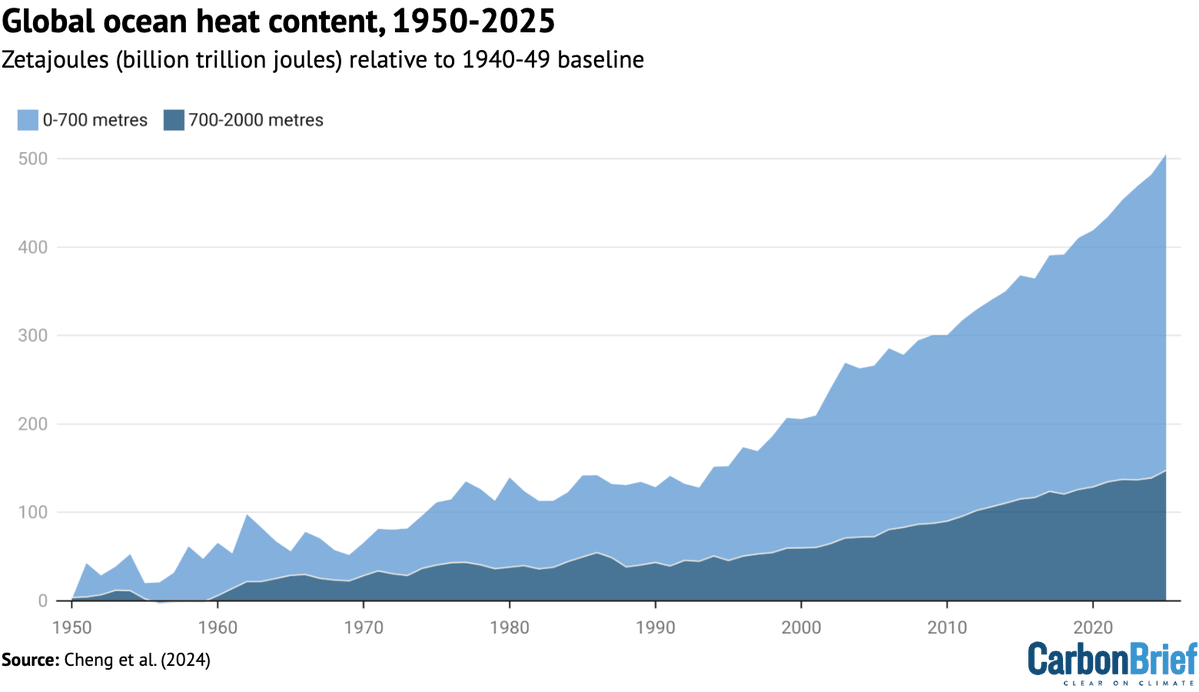

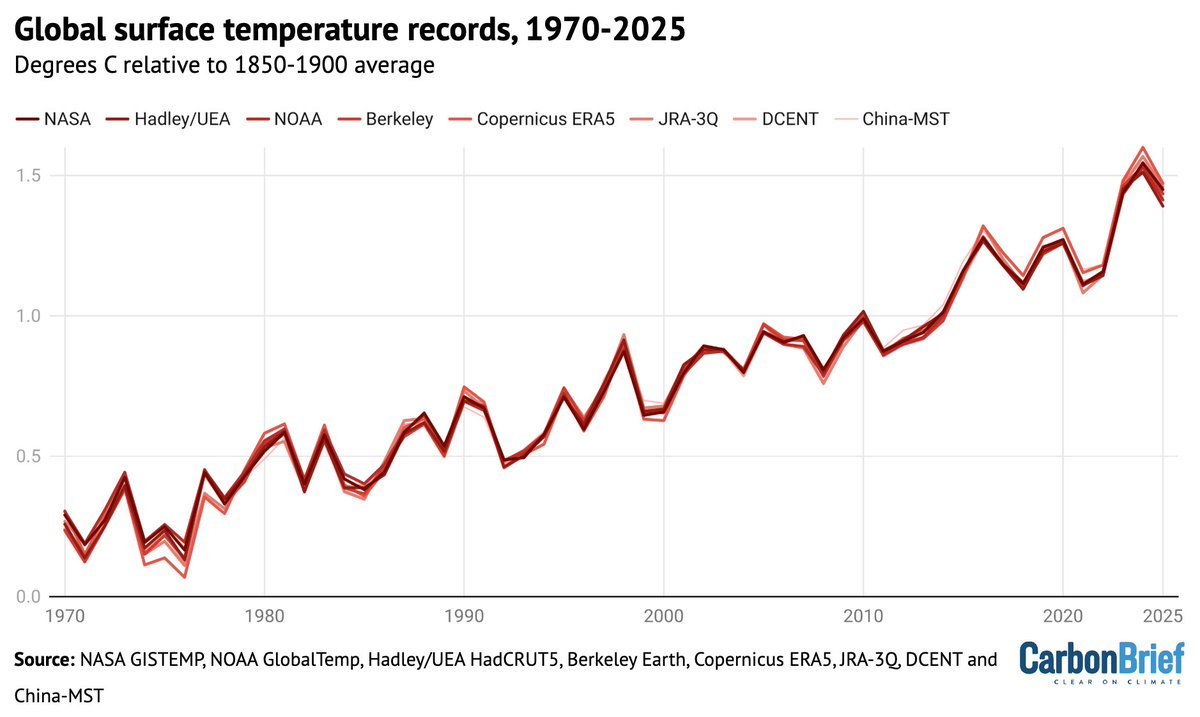

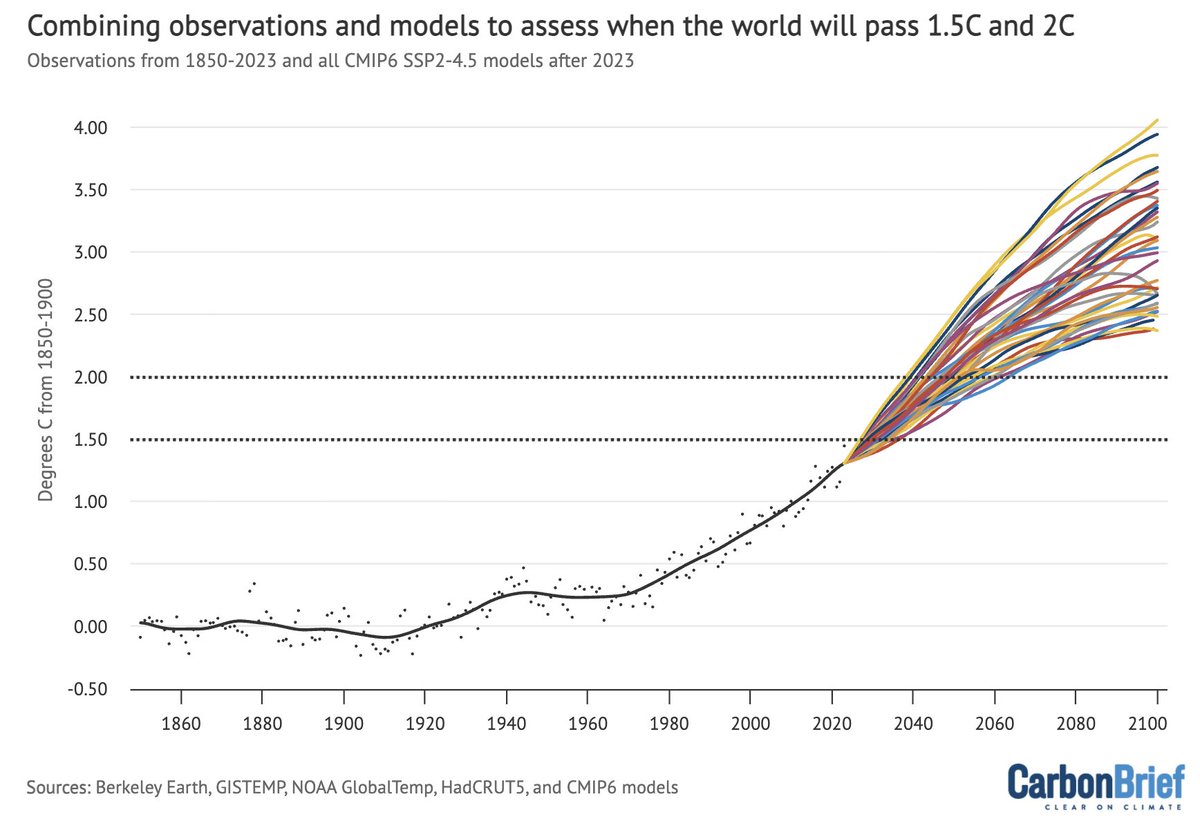

First of all, we all acknowledge that climate change has played a major role in making wildfires worse. Human emissions of greenhouse gases have increased spring and summer temperatures by around 2°C in the Western U.S. over the past century. 2/15

This has extended both the area and time periods in which forests burn; in parts of California, fire season is now 50 days longer. The recent NCA4 suggested that about half the increase in burned area in the Western U.S. since the 1980s can be attributed to a changing climate. 3/15

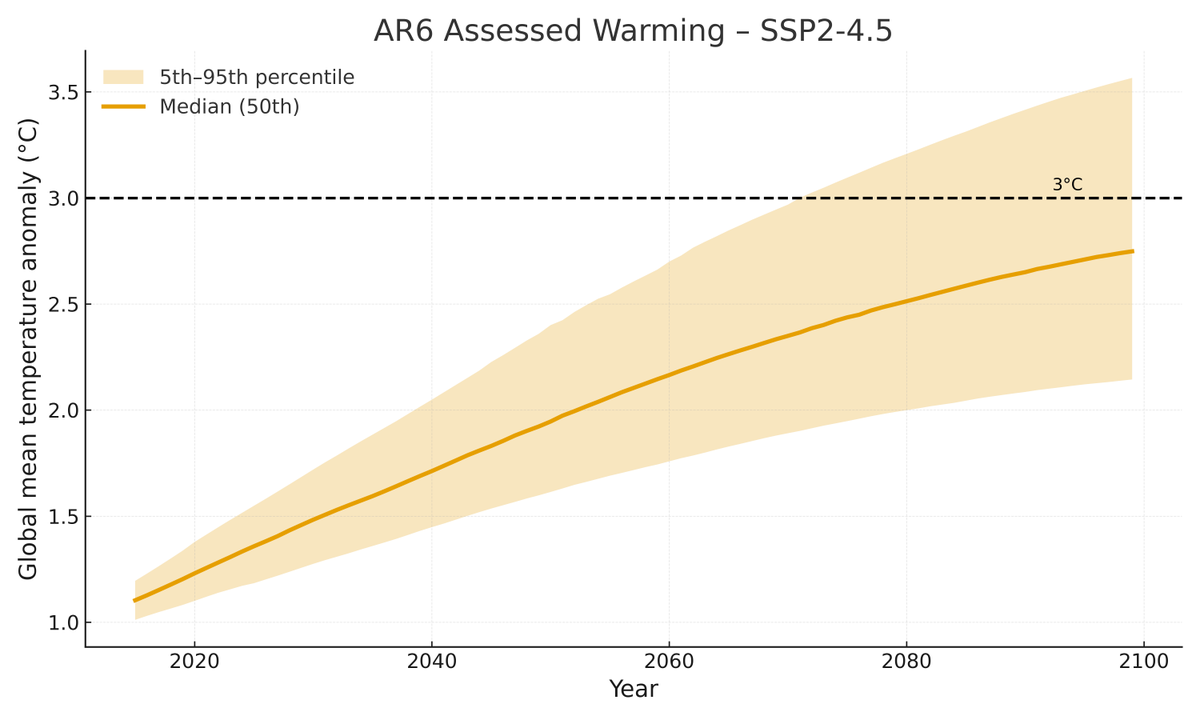

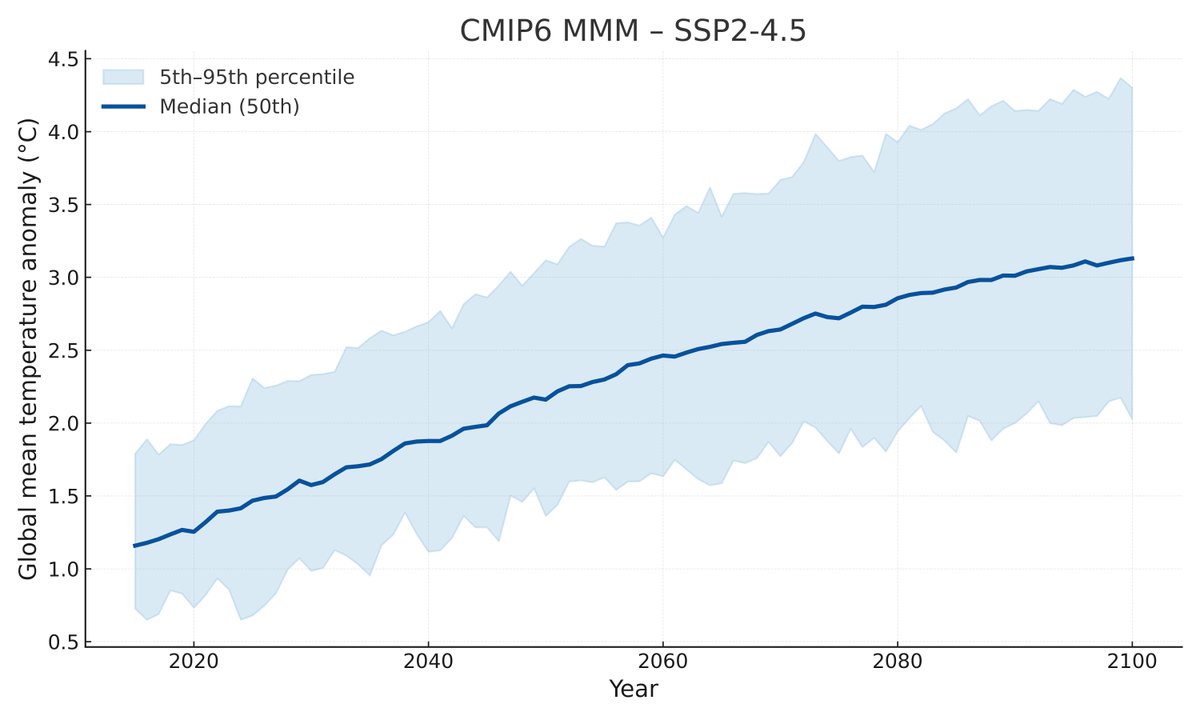

However, even if we were to magically slash our emissions to zero tomorrow, the climate would simply stop warming, not return to the conditions of the 1970s. The best we can hope for is to make our current climate the new normal and avoid making things potentially much worse. 4/15

To reduce the severity of wildfires in our current climate, we need to improve forest management. We need to deal with the legacy of a century of overzealous fire suppression efforts in ecosystems adapted to frequent low-level burns. 5/15

We need to start controlling fires instead of extinguishing them, thinning small trees in some regions and doing controlled burns to clear out accumulated fuels. Some estimates suggest that 20 million acres will need to be thinned and/or burned to minimize fire risk. 6/15

At the same time, we need to allow the best available science to guide us and avoid extreme logging of our public forests under the guise of fire mitigation. We need to work to return to a regime where we can both actively manage forests and control natural ignitions. 7/15

We also need to streamline regulations around prescribed burns and thinning, removing red tape that trades short-term improvements in air quality for orange-sky catastrophes down the road. 8/15

We need to work closely with communities to get buy-in for forest management solutions and tailor interventions to what works best for their surrounding ecosystem and their socioeconomic reality. What works for Malibu and Paradise may be quite different! 9/15

We need to work with and learn from native fire practitioners who understand the land and have generations of experience with effective management techniques. We also need to institute better liability protections for groups undertaking prescribed burns. 10/15

We need to work from communities out, intensively managing areas in the wildland-urban interface, but also acknowledge the need to eventually do prescribed burns and other management in more remote wildland regions to avoid the air quality disasters associated with megafires. 11/15

We need to provide significantly more resources to harden homes and communities, paying for ember-resistant vent screens, defensible space clearing, and other cost-effective risk-reduction measures. 12/15

But we also need to deal with the drivers behind much of the wildland-urban interface expansion in California: our limited housing stock and astronomical prices. More housing and more affordable housing in urban areas can go a long way to reducing assets at risk. 13/15

Overall, it's past time we gave forest management and wildfire risk reduction the resources they deserve. The 1989 Loma Prieta earthquake caused $10 billion in damages, but we spent $70 billion on earthquake retrofits after it occurred. 14/15

Yet despite hundreds of billions in losses from wildfires over the past five years, we spend only a small fraction on wildfire risk reduction of what we spend on earthquake safety. While simply throwing money at the problem won't solve it, more resources are essential. 15/15
