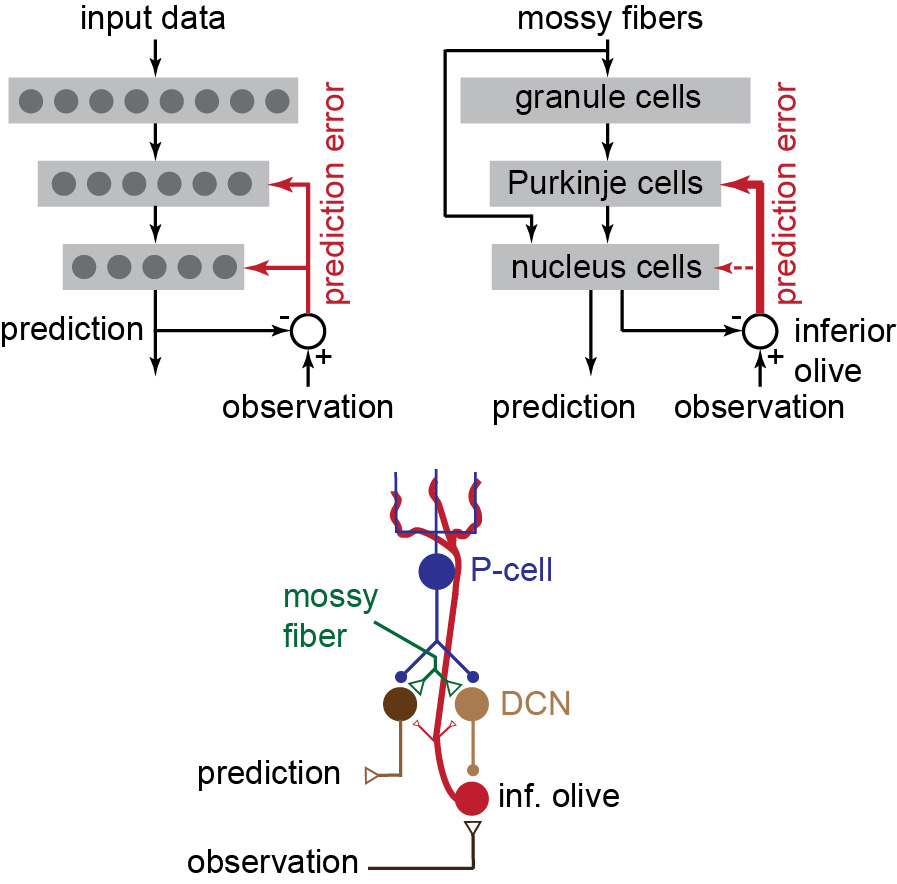

From a machine learning perspective, there is something quite odd about the cerebellum: error information about the activity of the output layer neurons is sent strongly to the middle layer neurons (Purkinje cells), but not to the output layer neurons themselves.

Why?

In this article, I look at this problem using elementary mathematics of machine learning. The result is an idea about population coding in the cerebellum.

reprints.shadmehrlab.org/Shadmehr_JNP20…

It is possible that this coding contributes to curious features of behavior during adaptation, including multiple timescales of learning, protection from erasure, and spontaneous recovery of memory.

Your feedback is most welcome.
