Convolution is not the easiest operation to understand: it involves functions, sums, and two moving parts.

However, there is an illuminating explanation — with probability theory!

There is a whole new aspect of convolution that you (probably) haven't seen before.

🧵 👇🏽

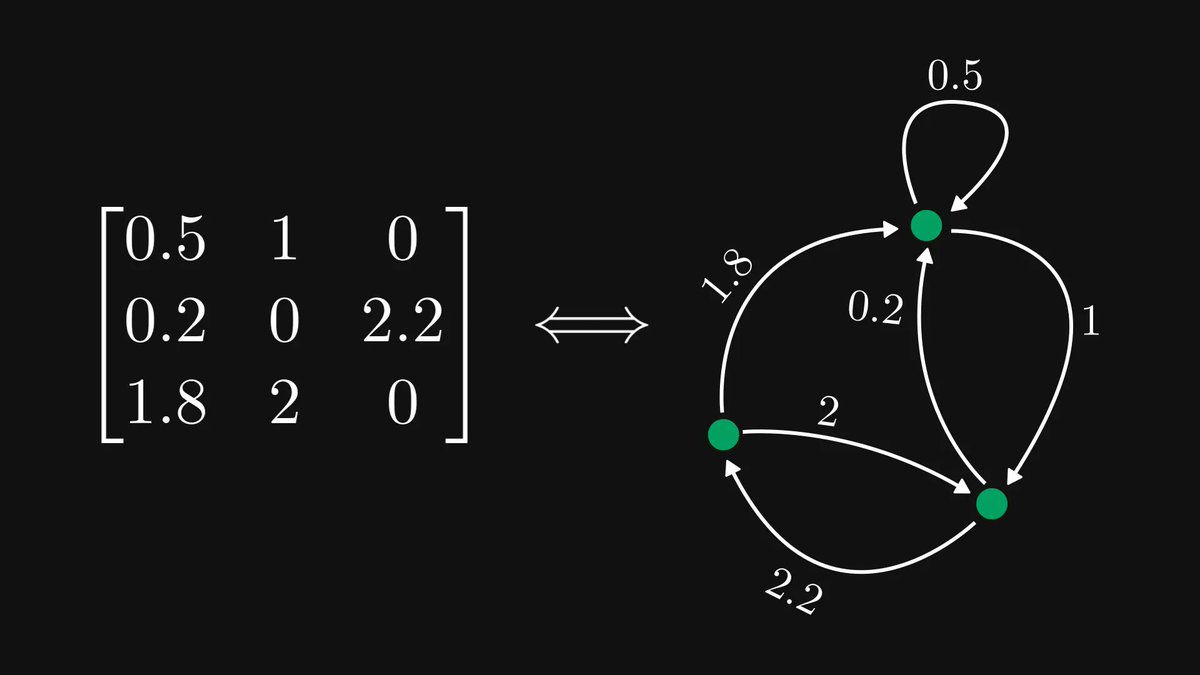

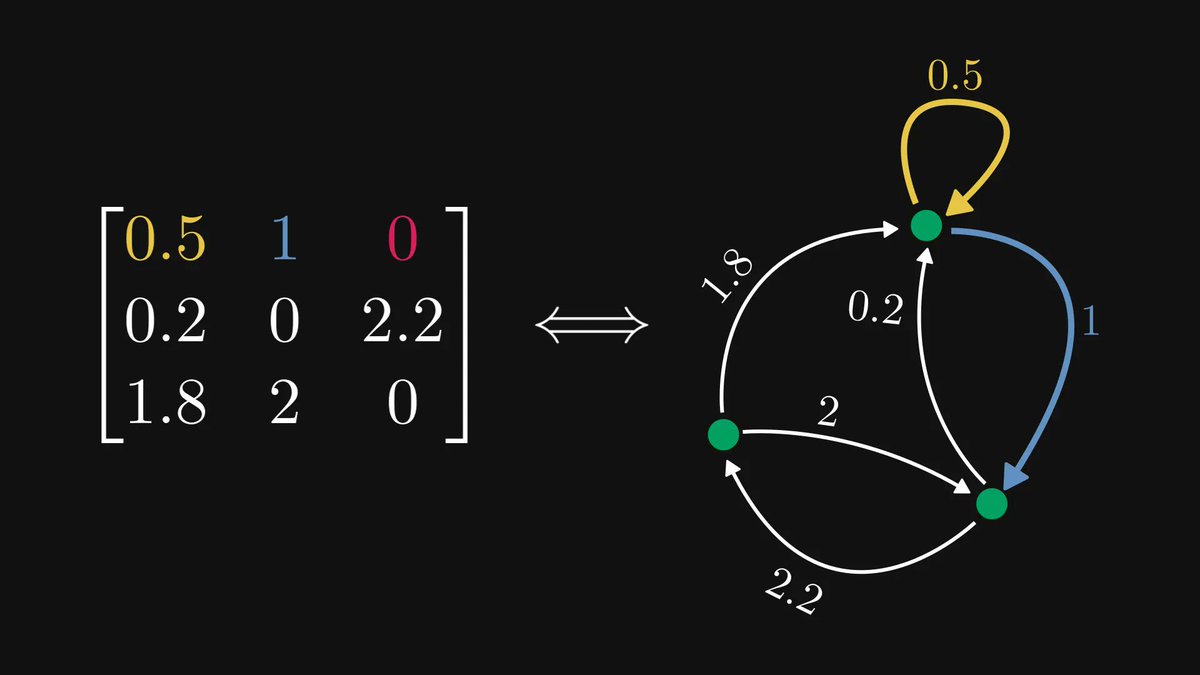

In machine learning, convolutions are most often applied to images, but to make our job easier, we shall take a step back and go to one dimension.

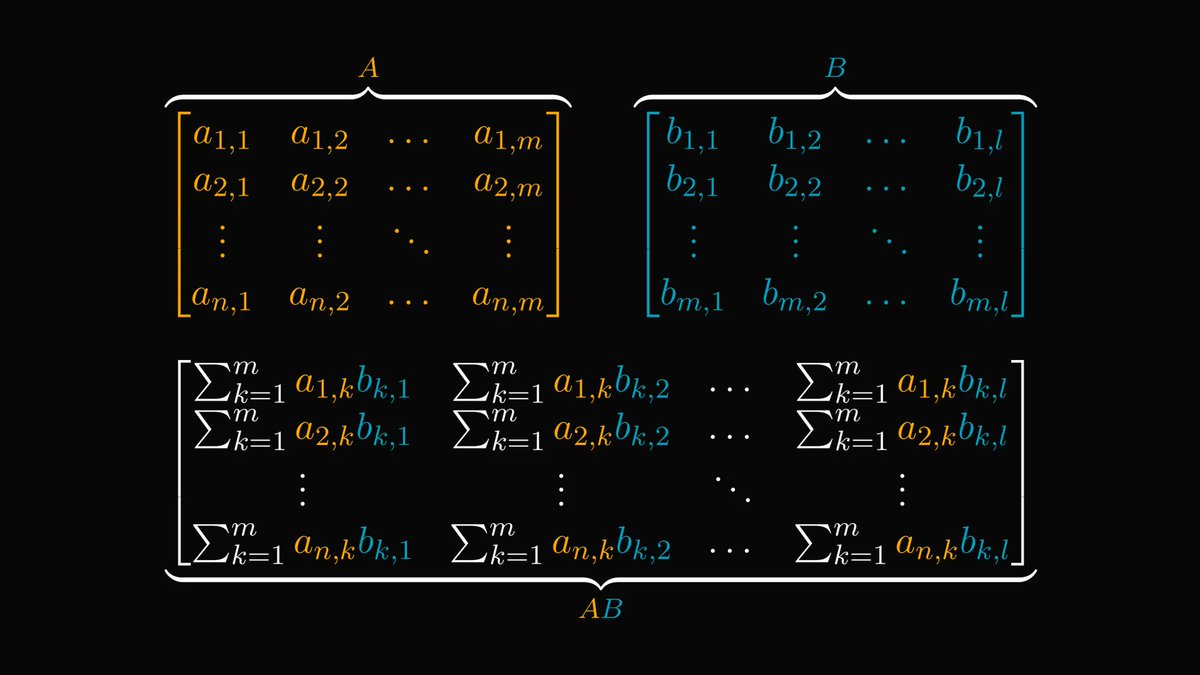

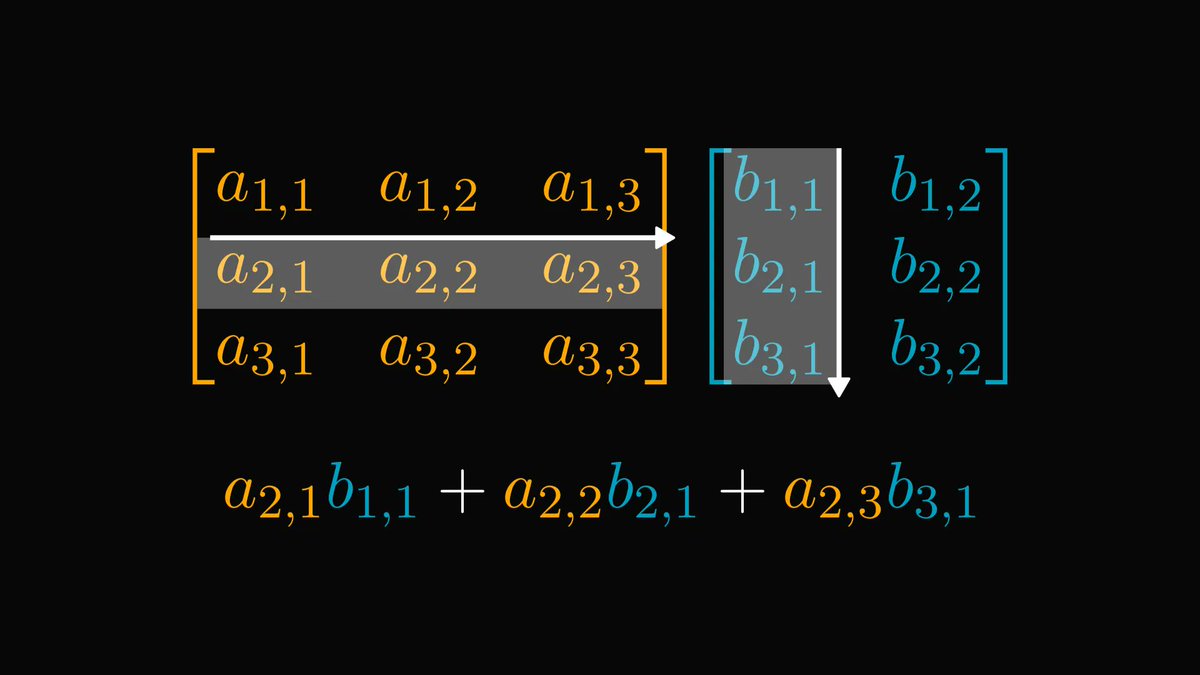

There, convolution is defined as below.

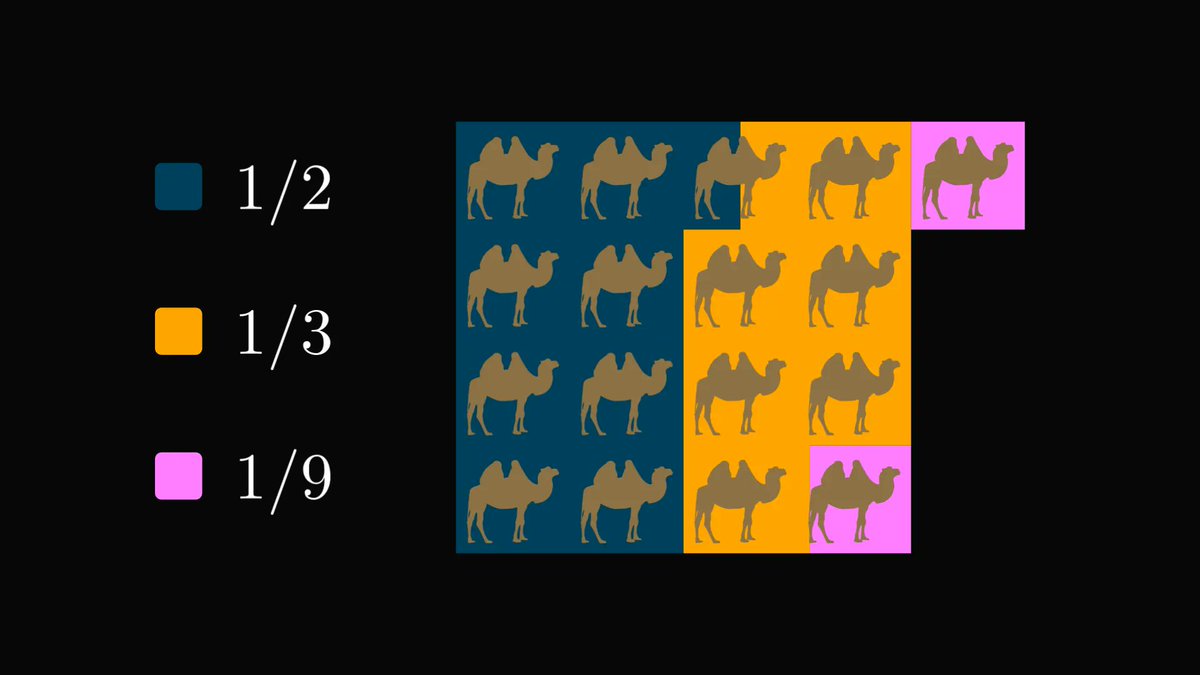
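
In case the image does not render, here are the standard one-dimensional definitions in common textbook notation. This is a sketch: the symbols in the image may differ.

```latex
% Discrete convolution of two sequences f and g
(f * g)(n) = \sum_{k=-\infty}^{\infty} f(k)\, g(n - k)

% Continuous convolution of two functions f and g
(f * g)(x) = \int_{-\infty}^{\infty} f(t)\, g(x - t)\, dt
```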

Now, let's forget about these formulas for a while, and talk about a simple probability distribution: we toss two 6-sided dice and study the resulting values.

To formalize the problem, let 𝑋 and 𝑌 be two random variables, describing the outcome of the first and second toss.

Just for fun, let's also assume that the dice are not fair, and they don't follow a uniform distribution.

The distributions might be completely different.

We only have a single condition: 𝑋 and 𝑌 are independent.

What is the distribution of the sum 𝑋 + 𝑌?

Let's see a simple example. What is the probability that the sum is 4?

That can happen in three ways:

𝑋 = 1 and 𝑌 = 3,

𝑋 = 2 and 𝑌 = 2,

𝑋 = 3 and 𝑌 = 1.

Since 𝑋 and 𝑌 are independent, the joint probability can be calculated by multiplying the individual probabilities together.

Moreover, the three possible ways are disjoint, so the probabilities can be summed up.

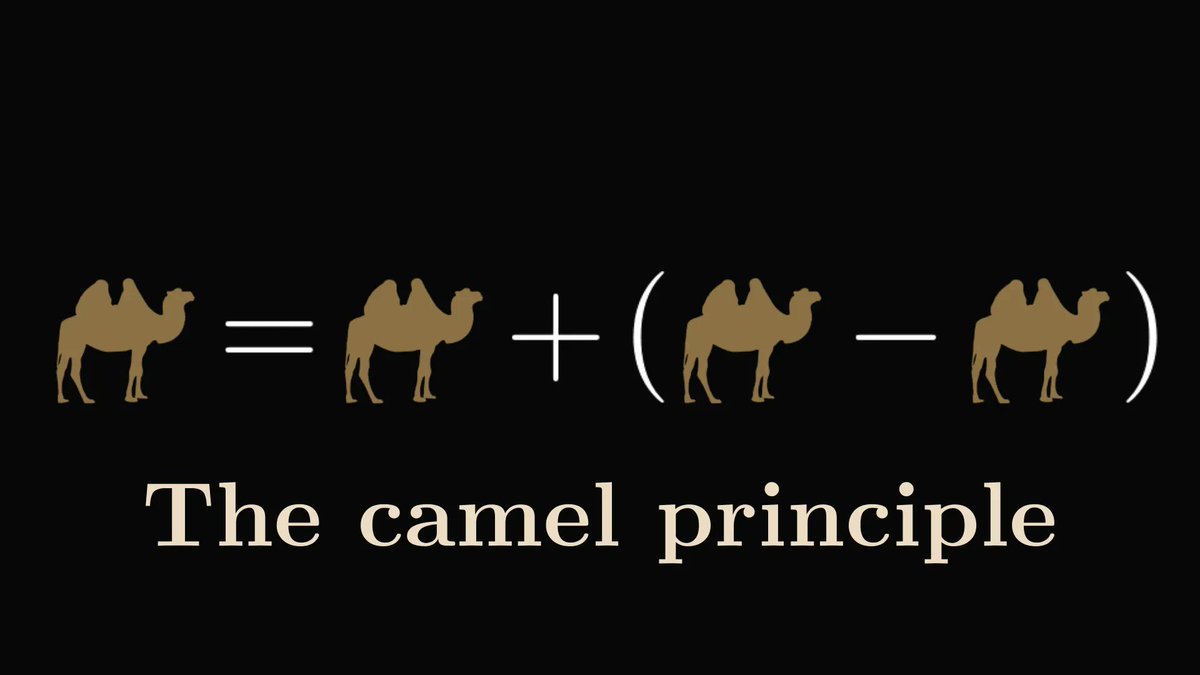
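
To make the three-term sum concrete, here is a minimal Python sketch. The dictionaries `p_x` and `p_y` are hypothetical distributions for two unfair dice; the numbers are made up for illustration and do not come from the thread.

```python
# Hypothetical probability mass functions for two unfair dice.
# The values are invented for illustration; each one sums to 1.
p_x = {1: 0.10, 2: 0.20, 3: 0.30, 4: 0.20, 5: 0.10, 6: 0.10}
p_y = {1: 0.25, 2: 0.25, 3: 0.20, 4: 0.10, 5: 0.10, 6: 0.10}

# Independence lets us multiply inside each case;
# disjointness lets us add the three cases together.
p_sum_is_4 = (
    p_x[1] * p_y[3]    # X = 1 and Y = 3
    + p_x[2] * p_y[2]  # X = 2 and Y = 2
    + p_x[3] * p_y[1]  # X = 3 and Y = 1
)

print(p_sum_is_4)  # roughly 0.145, up to floating-point rounding
```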

In the general case, the formula is the following.

(Don't worry about the index going from minus infinity to infinity. Except for a few terms, all others are zero, so they are eliminated.)

Is it getting familiar?

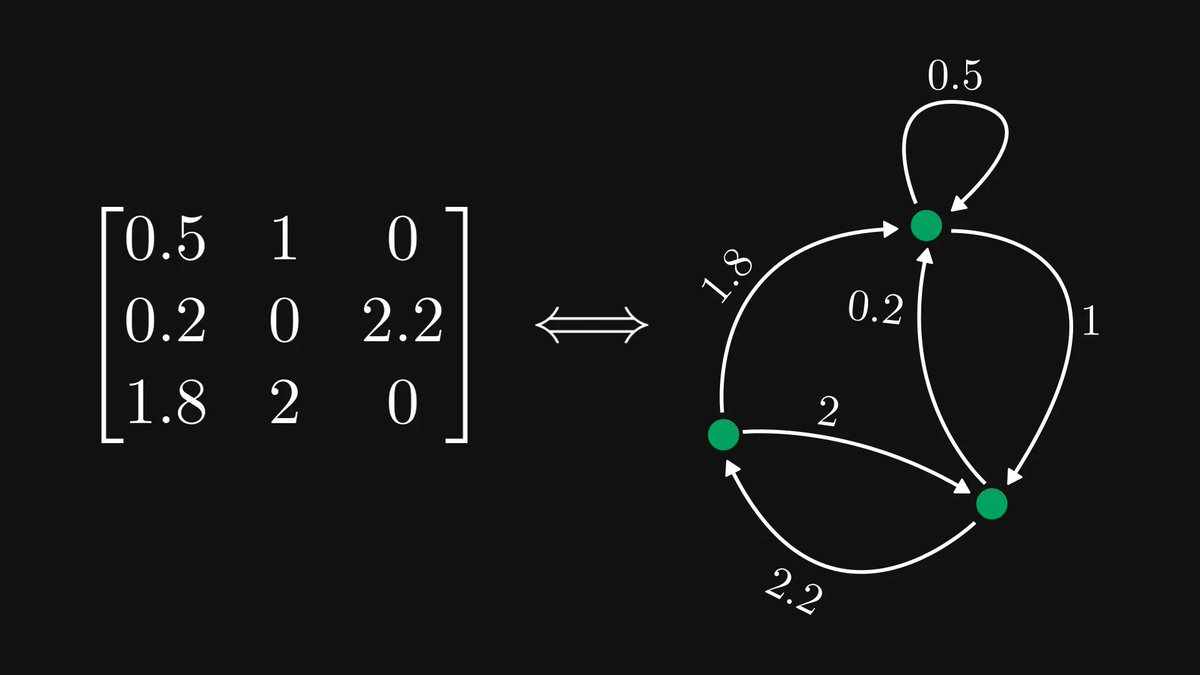
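
The general formula can be sketched in a few lines of Python. The helper name `pmf_of_sum` and the two example distributions are assumptions made up for this illustration. Note how `.get(n - k, 0.0)` supplies the zero terms, which is why the infinite index range is harmless.

```python
# Hypothetical distributions for two unfair dice (invented values).
p_x = {1: 0.10, 2: 0.20, 3: 0.30, 4: 0.20, 5: 0.10, 6: 0.10}
p_y = {1: 0.25, 2: 0.25, 3: 0.20, 4: 0.10, 5: 0.10, 6: 0.10}


def pmf_of_sum(p_x, p_y, n):
    """P(X + Y = n) for independent X and Y: sum over k of P(X = k) * P(Y = n - k)."""
    # Only k values with nonzero P(X = k) are visited; if n - k is not
    # a face of the second die, .get returns 0.0 and the term vanishes.
    return sum(p * p_y.get(n - k, 0.0) for k, p in p_x.items())


# Two 6-sided dice can only sum to 2, 3, ..., 12.
dist = {n: pmf_of_sum(p_x, p_y, n) for n in range(2, 13)}

for n, p in dist.items():
    print(n, round(p, 4))
```

Asking for an impossible sum, say `pmf_of_sum(p_x, p_y, 1)`, simply returns zero because every term vanishes.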

This is convolution.

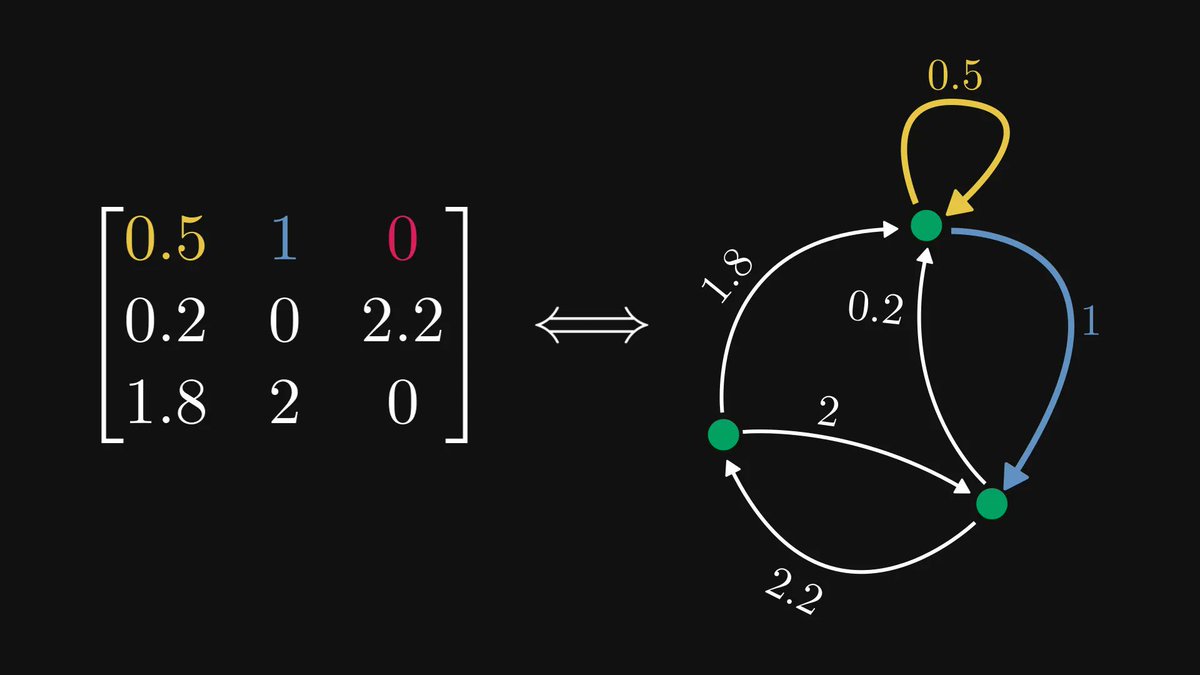

We can immediately see this by denoting the individual distributions with 𝑓 and 𝑔.

The same explanation works when the random variables are continuous, or even multidimensional.

The only thing required is independence.
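
If you want to check this numerically, here is a small sketch using NumPy's `np.convolve`. The arrays `f` and `g` are hypothetical distributions of two unfair dice (made-up values), with index 0 standing for the face 1.

```python
import numpy as np

# Hypothetical distributions: f[i] = P(X = i + 1), g[i] = P(Y = i + 1).
f = np.array([0.10, 0.20, 0.30, 0.20, 0.10, 0.10])
g = np.array([0.25, 0.25, 0.20, 0.10, 0.10, 0.10])

# Full discrete convolution: 6 + 6 - 1 = 11 entries, one per possible sum.
h = np.convolve(f, g)

# Because index 0 stands for face 1, h[i] is the probability of the sum i + 2.
sums = np.arange(2, 13)
for s, p in zip(sums, h):
    print(s, round(float(p), 4))
```

With this indexing, `h[2]` is the probability that the sum is 4, and the whole array adds up to 1 (up to rounding), as a distribution should.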

Even though images are not probability distributions, this viewpoint helps us make sense of the moving parts and the everyone-with-everyone sum.

If you would like to see an even simpler visualization, just think about this:

https://twitter.com/TivadarDanka/status/1381620364007116802
