*Reproducible Deep Learning*

The first two exercises are out!

We start quickly and easily, with some simple manipulation of Git branches, scripting, audio classification, and configuration with @Hydra_Framework.

Small thread with all information 🙃 /n

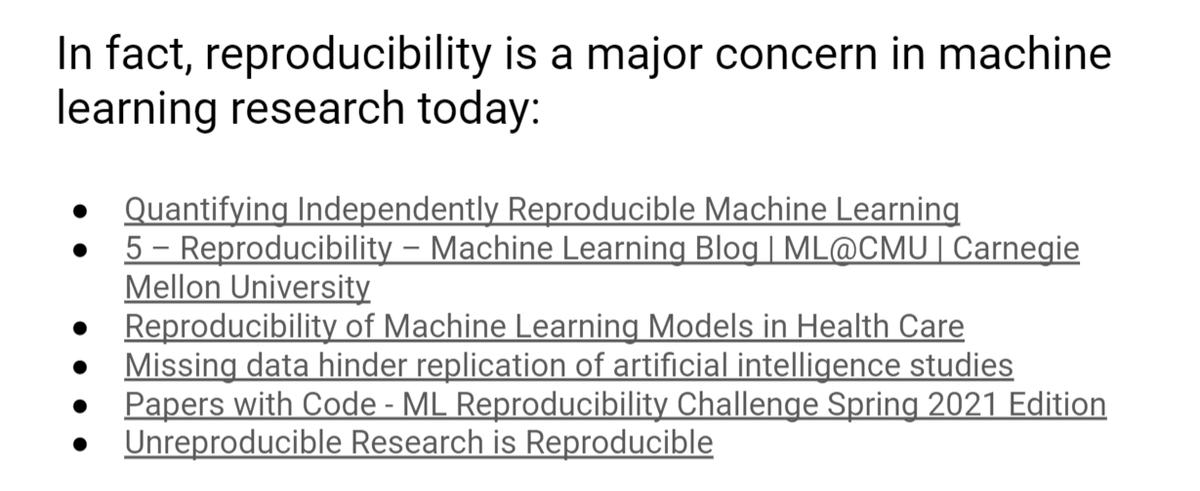

Reproducibility is usually associated with production environments and MLOps, but today it is also a major concern in the research community.

My biased introduction to the issue is here: docs.google.com/presentation/d…

The local setup is on the repository: github.com/sscardapane/re…

The use case for the course is a small audio classification model trained on event detection with the awesome @PyTorchLightnin library.

Feel free to check the notebook if you are unfamiliar with the task. /n

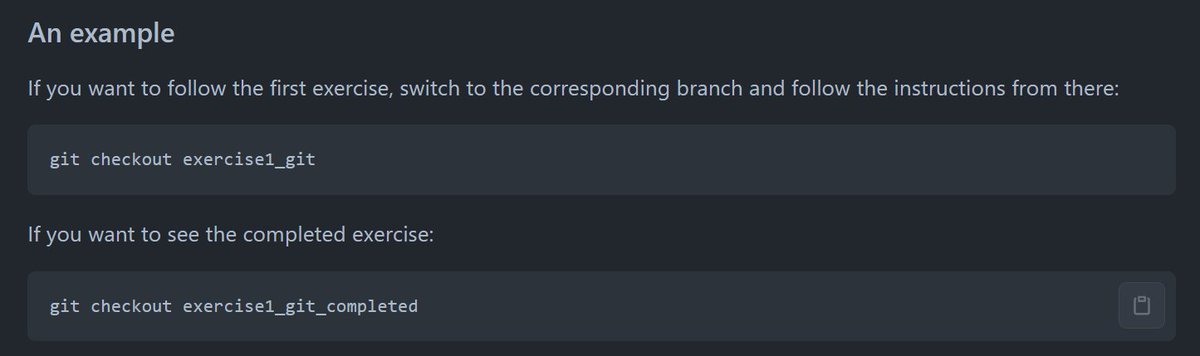

I spent some time understanding how to make the course as modular and "reproducible" as possible.

My solution is to split each exercise into a separate Git branch containing all the instructions, and a separate branch with the solution.

Two branches for now (Git and Hydra). /n

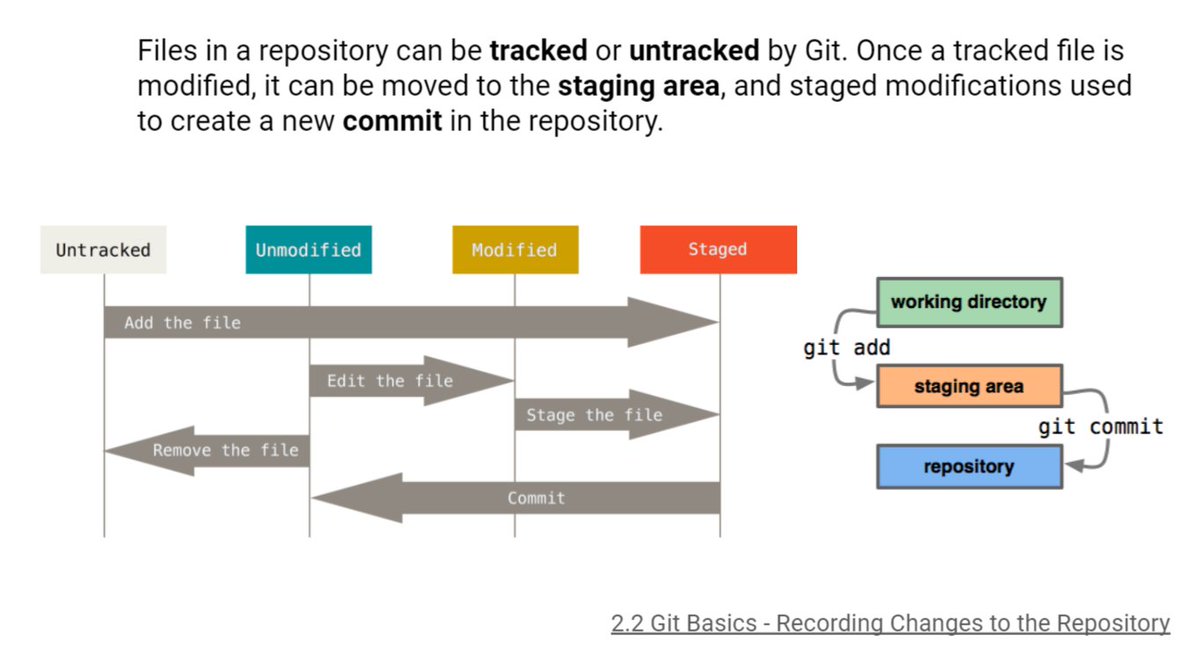

How well do you *really* know Git? The more I learn, the more I find it incredible.

I summarized most of the information on a separate set of slides: docs.google.com/presentation/d…

Be sure to check them out before continuing! /n

Exercise 1 is a simple example of turning a notebook into a working script.

To make things more interesting, you have to complete the exercise while working on a separate Git branch!

github.com/sscardapane/re…

Nothing incredible, but it is always good to start small /n
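
For readers who have never done the notebook-to-script step, here is a minimal sketch of the general idea, not the course's actual solution. It assumes the notebook had hard-coded hyperparameters and a training cell; `parse_args`, `train`, and the `--lr` / `--max-epochs` flags are hypothetical names chosen for illustration, and the training loop is a placeholder with no real model.

```python
import argparse


def parse_args(argv=None):
    """Collect the values that were hard-coded in notebook cells."""
    parser = argparse.ArgumentParser(description="Toy training script")
    parser.add_argument("--lr", type=float, default=1e-3)
    parser.add_argument("--max-epochs", type=int, default=3)
    return parser.parse_args(argv)


def train(lr, max_epochs):
    """Placeholder for the notebook's training cells (no real model here)."""
    history = []
    for epoch in range(max_epochs):
        # A real script would run one training epoch at this point.
        history.append({"epoch": epoch, "lr": lr})
    return history


def main(argv=None):
    args = parse_args(argv)
    history = train(args.lr, args.max_epochs)
    print(f"Finished {len(history)} epochs with lr={args.lr}")
    return history


if __name__ == "__main__":
    # Runs only when executed as a script, not when imported.
    main()
```

Wrapping the cells in functions and guarding the entry point means the same file can be run from the command line or imported by later exercises.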

Once you have a working training script, it is time to add some "bells and whistles"!

My must-have is some external configuration w/ @Hydra_Framework. Exercise 2 guides you in all the required steps.

Plus side: colored logging!

github.com/sscardapane/re…

That is all for the moment. The next exercises will explore external data versioning with @DVCorg and complete isolation with @Docker.

You can follow by starring the official repository, or here on Twitter. 👀

github.com/sscardapane/re…
