This past Sunday (May 9th) was Mother's Day in multiple countries, and someone apparently chose to mark the occasion by creating a spam botnet to spread feel-good content related to #Xinjiang, China. #HolidayAstroturf

cc: @ZellaQuixote

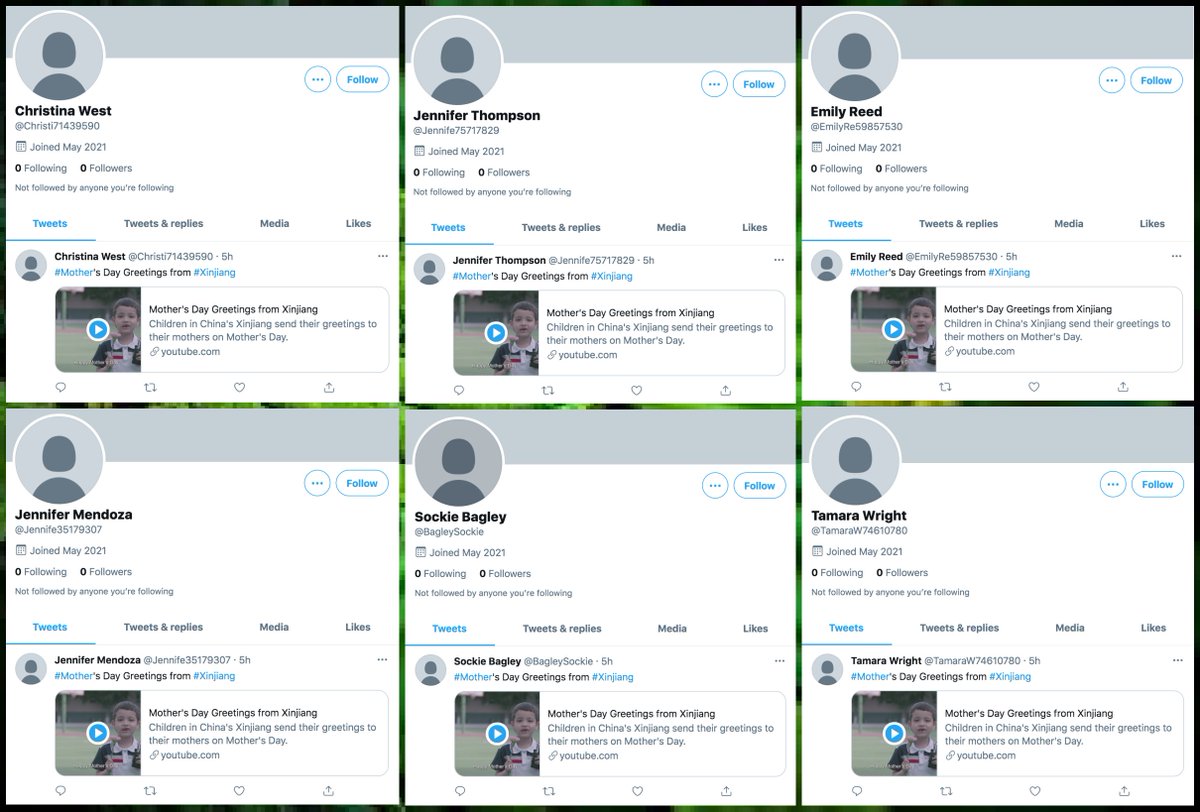

This botnet consists of 65 accounts with default profile pics created on May 9th, 2021. All have tweeted exactly three times, with the exception of @CaraLambrecht, which has only tweeted twice. All tweets thus far were (supposedly) sent via Twitter for Android.

Each account in this botnet has tweeted the same three tweets in the same order (again, with the exception of @CaraLambrecht, which skipped one). The most recent tweet from each account is a Xinhua YouTube video of children (allegedly in Xinjiang) saying "happy Mother's Day".
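The "same three tweets in the same order" pattern can be checked mechanically. Below is a minimal sketch, assuming each account's tweet texts have already been collected into a dict keyed by handle, oldest first. That input format, the helper names, and the toy data are all hypothetical. Exact matching alone would miss an account like @CaraLambrecht that skipped one tweet, so the sketch also includes an in-order subsequence check for near-matches.

```python
from collections import defaultdict


def normalize(text):
    # Collapse whitespace and case so trivial differences don't split a group.
    return " ".join(text.split()).lower()


def group_by_tweet_sequence(timelines):
    """Group handles whose timelines hold the same tweets in the same order.

    `timelines` maps a handle to its tweet texts, oldest first (a hypothetical
    input format; collecting the data is out of scope here).
    """
    groups = defaultdict(list)
    for handle, tweets in timelines.items():
        # Tuples are hashable, so identical sequences land under one key.
        groups[tuple(normalize(t) for t in tweets)].append(handle)
    # Only sequences shared by two or more accounts are of interest.
    return {seq: sorted(h) for seq, h in groups.items() if len(h) > 1}


def is_subsequence(short, long):
    # True if `short` appears in `long` in order, possibly with gaps
    # (e.g. an account that skipped one of the shared tweets).
    it = iter(long)
    return all(item in it for item in short)


# Toy data with made-up handles and texts, not the real accounts.
timelines = {
    "bot_a": ["tweet one", "tweet two", "tweet three"],
    "bot_b": ["tweet one", "tweet two", "tweet three"],
    "bot_c": ["tweet one", "tweet three"],
    "human": ["something else"],
}
groups = group_by_tweet_sequence(timelines)
for seq, handles in groups.items():
    partial = [h for h, t in timelines.items()
               if h not in handles and is_subsequence([normalize(x) for x in t], seq)]
    print(handles, partial)
```

Here `bot_a` and `bot_b` form an exact-match group, and `bot_c` is flagged as a partial match because its two tweets appear in the group's sequence in order.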

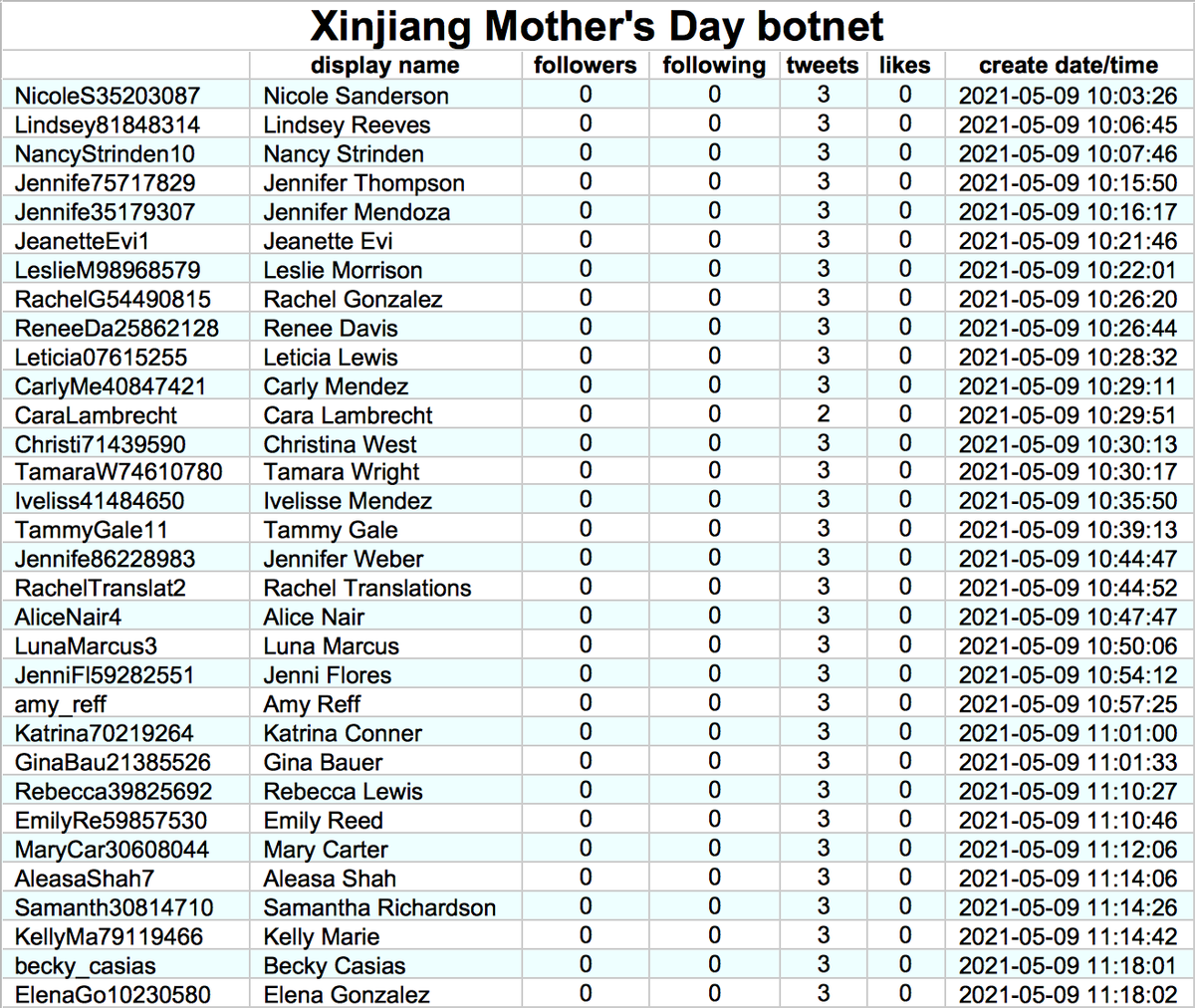

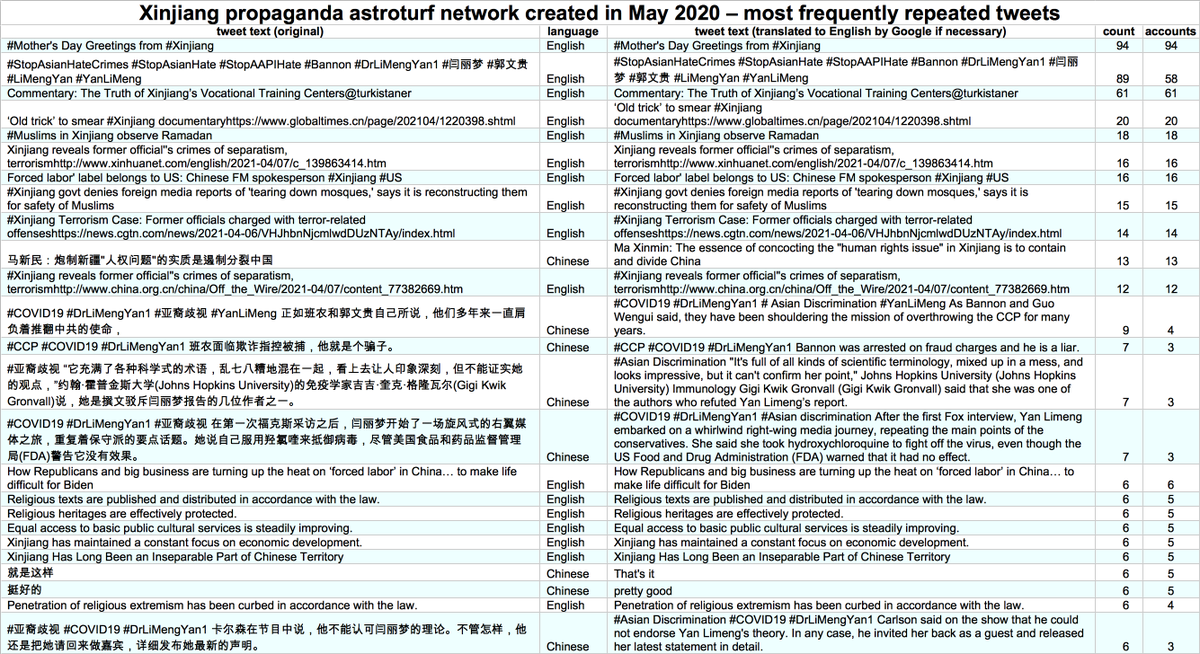

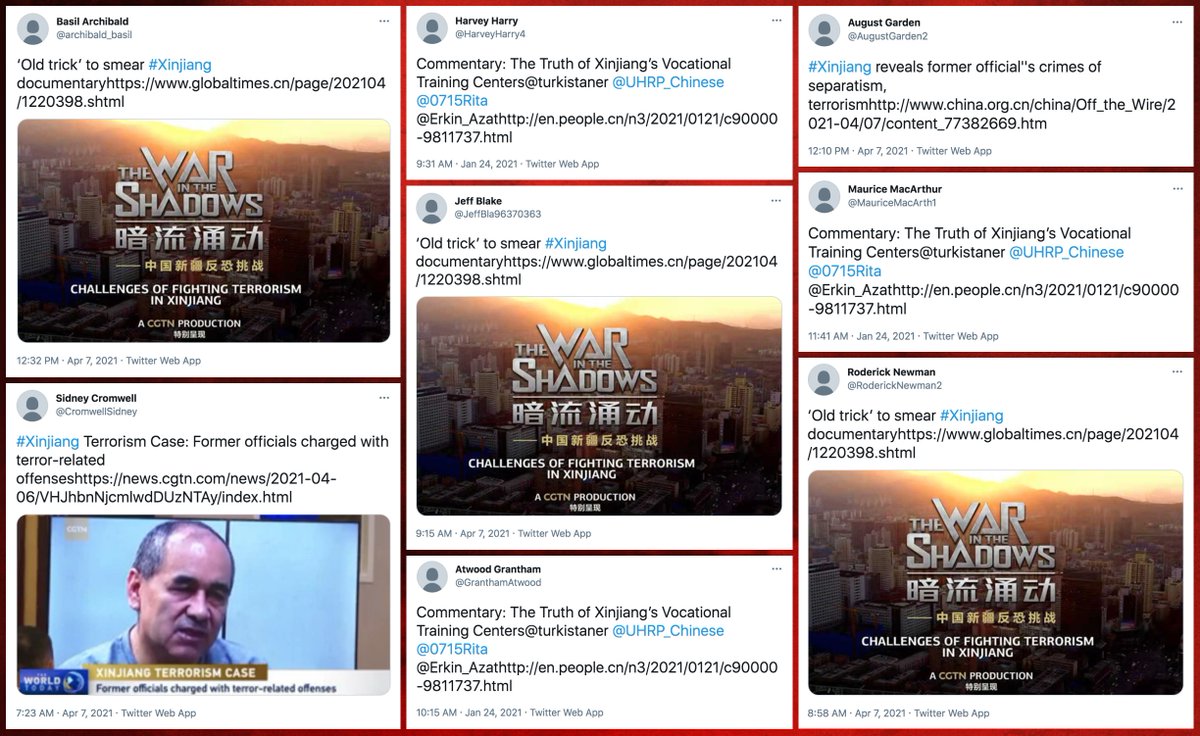

We found a second astroturf network pushing the same "#Mother's Day Greetings from #Xinjiang" YouTube video. This network consists of 94 accounts with default profile pics created over a two-week period in May 2020. This network tweets via the Twitter Web App.

This network's tweets are repetitive. Recurring themes include disputing reports of human rights abuses in Xinjiang and discussing terrorism and separatism in the region. A few tweets countering US right-wing claims that China caused the COVID-19 pandemic turn up as well.

This network has a recurring glitch: many of its tweets contain links that don't work due to the lack of a space between the links and the tweet text. These glitchy tweets are generally duplicated across multiple accounts in the network.
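The missing-space glitch is easy to search for. A minimal sketch, assuming tweet texts are available as plain strings paired with the posting handle; the regex, helper names, and sample tweets are illustrative assumptions, not the method used in this thread. It flags any `http(s)://` URL immediately preceded by a non-space character, then counts how many distinct accounts posted each glitchy text.

```python
import re
from collections import defaultdict

# A URL scheme stuck directly to the preceding character, e.g. "Xinjianghttps://...".
GLUED_URL = re.compile(r"(?<=\S)https?://\S+")


def glued_links(text):
    """Return URLs that have no space separating them from the preceding text."""
    return GLUED_URL.findall(text)


def duplicated_glitches(tweets):
    """Count distinct accounts per glitchy tweet text.

    `tweets` is a list of (handle, text) pairs (hypothetical input format).
    Only texts posted by two or more accounts are returned.
    """
    accounts = defaultdict(set)
    for handle, text in tweets:
        if glued_links(text):
            accounts[text].add(handle)
    return {text: len(h) for text, h in accounts.items() if len(h) > 1}


# Made-up sample tweets using example.com links.
tweets = [
    ("bot_a", "Lies about Xinjianghttps://example.com/1"),
    ("bot_b", "Lies about Xinjianghttps://example.com/1"),
    ("bot_c", "A normal tweet https://example.com/2"),
]
print(duplicated_glitches(tweets))
```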

Finally, who does this network follow? Mostly big accounts with millions or tens of millions of followers, so a small botnet doesn't really do much to inflate their follower counts. @nytimes is the only account followed by all 94 bots in the network.
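Finding accounts followed by every bot in a network is a set intersection. A minimal sketch, assuming each bot's following list has been collected as a set of handles; the input format, function name, and toy data are hypothetical.

```python
def followed_by_all(follows):
    """Return handles followed by every bot.

    `follows` maps each bot handle to the set of handles it follows
    (a hypothetical input format).
    """
    if not follows:
        return set()
    # set.intersection accepts any number of sets as arguments.
    return set.intersection(*follows.values())


# Toy data, not the real network.
follows = {
    "bot_a": {"nytimes", "cnn"},
    "bot_b": {"nytimes", "bbc"},
    "bot_c": {"nytimes", "cnn", "bbc"},
}
print(followed_by_all(follows))
```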

This is not the first time we've encountered astroturf networks focused on Xinjiang. Some previous examples:

https://twitter.com/conspirator0/status/1391175028708892673

https://twitter.com/conspirator0/status/1372007492276916224

https://twitter.com/conspirator0/status/1352408453143269378
