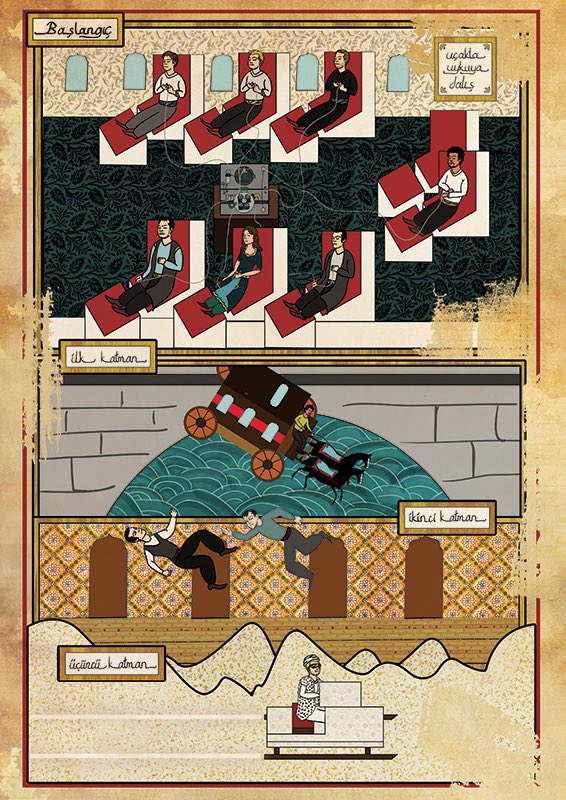

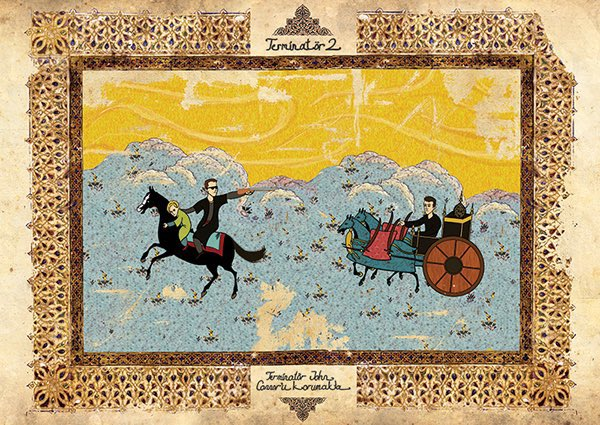

Blending cultures is awesome. What if Star Wars & Fahrenheit 451 were classic Russian lubok wood prints (Note samovar)? Or else Ottoman miniatures (details like the scimitar lightsaber)?

But wait, there's more! All 🇷🇺 art is by Andrey Kuznetsov & 🇹🇷 art is by @_Muratpalta 1/

But wait, there's more! All 🇷🇺 art is by Andrey Kuznetsov & 🇹🇷 art is by @_Muratpalta 1/

Can you guess these? Here we have the original movie as a Russian woodblock & the sequel as a Ottoman miniature. Plus two other well-known films. 2/

• • •

Missing some Tweet in this thread? You can try to

force a refresh